Rambus Quietly Builds a Core Piece of the AI Server Memory Shift as SOCAMM2 Gains Momentum

Rambus has officially introduced its new SOCAMM2 server chipset, a launch that looks understated on the surface but marks a significant step for the next generation of AI server memory. The company says the chipset is designed to enable low-power, high-performance LPDDR5X-based SOCAMM2 modules for AI server platforms, positioning Rambus as a key enabler in the emerging transition away from soldered LPDDR toward modular, serviceable memory in the data center.

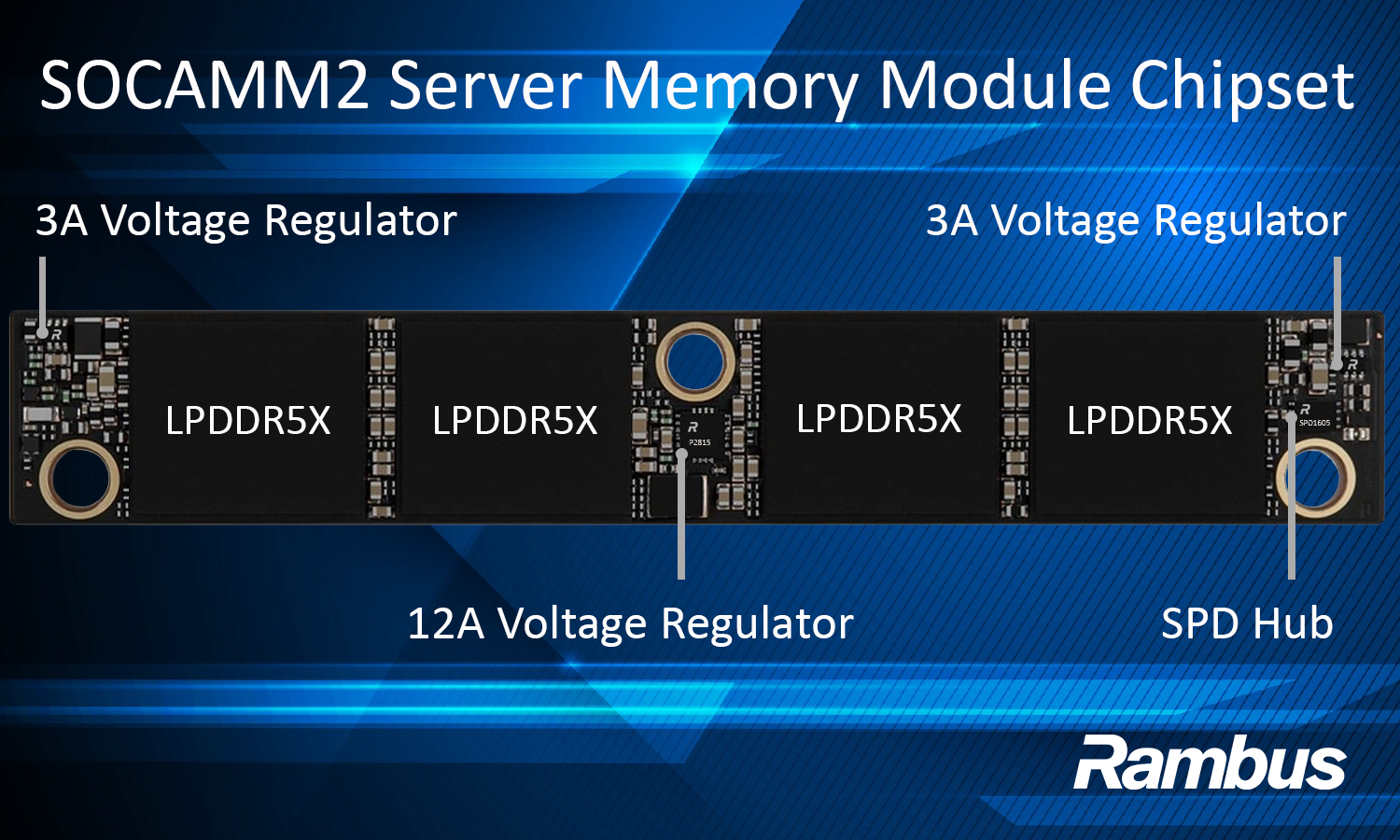

That matters because SOCAMM2 is increasingly viewed as one of the most practical memory form factors for AI systems that need better power efficiency, strong bandwidth, and higher memory density without giving up field serviceability. Rambus says its chipset includes 12A and 3A voltage regulators plus an SPD Hub, which together provide the power delivery, module identification, configuration, and telemetry functions required for JEDEC-standard SOCAMM2 server modules. In other words, Rambus is not simply releasing another memory support chip. It is filling in the infrastructure layer that helps SOCAMM2 become deployable at scale in real AI servers.

The company is also tying the announcement directly to LPDDR5X’s role in AI infrastructure. On its official product page, Rambus says the chipset enables LPDDR5X-based SOCAMM2 modules at up to 9.6 Gb/s, while also solving one of the long-standing limitations of LPDDR in server systems: LPDDR DRAM previously had to be soldered directly onto the motherboard. SOCAMM2 changes that by turning LPDDR into a modular memory format that can still deliver the efficiency and board-area benefits of LPDDR, but with the flexibility and reliability expected in server deployments.
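To put the 9.6 Gb/s figure in context, a per-pin data rate only becomes a module bandwidth once a bus width is chosen. The sketch below is illustrative arithmetic, not a Rambus specification: the 128-bit bus width is an assumption made for the example and does not come from the announcement, and the function name is hypothetical.

```python
def peak_bandwidth_gbytes_per_s(pin_rate_gbps: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s.

    pin_rate_gbps  -- data rate per pin in Gb/s (9.6 is the figure Rambus quotes)
    bus_width_bits -- number of data pins on the module (an assumption here)
    """
    if pin_rate_gbps <= 0 or bus_width_bits <= 0:
        raise ValueError("pin rate and bus width must be positive")
    # Gb/s per pin * number of pins = total Gb/s; divide by 8 for bytes.
    return pin_rate_gbps * bus_width_bits / 8


# ASSUMPTION: a 128-bit module bus, chosen only to illustrate the arithmetic.
ASSUMED_BUS_WIDTH_BITS = 128

peak = peak_bandwidth_gbytes_per_s(9.6, ASSUMED_BUS_WIDTH_BITS)
print(f"Peak theoretical bandwidth: {peak:.1f} GB/s per module")
```

This is a ceiling rather than a delivered number. Sustained bandwidth in a real server depends on the memory controller, access patterns, and refresh overhead, none of which are covered by the announcement.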

That is why this announcement carries more strategic weight than may first appear. AI servers are no longer only about raw compute. They are increasingly about how much memory can be delivered, how efficiently it can be powered, and how practical it is to service and upgrade systems once they are deployed. Rambus is clearly leaning into that reality. Its own description of the chipset says SOCAMM2 modules are an architectural response to growing pressure around power, efficiency, form factor, and scalability in AI data center infrastructure.

From a technical standpoint, Rambus is emphasizing three core functions in the new chipset.

12A and 3A voltage regulators

These regulators take higher voltage inputs and step them down to the levels needed for LPDDR DRAM and other active module components, improving local power efficiency and giving finer control over supply currents.

SPD Hub with integrated temperature sensor

The SPD Hub communicates over I3C and provides configuration data plus thermal telemetry, which is critical for server-level reliability and monitoring.

LPDDR5X SOCAMM2 support up to 9.6 Gb/s

Rambus says the chipset enables operation of LPDDR5X based SOCAMM2 modules at up to 9.6 Gb/s, giving the format the performance headroom needed for modern AI platforms.
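As a rough illustration of why thermal telemetry from the SPD Hub matters for monitoring, the sketch below shows how host-side management software might classify per-module temperature readings. Everything in it is hypothetical: the `read_temp_c` callback stands in for whatever I3C access path a real platform provides, and the thresholds are placeholder values, not figures from Rambus or JEDEC.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable


@dataclass(frozen=True)
class ThermalPolicy:
    warn_c: float      # hypothetical warning threshold, degrees Celsius
    critical_c: float  # hypothetical critical threshold, degrees Celsius


def classify(temp_c: float, policy: ThermalPolicy) -> str:
    """Map one temperature reading to a coarse health state."""
    if temp_c >= policy.critical_c:
        return "critical"
    if temp_c >= policy.warn_c:
        return "warn"
    return "ok"


def poll_modules(
    read_temp_c: Callable[[int], float],
    module_ids: Iterable[int],
    policy: ThermalPolicy,
) -> Dict[int, str]:
    """Read each module once and return its health state.

    read_temp_c is a stand-in for a platform-specific sensor read;
    no real I3C driver API is assumed here.
    """
    return {m: classify(read_temp_c(m), policy) for m in module_ids}


# Simulated readings keyed by module slot, used in place of real hardware.
fake_readings = {0: 41.5, 1: 78.0, 2: 96.25}
policy = ThermalPolicy(warn_c=75.0, critical_c=95.0)

status = poll_modules(fake_readings.__getitem__, sorted(fake_readings), policy)
print(status)
```

Keeping the sensor read behind a callback means the classification logic can be exercised without hardware, which is how the simulated readings are used here.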

This also fits into a broader Rambus roadmap. The company says the SOCAMM2 chipset is the first step in a wider LPDDR-based server module strategy, showing that it expects SOCAMM2 and similar memory formats to become more important as AI data center workloads continue to diversify. Rambus is effectively placing itself in the middle of the memory interface layer just as the industry starts to experiment with more compact, lower-power server memory architectures for next-wave AI systems.

The industry language around the launch supports that direction. In the company’s release, Micron’s Praveen Vaidyanathan described SOCAMM2 as a key step toward efficient, scalable, CPU-connected memory for future AI servers, while IDC’s Soo Kyoum Kim said memory architectures like SOCAMM2 represent an important evolution in how systems balance performance and efficiency. Those comments are not independent validation of market dominance, but they do show that major ecosystem players increasingly see LPDDR-based modular memory as a real part of the AI server conversation.

The bigger picture is simple. SOCAMM2 is becoming attractive because it brings together four qualities AI servers need more of: lower power, compact density, modular serviceability, and scalable bandwidth. Rambus may not be making the memory chips themselves, but by providing the power and telemetry logic that lets these modules work reliably in server environments, it is helping build one of the missing pieces needed for SOCAMM2 adoption to accelerate. As AI servers keep evolving beyond traditional memory assumptions, this kind of supporting silicon may end up mattering almost as much as the DRAM itself.

Do you think SOCAMM2 will become the preferred memory format for next generation AI servers, or will traditional server memory still hold the advantage for longer?