Samsung Unveils HBM4E With Up to 4 TB/s Bandwidth Per Stack, While HBM4 Enters Mass Production for NVIDIA Vera Rubin

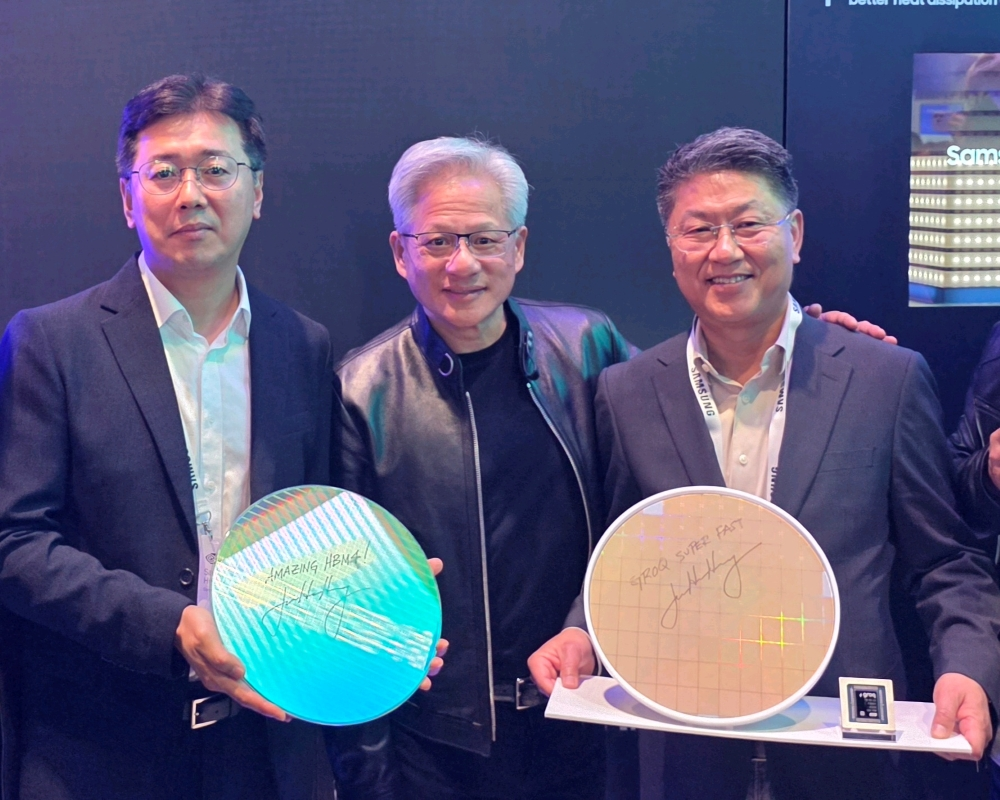

Samsung has used GTC 2026 to outline a broader AI memory and storage push, led by the public debut of its next generation HBM4E and the confirmation that its HBM4 is now in mass production for the NVIDIA Vera Rubin platform. In Samsung’s official announcement, the company says its sixth generation HBM4 is already in mass production and designed for NVIDIA Vera Rubin, while HBM4E is being shown for the first time with 16 Gbps per pin and 4.0 TB/s of bandwidth per stack.

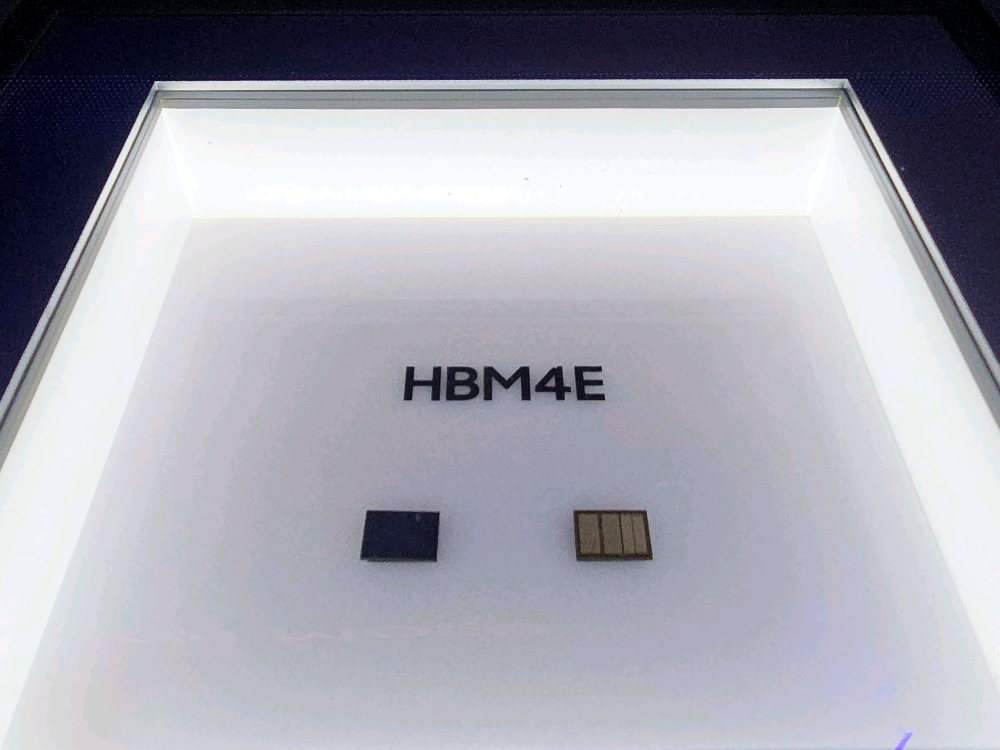

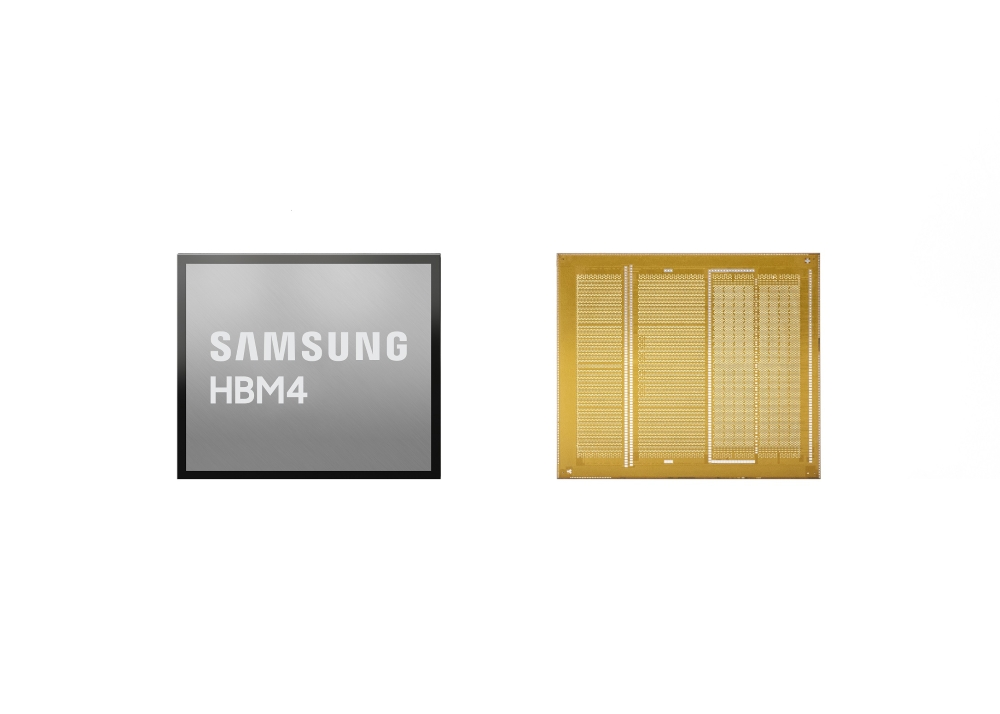

The key distinction is important. HBM4 is the product Samsung says is now in mass production for Vera Rubin, with 11.7 Gbps processing speeds that can be enhanced to 13 Gbps. HBM4E, meanwhile, is the follow up technology Samsung is showcasing as its faster next step, delivering 16 Gbps per pin and 4.0 TB/s bandwidth, but Samsung does not say in this announcement that HBM4E itself is already in mass production. That means the more accurate reading is that HBM4 is the current production ready solution for Rubin era deployments, while HBM4E represents the next performance step Samsung is putting on display.

Samsung also ties this memory roadmap directly to NVIDIA’s infrastructure plans. The company says its showcase includes products designed for NVIDIA AI infrastructure, with HBM4, SOCAMM2, and PM1763 SSD highlighted inside a dedicated NVIDIA gallery area. NVIDIA’s own Rubin platform page positions Rubin as the foundation for scalable AI reasoning and large AI factory deployments, which is exactly the type of environment where the jump from HBM4 to HBM4E could become strategically important.

Another major part of the story is packaging. Samsung says visitors at GTC 2026 will also get a look at its hybrid copper bonding technology, which it describes as a new method that can enable future HBM to reach 16 or more layers while reducing heat resistance by more than 20 percent compared with thermal compression bonding. That matters because next generation HBM is no longer only about raw speed. Thermal behavior, stack height, yield stability, and power efficiency are becoming just as decisive as bandwidth in AI accelerator design.

As for capacity, the draft figure of 48 GB per stack aligns with the idea of a 16 high HBM4E stack using 3 GB dies, but Samsung’s announcement does not explicitly state that number in the text I reviewed. So it is safer to frame the bandwidth and per pin speed as the confirmed official specifications, while treating exact stack capacity assumptions as informed extrapolation unless Samsung publishes a dedicated product sheet with full density details. The same applies to theoretical full GPU totals for a future Rubin Ultra class configuration. Those numbers may be directionally reasonable, but they are not directly confirmed in the official Samsung post.

Samsung also used the announcement to reinforce that its broader AI memory stack is moving in parallel. The company says its SOCAMM2 is already in mass production, making it the first in the industry to reach that stage, while its PM1763 PCIe 6.0 SSD is being shown as part of next generation AI storage efforts. Samsung further notes that its PM1753 SSD will be demonstrated within the NVIDIA BlueField 4 STX reference architecture for the Vera Rubin platform. In other words, Samsung is not only talking about high bandwidth memory. It is presenting a full ecosystem pitch around AI memory, storage, and packaging.

From a market standpoint, this announcement matters because Samsung is making it clear that it intends to stay aggressive in the AI memory race at every level, from production HBM4 for current Rubin class systems to HBM4E for future platforms. The larger competitive signal is straightforward. AI infrastructure is now moving into a phase where memory bandwidth, capacity scaling, and packaging innovation are as important as accelerator compute itself. Samsung wants to be present across all of those layers, and GTC 2026 gave the company a timely stage to show exactly that.

Do you think the next major AI hardware battle will be decided more by memory and packaging innovation than by raw GPU compute alone?