NVIDIA Refreshes DGX Station With the GB300 Blackwell Ultra Desktop Superchip, Bringing Up to 748 GB of Coherent Memory and 20 PFLOPs of AI Compute

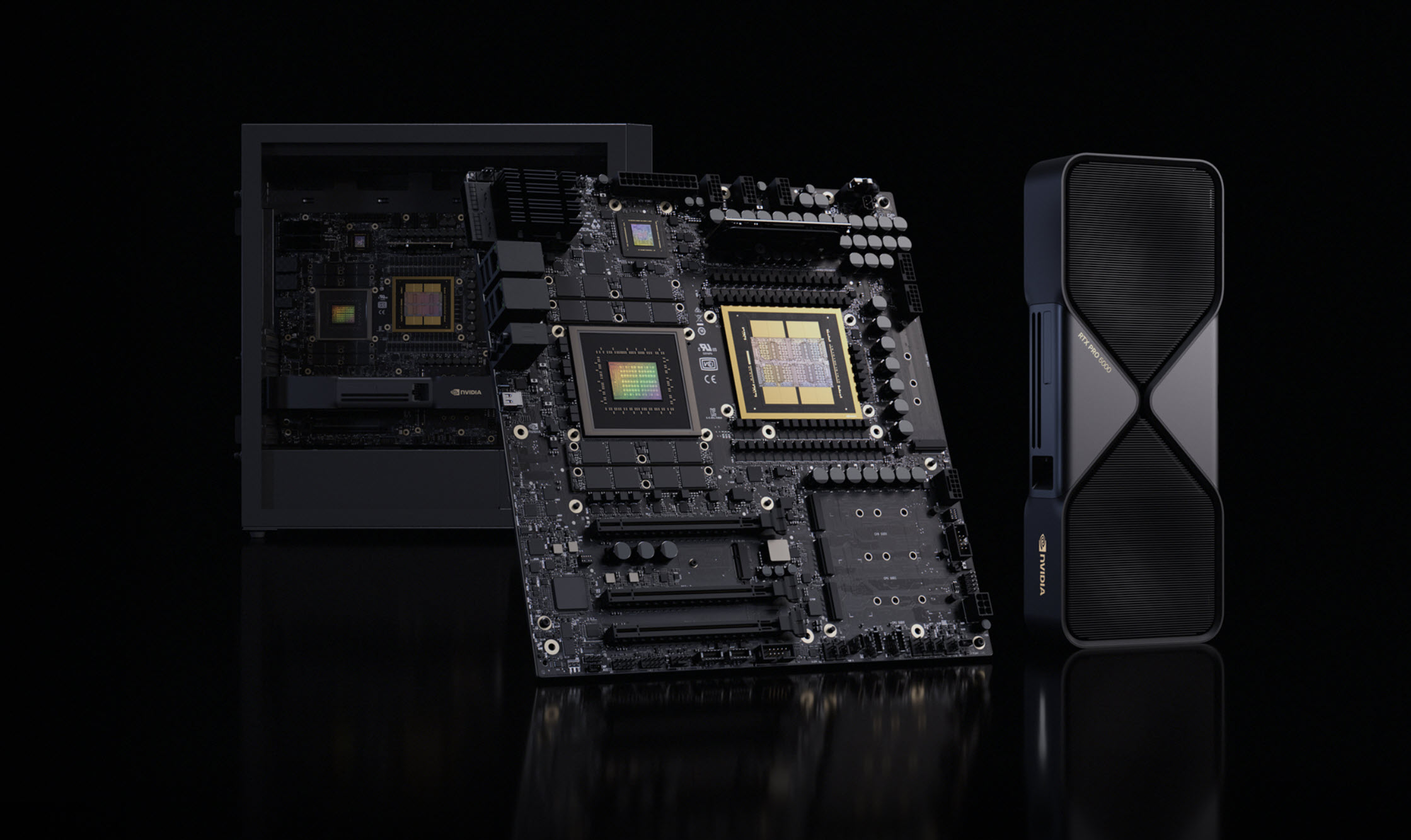

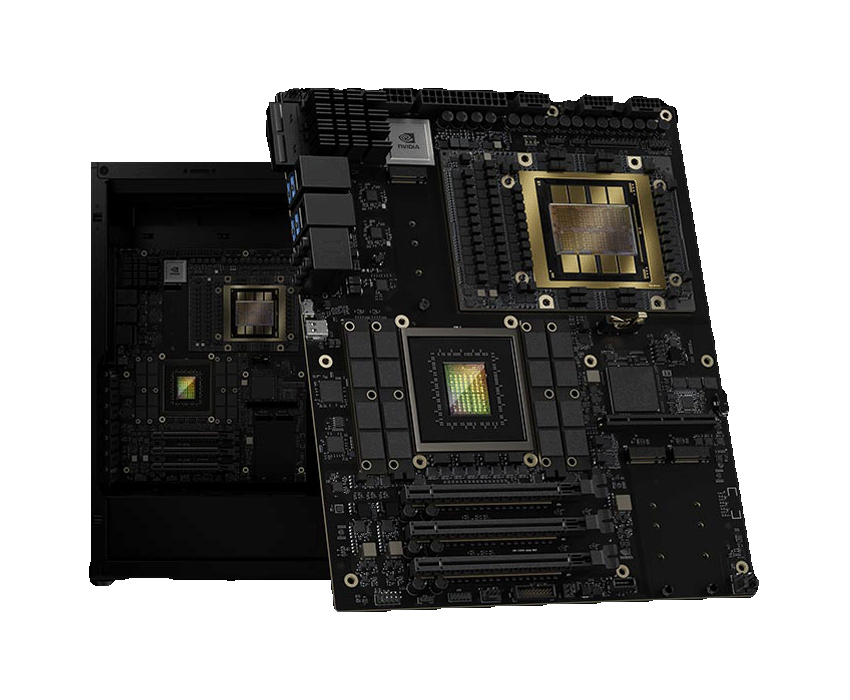

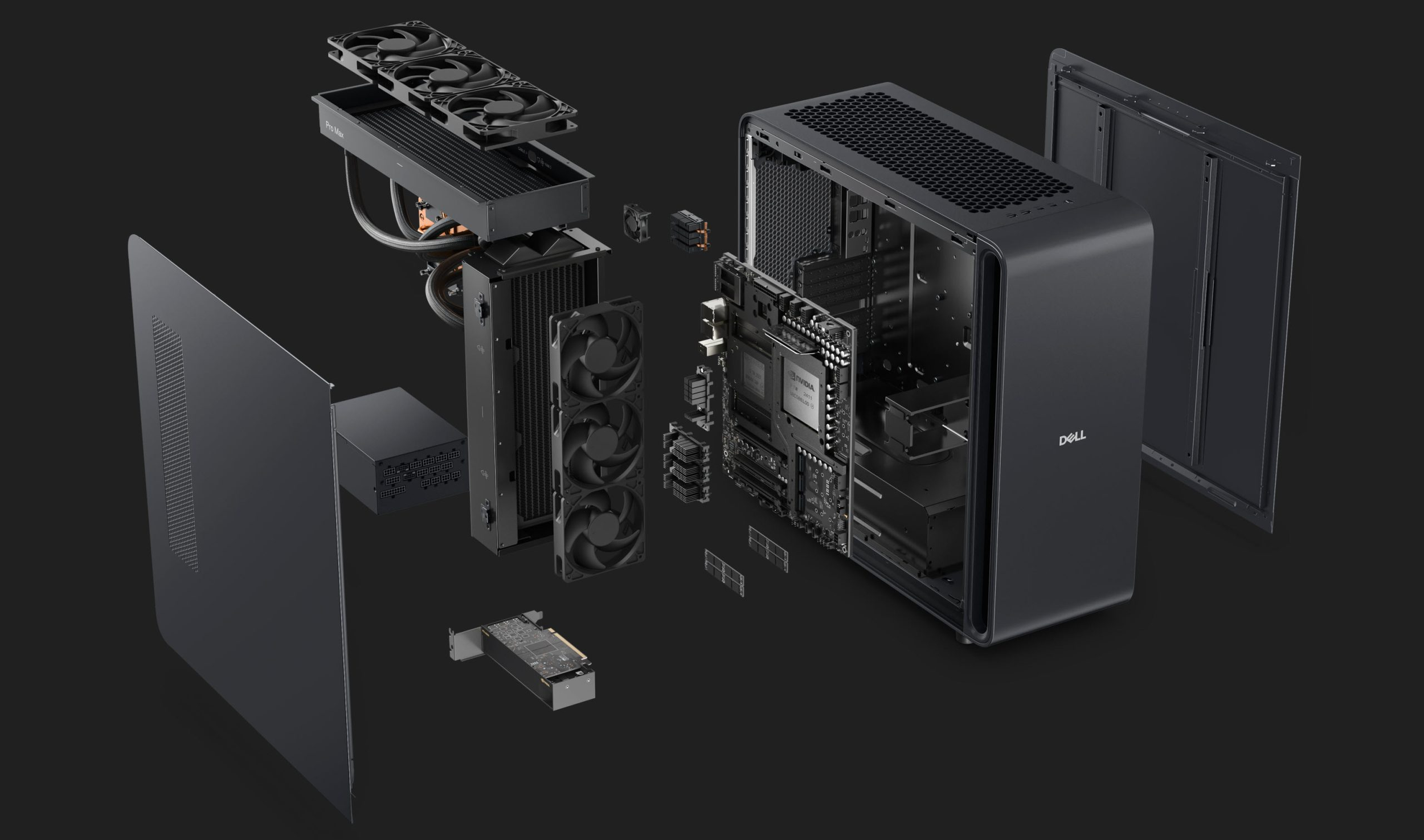

NVIDIA has officially updated its DGX Station with the GB300 Grace Blackwell Ultra Desktop Superchip, positioning the system as a deskside AI development platform for researchers, developers, and enterprise teams that want data-center-class AI capability in a workstation form factor. According to NVIDIA’s official product page, the new DGX Station delivers up to 20 petaFLOPS of AI compute and 748 GB of coherent memory, with support for models of up to 1 trillion parameters.

One important correction is worth making up front. The DGX Station is not a 2026 system replacing a prior GB200-based DGX Station. NVIDIA’s own announcement from March 18, 2025 already introduced DGX Station as the first desktop system built with the GB300 Grace Blackwell Ultra Desktop Superchip, and described it at that time with 784 GB of coherent memory. The current official DGX Station product page lists 748 GB, so the figure to use is the one on NVIDIA’s live product page, with a note that earlier launch materials cited a higher total.

On the core hardware side, NVIDIA says DGX Station combines the GB300 Grace Blackwell Ultra Desktop Superchip with the NVIDIA AI software stack and ConnectX-8 SuperNIC connectivity. NVIDIA’s newsroom announcement states the platform supports networking speeds of up to 800 Gb/s, allowing multi-station scaling and high-speed data movement for local AI development. That makes DGX Station less like a conventional enthusiast workstation and more like a compact AI lab node designed to bridge local experimentation and larger deployment environments.
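
To put the 800 Gb/s figure in context, here is a rough sketch of the best-case time to move a dataset or checkpoint between two stations. The function name and the 500 GB payload are illustrative assumptions, and the result is a lower bound only: it ignores protocol overhead, storage throughput, and congestion.

```python
def ideal_transfer_seconds(payload_gb: float, link_gbit_per_s: float = 800.0) -> float:
    """Best-case transfer time in seconds for a payload of `payload_gb` gigabytes.

    Assumes the link runs at its full advertised rate (800 Gb/s by default,
    the figure quoted from NVIDIA's newsroom announcement) with zero overhead.
    """
    # Convert gigabits per second to gigabytes per second (8 bits per byte).
    link_gbyte_per_s = link_gbit_per_s / 8.0
    return payload_gb / link_gbyte_per_s


if __name__ == "__main__":
    # Hypothetical 500 GB checkpoint, chosen only for illustration.
    seconds = ideal_transfer_seconds(500.0)
    print(f"Ideal transfer time for 500 GB: {seconds:.1f} s")
```

Real-world transfers will take longer, but the calculation shows why an 800 Gb/s link (100 GB/s at best) makes shuttling very large model files between stations practical.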

| Feature | Hopper | Blackwell | Blackwell Ultra |
|---|---|---|---|
| Manufacturing Process | TSMC 4N | TSMC 4NP | TSMC 4NP |
| Transistors | 80B | 208B | 208B |
| Dies per GPU | 1 | 2 | 2 |
| NVFP4 Dense / Sparse Performance | – | 10 / 20 PetaFLOPS | 15 / 20 PetaFLOPS |
| FP8 Dense / Sparse Performance | 2 / 4 PetaFLOPS | 5 / 10 PetaFLOPS | 5 / 10 PetaFLOPS |
| Attention Acceleration (SFU EX2) | 4.5 TeraExponentials/s | 5 TeraExponentials/s | 10.7 TeraExponentials/s |
| Max HBM Capacity | 80 GB HBM (H100), 141 GB HBM3E (H200) | 192 GB HBM3E | 288 GB HBM3E |
| Max HBM Bandwidth | 3.35 TB/s (H100), 4.8 TB/s (H200) | 8 TB/s | 8 TB/s |
| NVLink Bandwidth | 900 GB/s | 1,800 GB/s | 1,800 GB/s |
| Max Power (TGP) | Up to 700W | Up to 1,200W | Up to 1,400W |

The memory configuration remains one of the biggest selling points. Dell’s official GB300 overview, which aligns with NVIDIA’s platform positioning, describes the system as offering up to 288 GB of HBM3e GPU memory plus 496 GB of LPDDR5X system memory, while ASUS describes its own NVIDIA-based deskside implementation as offering up to 748 GB of large coherent memory. Note that Dell’s two figures sum to 784 GB, which matches NVIDIA’s March 2025 launch figure rather than the 748 GB now listed. Either way, that large unified memory pool is exactly what makes these systems attractive for local model development, fine-tuning, and inference on much larger models than a traditional workstation would normally support.
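
As a rough illustration of why the memory pool matters, the sketch below estimates how much memory model weights alone would occupy at different precisions and compares that with the 748 GB headline figure. It rests on several assumptions not stated in the source: weights only (no KV cache, activations, or quantization scale factors), decimal gigabytes, and illustrative helper names.

```python
# Bits per parameter for a few common weight formats (illustrative set).
BITS_PER_PARAM = {"FP16": 16, "FP8": 8, "FP4": 4}

# Headline coherent-memory figure from NVIDIA's live product page, in GB.
COHERENT_MEMORY_GB = 748


def weight_footprint_gb(num_params: float, bits: int) -> float:
    """Memory needed for the weights alone, in decimal GB (1 GB = 1e9 bytes)."""
    return num_params * bits / 8 / 1e9


def weights_fit(num_params: float, bits: int,
                capacity_gb: float = COHERENT_MEMORY_GB) -> bool:
    """True if the raw weights fit in the given capacity (ignores all overhead)."""
    return weight_footprint_gb(num_params, bits) <= capacity_gb


if __name__ == "__main__":
    one_trillion = 1e12
    for name, bits in BITS_PER_PARAM.items():
        size = weight_footprint_gb(one_trillion, bits)
        verdict = "fits" if weights_fit(one_trillion, bits) else "does not fit"
        print(f"{name}: {size:.0f} GB of weights, {verdict} in {COHERENT_MEMORY_GB} GB")
```

Under these assumptions, a 1-trillion-parameter model needs about 500 GB at 4 bits per weight, which suggests the 1-trillion-parameter claim presumes low-precision formats. The source does not say which precision NVIDIA has in mind.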

The partner ecosystem is already visible. NVIDIA’s DGX Station concept is being implemented by multiple OEMs, including the ASUS ExpertCenter Pro ET900N G3, Dell Pro Max with GB300, Gigabyte W775 V10 L01, MSI XpertStation WS300, and Supermicro Super AI Station. ASUS says its ET900N G3 is part of a new class of deskside AI supercomputers, while Supermicro is branding its version as a development platform purpose-built for the age of AI.

There is, however, a second specification point that needs to be handled carefully: partner materials and PDFs do not all show identical totals. For example, a recent Supermicro datasheet describes the platform as combining an NVIDIA B300 GPU and Grace CPU with 279 GB of HBM3e GPU memory and a 775 GB unified memory pool, while NVIDIA’s current live DGX Station page says 748 GB. The cleanest publication approach is therefore to anchor the article to NVIDIA’s latest official live product page for the headline specification, while recognizing that partner materials may reflect slightly different configurations or prelaunch figures.
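
The competing figures are easier to compare once the missing third value in each source is derived from the other two. The sketch below does that arithmetic; the helper name is hypothetical, and it assumes the coherent total is simply GPU memory plus CPU memory. The last call additionally assumes the 496 GB of LPDDR5X is unchanged in NVIDIA's current configuration, which no source quoted here states.

```python
from typing import Optional, Tuple


def complete_memory_split(hbm: Optional[int] = None,
                          lpddr: Optional[int] = None,
                          total: Optional[int] = None) -> Tuple[int, int, int]:
    """Given any two of (GPU HBM, CPU LPDDR, coherent total) in GB, derive the third.

    Assumes total = hbm + lpddr. Raises ValueError if fewer than two values
    are supplied or if three supplied values are inconsistent.
    """
    supplied = sum(v is not None for v in (hbm, lpddr, total))
    if supplied < 2:
        raise ValueError("need at least two of hbm, lpddr, total")
    if total is None:
        total = hbm + lpddr
    elif lpddr is None:
        lpddr = total - hbm
    elif hbm is None:
        hbm = total - lpddr
    elif hbm + lpddr != total:
        raise ValueError("hbm + lpddr does not equal total")
    return hbm, lpddr, total


if __name__ == "__main__":
    # Dell overview: 288 GB HBM3e + 496 GB LPDDR5X.
    print("Dell:", complete_memory_split(hbm=288, lpddr=496))
    # Supermicro datasheet: 279 GB HBM3e in a 775 GB unified pool.
    print("Supermicro:", complete_memory_split(hbm=279, total=775))
    # NVIDIA live page: 748 GB total; the 496 GB CPU figure is an ASSUMPTION here.
    print("NVIDIA (assumed 496 GB CPU):", complete_memory_split(lpddr=496, total=748))
```

Read this way, the Dell figures reproduce the 784 GB launch total, the Supermicro figures imply the same 496 GB of CPU memory, and the differences between sources would come entirely from the usable GPU memory figure. That last point is an inference from the arithmetic, not something the vendors confirm.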

As for pricing, there is still no official public list price on the product pages I checked. The same goes for a universal shipping date across all partners. Dell has announced it is shipping GB300 desktop systems, but broad partner availability appears to depend on vendor rollout timing rather than a single synchronized launch date. So while the system is very clearly real and commercially moving forward, it is safer not to state that every partner unit is shipping immediately unless each vendor explicitly says so.

From an industry standpoint, DGX Station is part of a wider NVIDIA push to bring AI development closer to the desktop without abandoning the company’s larger AI factory narrative. Instead of forcing every developer workflow into the data center from day one, NVIDIA is building a path where teams can prototype, fine-tune, and test locally on a system that closely mirrors the software environment and platform logic of larger Blackwell deployments. That gives DGX Station a very different role from a classic workstation. It is not simply about raw local power. It is about making enterprise AI development more portable, more immediate, and easier to scale upward once the workload is ready.

Would you rather see AI development move toward powerful local systems like DGX Station, or do you think the future will stay centered almost entirely on cloud and rack scale infrastructure?