NVIDIA Vera Rubin Unveils a New AI Data Center Blueprint With 288 GB HBM4, 22 TB/s Bandwidth and 50 PFLOPs Per GPU

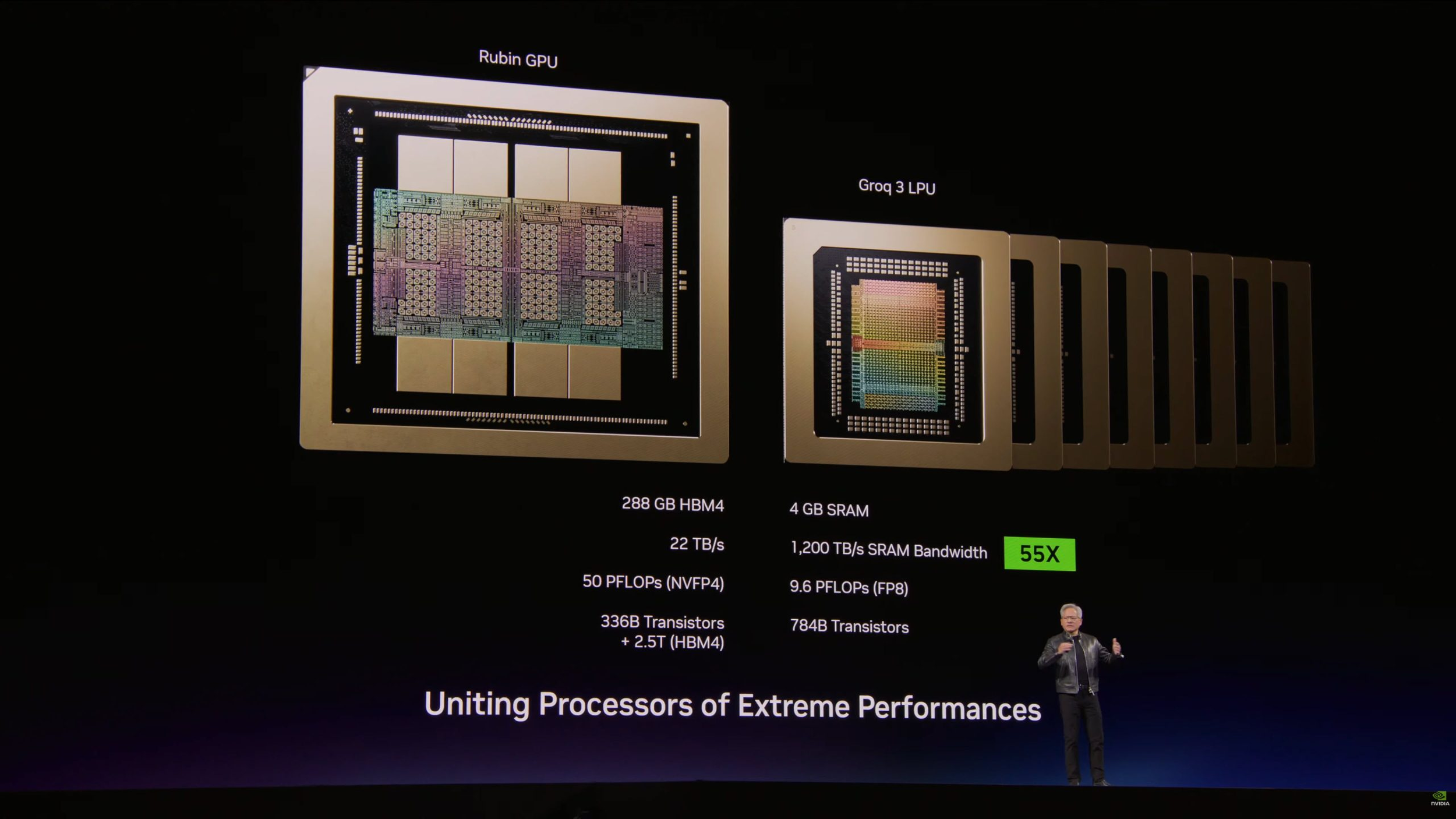

NVIDIA has officially expanded the Vera Rubin story at GTC 2026, positioning the platform as its next major step for agentic AI, reasoning workloads, large context inference and hyperscale AI factory deployments. At the center of the announcement is the Rubin GPU, which NVIDIA says delivers up to 50 PFLOPs of NVFP4 inference compute per chip alongside up to 288 GB of HBM4 memory and up to 22 TB/s of bandwidth. The broader Vera Rubin NVL72 system brings together 72 Rubin GPUs and 36 Vera CPUs inside a rack scale design aimed at pushing both training and inference economics forward for the next generation of enterprise and frontier AI deployments.

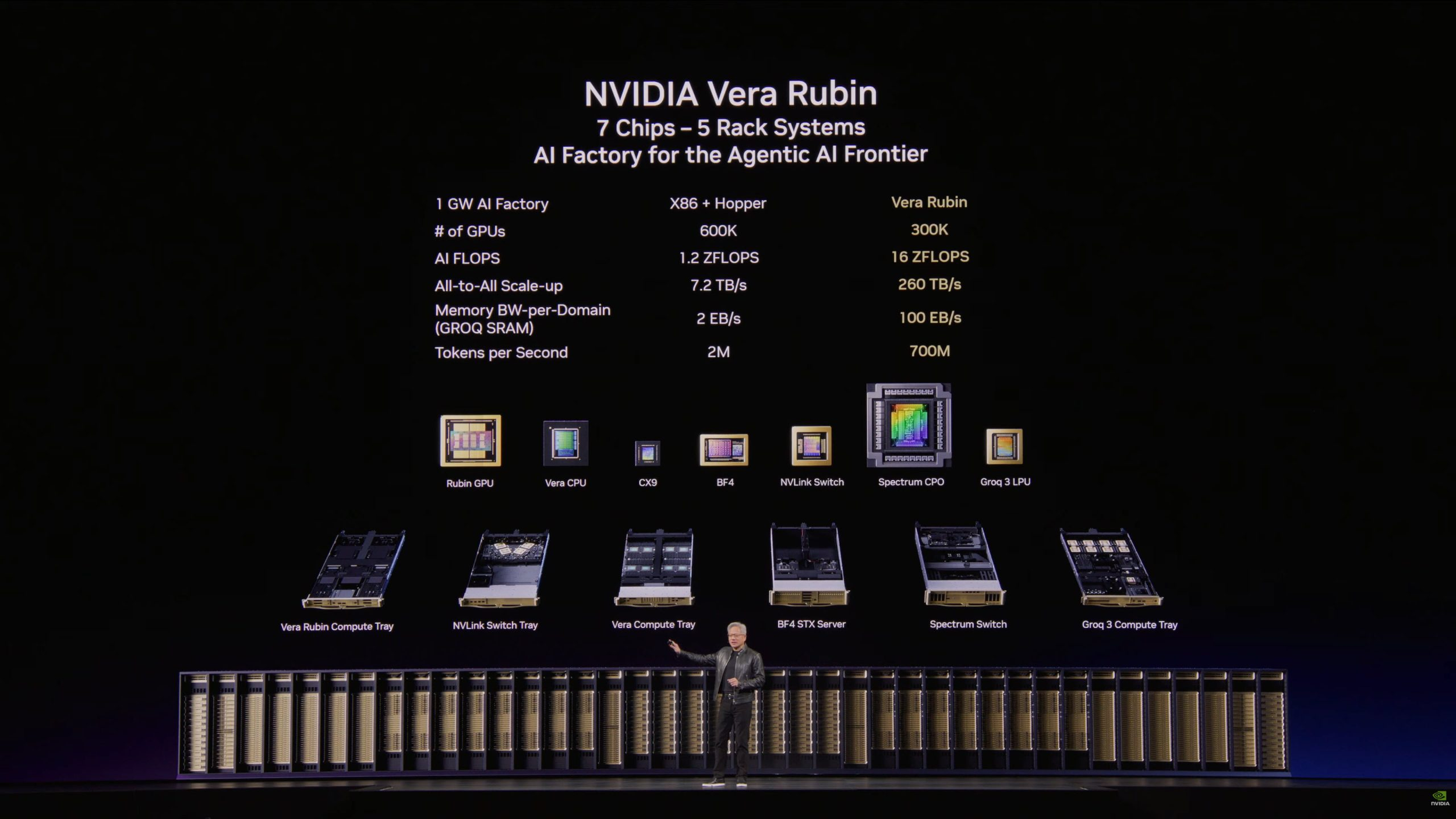

What makes this launch especially important is that NVIDIA is no longer talking only about a faster accelerator. Vera Rubin is framed as a full platform architecture. NVIDIA says the design spans seven chip categories across compute, networking, storage and inference acceleration, including Rubin GPUs, Vera CPUs, ConnectX 9 SuperNICs, BlueField 4 DPUs, NVLink 6 switches, Spectrum X optics and the new Groq 3 LPU path for low latency inference. That shift matters because the AI market is moving away from standalone GPU conversations and toward complete AI factory infrastructure, where power efficiency, memory bandwidth, networking density and deployment speed increasingly decide real world ROI.

On raw platform specs, NVIDIA is aiming for a meaningful leap. The company says each Vera Rubin NVL72 rack can deliver 3.6 exaflops of NVFP4 compute, 1.6 PB/s of HBM4 bandwidth and 260 TB/s of NVLink scale up bandwidth. NVIDIA also says the platform can drive AI training with one fourth the GPUs and AI inference at one tenth the cost per million tokens versus Blackwell in certain workloads, while the NVLink 6 fabric provides 3.6 TB/s of bandwidth per GPU to keep large reasoning and mixture of experts models fed at scale.

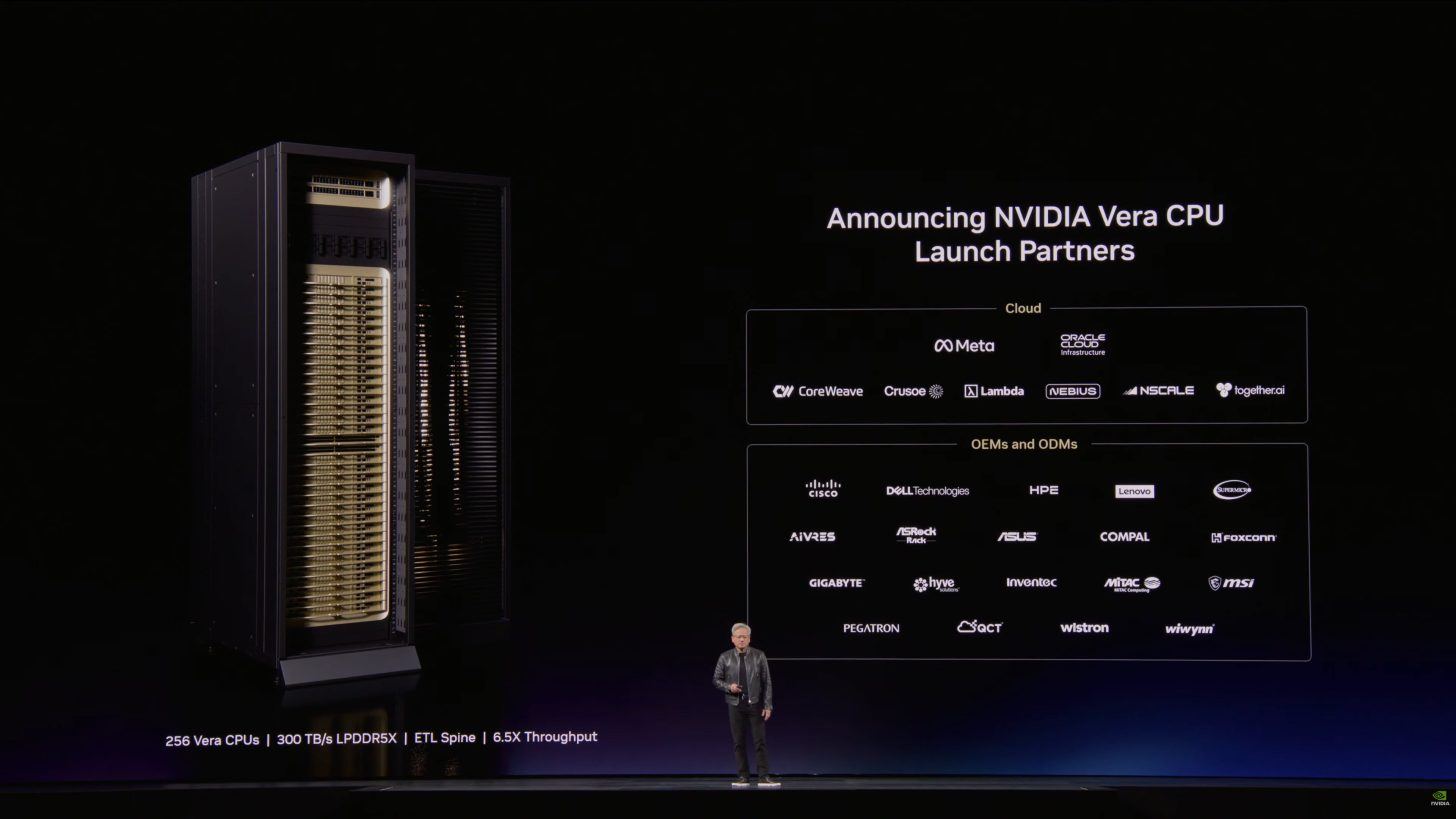

The Vera CPU is another strategic angle in this rollout. NVIDIA describes it as a purpose built data center CPU for data movement and agentic reasoning, with 88 custom Olympus cores, Arm v9.2 compatibility and a strong performance per watt focus. The company is also positioning Vera as a standalone opportunity beyond Rubin racks, which could broaden NVIDIA’s role in the data center stack and put more pressure on traditional CPU vendors as AI infrastructure buying shifts toward tightly integrated system level platforms.

The most forward looking piece may be the heterogeneous inference story. NVIDIA’s new Groq 3 LPX rack is designed as a companion for latency sensitive inference, with 256 LPUs, 128 GB of SRAM, 40 PB/s of memory bandwidth and 640 TB/s of scale up bandwidth per rack. NVIDIA says pairing Vera Rubin NVL72 with LPX can deliver up to 35 times higher inference throughput per megawatt and up to 10 times more revenue opportunity for trillion parameter models relative to Blackwell era systems. In practical terms, NVIDIA is building for a future where AI services need both giant throughput and near instant response, which is exactly where gaming adjacent cloud AI, real time assistants, multimodal experiences and large scale simulation workflows are heading.

| Specification | NVIDIA Vera Rubin NVL72 | NVIDIA Vera Rubin Superchip | NVIDIA Rubin GPU |

|---|---|---|---|

| Configuration | 72 NVIDIA Rubin GPUs | 36 NVIDIA Vera CPUs | 2 NVIDIA Rubin GPUs | 1 NVIDIA Vera CPU | 1 NVIDIA Rubin GPU |

| NVFP4 Inference | 3,600 PFLOPS | 100 PFLOPS | 50 PFLOPS |

| NVFP4 Training | 2,520 PFLOPS | 70 PFLOPS | 35 PFLOPS |

| FP8 / FP6 Training | 1,260 PFLOPS | 35 PFLOPS | 17.5 PFLOPS |

| INT8 | 18 POPS | 0.5 POPS | 0.25 POPS |

| FP16 / BF16 | 288 PFLOPS | 8 PFLOPS | 4 PFLOPS |

| TF32 | 144 PFLOPS | 4 PFLOPS | 2 PFLOPS |

| FP32 | 9,360 TFLOPS | 260 TFLOPS | 130 TFLOPS |

| FP64 | 2,400 TFLOPS | 67 TFLOPS | 33 TFLOPS |

| FP32 SGEMM | 28,800 TFLOPS | 800 TFLOPS | 400 TFLOPS |

| FP64 DGEMM | 14,400 TFLOPS | 400 TFLOPS | 200 TFLOPS |

| GPU Memory | Bandwidth | 20.7 TB HBM4 | 1,580 TB/s | 576 GB HBM4 | 44 TB/s | 288 GB HBM4 | 22 TB/s |

| NVLink Bandwidth | 260 TB/s | 7.2 TB/s | 3.6 TB/s |

| NVLink-C2C Bandwidth | 65 TB/s | 1.8 TB/s | - |

| CPU Core Count | 3,168 custom NVIDIA Olympus cores (Arm compatible) | 88 custom NVIDIA Olympus cores (Arm compatible) | - |

| CPU Memory | 54 TB LPDDR5X | 1.5 TB LPDDR5X | - |

| Total NVIDIA + HBM4 Chips | 1,296 | 30 | 12 |

Deployment is also part of the pitch. NVIDIA’s technical material says the new rack and tray approach is modular and cable free, with liquid cooled compute trays and a design built to accelerate installation and serviceability. That is significant because hyperscalers and AI cloud builders are now battling on time to deployment almost as much as on silicon performance. In this cycle, infrastructure friction is a competitive variable. Vera Rubin appears designed to reduce that friction while scaling up power density and memory throughput at the same time.

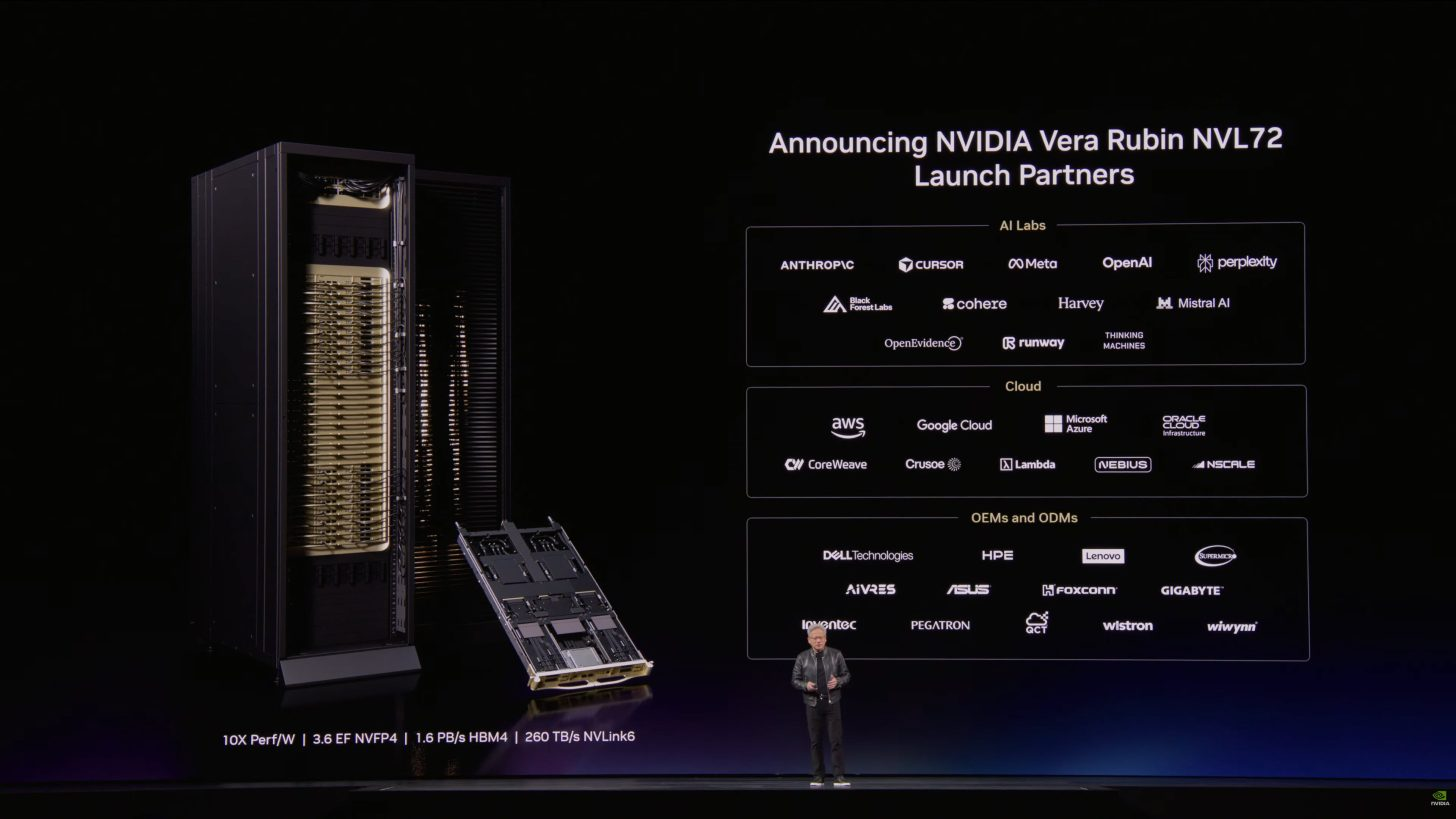

Commercial momentum looks broad. NVIDIA says Vera Rubin based products will be available from partners starting in the second half of 2026, with support spanning Amazon Web Services, Google Cloud, Microsoft Azure, Oracle Cloud Infrastructure, CoreWeave, Crusoe, Lambda, Nebius, Nscale and Together AI. On the systems side, NVIDIA says vendors including Cisco, Dell, HPE, Lenovo, Supermicro, Aivres, ASUS, Foxconn, GIGABYTE, Inventec, Pegatron, QCT, Wistron and Wiwynn are preparing infrastructure based on the platform.

The headline claim around 40 million times more compute in 10 years is not the core metric NVIDIA emphasized in the official product pages I reviewed, so it is best treated as a keynote framing point rather than the cleanest stand alone spec. The more grounded story is that NVIDIA is using Vera Rubin to redefine the AI data center as a unified product, not just a GPU refresh. For the industry, that is the real takeaway. This launch is about controlling the full performance stack from CPU to memory to interconnect to optics to inference specialization. For developers, cloud providers and enterprise buyers, Vera Rubin signals that the next competitive battleground is no longer just who has the biggest chip. It is who can deliver the most scalable, power efficient and production ready AI factory.

Does Vera Rubin look like the moment NVIDIA moved from selling accelerators to owning the entire AI factory conversation?