NVIDIA Feynman Roadmap Points to 3D Stacked Design, Custom HBM and a New Rosa CPU for 2028

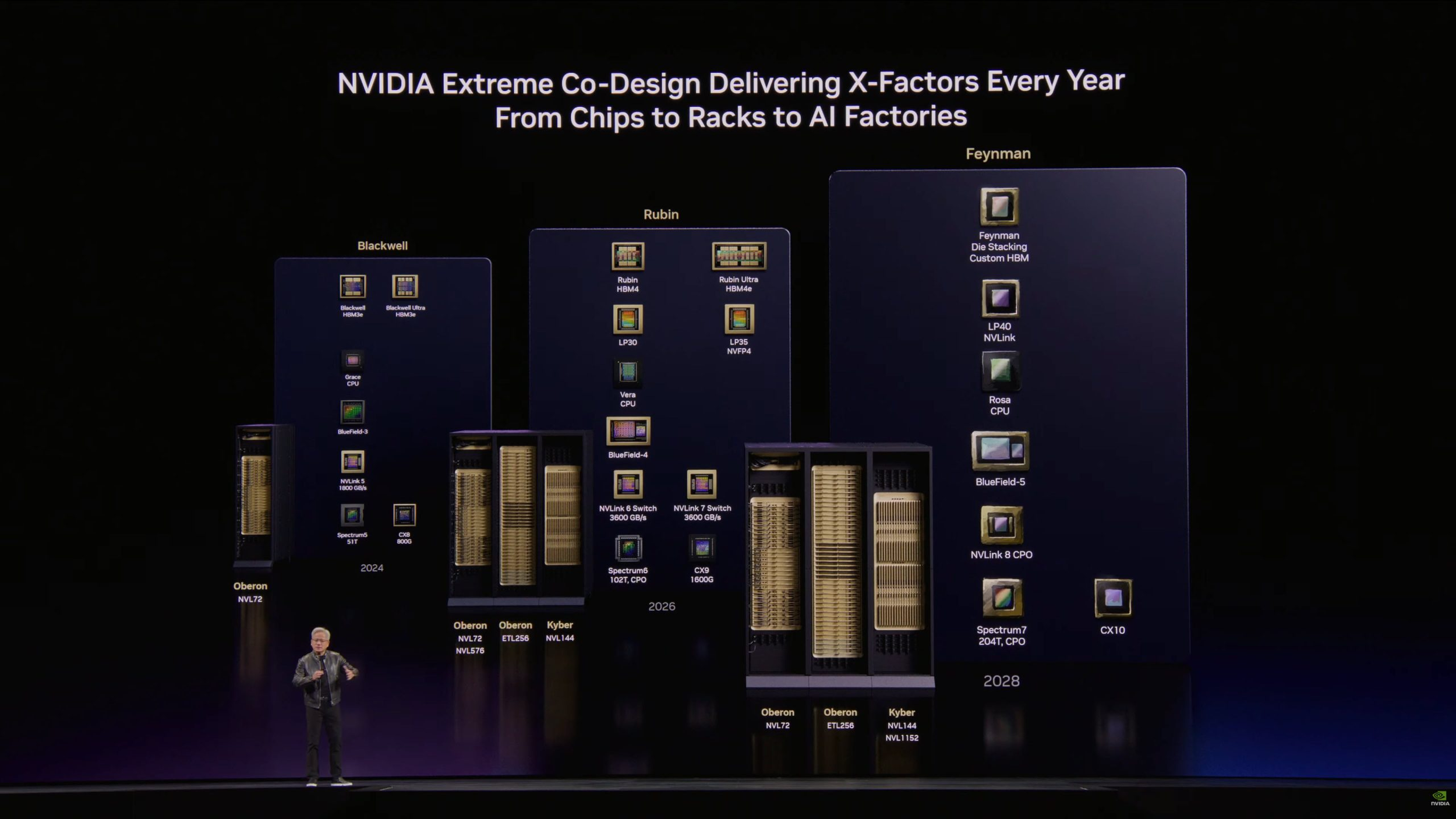

NVIDIA used GTC 2026 to put more shape around its long-term AI roadmap, and the biggest forward-looking reveal beyond Vera Rubin is clearly the Feynman generation, scheduled for 2028. NVIDIA’s own GTC live coverage confirms that Feynman will introduce a new CPU called Rosa, along with BlueField 5, CX10, and Kyber connectivity for both copper and co-packaged optics scale-up. Independent live coverage from Tom’s Hardware also reported that Huang presented Feynman as a 2028 platform with a new GPU, a new LPU, and the new Rosa CPU at its core.

The most talked-about detail from early third-party coverage is the claim that Feynman will move to 3D die stacking and a custom HBM approach. According to those reports, NVIDIA is now signaling custom HBM for Feynman rather than using standard next-generation HBM naming. If that direction holds, it would suggest NVIDIA wants even tighter control over memory bandwidth, thermals, power delivery, and packaging than it already has with Rubin and Rubin Ultra. At this stage, though, NVIDIA has not published a public technical brief that details Feynman’s exact memory specification or confirms a final HBM generation name.

That distinction matters, because there is a line between what NVIDIA has officially confirmed and what the supply chain and media are inferring from roadmap slides. Officially, NVIDIA has confirmed the Rosa name, the broader Feynman platform direction, and the 2028 timing. The 3D stacked GPU die claim and the custom HBM interpretation are, for now, based on third-party reporting rather than a standalone NVIDIA technical announcement. In practical terms, the industry should treat those details as strong signals, but not yet as fully documented product specifications.

One important correction also needs to be made around the Rosa name. An early circulating summary tied Rosa to Rosalyn Sussman Yalow, but NVIDIA’s official GTC 2026 live blog says Rosa is actually named after Rosalind Franklin, the scientist whose X-ray crystallography work helped reveal the structure of DNA. That makes the branding more consistent with NVIDIA’s recent habit of naming major architectures after landmark scientific figures.

There is also growing discussion around Intel’s possible role in the Feynman era. TrendForce said NVIDIA could potentially leverage Intel packaging technologies such as EMIB for future Feynman products, while Intel has separately confirmed its Xeon 6 role inside DGX Rubin NVL8 systems. Even so, no official NVIDIA source I reviewed directly confirms that Intel will package or manufacture Feynman. That part of the story remains a supply-chain-level possibility rather than an official design disclosure.

From a market perspective, the bigger strategic signal is that NVIDIA is extending its platform play far beyond just the GPU. Reuters reported that Huang used GTC 2026 to reinforce a roadmap that stretches from Rubin to Feynman while doubling down on inference, networking, and full AI factory infrastructure. In other words, Feynman is not shaping up as just another silicon refresh. It looks like the next stage of NVIDIA’s effort to own compute, memory, interconnect, storage, and deployment architecture as one integrated stack.

If the 3D die stacking and custom HBM pieces are ultimately confirmed in full, Feynman could become one of NVIDIA’s most important architecture shifts of the decade. A 3D stacked GPU design would open the door to more aggressive transistor density and shorter data paths, while a custom HBM solution could give NVIDIA another lever to differentiate performance in large-scale training and inference. For hyperscalers, frontier labs, and enterprise AI builders, that is the kind of vertical optimization that can decide who wins the next phase of AI infrastructure. For now, the safest conclusion is this: Feynman is real, Rosa is official, 2028 remains the target, and the most exciting technical details are beginning to emerge, even if some of them still sit in the rumor-to-roadmap transition zone.

Do you think NVIDIA’s biggest long-term advantage will come from raw GPU performance, or from controlling the entire AI platform stack from CPU to memory to optics?