NVIDIA Adds Groq 3 LPX to Vera Rubin to Push Harder Into Low Latency AI Inference

NVIDIA has officially unveiled NVIDIA Groq 3 LPX, a new rack scale low latency inference accelerator designed for the NVIDIA Vera Rubin platform, marking one of the clearest signs yet that the company wants to expand more aggressively into the inference segment of the AI market. NVIDIA describes Groq 3 LPX as part of the Vera Rubin platform and says it is purpose built for low latency, large context inference workloads, especially the kind tied to agentic AI and real time serving.

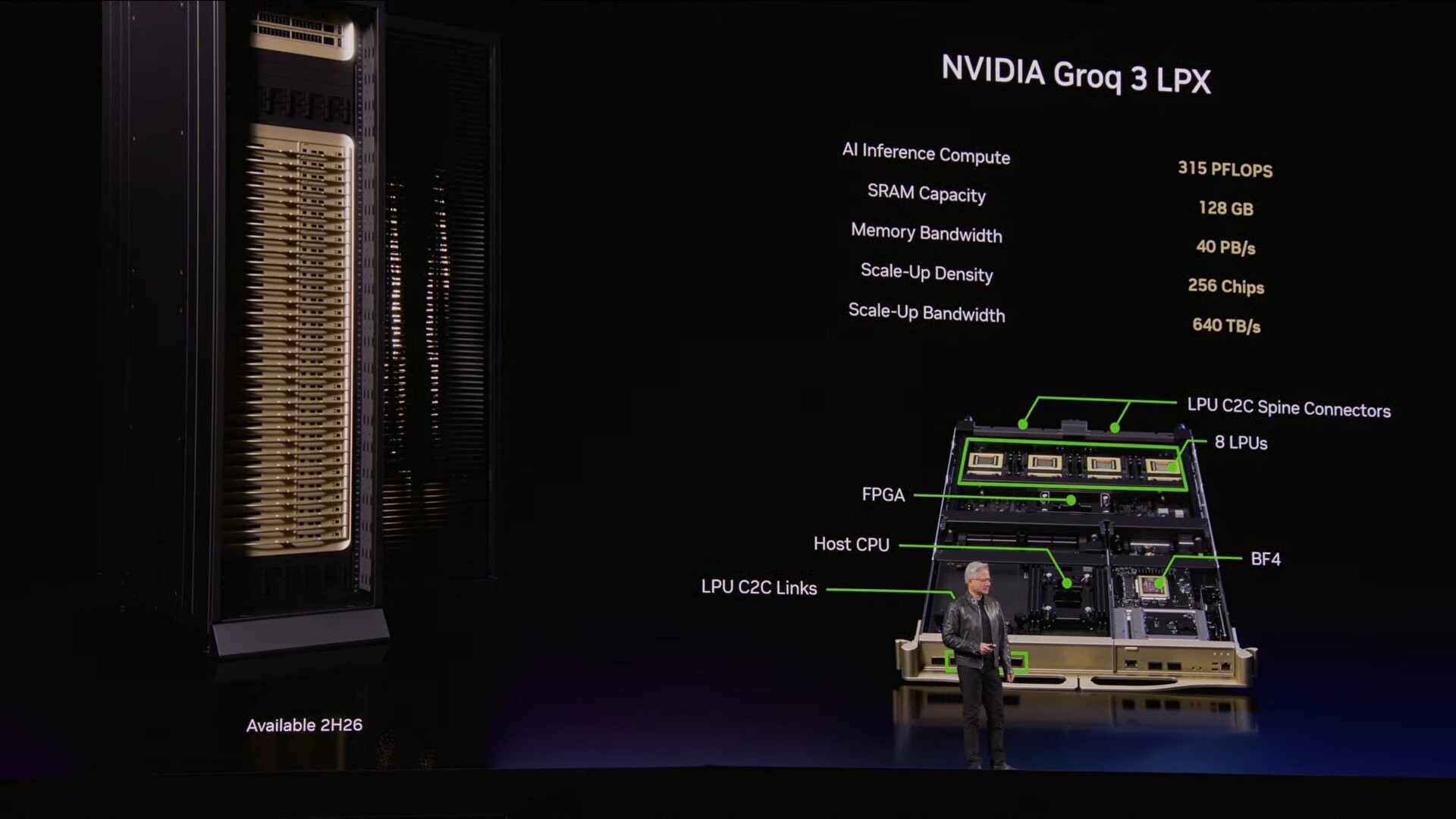

According to NVIDIA’s technical disclosure, Groq 3 LPX is a dedicated rack scale system that combines 256 Groq LPUs and is aimed at decode heavy inference tasks, while Vera Rubin handles the prefill stage. Reuters reported that NVIDIA is explicitly splitting inference into these 2 phases, with Rubin handling prefill and Groq handling decode, which gives the partnership a very practical architectural role rather than making it a vague branding alliance.

NVIDIA says the combined Groq 3 LPX and Vera Rubin approach delivers up to 35x higher inference throughput per megawatt for certain workloads. In the official technical blog, NVIDIA frames LPX as optimized for trillion parameter models and million token context scenarios, while saying the codesigned system is intended to maximize efficiency across power, memory, and compute.

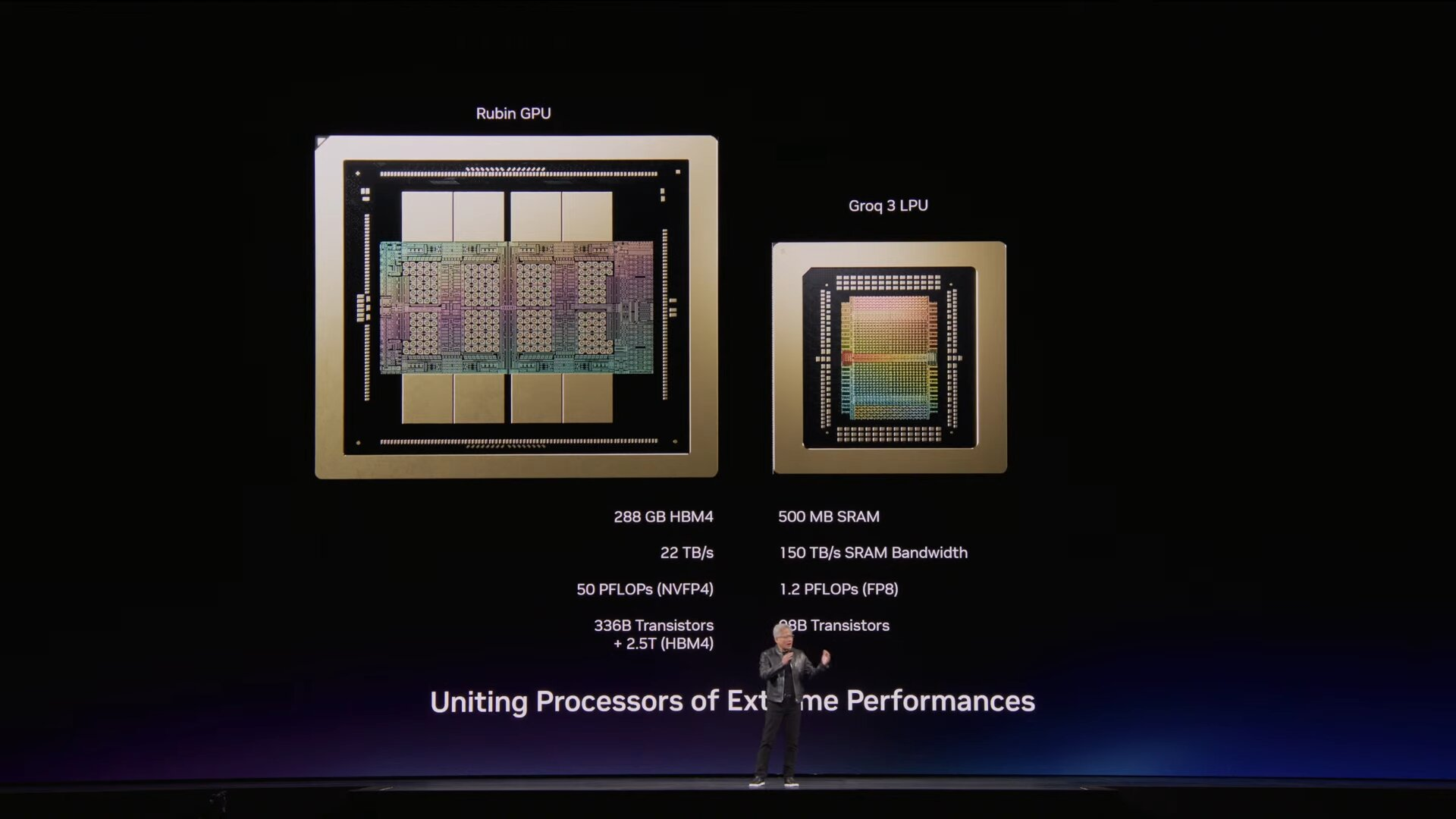

The published hardware details are substantial. NVIDIA says the LPX rack includes 256 LPUs, 128 GB of on chip SRAM, and 640 TB/s of scale up bandwidth. For each individual Groq 3 processor, NVIDIA lists 500 MB of SRAM, 150 TB/s of SRAM bandwidth, and 1.2 PFLOPS FP8 performance. NVIDIA’s technical blog also says the full Vera Rubin plus LPX tray reaches up to 315 PFLOPS of AI inference compute.

This matters because NVIDIA is clearly trying to address one of the biggest competitive openings in AI hardware right now. The company has dominated training infrastructure, but low latency inference has become a more contested battleground, with specialized rivals pushing harder on efficiency, responsiveness, and token economics. Reuters noted ahead of GTC that NVIDIA was expected to reveal products tied to its Groq deal as part of a broader push into inference and agentic AI.

There is also a strategic nuance here. NVIDIA is not presenting Groq 3 LPX as a replacement for Rubin, but as a complementary component inside the Vera Rubin ecosystem. That suggests NVIDIA sees the best path into ultra fast inference not as forcing one chip type to do everything, but as pairing different compute elements for different parts of the pipeline. Based on NVIDIA’s own split between prefill and decode, this is a hybrid architecture play rather than a pure Rubin only expansion.

At the same time, it is important to avoid overstating the market framing. The claim that NVIDIA has “never been first” in inference is too broad to treat as settled fact. What is clearly supported is that NVIDIA is now making inference a major GTC focus and is introducing Groq based products specifically to strengthen its position there. Reuters, Barron’s, and Business Insider all describe inference as a central theme of GTC 2026 and part of NVIDIA’s effort to capture the next wave of AI demand.

This announcement also fits into Jensen Huang’s wider GTC message that AI has hit an “inflection point” around inference. NVIDIA is increasingly arguing that the next infrastructure boom will be driven not just by training bigger models, but by serving them continuously, cheaply, and with lower latency in production. Groq 3 LPX looks like one of the company’s most direct answers to that challenge so far.

So the bigger takeaway is not just that NVIDIA and Groq are working together. It is that NVIDIA is formalizing a new inference architecture inside the Rubin era stack, and doing so in a way that acknowledges inference has different priorities than training. If Groq 3 LPX performs in the field the way NVIDIA claims on paper, this could become one of the more important product moves in the company’s effort to stay dominant as AI spending shifts toward live, agent driven deployment.

What do you think, is NVIDIA’s Groq powered LPX approach the right way to attack low latency inference, or do you still expect more pressure from specialized competitors in that part of the market?