NVIDIA Taps Taiwan’s Nanya Technology for Vera Rubin LPDDR5X Memory as Agentic AI Drives CPU Demand

NVIDIA is reportedly bringing Taiwan-based Nanya Technology into the memory supply chain for its next-generation Vera Rubin AI platform. The move marks a major milestone for Taiwan’s domestic memory industry. It is also another sign that AI infrastructure is entering a new phase in which CPU memory capacity matters almost as much as GPU acceleration.

According to reporting cited by UDN and summarized by Wccftech, Nanya Technology has been selected as a supply chain partner for the LPDDR5X memory used in NVIDIA’s Vera CPUs. This would make Nanya the first Taiwanese memory manufacturer to enter NVIDIA’s AI server main-memory supply chain, a space historically dominated by Korean and American memory suppliers.

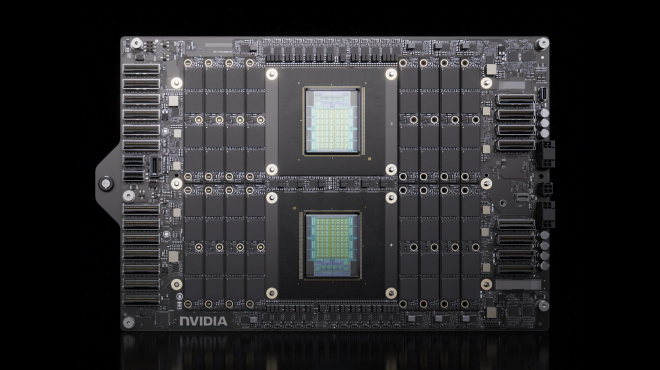

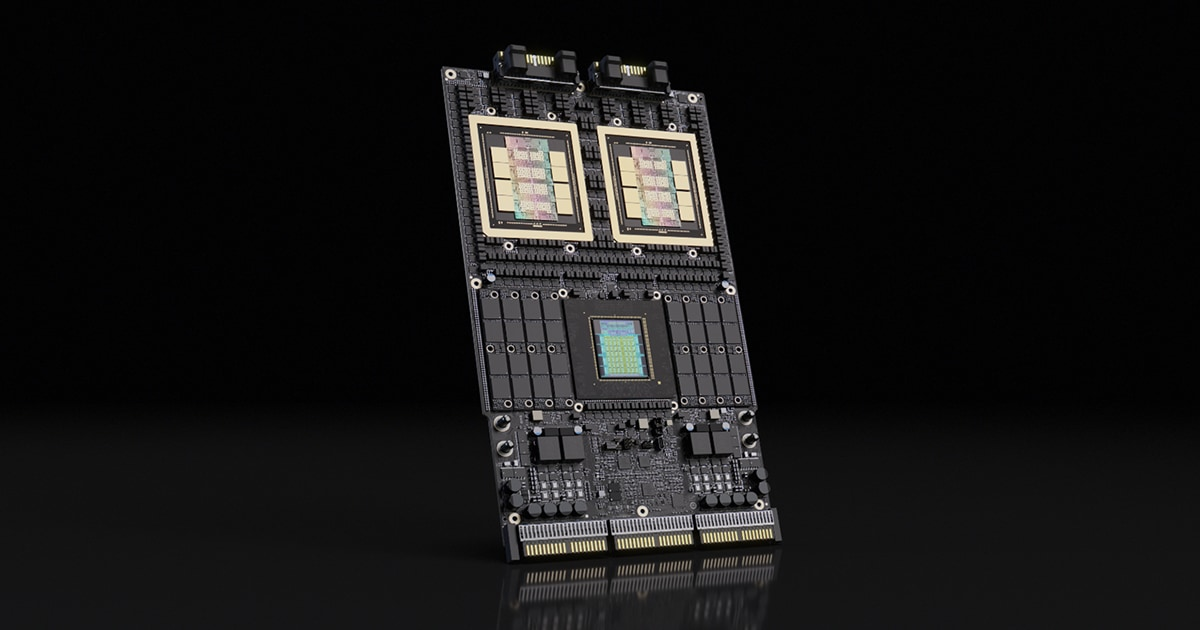

The Vera Rubin platform uses two memory classes for different parts of the system. Rubin GPUs use HBM4, which is designed for extremely high bandwidth and compact integration close to the accelerator. Vera CPUs use LPDDR5X, which is more power efficient and offers higher memory capacity for CPU-side workloads. This split matters because AI infrastructure is no longer just about feeding GPUs with HBM. As agentic AI grows, CPUs need much larger memory pools to manage orchestration, context, scheduling, data movement, and multi-agent workflows.

NVIDIA has already been working with major memory suppliers such as Micron and SK hynix on SOCAMM2 memory solutions for Vera Rubin, but adding Nanya expands the supplier base and gives NVIDIA more flexibility as demand scales. HBM4 remains difficult to manufacture and is produced by only a few major suppliers, whereas LPDDR5X is manufactured more widely across the memory industry. That makes diversifying LPDDR5X suppliers both more achievable and especially valuable as NVIDIA prepares for massive Vera Rubin demand.

For Nanya Technology, this is a major win. Taiwanese memory makers have traditionally been stronger in consumer and commodity DRAM than in AI server main memory for leading-edge platforms. The reported qualification suggests that Nanya has met NVIDIA’s demanding AI platform requirements, which is especially notable given the technical gap many local memory manufacturers have faced in the AI server segment.

The Vera Rubin platform is expected to deliver a major memory upgrade over Grace Blackwell generation servers. Each Vera Rubin Superchip reportedly supports up to 1.5 TB of LPDDR5X memory with up to 1.2 TB/s of bandwidth, a 3x increase in memory capacity and a bandwidth increase of more than 50% compared with the previous Grace Blackwell generation. At rack scale, 256 Vera CPUs can provide up to 400 TB of memory and up to 315 TB/s of aggregate bandwidth.

| Specification | NVIDIA Vera CPU Rack | NVIDIA Vera CPU |
|---|---|---|
| Configuration | 256 Vera CPUs | 1 Vera CPU |
| Cores / Threads | 22,528 NVIDIA Olympus cores, 45,056 threads | 88 NVIDIA Olympus cores, 176 threads |
| Memory Capacity | Up to 400 TB | Up to 1.5 TB |
| Aggregate Bandwidth | Up to 315 TB/s | Up to 1.2 TB/s |
| N/S Networking | NVIDIA BlueField-4 DPU | N/A |
| Cooling | Liquid Cooled | N/A |
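The rack-level figures are essentially the per-CPU specifications multiplied by 256. The short Python sketch below does that arithmetic. It assumes simple linear aggregation, and the constant and function names are illustrative only, not part of any NVIDIA tool or API.

```python
# Per-CPU "up to" figures as listed in the table (rounded values).
VERA_CORES = 88
VERA_THREADS = 176
VERA_MEMORY_TB = 1.5
VERA_BANDWIDTH_TBPS = 1.2
CPUS_PER_RACK = 256


def rack_totals(cpus: int = CPUS_PER_RACK) -> dict:
    """Scale per-CPU figures to rack level, assuming simple linear aggregation."""
    return {
        "cores": VERA_CORES * cpus,
        "threads": VERA_THREADS * cpus,
        "memory_tb": VERA_MEMORY_TB * cpus,
        "bandwidth_tbps": VERA_BANDWIDTH_TBPS * cpus,
    }


if __name__ == "__main__":
    for name, value in rack_totals().items():
        print(f"{name}: {value:,}")
```

The core and thread counts match the table exactly (22,528 and 45,056). Memory and bandwidth land at about 384 TB and 307 TB/s, a little below the published "up to 400 TB" and "up to 315 TB/s", presumably because the per-CPU figures are rounded.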

This scale explains why LPDDR5X matters so much. In a traditional AI training system, GPUs and HBM dominate the discussion because model training is extremely compute- and bandwidth-intensive. Agentic AI, however, changes the system balance. Agentic workloads require CPUs to coordinate complex workflows, manage long-running tasks, route requests, invoke tools, maintain agent memory, and support real-time inference pipelines. Even when newer models reduce KV cache usage through compression, the overall need for large, efficient CPU memory remains significant.

That is why Vera is just as strategically important as Rubin. Rubin GPUs will provide the accelerator power, while Vera CPUs will handle much of the orchestration and system-level work around those accelerators. With agentic AI pushing CPU-side infrastructure harder, NVIDIA needs a deep and stable LPDDR5X supply chain to support rack-scale deployments.

Nanya’s reported entry also strengthens Taiwan’s position beyond foundry and packaging. Taiwan is already central to AI hardware through TSMC’s advanced process nodes and CoWoS packaging ecosystem. If Taiwanese memory makers can also qualify for next-generation AI server memory, the country’s role in the AI supply chain becomes even more strategic.

For NVIDIA, the move is practical. Vera Rubin platforms will require enormous memory volumes, and relying too heavily on a small group of suppliers could create bottlenecks. Bringing Nanya into the supply chain helps reduce risk, improves sourcing flexibility, and prepares NVIDIA for large-scale deployment as AI customers continue expanding their infrastructure.

This also reflects a broader industry trend: memory is becoming one of the most important constraints in AI. HBM shortages have already affected accelerator availability, while LPDDR5X demand is rising as AI servers require more CPU-side capacity and bandwidth. Companies that can secure enough memory supply will have a major advantage in delivering systems on schedule.

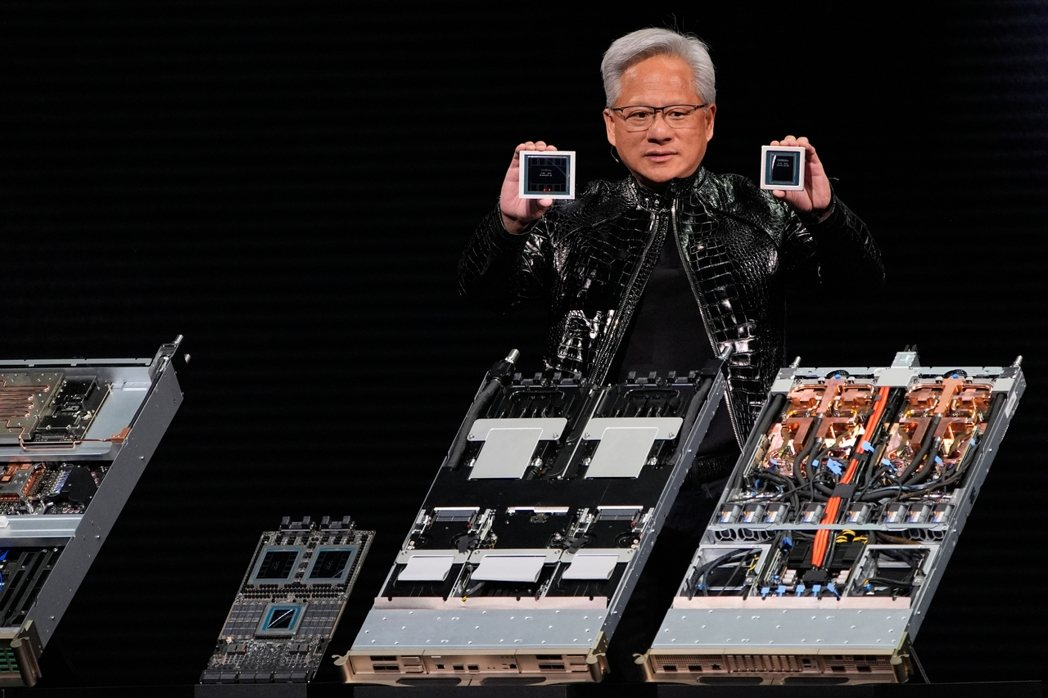

The Vera Rubin generation is expected to be one of NVIDIA’s most important platform transitions, combining HBM4-equipped Rubin GPUs with high-capacity, LPDDR5X-based Vera CPUs. If Nanya’s selection is confirmed at scale, it would represent a meaningful shift in the AI memory supply chain and a major validation point for Taiwan’s memory industry.

As AI workloads become more agentic, distributed, and memory-hungry, NVIDIA’s decision to diversify LPDDR5X suppliers may prove just as important as its GPU roadmap.

Will Nanya’s reported role in Vera Rubin help Taiwan become a bigger player in AI memory, or will Korean and American suppliers continue to dominate the market?