Intel’s Xeon 6 Enters NVIDIA DGX Rubin NVL8 Systems as the Host CPU for Agentic AI Infrastructure

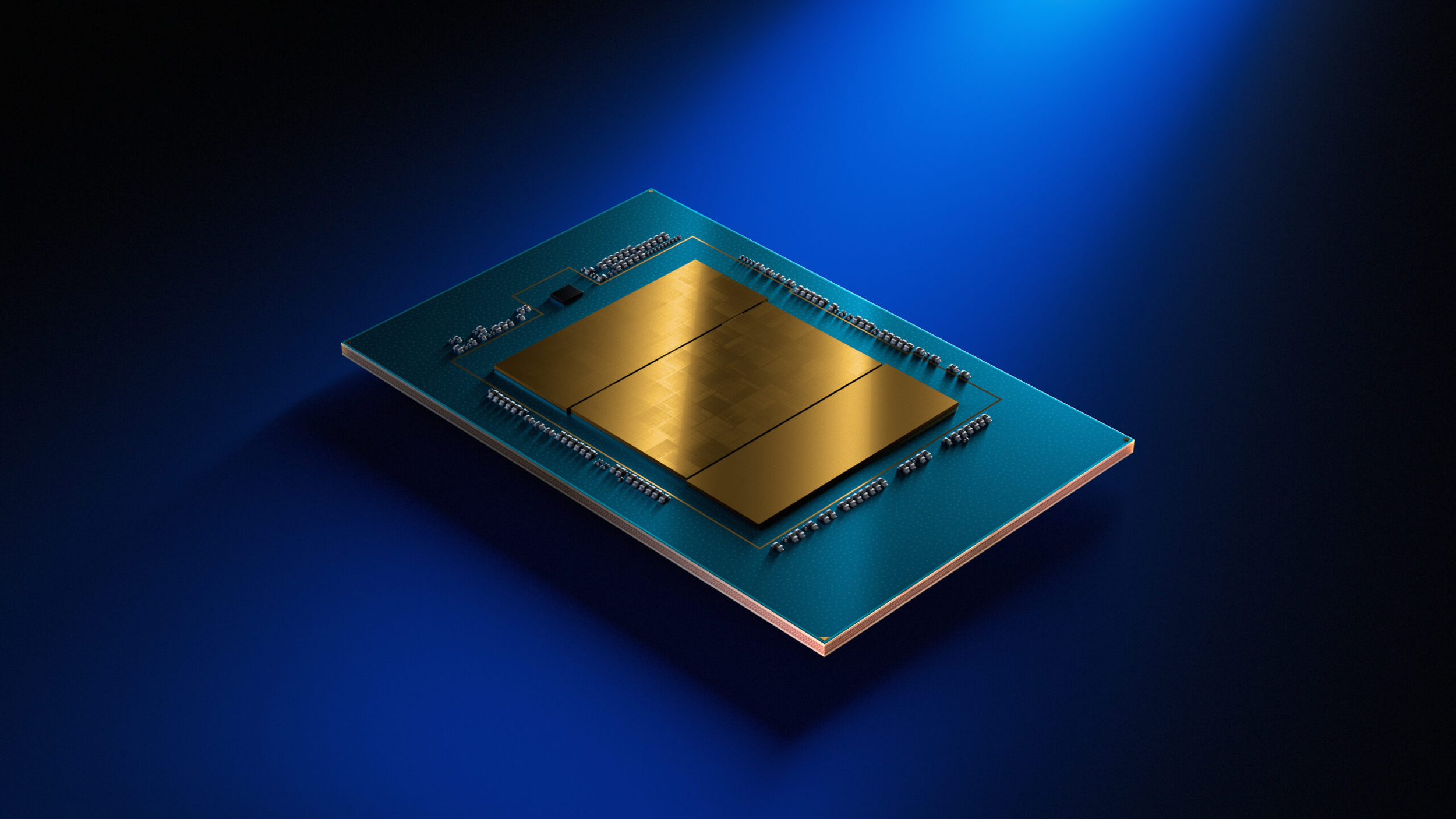

Intel has secured an important position inside NVIDIA’s next-generation AI platform stack, with the company confirming that Xeon 6 processors will serve as the host CPUs for NVIDIA DGX Rubin NVL8 systems. Intel announced the milestone during NVIDIA GTC 2026, describing Xeon 6 as the processor foundation for orchestrating, scaling, and securing modern AI infrastructure as workloads increasingly shift toward large-scale, real-time inference and agentic AI.

That is a meaningful development for Intel because it places Xeon directly inside one of NVIDIA’s flagship Rubin-era configurations at a time when the role of the CPU is becoming more visible again in AI server design. Intel says host CPUs are becoming increasingly important for functions such as orchestration, memory management, data movement, and model security, all of which matter more as AI systems move beyond raw training and toward more complex inference and multi-agent deployment models.

Intel’s official framing is that Xeon 6 complements GPU-accelerated inference by handling mission-critical host tasks while improving performance per watt, supporting the broader AI software ecosystem, and enabling more effective orchestration across heterogeneous compute environments. The company also specifically highlighted new support for NVIDIA Dynamo, which it says will help enable heterogeneous inference across CPUs and forthcoming GPUs.

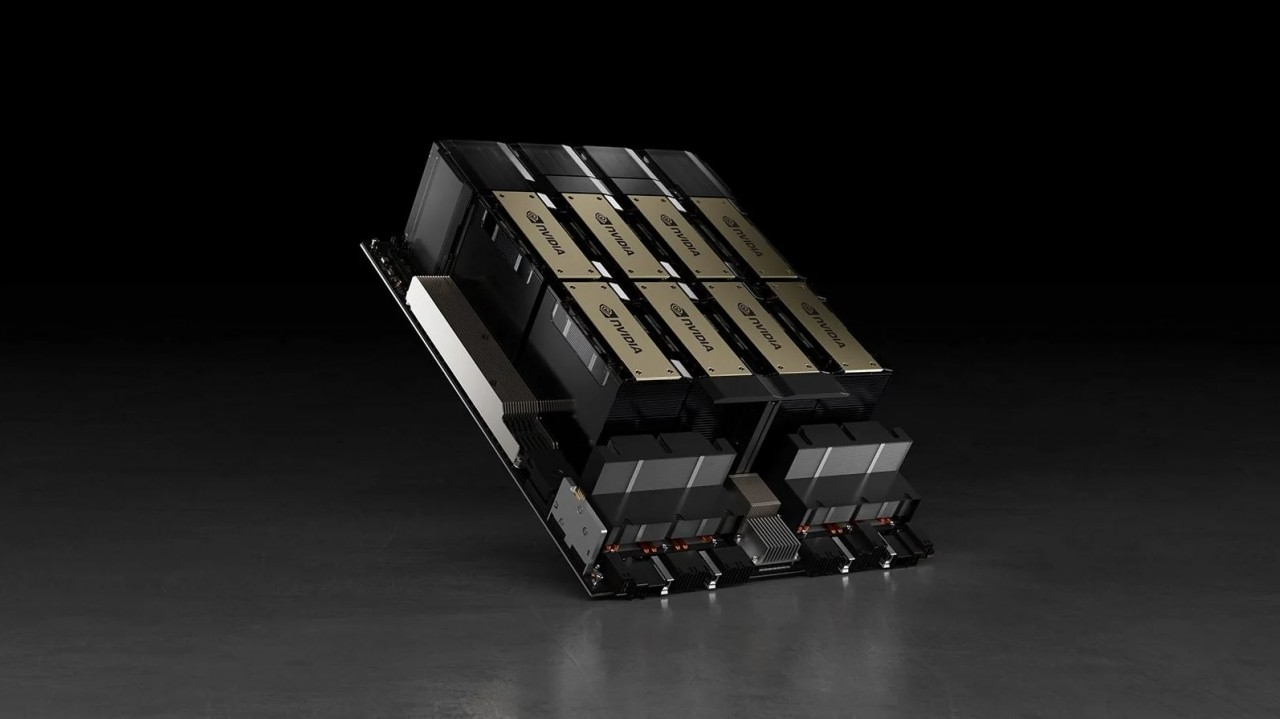

On NVIDIA’s side, the official DGX Rubin NVL8 page describes the platform as an 8-GPU Rubin system designed for agentic AI, with up to 400 petaFLOPS of inference performance and 160 TB/s of HBM bandwidth. NVIDIA’s product page focuses primarily on the GPU side and system architecture, while Intel’s announcement fills in the important host CPU detail by confirming that Xeon 6 is part of that baseline NVL8 design.
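To put those system-level figures in per-GPU terms, the short Python sketch below simply divides each headline number by the eight GPUs in the system. It assumes the totals are spread evenly across the GPUs, which neither company states explicitly, and the constant and function names are illustrative rather than anything from NVIDIA or Intel.

```python
# Headline "up to" figures quoted for DGX Rubin NVL8 (system-level peaks).
GPU_COUNT = 8
SYSTEM_INFERENCE_PFLOPS = 400      # petaFLOPS of inference performance
SYSTEM_HBM_BANDWIDTH_TBPS = 160    # TB/s of aggregate HBM bandwidth


def per_gpu_share(system_total, gpu_count=GPU_COUNT):
    """Return the per-GPU share of a system-level figure.

    Assumption: the system total is divided evenly across all GPUs.
    """
    return system_total / gpu_count


per_gpu_pflops = per_gpu_share(SYSTEM_INFERENCE_PFLOPS)
per_gpu_hbm_tbps = per_gpu_share(SYSTEM_HBM_BANDWIDTH_TBPS)

print(f"Per-GPU inference (peak): {per_gpu_pflops:g} petaFLOPS")
print(f"Per-GPU HBM bandwidth:    {per_gpu_hbm_tbps:g} TB/s")
```

Under that even-split assumption, the quoted totals work out to 50 petaFLOPS and 20 TB/s per GPU. Both are peak figures, so sustained numbers on real workloads will be lower.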

What is especially notable here is the continuity. Intel says the Xeon 6 integration in DGX Rubin NVL8 builds on earlier collaboration in NVIDIA systems such as DGX B300 and DGX H200, reinforcing the idea that NVIDIA still sees a strong role for x86 host processors even as it develops more of its own vertically integrated AI platform technologies.

There is, however, an important limit to what has been confirmed so far. Intel has not publicly specified the exact Xeon 6 SKU in its official release, and neither Intel nor NVIDIA has announced that the same Xeon 6 host CPU arrangement extends across the full Rubin rack-scale lineup. That means claims about broader adoption beyond DGX Rubin NVL8 remain speculative unless and until NVIDIA or its partners formally confirm them.

In fact, the wider Rubin ecosystem appears more mixed and still in motion. While Intel has officially confirmed Xeon 6 in DGX Rubin NVL8, NVIDIA’s broader Rubin messaging also includes its own Vera CPU roadmap for future rack-scale AI systems. At the same time, some partner announcements around Rubin-based rack deployments are already referencing Xeon 6 in specific integrated solutions, which suggests the market may not be moving toward a single CPU story across every Rubin configuration. That points to a more flexible infrastructure strategy rather than a complete handoff to one processor path.

From an industry standpoint, this is a strategically valuable win for Intel. The company does not need to displace NVIDIA in accelerators to remain relevant in AI infrastructure. If Xeon can become the trusted host processor layer inside high-value NVIDIA systems, Intel keeps a meaningful foothold in the fastest-growing part of the data center market. In practical terms, this also helps reinforce the argument that agentic AI is not making CPUs less important, but more important in a different way. That last point is an inference drawn from Intel’s official explanation of the host CPU role and the structure of DGX Rubin NVL8, not something either company has stated outright.

For now, the confirmed takeaway is clear: Xeon 6 is officially inside NVIDIA DGX Rubin NVL8 systems, and Intel is using that announcement to position itself as a mission-critical part of the AI inference stack. The next question is whether this remains a focused NVL8 deployment or becomes the foundation for broader Intel participation across more of NVIDIA’s Rubin-era infrastructure.

What do you think: is Intel’s role as the host CPU inside DGX Rubin NVL8 a meaningful long-term win, or just a limited foothold before NVIDIA pushes further toward its own CPU roadmap?