Google TurboQuant Will Not Solve the AI Memory Crunch as Rising Efficiency Keeps Expanding Overall Demand

When Google introduced TurboQuant in March 2026, the announcement immediately caught the attention of the AI and memory industries. Google said the new compression method could reduce key-value (KV) cache memory requirements by at least 6x in some long-context tests while preserving model accuracy, and also reported up to 8x faster attention logit computation over a 32-bit baseline on H100 GPUs in its experiments. On paper, that looked like exactly the kind of breakthrough that might finally cool the pressure on AI memory demand.

But that is not what has happened.

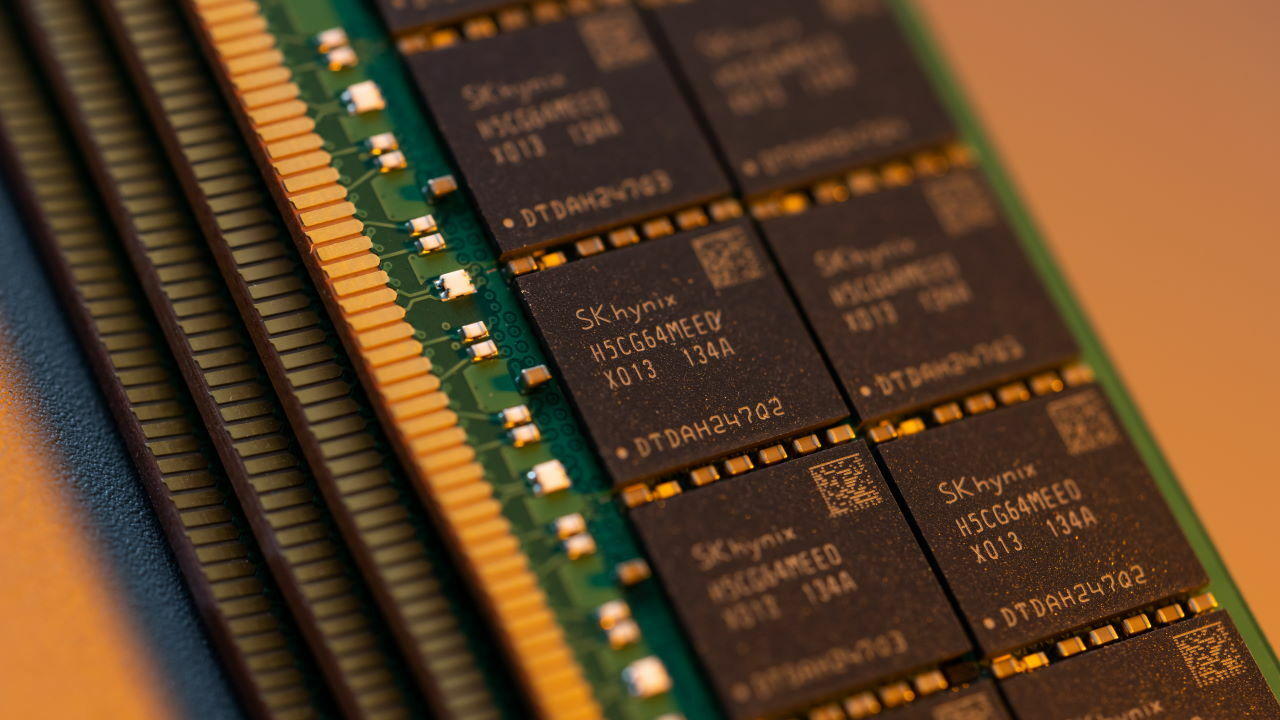

Instead of weakening the memory cycle, the market has stayed extremely tight. SK hynix reported record quarterly results this week, saying demand for AI memory still exceeds available capacity, while Reuters also reported that the company is investing about 19 trillion won, or roughly 12.85 billion dollars, in a new South Korean facility to support growing AI memory demand. That is not the behavior of an industry preparing for a sudden drop in memory needs. It is the behavior of a supply chain still racing to catch up.

The reason is simple, and SK hynix itself effectively explained it. In the company’s recent earnings comments, CFO Kim Woo-hyun said that software and hardware optimization is itself another driver of memory demand growth, because memory efficiency improvements tend to increase the amount of context that can be processed per unit of memory. In other words, when models get more efficient, companies do not just use less memory and stop there. They often use that efficiency to push bigger contexts, more inference, more users, and more ambitious services, which then expands the total market again. Reuters’ reporting on SK hynix’s results is consistent with that view: demand remains ahead of supply even as the industry keeps optimizing.
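To make the "more context per unit of memory" point concrete, here is a rough back-of-the-envelope sketch. The model shape (32 layers, 8 KV heads, head dimension 128), the 16-bit baseline cache, and the 16 GiB budget are illustrative assumptions, not figures from Google or SK hynix. Only the 6x ratio comes from Google's reported results, and the sketch treats it as a simple divisor rather than modeling how TurboQuant actually works.

```python
def kv_cache_bytes_per_token(num_layers: int, num_kv_heads: int,
                             head_dim: int, bytes_per_value: int) -> int:
    """Uncompressed KV cache size for one token.

    The factor of 2 accounts for storing both a key and a value
    vector in every layer.
    """
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value


def max_context_tokens(memory_budget_bytes: int, bytes_per_token: int,
                       compression_ratio: int = 1) -> int:
    """Tokens of context that fit in a fixed memory budget.

    compression_ratio is applied as a plain divisor on the per-token
    footprint (an assumption made for illustration).
    """
    return memory_budget_bytes * compression_ratio // bytes_per_token


# Hypothetical model shape and budget, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128
BUDGET_BYTES = 16 * 1024**3  # 16 GiB reserved for the KV cache

per_token = kv_cache_bytes_per_token(LAYERS, KV_HEADS, HEAD_DIM,
                                     bytes_per_value=2)  # 16-bit baseline
baseline_tokens = max_context_tokens(BUDGET_BYTES, per_token)
compressed_tokens = max_context_tokens(BUDGET_BYTES, per_token,
                                       compression_ratio=6)

print(f"KV cache per token: {per_token / 1024:.0f} KiB")
print(f"Context at baseline: {baseline_tokens:,} tokens")
print(f"Context at 6x compression: {compressed_tokens:,} tokens")
```

Under these assumptions the memory budget does not shrink at all. The same 16 GiB simply holds six times as much context, which is the behavior the SK hynix comment describes.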

That makes TurboQuant an economics story as much as a technology story. Google’s own research post presents TurboQuant as a way to reduce the KV cache bottleneck and lower memory costs for large language model inference and vector search. But lower cost per workload does not automatically reduce total demand. In fast-growing markets, it often does the opposite. Better efficiency makes more products viable, more requests affordable, and more features practical, which pulls even more usage into the system. That is exactly the kind of virtuous cycle SK hynix is now talking about.
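The arithmetic behind that claim can be sketched with a toy constant-elasticity demand model. This is an assumption made purely for illustration: neither Google nor SK hynix has published an elasticity figure, and the values below are hypothetical. The model assumes that the cost of a workload scales with the memory it uses, so a 6x efficiency gain cuts cost per workload to one sixth, and that the number of workloads demanded grows as cost falls.

```python
def total_memory_demand(base_total: float, efficiency_gain: float,
                        elasticity: float) -> float:
    """Total memory demand after an efficiency gain (toy model).

    Memory per workload falls by `efficiency_gain`, while the number
    of workloads rises by efficiency_gain ** elasticity, so the total
    scales by efficiency_gain ** (elasticity - 1).
    """
    return base_total * efficiency_gain ** (elasticity - 1.0)


BASE_TOTAL = 100.0  # arbitrary units of memory demand before the gain
GAIN = 6.0          # the 6x reduction reported by Google

# Hypothetical elasticities: 0 = usage never changes, 1 = usage exactly
# offsets the savings, above 1 = usage grows faster than memory shrinks.
for elasticity in (0.0, 1.0, 1.5):
    total = total_memory_demand(BASE_TOTAL, GAIN, elasticity)
    print(f"elasticity {elasticity:.1f}: total demand {total:.1f} units")
```

In this sketch, total demand only falls when usage responds weakly to lower costs. Once the elasticity exceeds 1, the same efficiency gain leaves the industry needing more memory than before, which is the scenario the article argues fits a fast-growing AI market.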

This is why the idea that one algorithm could “fix” the memory crisis was always too optimistic. TurboQuant appears to be a meaningful technical improvement, and Google’s reported results are impressive. But the AI market is not constrained only by waste. It is constrained by explosive growth. Every time the industry gets better at fitting more intelligence into the same memory footprint, it tends to respond by scaling up the workload, not by freezing demand at the old level.

That broader pattern is also visible in the capital spending now surrounding AI memory. SK hynix is not acting as if efficiency gains are about to erase the need for more supply. It is building more capacity, accelerating investment, and treating AI memory as a sustained long-term demand driver. Reuters noted that the company sees demand for AI chips remaining strong enough to support favorable pricing and continuing expansion. That signals a market where optimization and supply growth are moving together, not one where optimization is replacing the need for new memory production.

So the real takeaway is not that TurboQuant failed. It is that people misunderstood what success would look like. If TurboQuant works as advertised, it should make AI systems more efficient and more economically attractive. But in a market growing this fast, that does not relieve pressure on memory. It can intensify it by making larger and more frequent deployments possible. Google may have helped reduce memory use per task, but that is very different from reducing memory demand across the whole industry.

For the semiconductor sector, that means the memory crunch is still fundamentally a scale problem. Software breakthroughs can improve utilization, but they do not cancel the need for more fabs, more advanced packaging, more HBM, and more system memory capacity. If anything, they make the next stage of AI expansion more achievable, which only keeps the pressure on suppliers.

The AI industry will absolutely benefit from technologies like TurboQuant. It just should not expect them to slow the memory race. They are more likely to accelerate the next wave of it.

What do you think will have a bigger impact on the AI market from here: better memory efficiency, or simply building a lot more memory capacity?