AMD Raises Server CPU Outlook to 120 Billion Dollars as Lisa Su Says EPYC Verano Is Built Specifically for AI Infrastructure

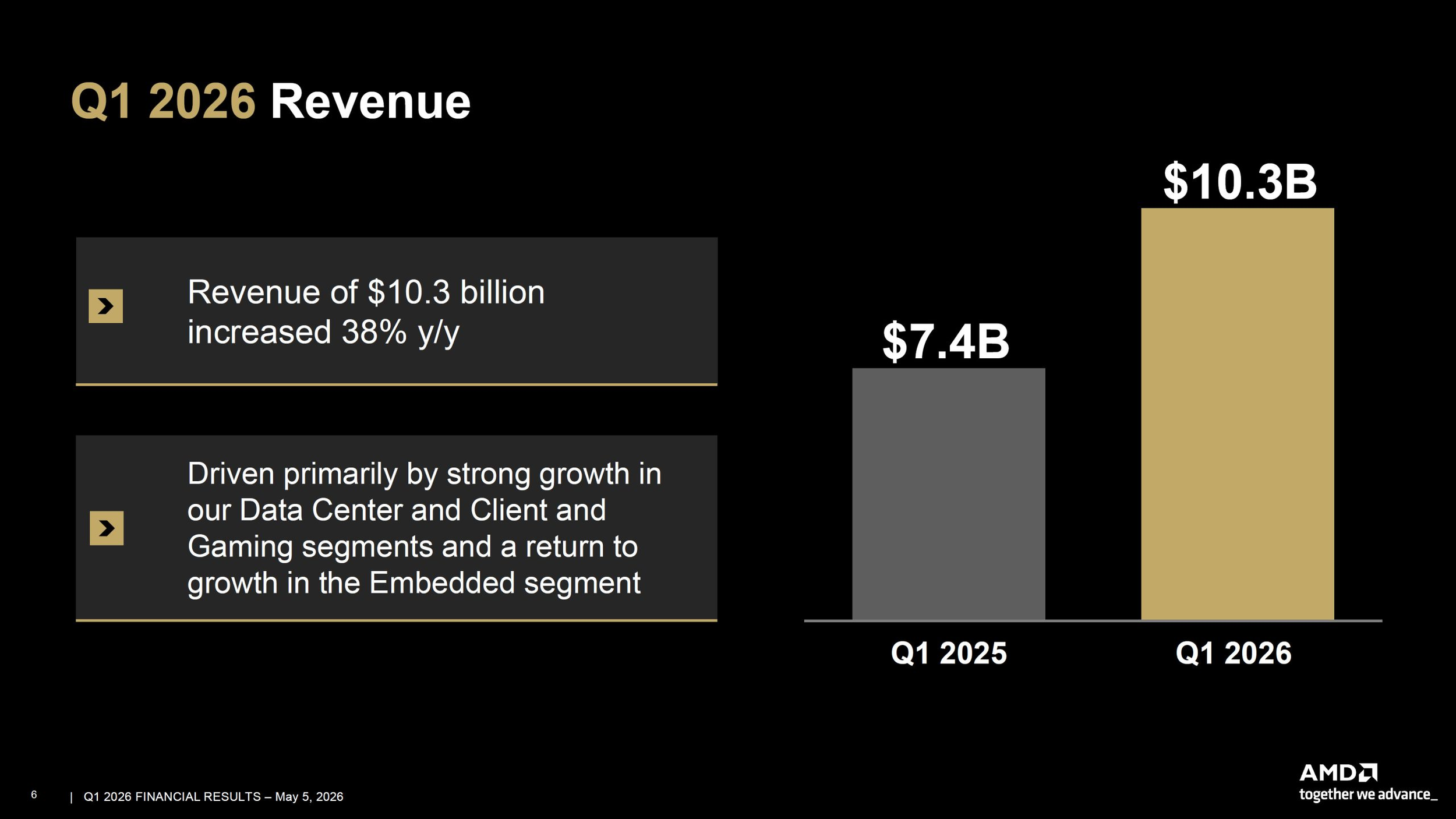

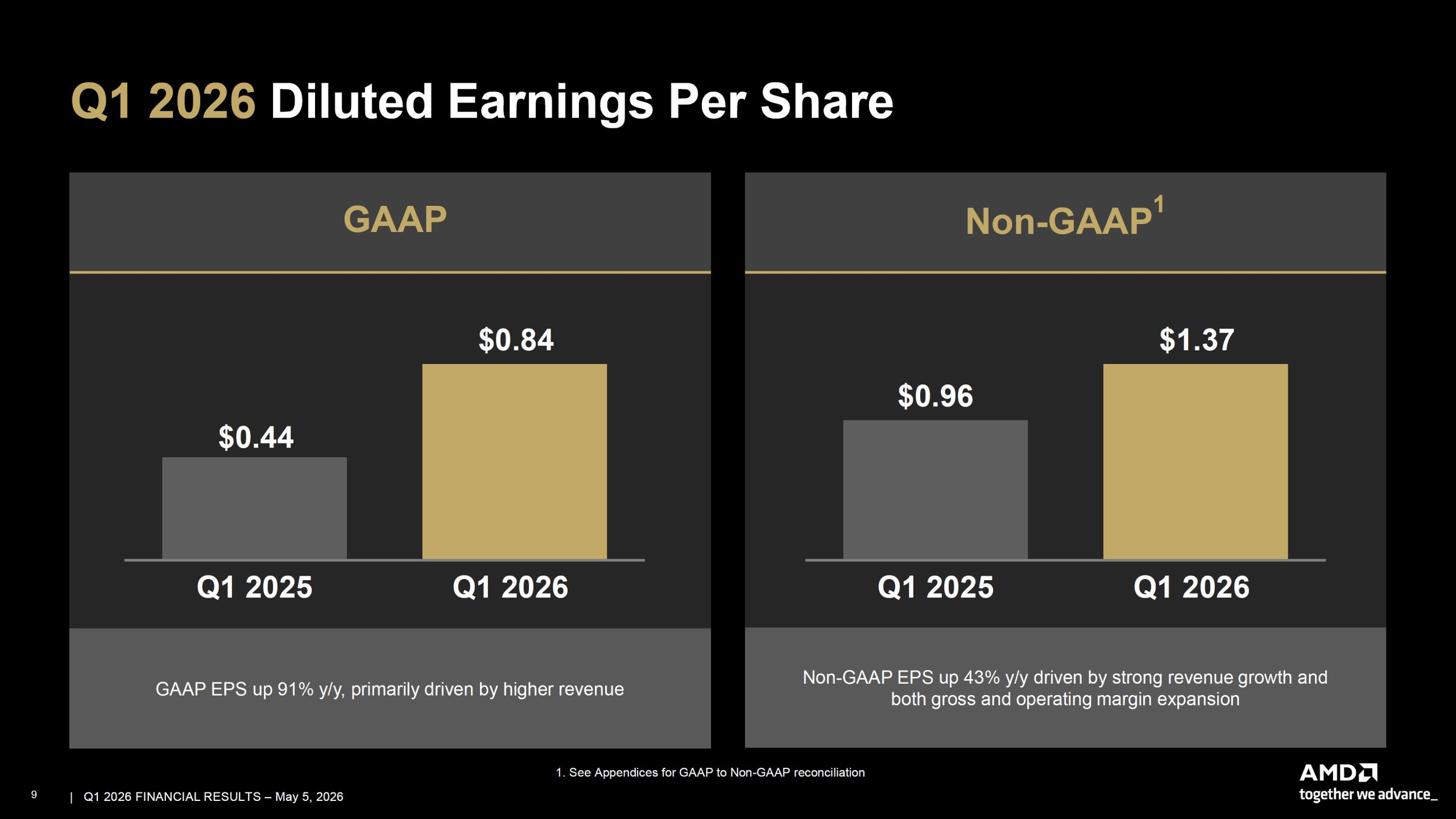

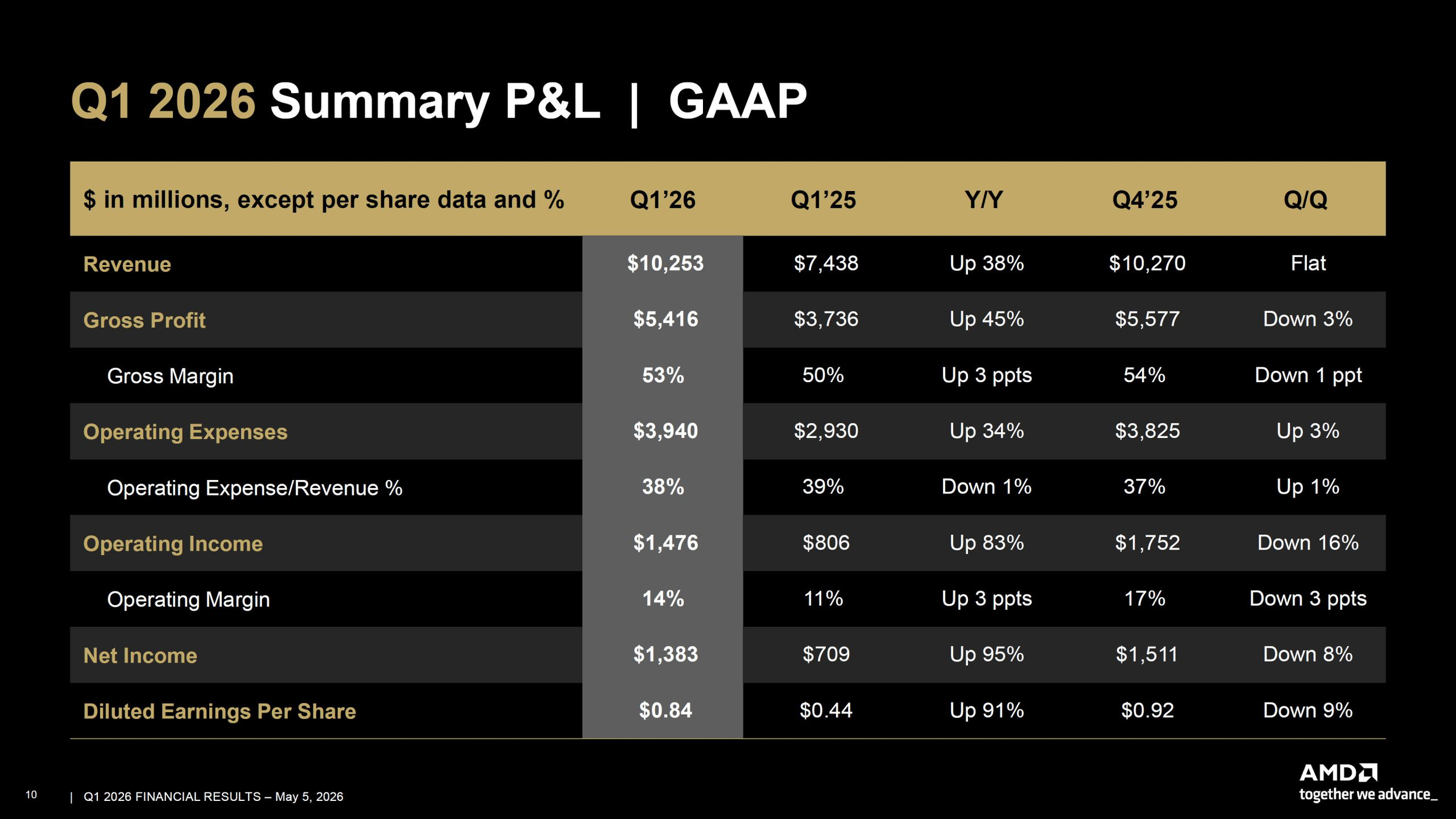

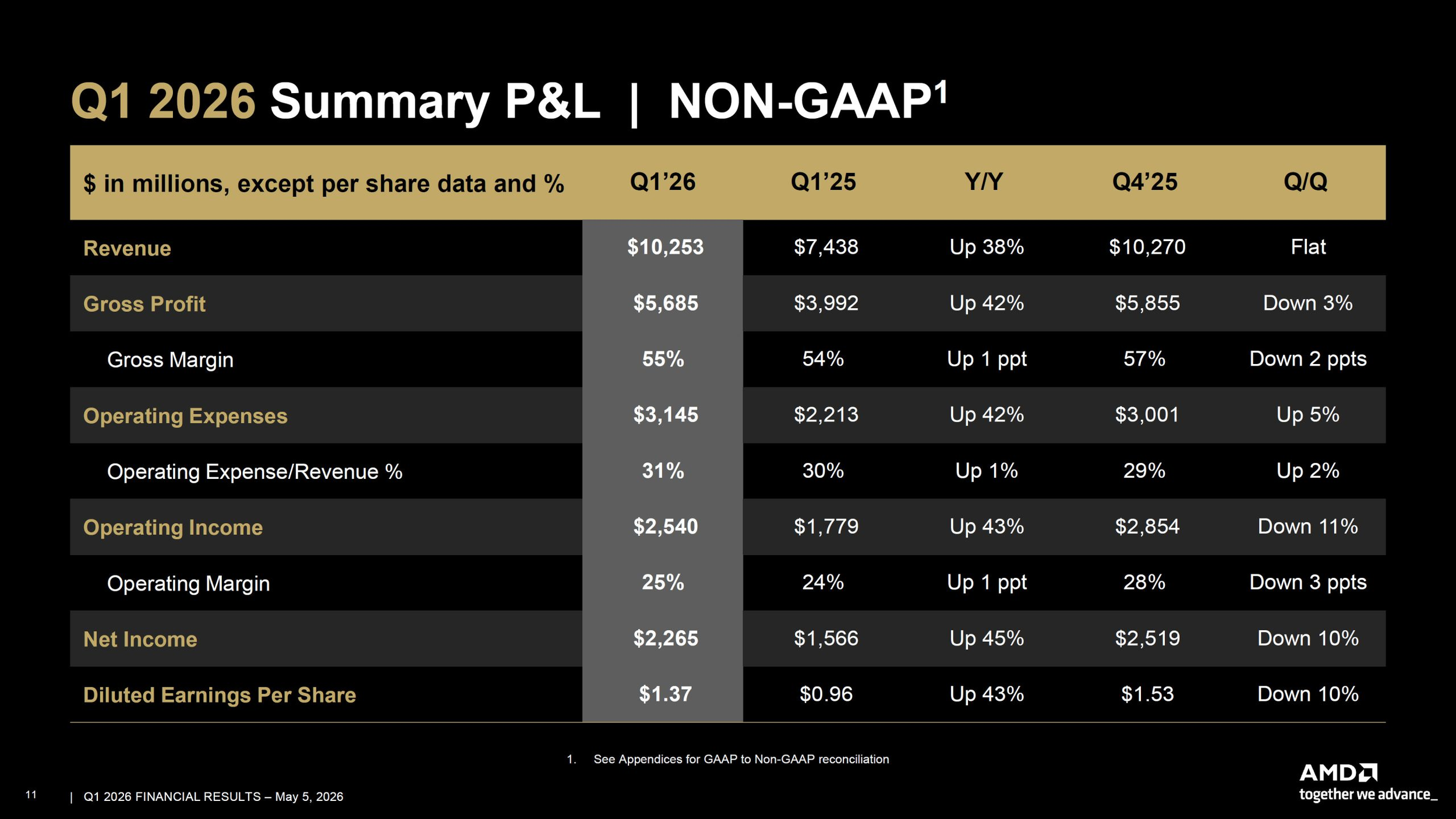

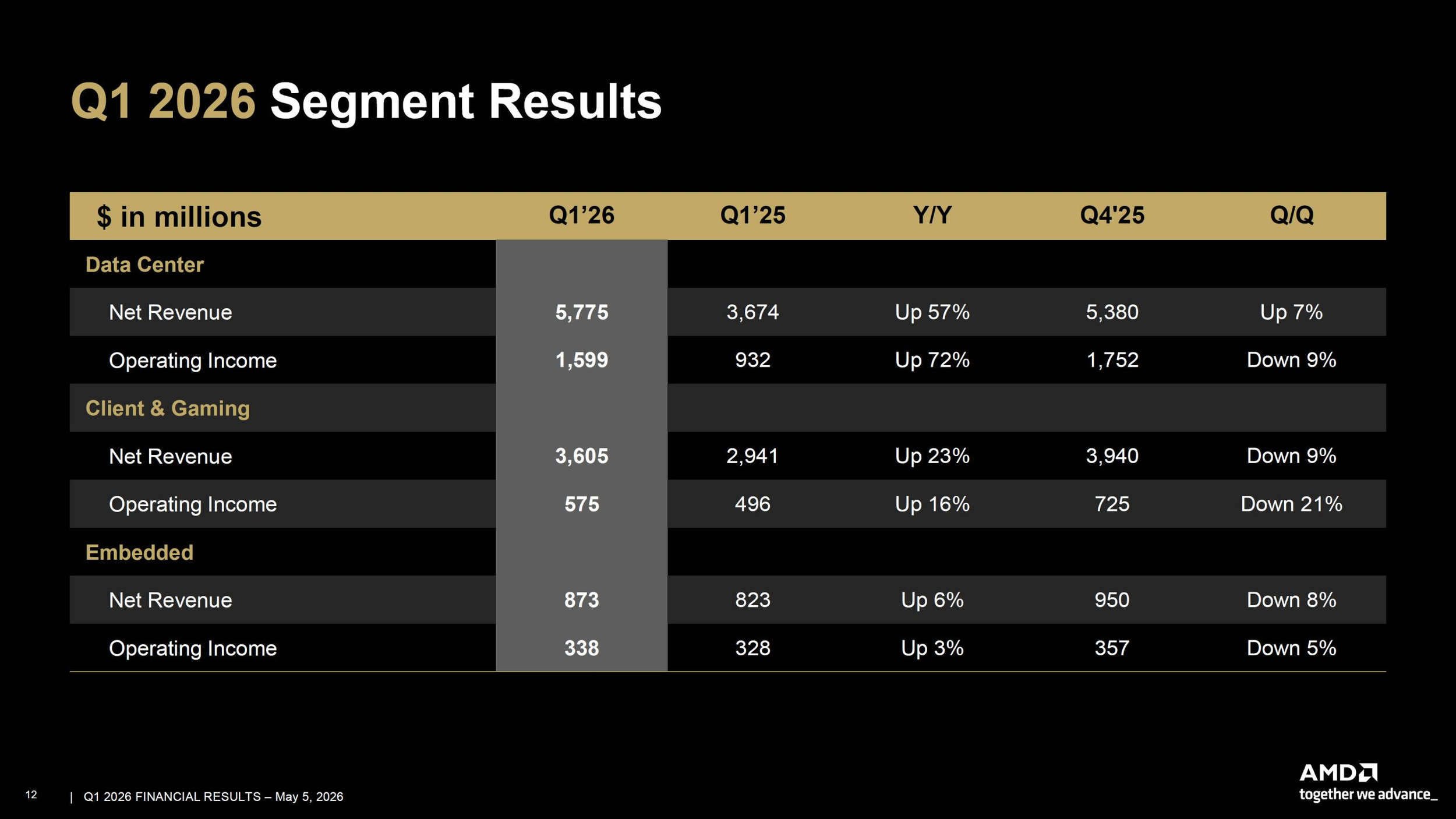

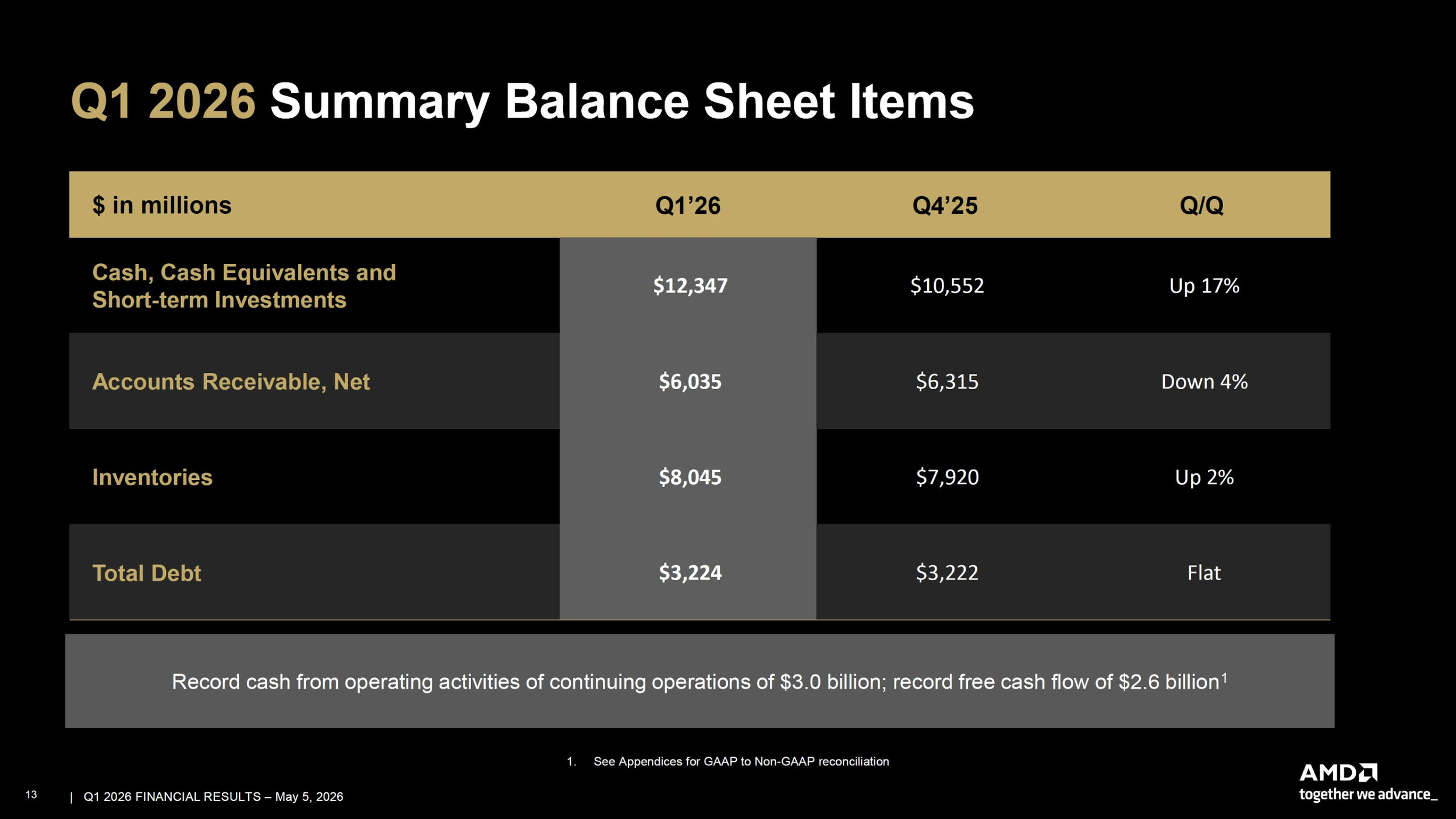

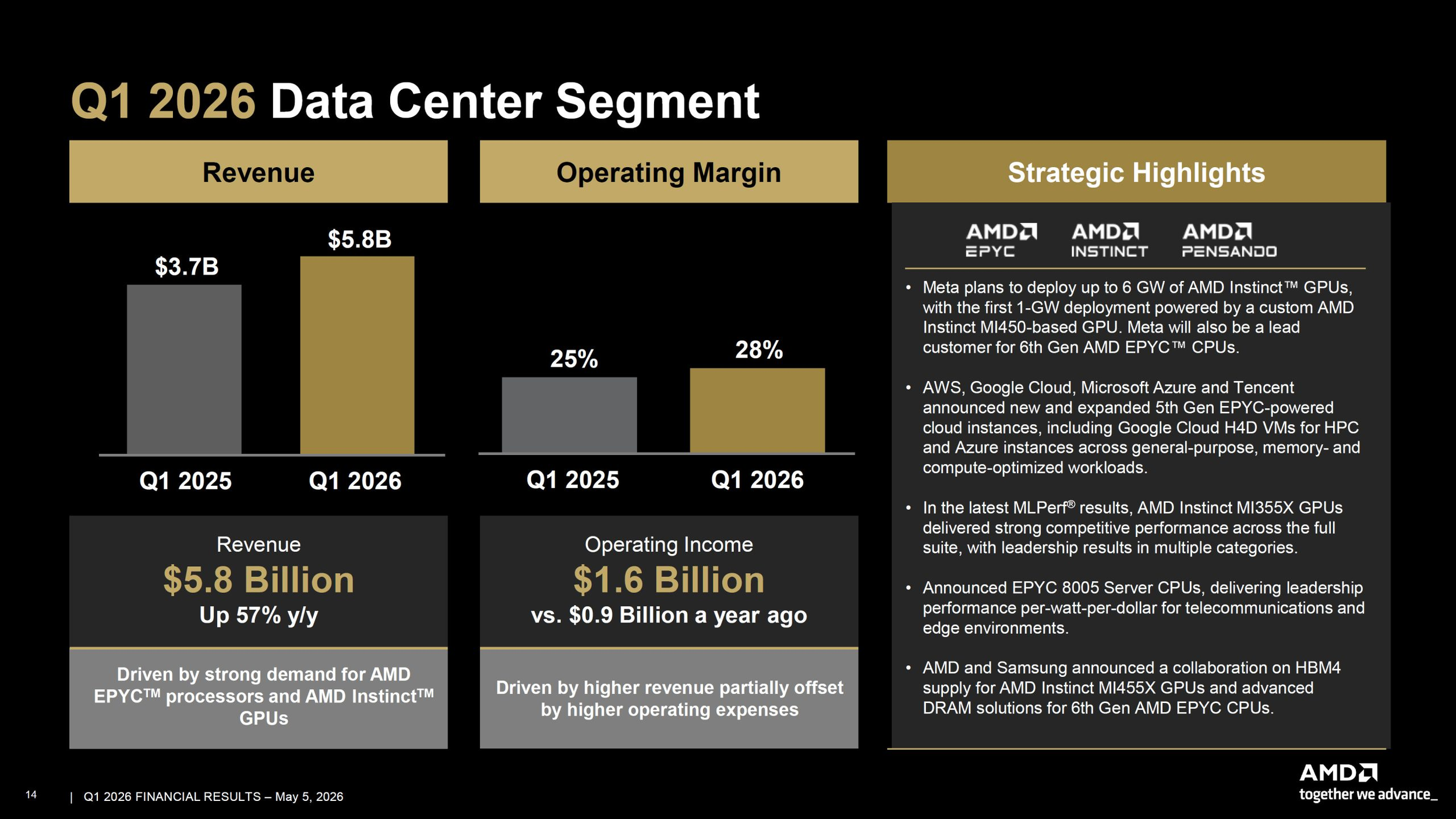

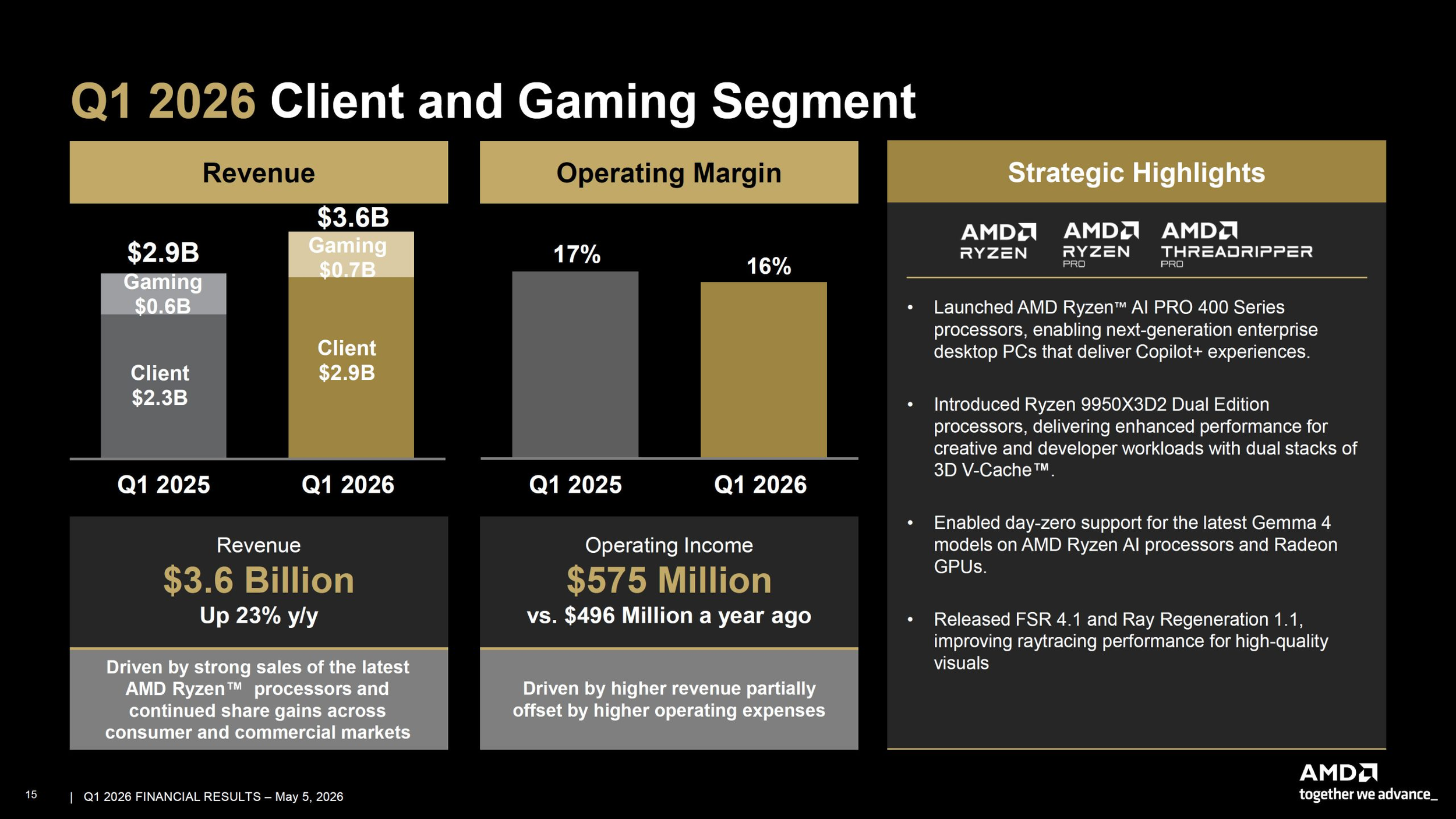

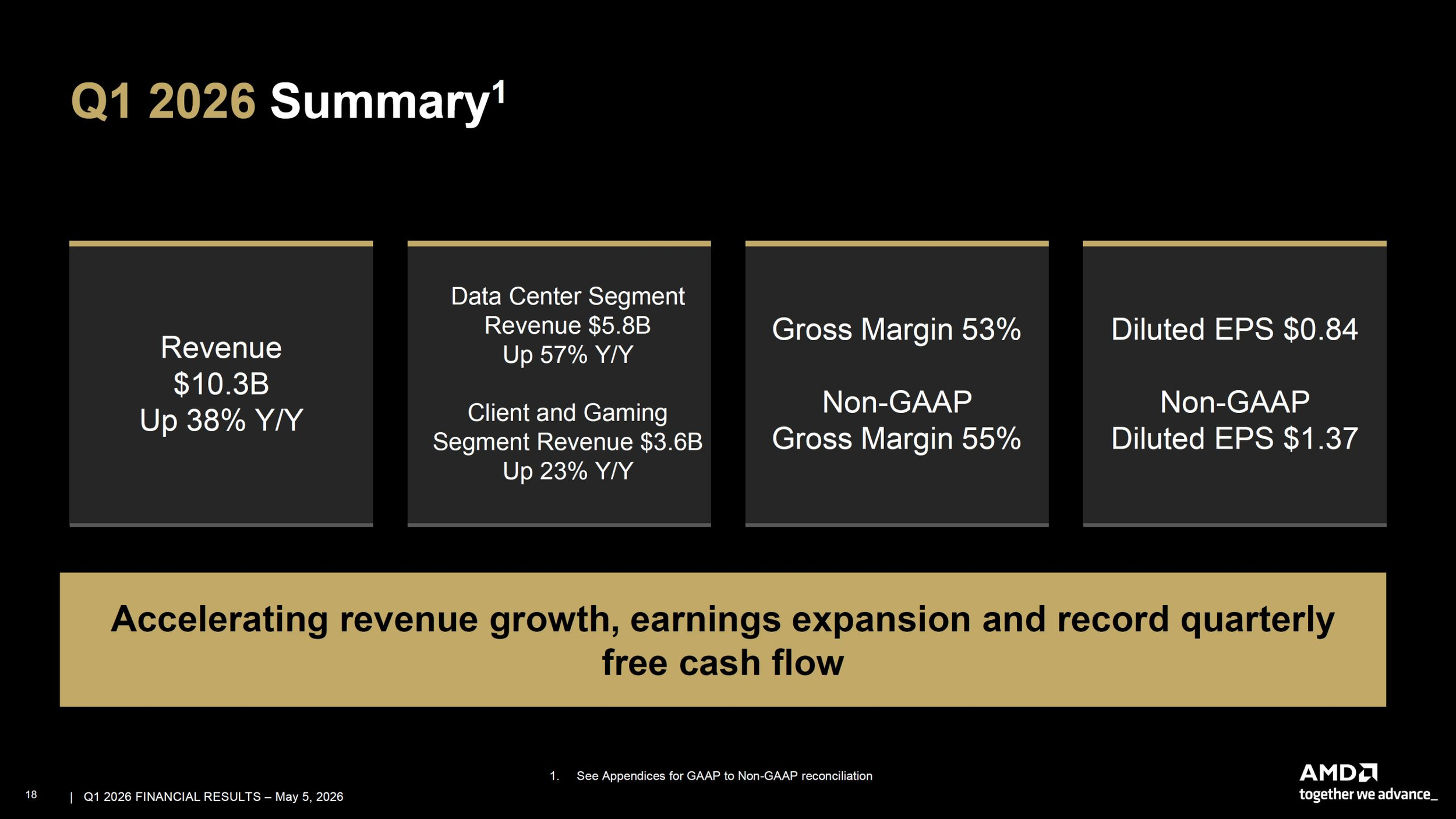

AMD has delivered one of its strongest quarterly results yet, and the company is now making an even bigger statement about where the server market is headed next. In its Q1 2026 results, AMD reported record revenue of 10.3 billion dollars, up 38% year over year, with the Data Center segment reaching 5.8 billion dollars, up 57% from a year earlier. AMD said the quarter was driven by strong demand for EPYC processors and continued growth in Instinct GPU shipments, reinforcing that AI infrastructure is now the company’s primary growth engine.

The bigger long term signal came from Lisa Su’s comments around server demand. AMD now expects the server CPU total addressable market to grow at more than 35% annually and exceed 120 billion dollars by 2030. That is a major increase from the approximately 18% annual growth outlook AMD outlined at its Financial Analyst Day in November, nearly doubling the annual growth rate it had previously projected. Reuters reported that this new forecast reflects rising AI related server demand, especially as inference and agentic AI workloads create more need for CPUs alongside accelerators.
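To see how much the change in growth rate matters once it compounds, here is a minimal sketch in Python. The 18% and 35% rates come from AMD's stated outlooks; the five year horizon and the function name are illustrative assumptions, since the article does not give the base year or starting market size behind the forecast.

```python
def growth_multiple(annual_rate: float, years: int) -> float:
    """Return how many times larger a market becomes after compounding."""
    return (1 + annual_rate) ** years


PRIOR_RATE = 0.18   # approximate outlook from Financial Analyst Day
NEW_RATE = 0.35     # "more than 35%" outlook; treated here as exactly 35%
HORIZON_YEARS = 5   # ASSUMPTION: illustrative horizon, not stated by AMD

prior_multiple = growth_multiple(PRIOR_RATE, HORIZON_YEARS)
new_multiple = growth_multiple(NEW_RATE, HORIZON_YEARS)
rate_ratio = NEW_RATE / PRIOR_RATE  # how much faster the annual rate is

print(f"Prior outlook multiple over {HORIZON_YEARS} years: {prior_multiple:.2f}x")
print(f"New outlook multiple over {HORIZON_YEARS} years:   {new_multiple:.2f}x")
print(f"Ratio of annual growth rates: {rate_ratio:.2f}")
```

Under those assumptions, the market grows to roughly 2.3 times its starting size at the old rate versus roughly 4.5 times at the new one, so a near doubling of the annual rate also comes close to doubling the end state over five years.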

Lisa Su was direct about what is changing. AMD said inferencing and agentic AI are increasing demand for high performance CPUs and accelerators, while also increasing the need for server CPU compute for orchestration, data movement, and parallel execution. That point matters because it reframes the AI infrastructure story. The market is no longer only about GPUs. It is also about the CPUs that coordinate, feed, and manage those AI systems at scale.

AMD also used the earnings discussion to spotlight what comes next for EPYC. The company said its 6th Gen EPYC Venice processors, based on Zen 6 and TSMC 2 nanometer technology, remain on track to launch later this year. Su said the Venice family will span a broad set of CPUs optimized for throughput, performance per watt, and performance per dollar. Most notably, she identified Verano as AMD’s first EPYC CPU purpose built for AI infrastructure. That is a very deliberate positioning choice, because it shows AMD is starting to segment EPYC more aggressively around AI era workloads instead of treating server CPU design as a one size fits all portfolio.

AMD is also leaning into competitive language more openly. During the earnings call, Su said Venice will widen AMD’s advantage with substantially higher performance per socket and per watt versus competing x86 offerings, while delivering more than 2x throughput per socket compared with leading Arm based AI solutions. That is an especially important claim because Arm vendors are gaining more attention in AI centered infrastructure, and AMD clearly wants to frame Venice as a direct answer to that pressure.

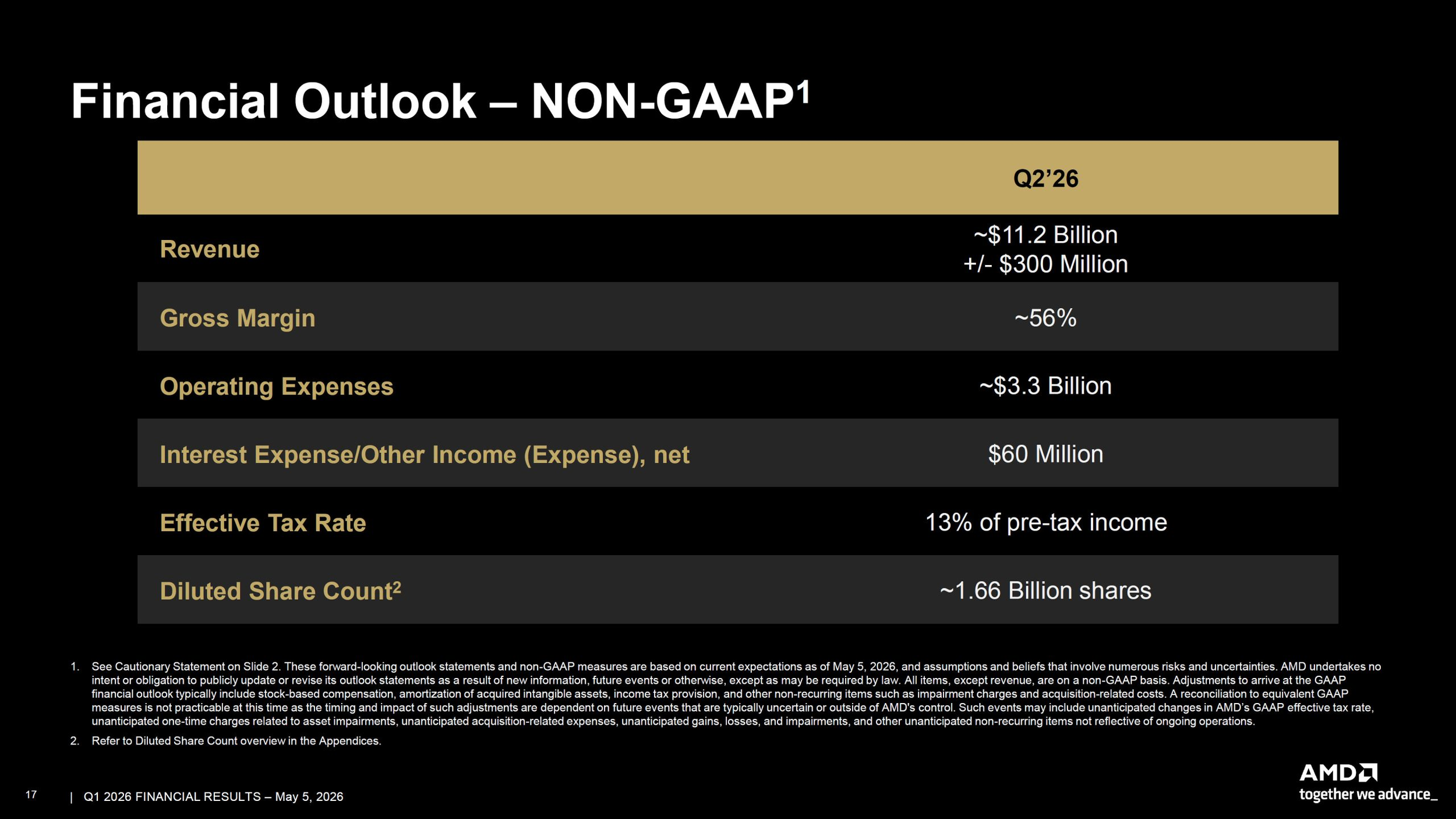

The quarter itself gives AMD a strong foundation for that message. The company said EPYC powered cloud instances increased nearly 50% year over year to more than 1,600, with broader availability across major cloud providers. AMD also said enterprise demand accelerated, delivering record revenue and record sell through in the quarter. On top of that, the company is guiding for Q2 revenue of approximately 11.2 billion dollars, plus or minus 300 million dollars, and expects server CPU revenue to grow by more than 70% year over year in that quarter.
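For readers who want to translate that guidance into a range, the arithmetic can be sketched as follows. The revenue figures are the ones AMD reported and guided; the helper names are illustrative, and the growth rates are simple derivations rather than numbers AMD provided.

```python
Q1_REVENUE_B = 10.3     # reported Q1 2026 revenue, in billions of dollars
Q2_GUIDE_MID_B = 11.2   # Q2 guidance midpoint, in billions of dollars
Q2_GUIDE_BAND_B = 0.3   # plus or minus band, in billions of dollars


def guidance_range(midpoint: float, band: float) -> tuple[float, float]:
    """Return the (low, high) ends of a guidance range."""
    return midpoint - band, midpoint + band


def sequential_growth(current: float, previous: float) -> float:
    """Return quarter over quarter growth as a fraction."""
    return current / previous - 1


low_b, high_b = guidance_range(Q2_GUIDE_MID_B, Q2_GUIDE_BAND_B)
growth_low = sequential_growth(low_b, Q1_REVENUE_B)
growth_mid = sequential_growth(Q2_GUIDE_MID_B, Q1_REVENUE_B)
growth_high = sequential_growth(high_b, Q1_REVENUE_B)

print(f"Q2 guidance range: {low_b:.1f}B to {high_b:.1f}B dollars")
print(f"Implied sequential growth: {growth_low:.1%} to {growth_high:.1%} "
      f"(midpoint {growth_mid:.1%})")
```

The guided range works out to 10.9 to 11.5 billion dollars, which implies sequential growth of roughly 6% to 12% over Q1.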

There is also a capacity angle here that should not be overlooked. AMD said it is working closely with supply chain partners to meaningfully increase wafer and backend capacity in response to the stronger demand outlook. That is critical because a more bullish server market forecast means little if supply cannot keep pace. AMD is effectively saying that the AI infrastructure wave is large enough now that it is forcing planning changes all the way down the manufacturing chain.

From a market perspective, this is one of AMD’s clearest attempts yet to expand the AI narrative beyond Instinct accelerators. EPYC is no longer being pitched only as a strong general purpose data center CPU family. It is increasingly being positioned as an essential layer of AI infrastructure itself. If that thesis continues to hold, AMD could benefit from both sides of the AI buildout, with Instinct driving accelerator growth and EPYC capturing the orchestration and host compute side of the same deployments. To be clear, that conclusion is an inference drawn from AMD’s earnings commentary and guidance.

AMD’s Q1 results do not just show momentum. They suggest the company believes the entire server market has been structurally redefined by agentic AI, and it is aligning its roadmap around that shift. With Venice due later this year and Verano already positioned as a CPU made specifically for AI infrastructure, AMD is making it clear that the next chapter of EPYC will be written as much in AI data centers as in traditional enterprise deployments.

Do you think AMD’s biggest AI opportunity over the next 2 years will come more from Instinct accelerators or from EPYC CPUs becoming the control layer behind large scale AI deployments?