AMD Starts Sampling MI450 GPUs as Lisa Su Says Biggest AI Deployments Are Now Landing on the Inference Side

AMD’s latest earnings cycle made one thing very clear: the company’s next major AI accelerator push is already moving from roadmap language into customer hands. During the Q1 2026 earnings call, CEO Dr. Lisa Su confirmed that AMD has begun sampling MI450 GPUs to lead customers and remains on track to ramp Helios production shipments in the second half of 2026. She also said customer demand for MI450 continues to strengthen and that current forecasts from lead customers are already exceeding AMD’s initial plans.

That matters because MI450 is not being framed as a niche follow-up product. AMD is positioning it as a core part of its next large-scale AI infrastructure wave. Lisa Su said the company is seeing a growing number of customers engaging on significant deployments, including additional multi-gigawatt opportunities, and later added that the broadest and largest deployment appetite is now centered on inference rather than on training alone. That is one of the most important strategic signals in the entire update, because it suggests where AI spending is shifting as agentic AI and production serving workloads expand.

AMD’s momentum around MI450 is also being reinforced by previously announced partnerships. In February, AMD and Meta disclosed a multi-year, multi-generation agreement to deploy up to 6 gigawatts of AMD Instinct GPUs, with the first gigawatt deployment expected to begin in the second half of 2026 using a custom AMD Instinct GPU based on the MI450 architecture. AMD also said this deployment will run on its Helios rack-scale architecture alongside 6th Gen EPYC CPUs codenamed Venice. That gives MI450 a far more concrete commercial launch path than a typical early sampling announcement.

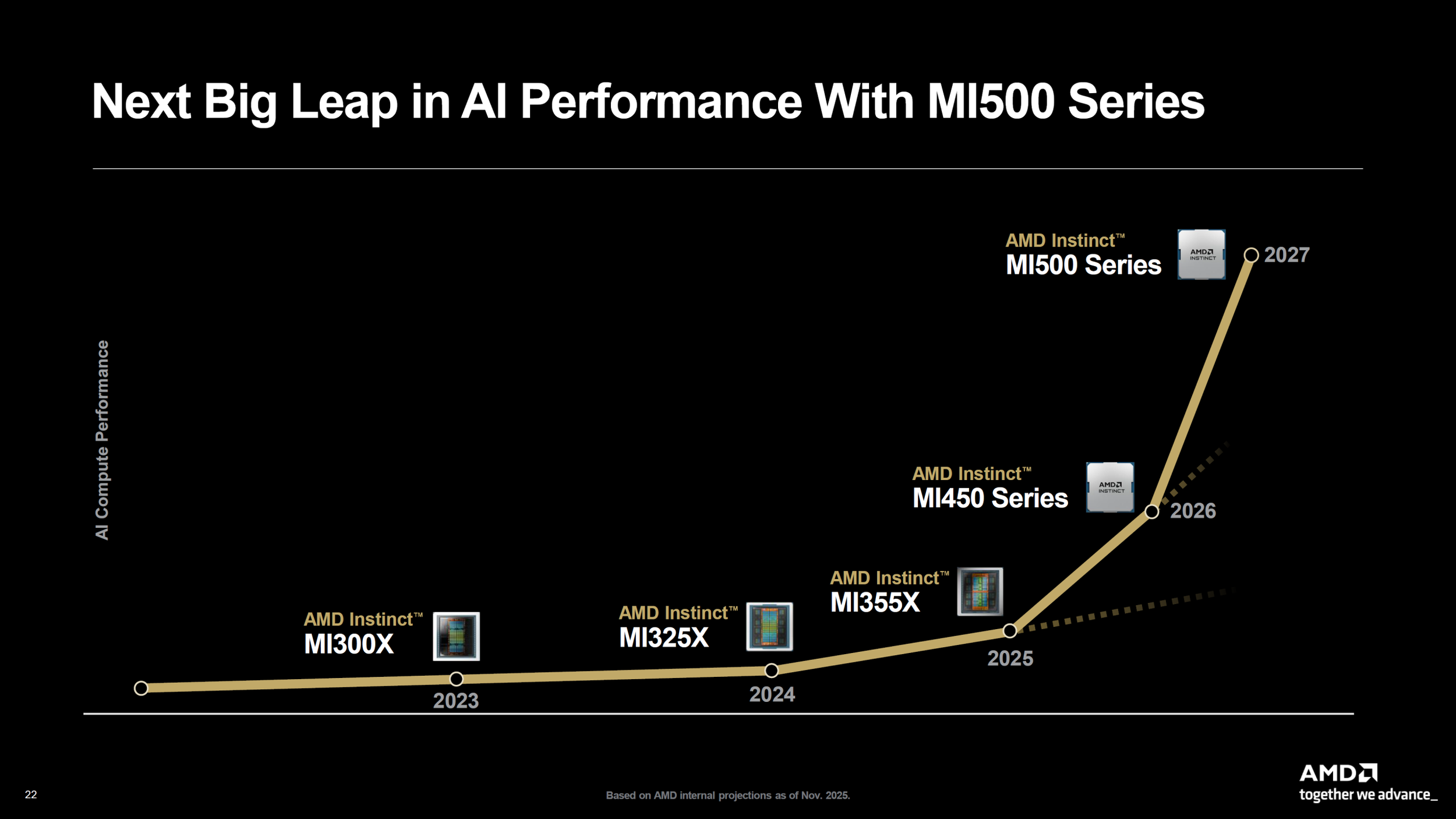

What stands out even more is that AMD is already talking beyond MI450. On the same call, Su said many customers are also deeply engaged with AMD on the MI500 series and the opportunities that platform could unlock. That suggests AMD is no longer just trying to win individual accelerator deals. It is trying to secure longer-horizon infrastructure roadmaps with customers that are planning multiple generations ahead. If that continues, it would give AMD a stronger foothold not only in today’s AI buildout but also in the next cycle of capacity planning.

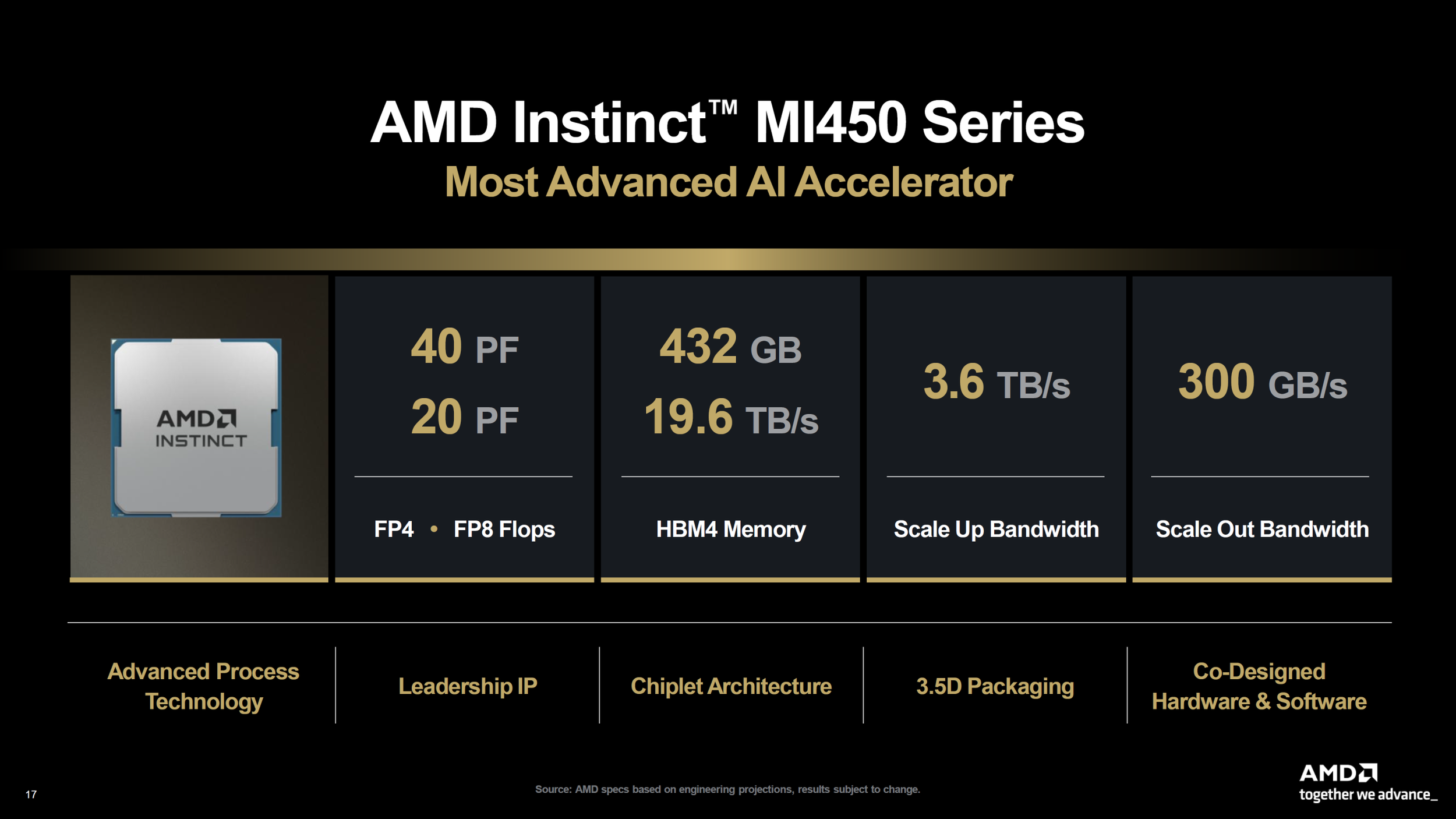

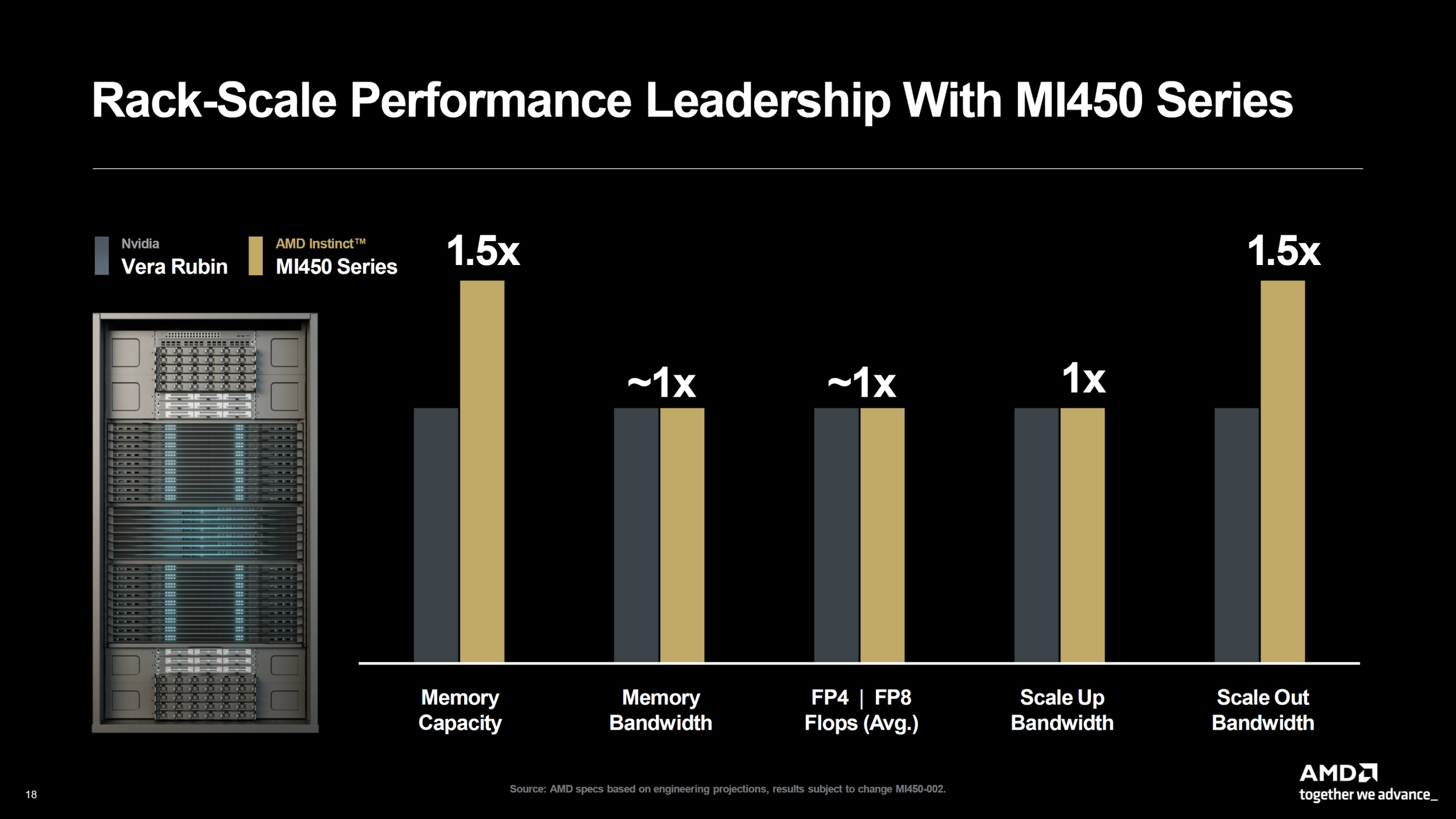

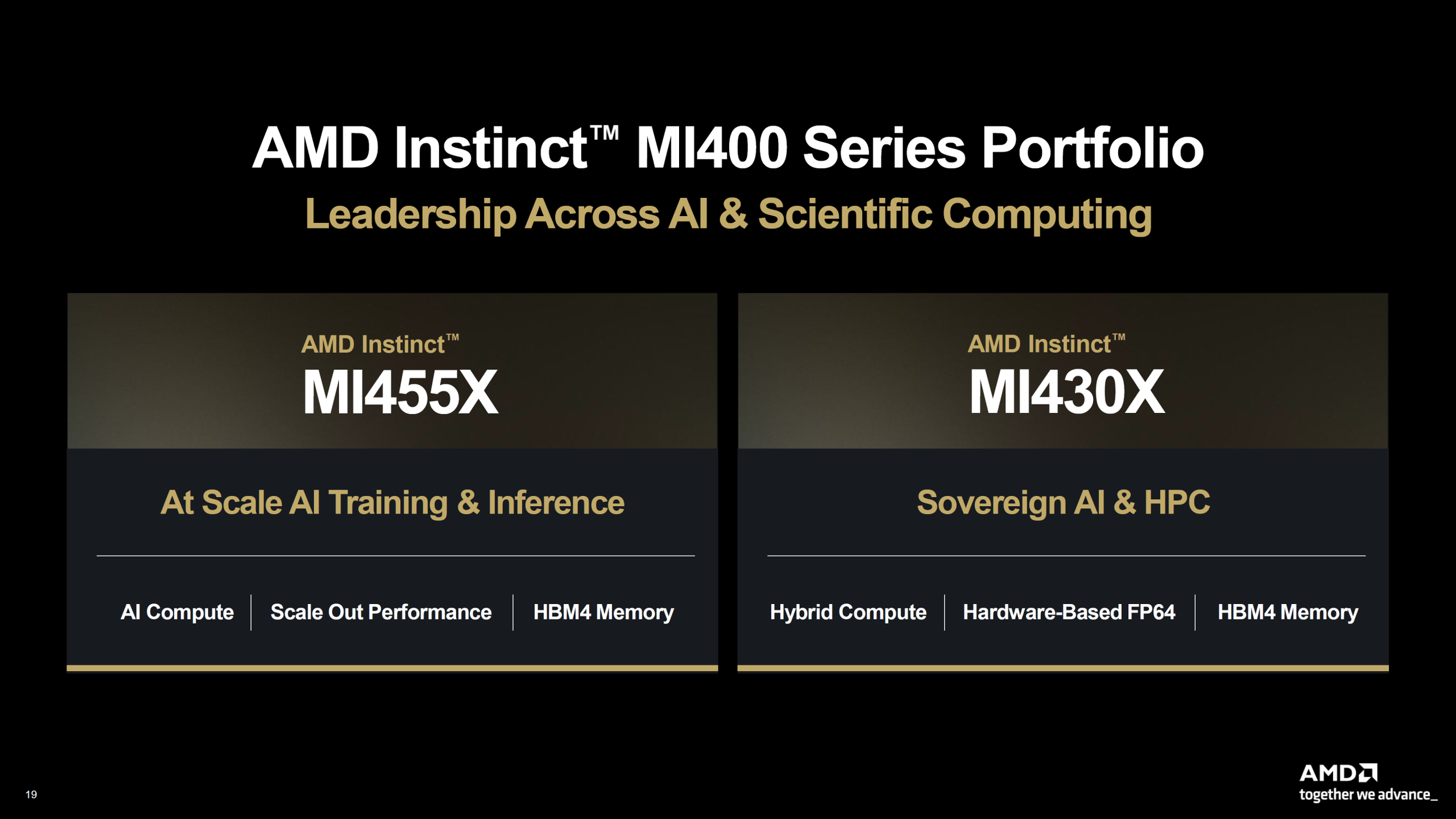

The market context also supports AMD’s confidence. Reuters reported last year that OpenAI was already working with AMD on the MI450 chips to improve their design for AI workloads, while AMD introduced the MI400 family as the basis for its Helios AI server platform. Reuters also noted that Helios is designed as a rack-scale system with 72 MI400 series chips, placing AMD more directly in the same infrastructure class that NVIDIA has been dominating.

On the product side, the broader MI400 and MI450 platform story remains central to AMD’s pitch. The family is expected to bring major gains in compute density, memory capacity, and rack-scale connectivity, with HBM4 playing a major role in that next step forward. AMD has also been leaning into open standards and rack-scale networking as part of its differentiation strategy, which is meant to position Helios and MI450 as an alternative to more closed AI infrastructure ecosystems. That positioning becomes even more relevant if inference really does become the largest deployment segment, because operators at that scale will care deeply about performance per watt, memory footprint, system interoperability, and long-term operating costs. This last point is a deduction drawn from AMD’s public product strategy and Lisa Su’s comments about inference demand.

Another major takeaway from Lisa Su’s comments is how far customer interest has progressed. She described active, deep co-engineering with major partners and said the company is seeing a breadth of customers interested in significant-scale MI450 node deployments. That is stronger language than a simple early preview phase would warrant, and it suggests AMD believes its AI accelerator roadmap is now being evaluated as part of real production infrastructure decisions rather than only pilot projects.

AMD is expected to share more details at its upcoming Advancing AI event in July, where the company plans to talk further about next-generation Instinct GPUs, EPYC processors, the Helios rack-scale platform, and customer engagement. For now, though, the message is already clear: MI450 sampling has started, MI500 discussions are underway, and the biggest near-term AI deployments AMD sees are increasingly tied to inference. That could become one of the most important shifts in the accelerator market over the next 18 months, especially if AMD can convert that interest into high-volume production shipments.

Do you think AMD’s biggest accelerator opportunity now lies in winning more inference deployments, or does it still need a much bigger breakthrough in training clusters to truly challenge NVIDIA?