Zyphra and AMD Launch Open AI Platform Built on MI355X Infrastructure With Expansion Plans for MI450 and Beyond

Zyphra has officially launched Zyphra Cloud, a new open AI platform developed in partnership with AMD and powered by TensorWave infrastructure, marking a notable step forward for AMD-based AI cloud services in the United States. According to the company's official announcement, the platform debuts with Zyphra Inference, a serverless inference service focused on frontier open-weight models and long-horizon agentic workloads. Zyphra is also positioning it as a broader full-stack AI environment that will expand beyond inference over time.

The launch is significant because Zyphra is not pitching this as a narrow model-endpoint service. On its official cloud page, the company describes Zyphra Cloud as a full-stack platform for open superintelligence, with components spanning inference, compute, agent environments, and its MAIA agent layer. The current live offering centers on inference for long-context and long-horizon agentic systems, but Zyphra says the platform is designed to serve developers, enterprises, and frontier AI hyperscalers alike.

At the infrastructure level, the announcement confirms that Zyphra Cloud runs on AMD Instinct MI355X GPUs hosted by TensorWave. AMD's own product page places the MI355X in its Instinct MI350 series, built for high-density AI infrastructure, with 288 GB of HBM3E memory and 8 TB/s of memory bandwidth per GPU. Those figures help explain the hardware choice: more on-package memory lets larger models and longer-context KV caches fit on fewer GPUs, and higher bandwidth helps the largely memory-bound decode phase of LLM inference. Zyphra says the service is built around custom kernels, long-context inference algorithms, and advanced parallelism schemes to deliver high throughput and low latency for production-grade AI workloads.
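To make the 288 GB figure more concrete, the rough back-of-envelope sketch below estimates how much of a single MI355X's HBM a model's weights would occupy and how much would be left for KV cache and activations. It is not Zyphra's sizing method. It assumes a hypothetical 74B-parameter model, counts weights only (ignoring KV cache, activations, and runtime overhead), uses decimal gigabytes, and the function names are illustrative.

```python
# Rough sketch: do a model's weights fit in one MI355X's HBM, and how much is left over?
# Assumptions (not from Zyphra or AMD): weights only, no KV cache/activations/runtime
# overhead counted, and 1 GB = 1e9 bytes. Function names are hypothetical.

HBM_CAPACITY_GB = 288.0  # MI355X HBM3E capacity per GPU, per AMD's product page

# Bytes needed to store one parameter in each numeric format.
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0}


def weight_footprint_gb(num_params: float, dtype: str) -> float:
    """Approximate weight memory in GB for a parameter count and numeric format."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def headroom_gb(num_params: float, dtype: str, capacity_gb: float = HBM_CAPACITY_GB) -> float:
    """HBM left for KV cache and activations after loading weights (negative: does not fit)."""
    return capacity_gb - weight_footprint_gb(num_params, dtype)


if __name__ == "__main__":
    params = 74e9  # hypothetical 74B-parameter model (total parameters)
    for dtype in ("bf16", "fp8"):
        print(
            f"{dtype}: weights ~{weight_footprint_gb(params, dtype):.0f} GB, "
            f"headroom ~{headroom_gb(params, dtype):.0f} GB"
        )
```

Under these assumptions, a 74B-parameter model needs about 148 GB in BF16, leaving roughly 140 GB on one GPU for long-context KV cache. In practice, serving stacks also reserve memory for activations and framework overhead, so real headroom is smaller.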

One of the biggest technical talking points is scale. Zyphra states that the platform is backed by 15 MW of MI355X capacity through TensorWave, giving it a data-center-class deployment footprint from day one. Nor is that framed as the end state: the company says the platform is built to expand to future AMD GPU generations, including MI450 and beyond, in line with AMD's broader Instinct roadmap. AMD has already publicly referenced MI450-based deployments in official partnership announcements tied to shipments in the second half of 2026, so Zyphra's forward-looking expansion language is consistent with the direction AMD is already signaling in the AI market.

Zyphra is also making it clear that this is only the first phase of the platform. In its official release, the company says upcoming capabilities will include distributed post-training services such as reinforcement learning and fine-tuning, sandboxed agent and development environments powered by AMD EPYC CPUs, and access to dedicated GPU clusters and bare-metal infrastructure. That matters because it moves Zyphra Cloud beyond an inference-endpoint platform toward an integrated AI development and deployment stack.

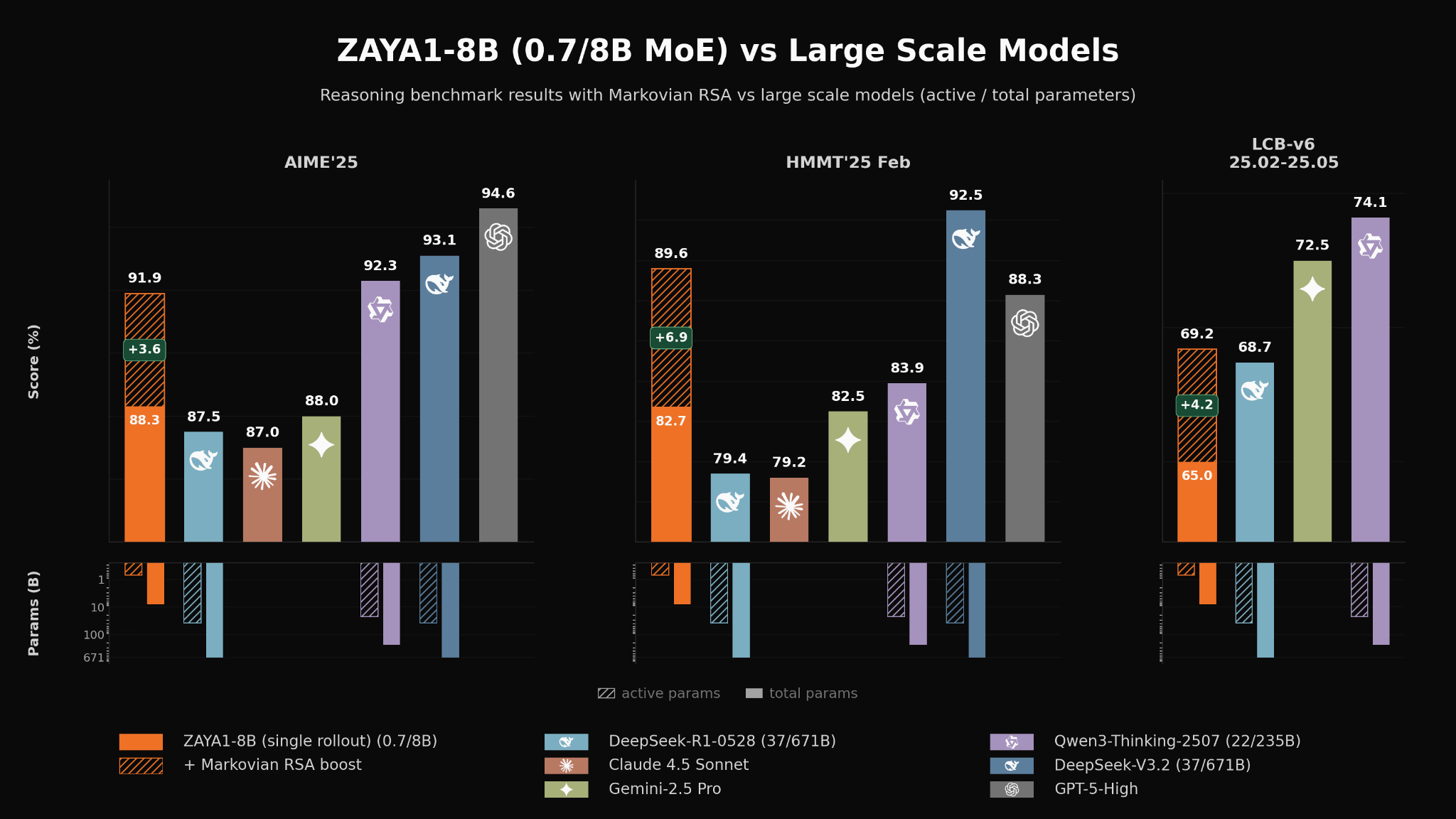

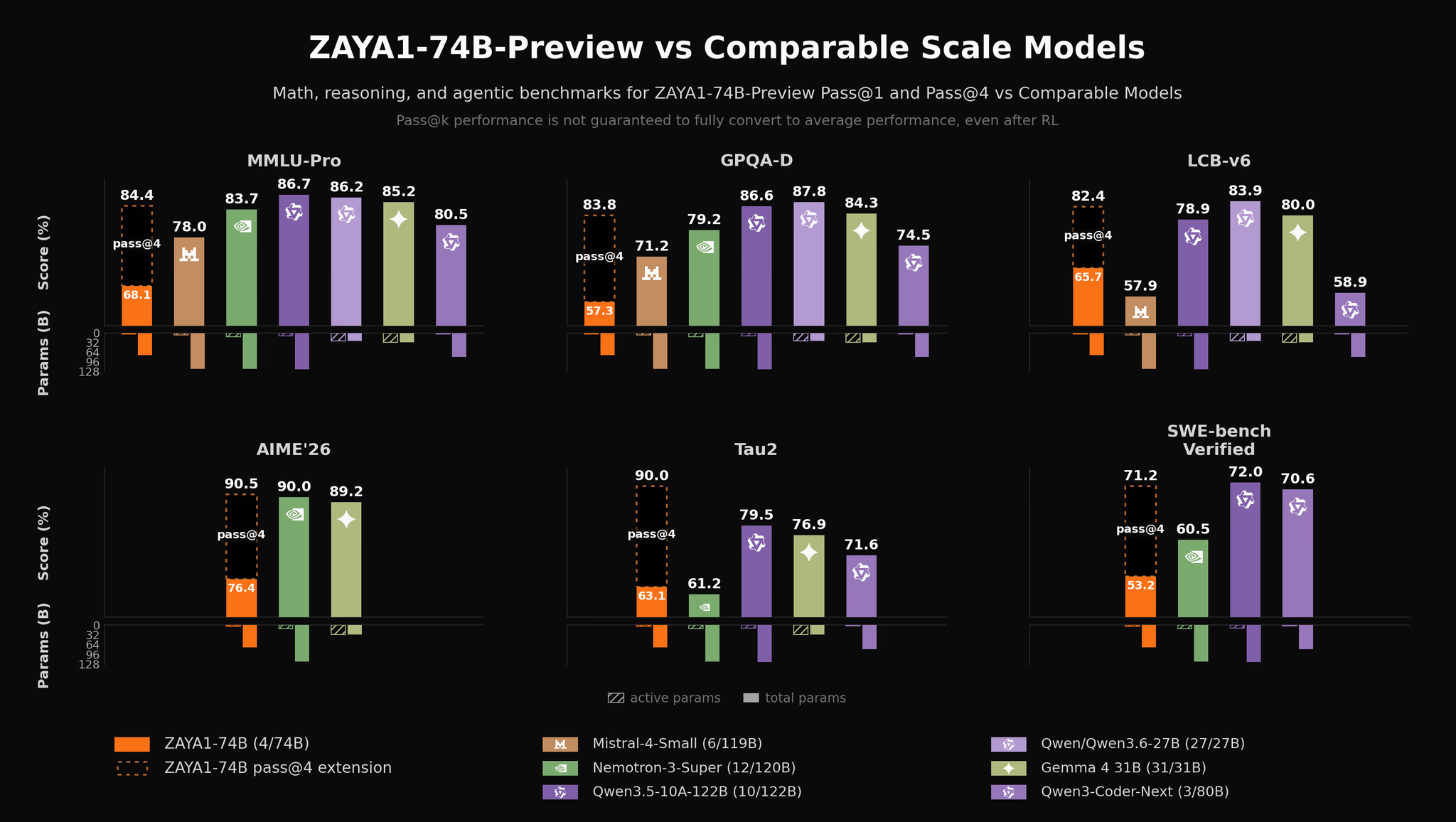

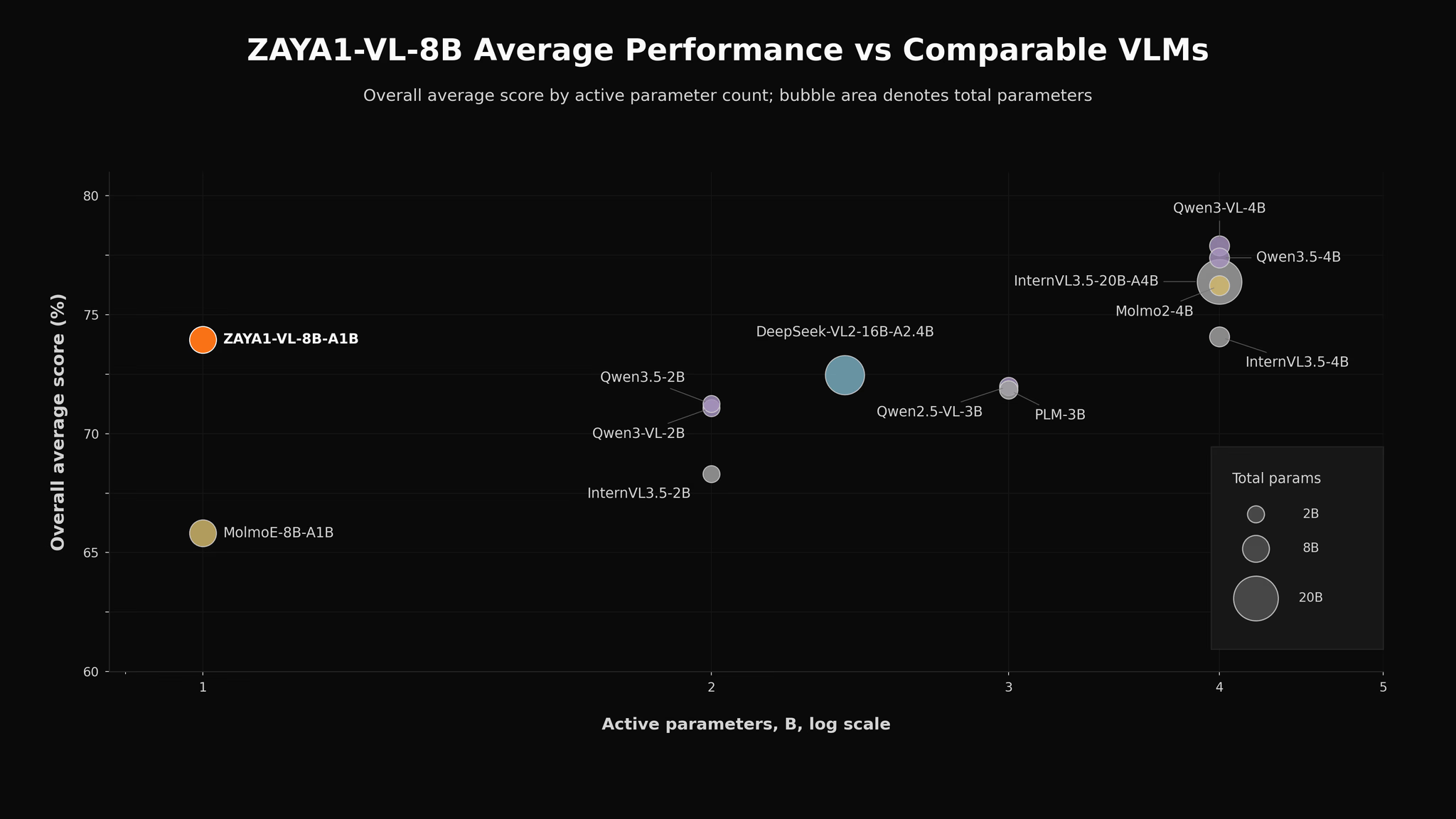

The platform also launches with a growing family of Zyphra models that reinforce its vertical integration strategy. The company has already introduced ZAYA1 8B, ZAYA1 74B Preview, and ZAYA1 VL, and its inference layer also provides broader access to frontier open-weight models. Zyphra describes ZAYA1 8B as the first MoE model pretrained, midtrained, and supervised fine-tuned on an AMD Instinct MI300 stack, which underscores how closely the company is aligning its research and platform strategy with AMD hardware.

From a market perspective, this launch matters for three reasons. First, it gives AMD another visible AI cloud partner building around open-weight and agentic workloads rather than only traditional hyperscale enterprise positioning. Second, it shows MI355X infrastructure moving from performance claims into real commercial platform deployments. Third, it gives Zyphra a better chance to stand out in a market increasingly crowded with model APIs by bringing cloud infrastructure, inference optimization, and its own research stack under one umbrella. These points are inferences drawn from the structure of the launch and the capabilities Zyphra has publicly outlined.

Can AMD-backed open AI platforms like this become a serious long-term challenger to NVIDIA-dominated cloud AI stacks?