Samsung’s Memory Surge Is Becoming One of the Defining Profit Stories of the AI Race

Samsung’s memory business is rapidly turning into one of the clearest financial winners of the current AI infrastructure boom, and the scale is now becoming difficult for the wider tech industry to ignore. According to Counterpoint Research, Samsung generated $50.4 billion in memory revenue in the first quarter of 2026, including $37.0 billion from DRAM and $13.4 billion from NAND, both all-time highs for the company’s memory operations. Counterpoint also said the total was 167% above the prior cycle peak of $18.9 billion reached in the third quarter of 2018.
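The Counterpoint figures are easy to sanity-check. The short sketch below uses only the numbers quoted above (in billions of US dollars); the variable names are illustrative, and the DRAM share is derived here rather than reported by Counterpoint.

```python
# Figures as quoted from Counterpoint Research, in USD billions.
dram = 37.0
nand = 13.4
prior_peak = 18.9  # prior cycle peak, Q3 2018

# Total memory revenue is the sum of the two segments.
total = dram + nand

# Percentage by which the new total exceeds the prior cycle peak.
pct_above_peak = (total / prior_peak - 1) * 100

# DRAM's share of total memory revenue (derived, not a reported figure).
dram_share = dram / total * 100

print(f"Total memory revenue: ${total:.1f}B")
print(f"Above prior peak: {pct_above_peak:.0f}%")
print(f"DRAM share of memory revenue: {dram_share:.0f}%")
```

The segment figures add up to the reported total, and the increase over the 2018 peak rounds to the 167% Counterpoint cites.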

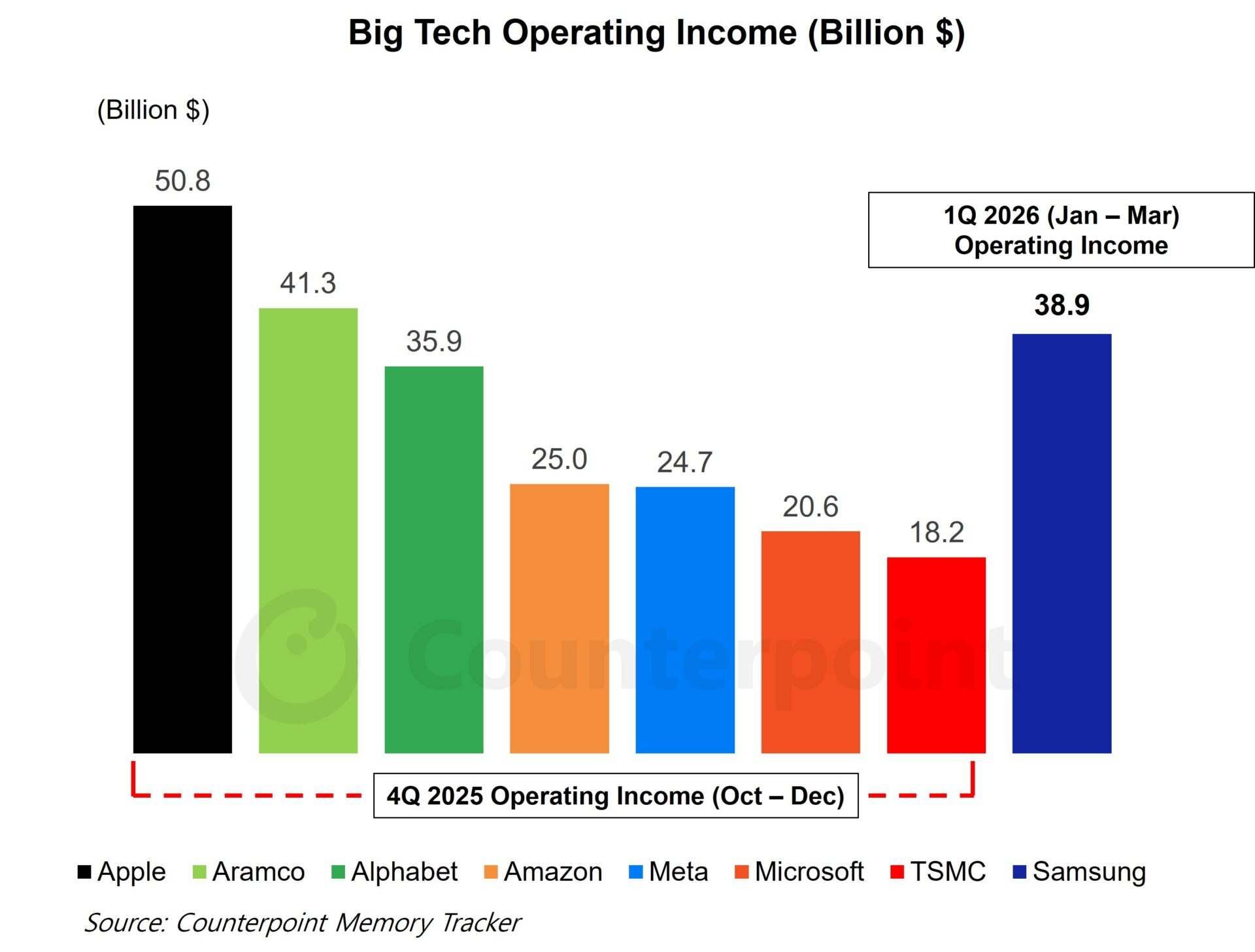

That headline needs one important clarification, though. The strongest comparison is not that Samsung’s memory division revenue alone is larger than the revenue of Amazon, Meta, or Microsoft, because it is not. The more defensible comparison is around profitability, and more specifically Samsung Electronics’ latest consolidated operating profit guidance. Samsung guided for approximately 57.2 trillion won in first quarter 2026 operating profit, which Reuters translated to about $37.92 billion. That level is well above Amazon’s latest quarterly operating income of $25.0 billion and Meta’s latest quarterly income from operations of $24.745 billion. It is essentially level with Microsoft’s most recently reported quarterly operating income of $37.961 billion, which is fractionally higher on this conversion and comes from a different reporting calendar.
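Lining the quoted figures up side by side shows how wide the gap is against Amazon and Meta and how narrow it is against Microsoft. This is a rough sketch using only the numbers cited above; the exchange rate it prints is simply what the two Samsung figures imply, not an official rate, and the result would shift with a different conversion.

```python
# Samsung's consolidated operating profit guidance, as quoted above.
samsung_guidance_krw_trn = 57.2   # trillion won
samsung_guidance_usd_bn = 37.92   # Reuters conversion, USD billions

# Latest quarterly operating income figures quoted above, USD billions.
peers = {"Amazon": 25.0, "Meta": 24.745, "Microsoft": 37.961}

# Positive gap means Samsung's guidance is higher than the peer's figure.
gaps = {name: samsung_guidance_usd_bn - value for name, value in peers.items()}

# Won-per-dollar rate implied by the two Samsung figures (an assumption
# derived from the quoted numbers, not a published rate).
implied_rate = (samsung_guidance_krw_trn * 1e12) / (samsung_guidance_usd_bn * 1e9)

for name, gap in gaps.items():
    print(f"Samsung minus {name}: {gap:+.3f}B USD")
print(f"Implied exchange rate: {implied_rate:.0f} won per USD")
```

On these numbers the Microsoft gap is only a few tens of millions of dollars, small enough that a modest move in the exchange rate would flip its sign.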

In other words, the broader point does hold up, but it needs to be framed carefully. Samsung’s official first quarter 2026 guidance is for the whole company, not just for memory. However, multiple reports indicate that the overwhelming driver behind that result is the company’s booming memory business, especially DRAM. Reuters reported that AI data center demand sharply lifted chip prices and helped push Samsung toward what would be its best quarterly profit on record, while DIGITIMES separately reported that more than 90% of Samsung’s earnings were tied to its memory business.

That makes strategic sense. In the current AI market, DRAM and high-bandwidth memory are no longer supporting components sitting quietly in the background. They have become core infrastructure constraints. Training clusters, inference deployments, large-scale cloud expansion, and increasingly dense accelerator platforms all need more memory bandwidth and more memory capacity. That dynamic has tightened supply, lifted prices, and given scale players like Samsung, SK hynix, and Micron far more pricing power than the industry was used to only a few years ago. Reuters noted that DRAM prices were expected to rise by more than 50% in the current quarter, which helps explain why investors are treating this as a genuine supercycle rather than a short-term spike.

Samsung is especially well placed to capitalize because it is not just participating in the memory upcycle. It is doing so with enormous manufacturing scale and with improving positioning in the most valuable AI segments. Reuters reported that Samsung has improved its standing in the high-bandwidth memory market and has already been shipping HBM4 to NVIDIA. That matters because the next stage of AI infrastructure competition is not just about selling commodity memory into servers. It is about winning sockets in the most advanced accelerator platforms, where bandwidth, thermals, and volume commitments all become strategic differentiators.

The revenue mix also tells its own story. Counterpoint’s figure of $37.0 billion in quarterly DRAM revenue versus $13.4 billion in NAND shows how central DRAM has become to this cycle. NAND remains important, but right now the market is being pulled hardest by compute expansion, hyperscaler buying, and AI system memory requirements. That is why Samsung’s memory business is now being discussed not just as a semiconductor segment, but as one of the financial engines of the wider AI buildout.

There is still a valid long-term question around sustainability. Supercycles can cool, hyperscaler spending can shift, and new efficiency techniques could eventually change how much memory is needed per workload. But as of April 2026, the near-term picture still looks extremely strong. Samsung’s official guidance, Counterpoint’s memory breakdown, and Reuters’ reporting on pricing and AI demand all point in the same direction. The constraint in this market is not demand. It is capacity.

For the rest of the industry, that should be a warning as much as a headline. The AI race is often framed around GPUs, cloud platforms, and model builders, but the companies controlling the memory supply chain are quietly capturing a larger share of the value than many expected. Samsung’s latest quarter is one of the clearest signs yet that memory is no longer just riding the AI boom. In many ways, it is one of the businesses defining it.

What do you think: is memory now the most underappreciated profit engine in the AI race, or will GPUs and cloud platforms continue to capture the bigger narrative?