NVIDIA Vera Rubin Platforms Could Gain a Major Inference Boost From Groq 3 LPX as Foxconn Ramps AI Server Production

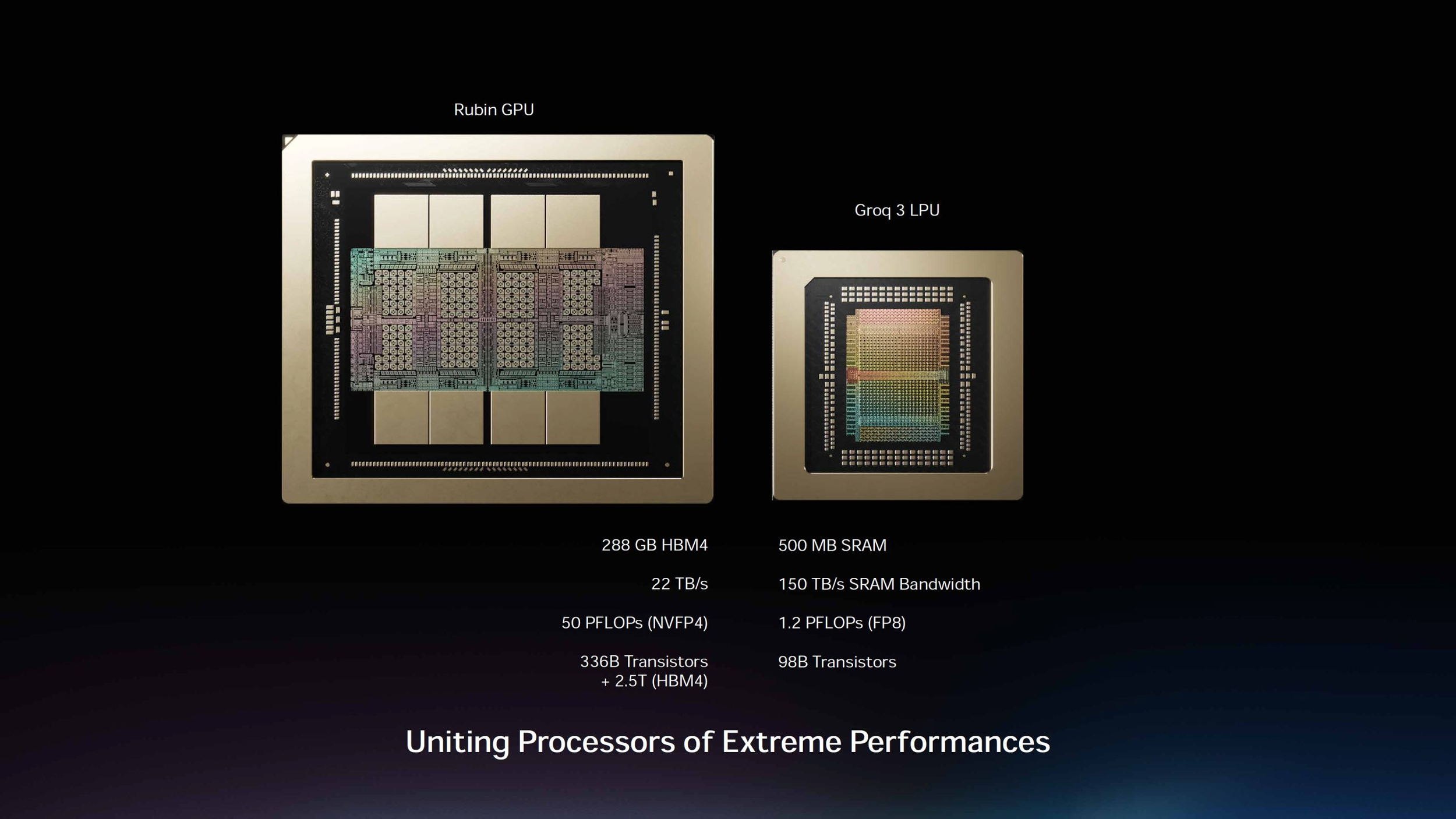

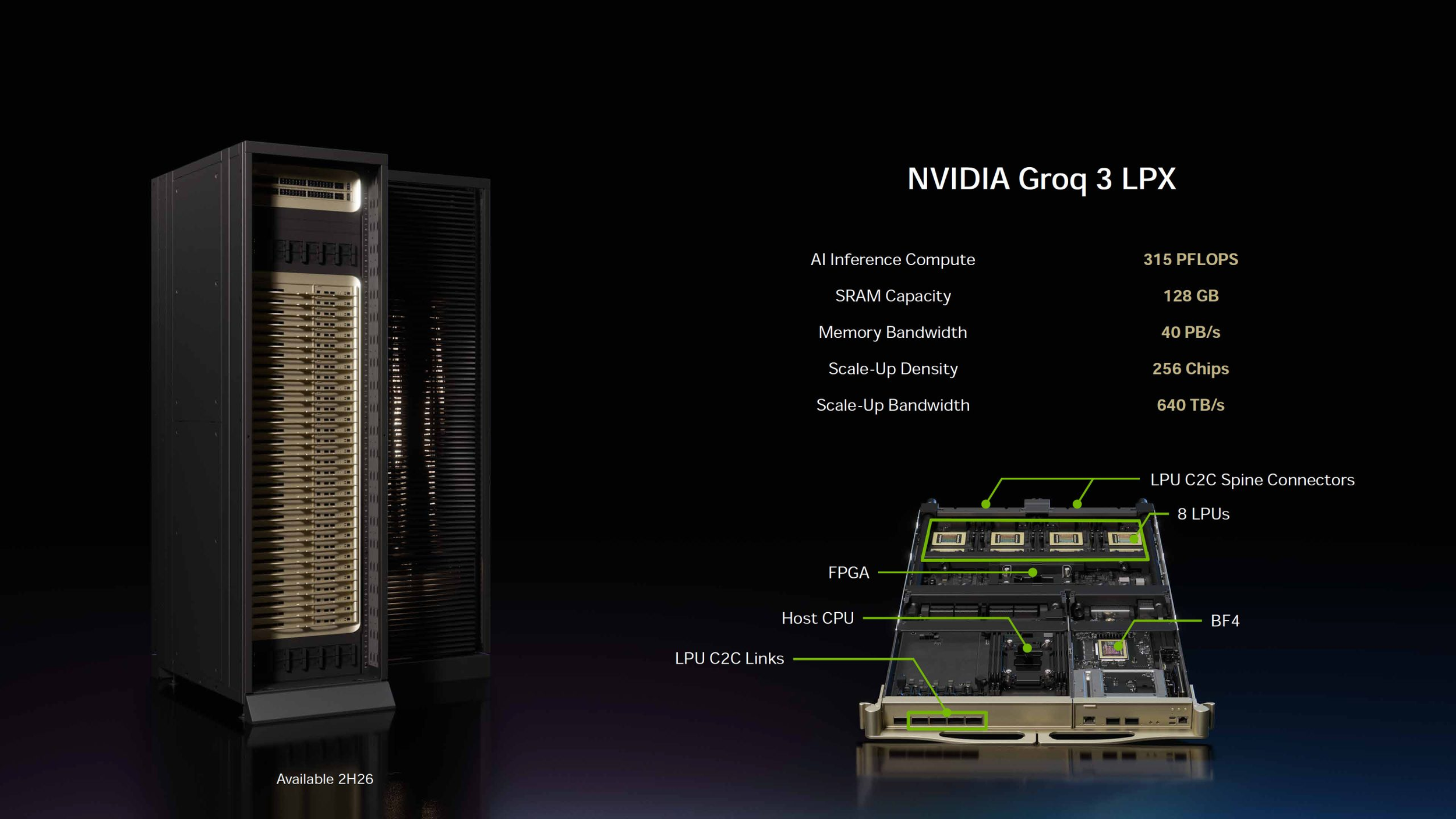

NVIDIA’s Vera Rubin platform is expected to receive a major inference boost from Groq 3 LPX, a rack scale LPU system designed to accelerate low latency AI inference for the agentic AI era. According to NVIDIA’s own LPX platform materials, Groq 3 LPX is built to pair with Vera Rubin NVL72 and improve throughput for trillion parameter models and long context workloads. NVIDIA says Vera Rubin with LPX can deliver up to 35x higher throughput per megawatt for trillion parameter models, although the company notes that projected performance remains subject to change.

The LPX system is designed around low latency token generation, which is becoming increasingly important as agentic AI workloads grow. Traditional GPU heavy systems are strong at training, prefill, and large scale parallel compute, but real time AI agents also require fast decode, deterministic response behavior, and efficient token output at scale. NVIDIA is positioning Groq 3 LPX as a complementary inference accelerator for Vera Rubin rather than a replacement for Rubin GPUs.

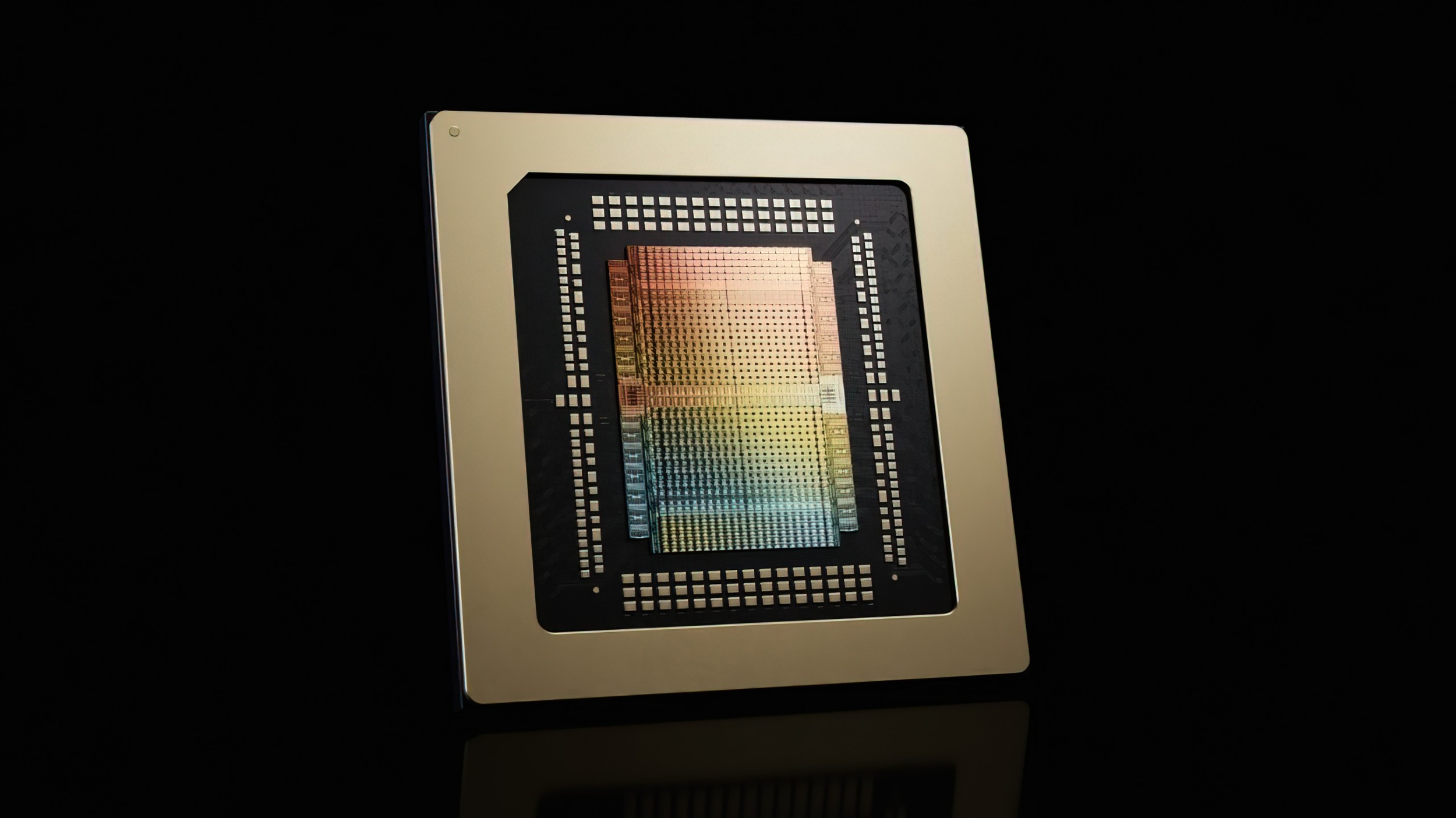

According to NVIDIA’s LPX platform page, the Groq 3 LPX rack is built around 256 Groq 3 LPUs, 128GB of on chip SRAM, and 12TB of DDR5 memory. The system is designed to support multi trillion parameter AI models, million token context windows, and agentic workloads that require high token volume with lower latency. NVIDIA says agentic systems can consume up to 15x more tokens than traditional AI applications, making inference throughput and power efficiency major infrastructure priorities.

Taiwanese supply chain reports now suggest that Groq 3 LPX shipments may begin ahead of schedule in Q3 2026. UDN reports that Foxconn is the exclusive supplier of the computing tray and the main supplier for LPX cabinet assembly, putting the Taiwanese manufacturing giant in a strong position as NVIDIA ramps its next generation AI infrastructure. SDxCentral also cites Taiwan’s Economic Daily News in reporting that Foxconn is the main supplier for the computing tray and cabinet assembly tied to NVIDIA’s Groq 3 LPX rack scale accelerator.

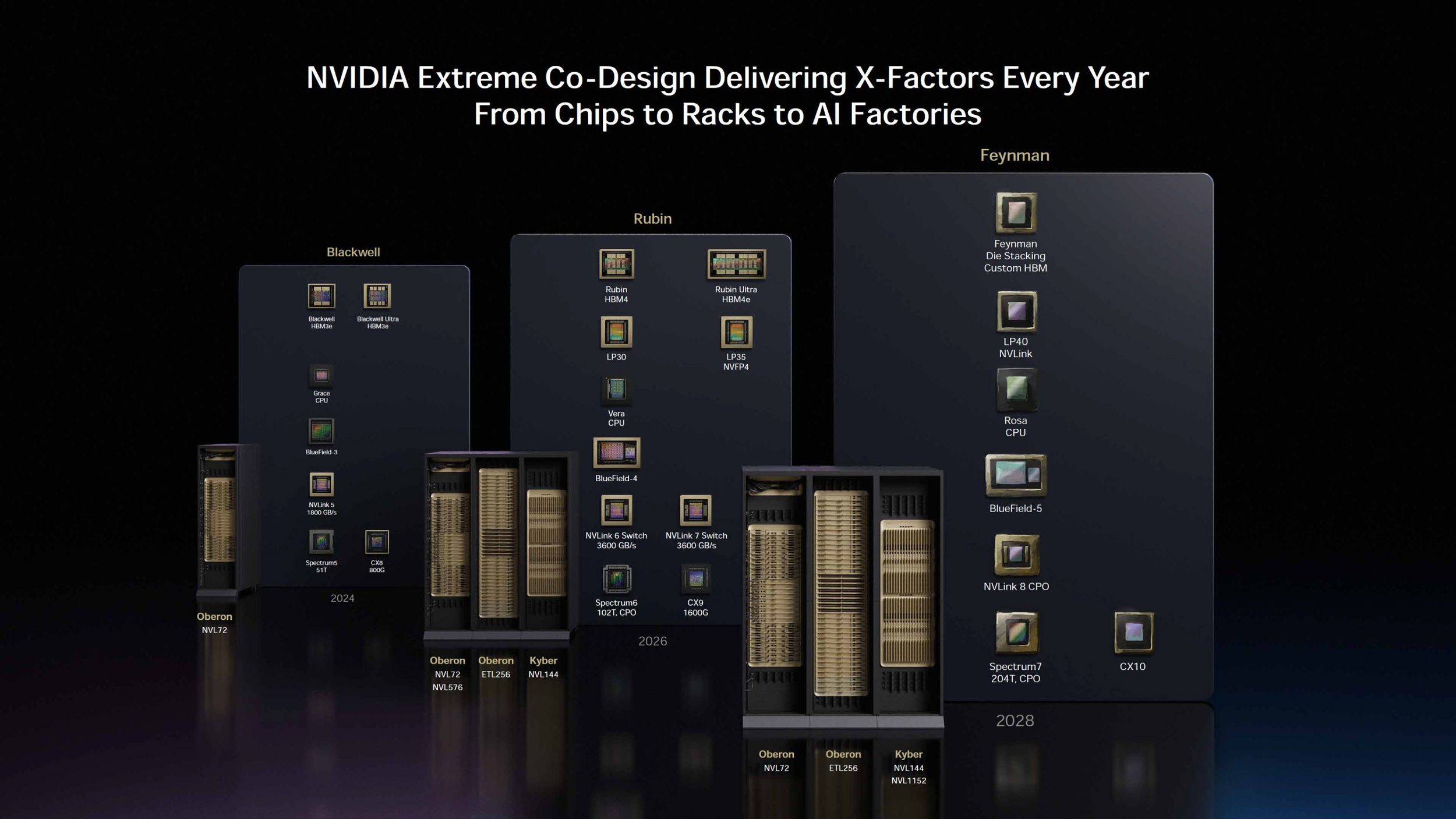

The reported shipment scale is significant. Supply chain estimates point to LP30 and LP35 chips reaching 1.5 million units in 2026 and 2.5 million units in 2027. Foxconn is also reportedly expected to deliver around 6000 Groq 3 LPX racks this year and another 10000 racks in 2027, excluding future LPX racks based on LP40 chips. These figures have not been publicly confirmed by NVIDIA, but they show how aggressively the supply chain is preparing for AI inference growth.

The Vera Rubin side of NVIDIA’s roadmap is also moving quickly. Reports indicate that Vera Rubin NVL72 rack shipments could reach around 12000 units in 2026, with major customers expected to include Google, Amazon AWS, and Microsoft. Mass production of Vera Rubin VR200 NVL72 servers is reportedly expected to begin by the end of Q3 2026. NVIDIA’s broader GTC 2026 data center roadmap also places Vera CPUs, Rubin GPUs, LP30 LPUs, BlueField 4 DPUs, and high speed NVLink and Ethernet networking into the 2026 platform cycle.

Foxconn stands to benefit heavily from this transition. The company is already one of NVIDIA’s most important AI server manufacturing partners, and its role in LPX computing trays and rack assembly could push its share higher in the second half of 2026. Foxconn CEO Liu Yangwei has previously said the company can produce more than 1000 AI server cabinets per week, with capacity expected to rise to 2000 cabinets per week by the end of 2026, according to the supply chain reports cited in the original coverage.

The strategic reason behind LPX is clear: inference is becoming the next major AI battleground. NVIDIA has already dominated GPU training infrastructure, but agentic AI requires more than raw GPU compute. It needs long context handling, fast token generation, low latency decoding, CPU orchestration, and efficient memory movement. By integrating Groq’s LPU technology into the Vera Rubin roadmap, NVIDIA is trying to build a more specialized AI factory stack for both training and high volume inference.

This shift also appears to have changed NVIDIA’s roadmap priorities. Tom’s Hardware reported that NVIDIA removed Rubin CPX accelerators from its official roadmap, with Groq 3 LPUs taking center stage for future low latency inference workloads. The report notes that LP30 uses SRAM for low latency, high performance inference and that NVIDIA’s direction now clearly favors LPUs for future inference acceleration.

For Foxconn, the timing is ideal. AI server manufacturing demand continues to surge, and NVIDIA’s Vera Rubin plus LPX ecosystem gives Foxconn another high value growth driver. For NVIDIA, the combination of Rubin GPUs, Vera CPUs, BlueField DPUs, NVLink, and Groq LPUs gives the company a more complete platform for agentic AI, where inference performance and system level efficiency may become just as important as training leadership.

If the supply chain reports are accurate, Groq 3 LPX could become one of NVIDIA’s most important inference products in 2026. The key question now is whether the early shipment ramp can meet real world deployment demand and whether the promised 35x throughput per megawatt advantage translates into measurable gains for large scale AI customers.

Will Groq 3 LPX make NVIDIA Vera Rubin the strongest platform for agentic AI inference, or will competing custom inference silicon challenge NVIDIA’s full stack strategy?