NVIDIA Says AI Compute Revenue Could Reach $1 Trillion by 2027 as Inference Hits an “Inflection Point”

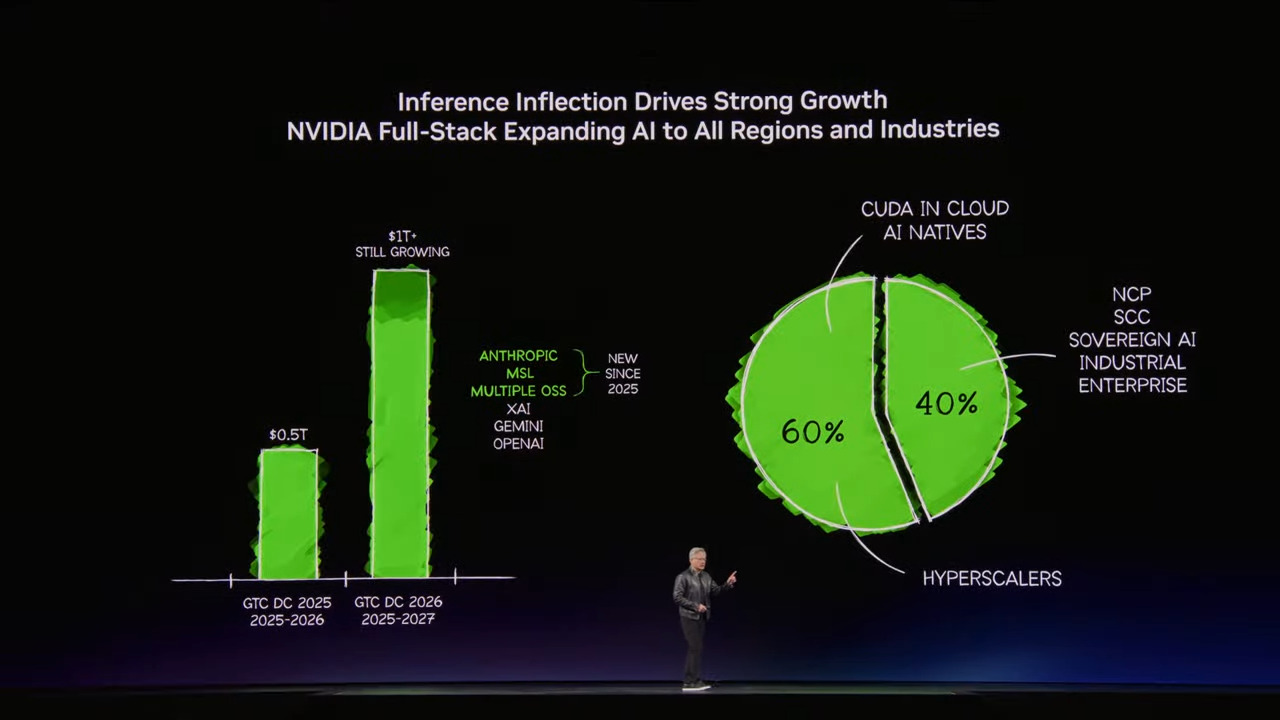

NVIDIA is dramatically raising its view of the AI hardware market, with chief executive officer Jensen Huang now saying the revenue opportunity for the company’s AI chips could reach at least $1 trillion through 2027. That figure, presented during GTC 2026, is roughly double the company’s earlier $500 billion forecast and reflects NVIDIA’s belief that the industry has entered a new phase where inference, not just training, is driving the next major wave of compute demand.

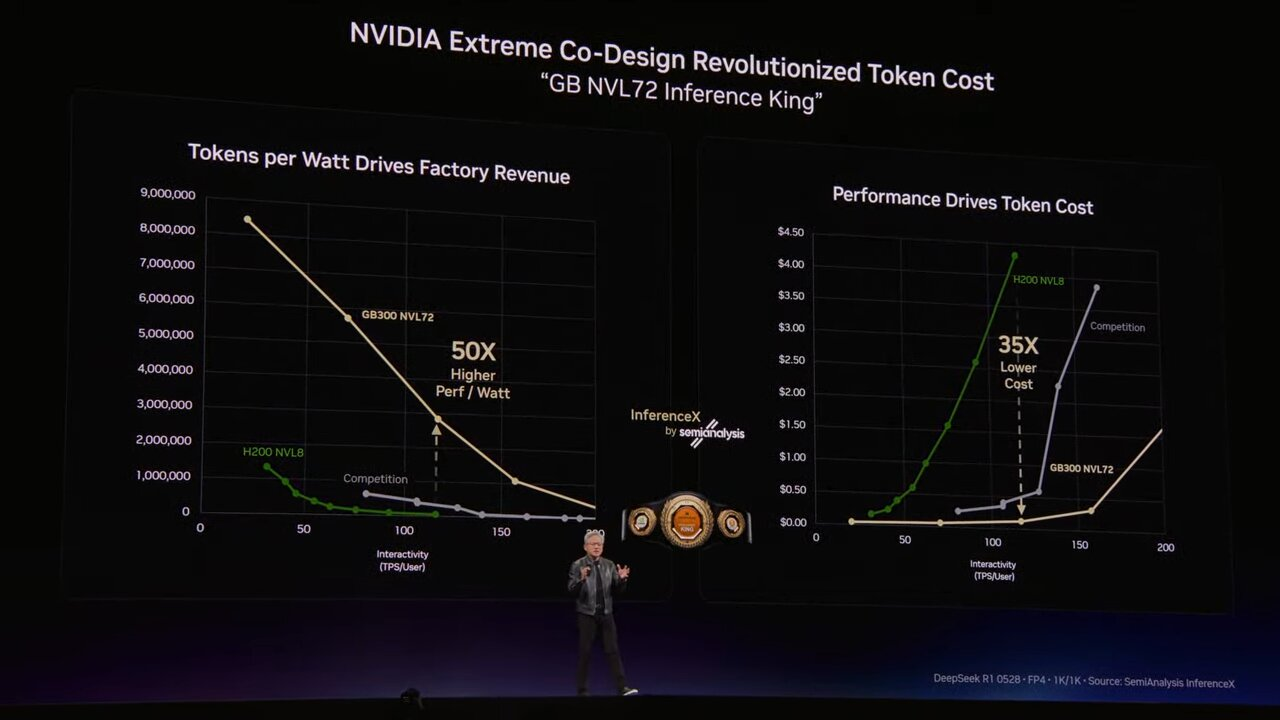

The shift in emphasis is crucial. Reuters reported that NVIDIA used this year’s GTC to focus heavily on inference, the part of AI deployment where models generate responses in real time rather than being trained in the first place. Huang described this as an “inflection point of inference,” arguing that AI systems now need to reason, respond, and operate continuously in live environments, which sharply increases the scale and intensity of required infrastructure.

Huang also said that compute requirements have grown by 1,000,000 times over the past 2 years, underscoring how aggressively NVIDIA sees demand accelerating as the market moves toward agentic AI and real-time, inference-heavy workloads. MarketWatch’s live GTC coverage specifically highlighted Huang’s use of the phrase “the inflection point of inference has arrived,” while Reuters tied that framing directly to NVIDIA’s updated trillion-dollar revenue opportunity estimate.

That projection is not being framed as a distant, long-term theory. Barron’s reported that Huang revised NVIDIA’s expected data center revenue opportunity for 2025 through 2027 to $1 trillion, while also noting that NVIDIA generated $192 billion in data center sales over the past year, up 66%. In other words, NVIDIA is grounding this outlook in a business that is already operating at extraordinary scale, not in a market it still needs to create from scratch.

A major driver behind that confidence appears to be the expanding role of AI inference itself. Reuters reported that NVIDIA is increasingly positioning its products around the real-time operation of AI systems, not just training, and that this includes new infrastructure around Vera Rubin as well as specialized inference solutions. NVIDIA’s own announcement for the Vera Rubin platform says it is designed to open the “next frontier of agentic AI,” with systems intended to scale the world’s largest AI factories.

The broader market context is consistent with NVIDIA’s argument that compute supply remains tight. Reuters reported that NVIDIA sees rising demand from AI deployments across the market, while other coverage noted that even older generations such as Ampere and Hopper are benefiting from continued demand pressure. That reinforces the company’s view that the industry is not merely moving through a normal product-generation cycle, but is operating under a wider structural compute bottleneck.

There is still room for skepticism. The Financial Times noted that Wall Street forecasts remain lower than NVIDIA’s own trillion-dollar projection, with analysts modeling about $835 billion in total NVIDIA revenue through early 2028, meaning Huang’s figure is still more aggressive than consensus. That gap matters because NVIDIA is effectively asking investors and customers to believe that the inference phase of AI will unlock an even larger spending cycle than the already historic training boom.

Even so, the strategic message from GTC is unmistakable. NVIDIA is no longer talking about AI compute growth as a continuation of the same trend. It is describing a step change, where inference, agentic workloads, and AI factories turn compute into an even larger market than the one built around model training. If that thesis holds, the company is not just projecting higher revenue. It is arguing that AI infrastructure is entering a new commercial category altogether. That last point is our reading rather than a direct statement from the company, drawn from NVIDIA’s trillion-dollar forecast, its heavy GTC focus on inference, and the rollout of Rubin-era platforms aimed at agentic AI.

What do you think: is NVIDIA’s trillion-dollar compute forecast realistic, or is the company getting ahead of what the inference market can actually sustain over the next 2 years?