DRAM Contract Prices Keep Climbing, but NVIDIA Still Looks Better Positioned Than Most of the AI Market

Rising DRAM prices are becoming one of the most important cost pressures in the AI infrastructure market, and the effect is no longer limited to memory vendors or supply chain analysts. New industry reporting indicates that memory is taking a much larger share of hyperscaler spending than it did just a few years ago. SemiAnalysis estimates that memory could account for roughly 30% of hyperscaler spending in 2026, up from about 8% in 2023 and 2024. At the same time, TrendForce says conventional DRAM contract prices are still moving higher, with 2Q26 contract pricing expected to rise 58% to 63% quarter over quarter, following already steep increases in 1Q26.

That combination helps explain why the memory market has become such a major strategic issue for cloud and AI giants. The old assumption that compute was the dominant cost center in AI systems is being challenged by the sheer amount of memory now needed across modern infrastructure. Rack-scale AI deployments, custom silicon platforms, and larger memory pools are all driving stronger demand for DDR5, LPDDR5, and HBM, while supply remains tight because manufacturers continue to prioritize the most profitable server and AI segments. TrendForce said suppliers are still reallocating capacity toward server-related applications, keeping overall supply constrained and prices elevated.

Memory is taking over Hyperscaler CapEx.

In CY23 and CY24, memory was ~8% of total Hyperscaler spend. We estimate it hits 30% in CY26 and moves higher in CY27. That's a near-4x shift in just four years. (1/4) 🧵

— SemiAnalysis (@SemiAnalysis_) April 3, 2026

What makes this especially notable is that, despite the inflation, there is little evidence that hyperscalers are materially pulling back. The spending burden is rising, but the appetite for AI infrastructure has remained extremely strong. Reuters previously reported that the AI buildout was already driving a global memory supply crunch, with SK hynix expecting shortages to last through late 2027, while smartphone and consumer electronics makers were warning about higher prices as supply shifted toward AI-driven demand.

That is where NVIDIA’s position becomes the real differentiator. According to SemiAnalysis reporting summarized by Tom’s Hardware, NVIDIA holds “Very Very Preferred” supplier status for DRAM, giving it better pricing and capacity access than much of the broader market. SemiAnalysis argues this allows NVIDIA to secure supply at rates below what many hyperscalers and competitors are seeing, which in turn helps protect its server economics even as memory costs rise across the rest of the industry.

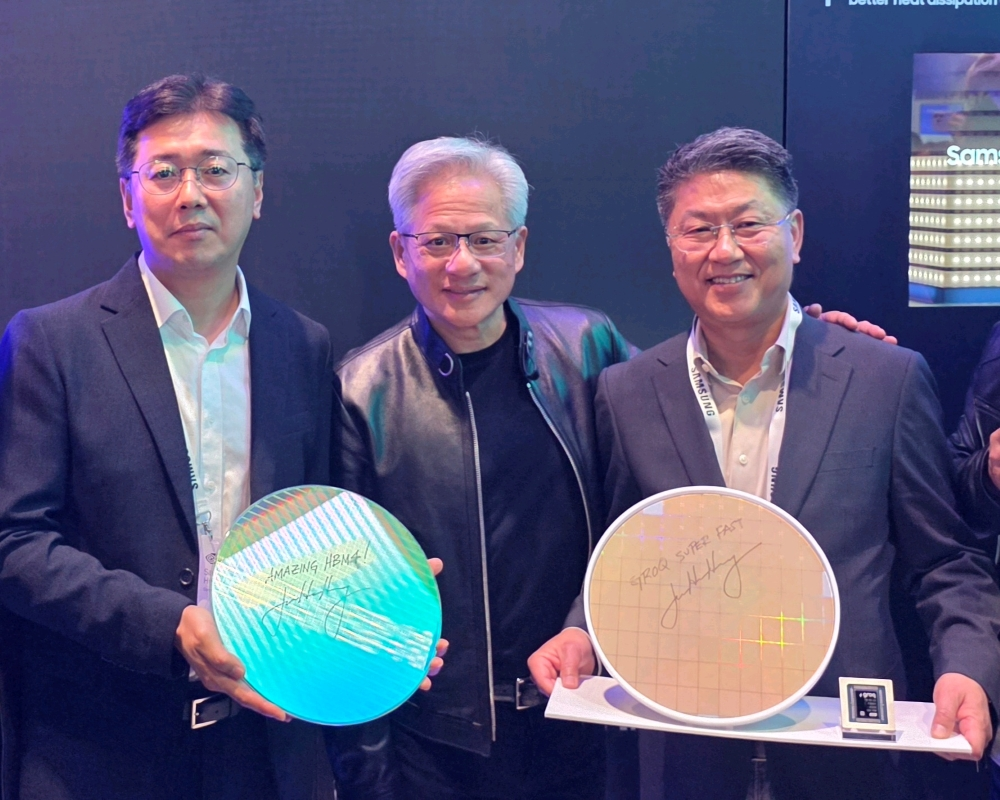

This also lines up with Jensen Huang’s broader message about NVIDIA’s supply strategy. Reporting from early 2026 noted that Huang said the company had already locked down key pieces of the AI infrastructure stack, including memory, wafers, advanced packaging, systems, and interconnect-related components. That kind of forward contracting is one of the reasons NVIDIA has proven structurally harder to challenge than many rivals expected. It is not just about GPU performance leadership. It is also about controlling enough of the surrounding supply chain to keep shipping while others are forced to pay more or wait longer.

That does not mean NVIDIA is completely immune to memory constraints. Barron’s reported this week that delays in securing enough HBM4 from SK hynix and Micron could reduce 2026 Rubin GPU output from around 2 million units to 1.5 million units, a cut of roughly 25%, showing that even NVIDIA can still face bottlenecks at the very high end of the stack. But the same reporting also suggested that the problem is being viewed as manageable rather than existential, which says a great deal about how strong NVIDIA’s procurement position remains compared with the rest of the field.

The wider implication is clear. Memory inflation is no longer a side story in AI infrastructure. It is becoming a core capital allocation issue. If memory really is consuming around 30% of hyperscaler AI spending, and if contract pricing continues climbing at the pace TrendForce is projecting, then the competitive landscape will increasingly favor the companies that secured preferred supply early and can absorb the cost better than everyone else. Right now, NVIDIA appears to be one of the clearest examples of that advantage.

For the rest of the market, this is where the gap starts to widen. Competing with NVIDIA is not just about building a faster accelerator or a better rack concept. It also means matching a supply chain position that spans memory, packaging, and system integration at a moment when every one of those inputs is under pressure. As AI infrastructure keeps scaling, that advantage may prove just as important as the silicon itself.

Do you think rising DRAM costs will eventually slow hyperscaler AI spending, or will companies keep absorbing the hit as long as infrastructure demand keeps accelerating?