NVIDIA Says AI Infrastructure Should Be Measured by Cost Per Token, Not FLOPS Per Dollar

NVIDIA is pushing a new way for enterprises to evaluate AI infrastructure, arguing that the industry needs to stop focusing on raw compute metrics and instead judge systems by one business outcome above all others: cost per token. In its recent blog post, Rethinking AI TCO: Why Cost per Token Is the Only Metric That Matters, the company says traditional data center thinking no longer fits the economics of modern inference heavy AI deployments, where the real output is tokens rather than theoretical compute.

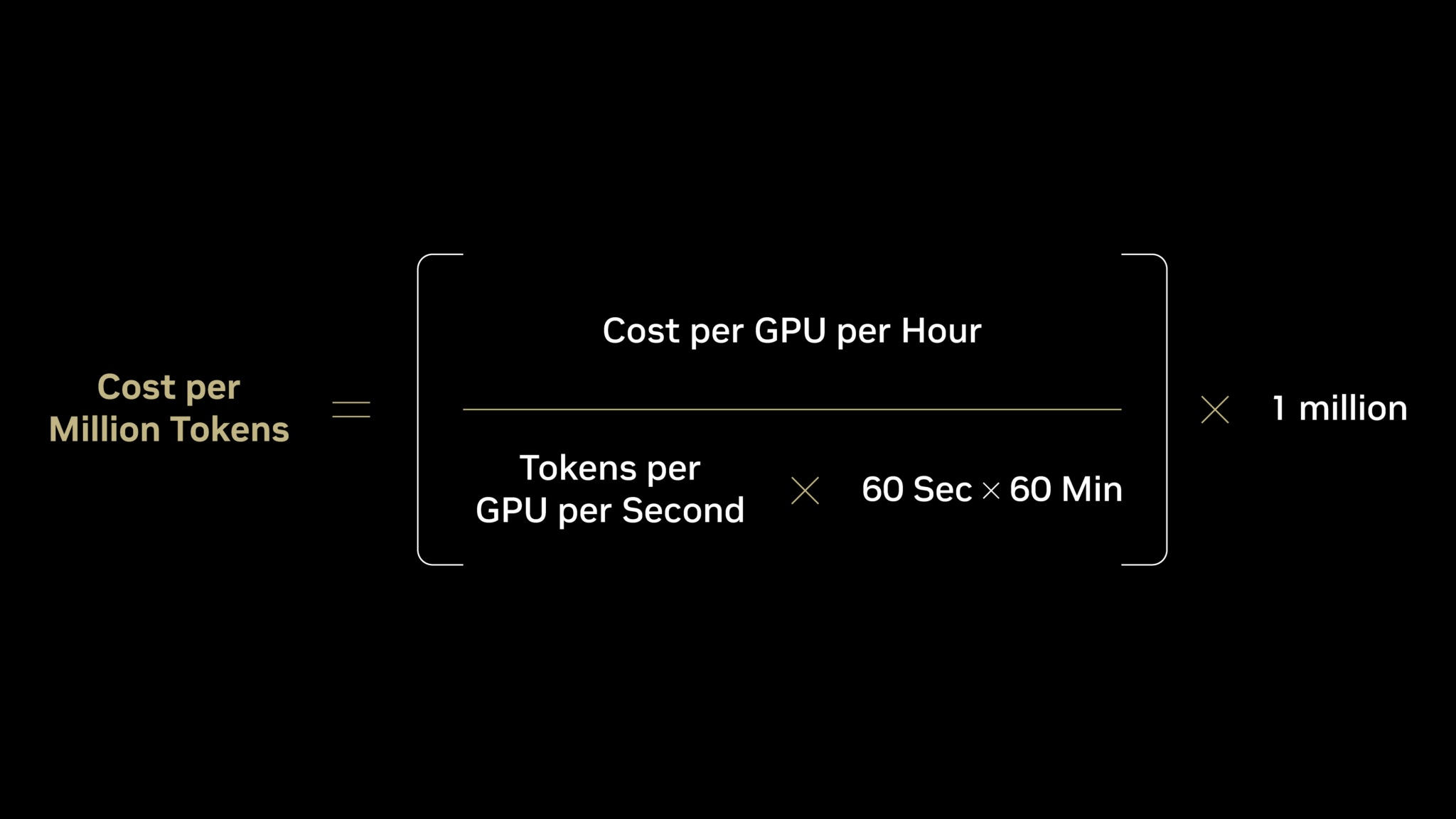

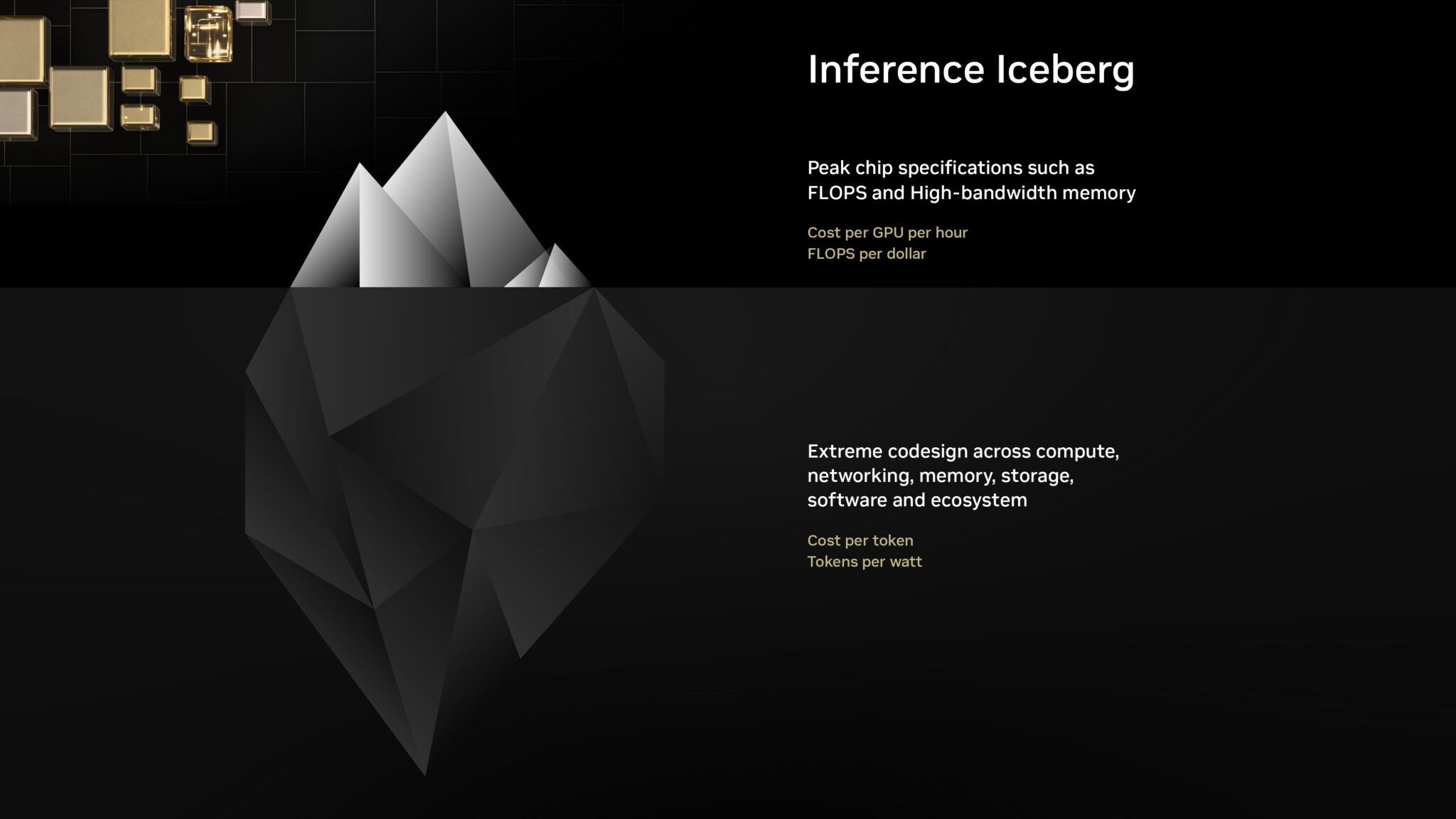

According to NVIDIA, many enterprises still lean on metrics such as compute cost and FLOPS per dollar when comparing infrastructure, but the company argues those are only input measurements. Its case is that AI factories should instead be judged by the all in cost of producing each delivered token, typically represented as cost per million tokens. NVIDIA explicitly defines compute cost as what enterprises pay for AI infrastructure, FLOPS per dollar as the amount of raw compute bought for each dollar spent, and cost per token as the actual delivered output cost that better reflects real world inference economics.

The logic behind that argument is straightforward. NVIDIA says focusing mainly on cost per GPU hour misses the more important side of the equation, which is token output. In its framing, higher delivered token throughput lowers token cost and also increases tokens per megawatt, which in turn can improve both profit margins and revenue generation from the same infrastructure footprint. That is why the company says enterprises should care less about peak chip specifications on their own and more about how effectively a full stack platform turns infrastructure spending into usable inference output.

To support that point, NVIDIA compares Hopper and Blackwell using data it says is sourced from its own analysis and the SemiAnalysis InferenceX v2 benchmark. The company’s published example shows Blackwell with roughly 2 times the cost per GPU per hour and 2 times the FLOPS per dollar of Hopper, but it argues that those figures understate the true business gap because token throughput is dramatically higher on Blackwell. NVIDIA says that in this example Blackwell reaches 6,000 tokens per second per GPU versus 90 for Hopper, delivers 2.8 million tokens per second per megawatt versus 54,000, and drops cost per million tokens from 4.20 dollars to 0.12 dollars. NVIDIA summarizes that as more than 50 times greater token output per watt and nearly 35 times lower cost per million tokens.

| Metric | NVIDIA Hopper (HGX H200) | NVIDIA Blackwell (GB300 NVL72) | NVIDIA Blackwell Relative to Hopper |

|---|---|---|---|

| Cost per GPU per Hour ($) | $1.41 | $2.65 | 2x |

| FLOP per Dollar (PFLOPS) | 2.8 | 5.6 | 2x |

| Tokens per Second per GPU | 90 | 6,000 | 65x |

| Tokens per Second per MW | 54K | 2.8M | 50x |

| Cost per Million Tokens ($) | $4.20 | $0.12 | 35x lower |

NVIDIA’s broader message is that real AI total cost of ownership should now be tied to delivered inference, not just hardware pricing or theoretical peak performance. The company also argues that token economics depend on more than silicon alone, pointing to networking, memory, storage, software optimization, serving layers, speculative decoding, FP4 support, routing, cache handling, and overall ecosystem integration as factors that determine whether token output stays high in practice. In other words, NVIDIA is not just defending its chips here. It is defending its full stack strategy.

That is also where the argument becomes more strategic. NVIDIA says cost per token captures the value of hardware performance, software optimization, ecosystem support, and real world utilization in a way FLOPS per dollar cannot. This is a notable positioning move because it shifts the competitive conversation away from simpler spec sheet comparisons and toward end to end delivered output, an area where NVIDIA believes its software and platform integration create a stronger advantage.

There is, of course, a clear marketing dimension here. The comparison comes from NVIDIA’s own blog, and the company is naturally choosing a metric that better highlights the benefits of its current Blackwell platform and software ecosystem. Still, the underlying point is not unreasonable. If enterprises are deploying AI primarily for inference and monetizable output, then the cost of each delivered token is arguably a more commercially relevant measure than raw floating point capability in isolation. The key question is whether buyers accept NVIDIA’s framing and whether competing vendors can present comparable audited token economics on their own systems. That last part is partly an inference from NVIDIA’s argument, but it follows directly from the way the company is trying to redefine the benchmark conversation.

For the AI market, this is a meaningful shift in language. NVIDIA is trying to move the discussion away from what infrastructure costs and toward what infrastructure actually produces. If that framing takes hold, future AI hardware battles may be judged less by peak compute headlines and more by the efficiency and profitability of large scale inference output.

Do you think cost per token should become the main benchmark for AI infrastructure, or do raw compute metrics still matter more when buyers make long term platform decisions?