Intel Unveils Texture Set Neural Compression SDK at GDC 2026, Promising Up to 18x Smaller Texture Sets

Intel has stepped deeper into neural graphics at GDC 2026 by formally presenting its new Texture Set Neural Compression, or TSNC, as a standalone SDK aimed at game developers. The technology builds on the research prototype Intel showed at GDC 2025, but this time the company is positioning it as a productized tool rather than an experimental demo. Intel says TSNC can reduce texture set size by up to 18x compared with uncompressed source data, offering developers a new way to cut install size, memory usage, and streaming overhead in modern games.

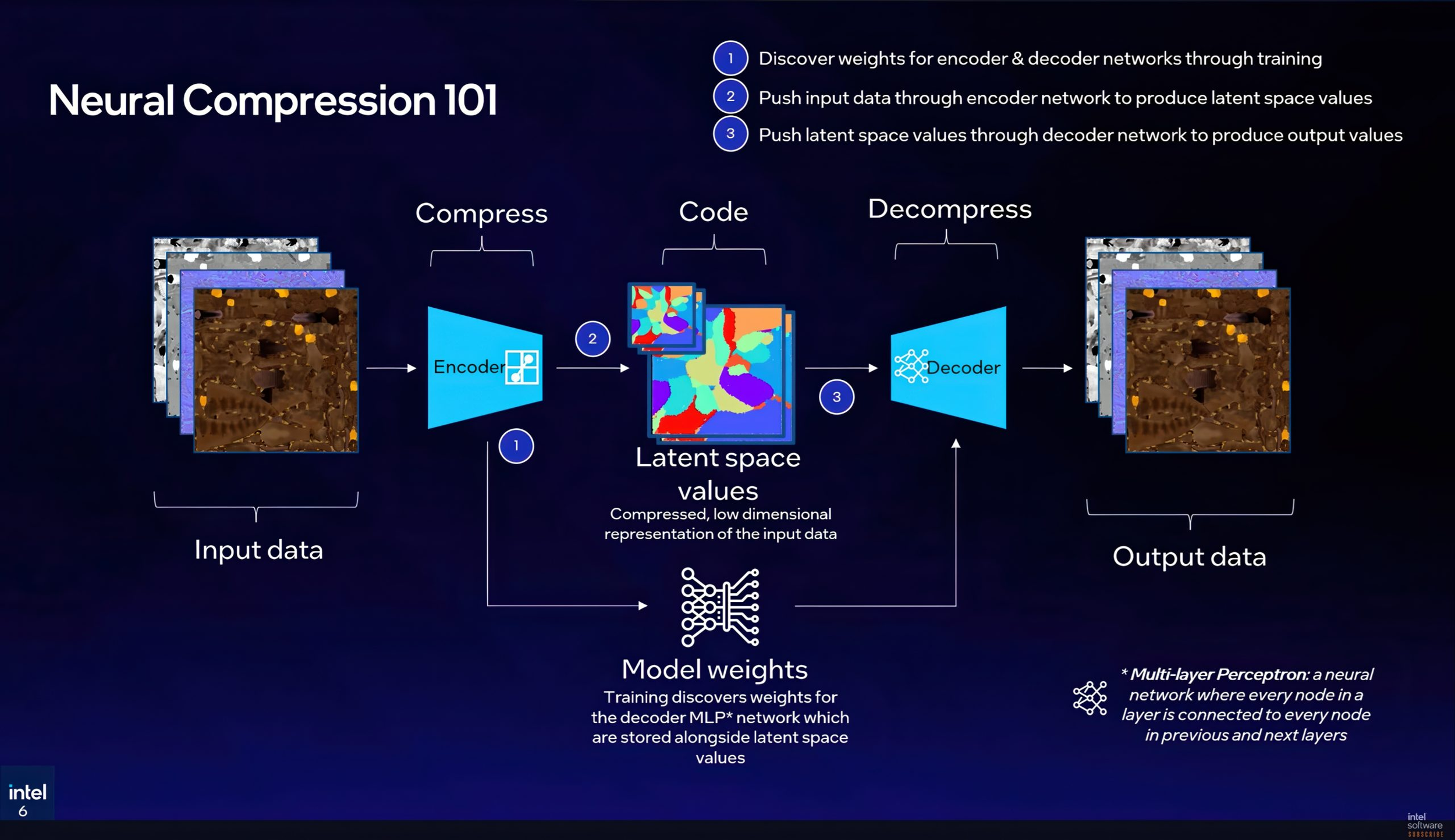

At its core, TSNC is designed to compress complete material texture sets as a unit, rather than texture by texture as traditional GPU block compression formats such as BC1 through BC7 do. Instead of treating each texture or channel in isolation, Intel's approach trains a compact neural model to reconstruct the shared information across a full physically based rendering (PBR) material set, including diffuse, normal, roughness, metallic, ambient occlusion, and emissive maps. That matters because these channels often contain overlapping structure, and TSNC is meant to exploit that redundancy more effectively than fixed, rule-based compression formats can.
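The shared-decoder idea can be illustrated with a minimal Python sketch. One small MLP maps a latent feature vector for a single texel to every material channel at once, so all maps share the same network weights instead of each map being compressed separately. The channel layout, layer sizes, and random weights below are hypothetical stand-ins; the article does not describe TSNC's actual network architecture.

```python
import random

# Hypothetical per-texel channel layout for a PBR material set.
CHANNELS = {
    "diffuse": 3,
    "normal": 3,
    "roughness": 1,
    "metallic": 1,
    "ambient_occlusion": 1,
    "emissive": 3,
}
OUT_DIM = sum(CHANNELS.values())  # 12 output values per texel

def make_layer(n_in, n_out, rng):
    """Random weights and zero biases standing in for trained parameters."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def dense(x, layer, relu):
    """One fully connected layer built from multiply-accumulate steps."""
    weights, biases = layer
    out = []
    for row, b in zip(weights, biases):
        acc = b
        for w, xi in zip(row, x):
            acc += w * xi
        out.append(max(acc, 0.0) if relu else acc)
    return out

def decode_texel(latent, layers):
    """Run the shared MLP once, then split its output into every channel."""
    h = latent
    for i, layer in enumerate(layers):
        h = dense(h, layer, relu=(i < len(layers) - 1))  # no ReLU on the last layer
    texel, start = {}, 0
    for name, width in CHANNELS.items():
        texel[name] = h[start:start + width]
        start += width
    return texel

rng = random.Random(0)
LATENT_DIM, HIDDEN = 8, 16  # hypothetical sizes
layers = [make_layer(LATENT_DIM, HIDDEN, rng), make_layer(HIDDEN, OUT_DIM, rng)]
latent = [rng.uniform(-1.0, 1.0) for _ in range(LATENT_DIM)]
texel = decode_texel(latent, layers)
print({name: len(values) for name, values in texel.items()})
```

In a trained system the weights would come from optimizing against the source texture set; here they are random, so only the shape of the output is meaningful.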

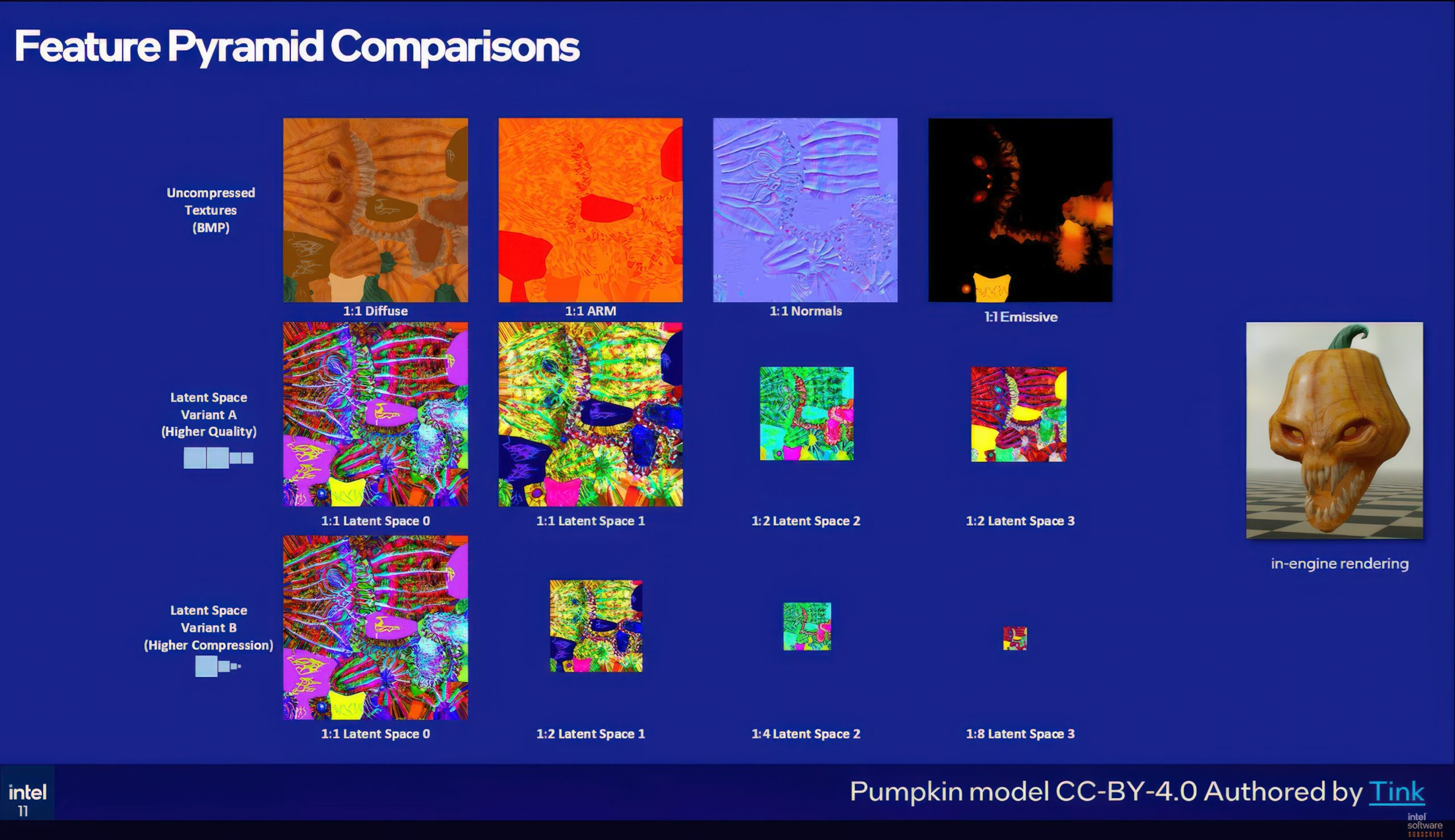

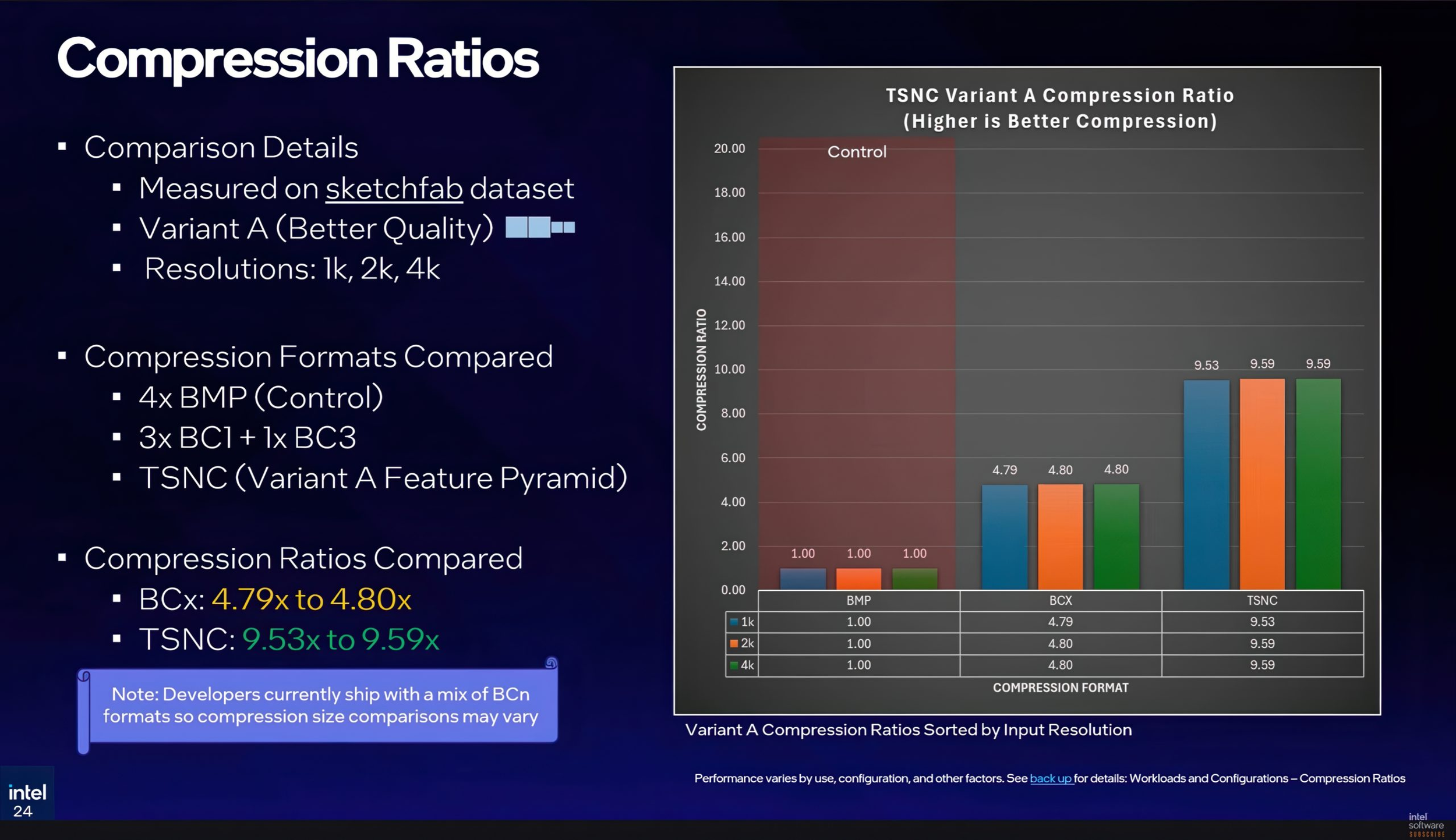

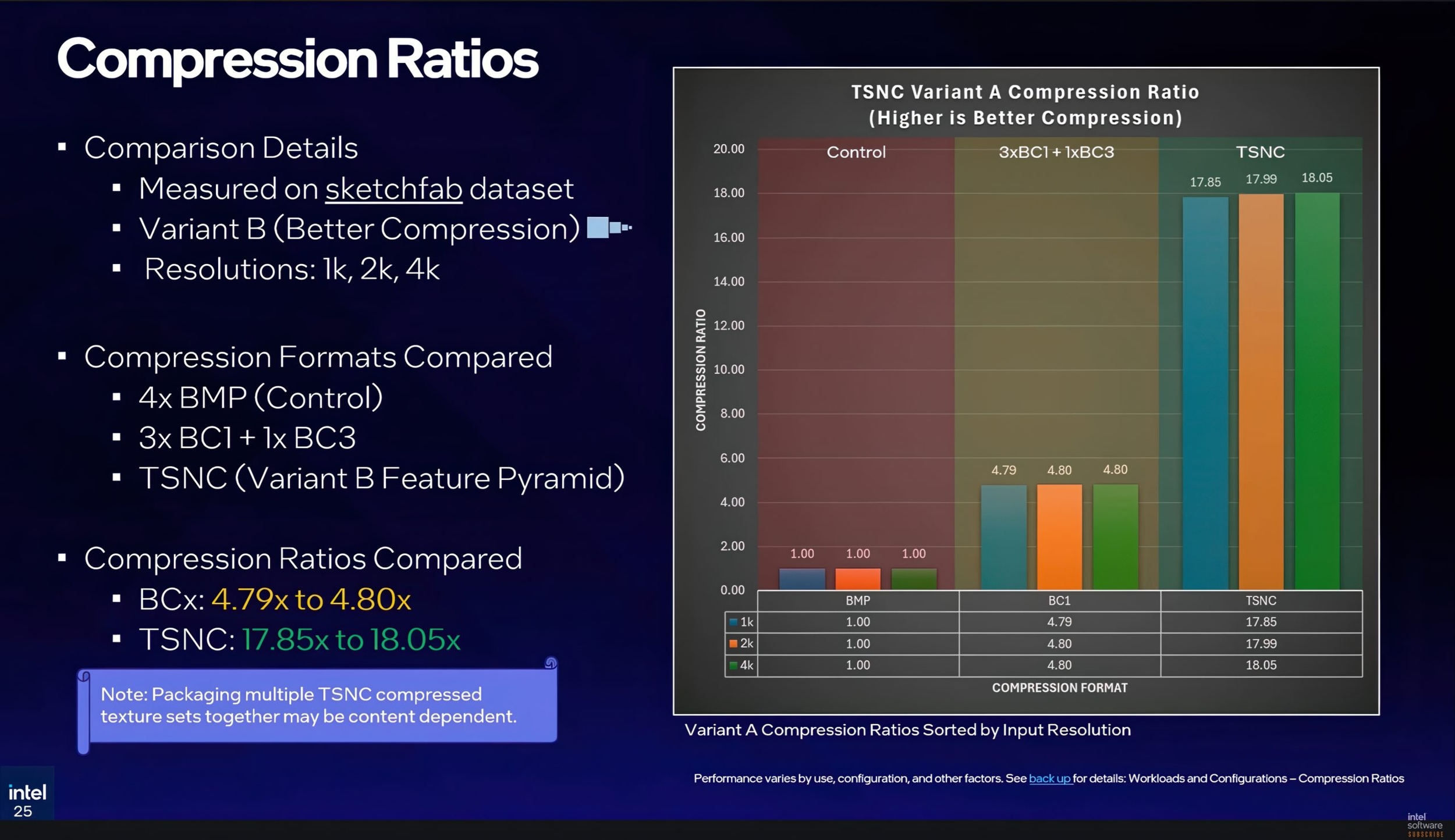

Intel presented 2 main quality tiers, defined by how the latent feature pyramid that underpins TSNC is configured. Variant A is the higher-quality option, using 2 full-resolution latent images and 2 half-resolution latent images. Intel said that for a 4K input texture set, this comes to around 26.8 MB versus 256 MB of uncompressed source bitmaps, or about 9.5x compression. That is notably better than the roughly 4.8x reduction developers would get from standard BC compression alone, while keeping visual loss relatively limited: Intel's presentation showed perceptual error of around 5% as measured with NVIDIA's FLIP image comparison tool.
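The ratios in this paragraph follow directly from the quoted sizes. A short Python check, using only Intel's figures plus the approximate 4.8x BC ratio, also shows what Variant A buys over BC compression alone:

```python
# Figures quoted for a 4K texture set in Variant A.
SOURCE_MB = 256.0  # uncompressed source bitmaps
TSNC_A_MB = 26.8   # TSNC Variant A
BC_RATIO = 4.8     # approximate reduction from standard BC compression alone

tsnc_ratio = SOURCE_MB / TSNC_A_MB
bc_mb = SOURCE_MB / BC_RATIO

print(f"TSNC Variant A ratio: {tsnc_ratio:.2f}x")              # 9.55x
print(f"BC-only size: {bc_mb:.1f} MB")                         # 53.3 MB
print(f"TSNC A vs BC-only: {bc_mb / TSNC_A_MB:.2f}x smaller")  # 1.99x
```

By these figures, Variant A lands at roughly half the size of a BC-only texture set.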

Variant B is the more aggressive preset. It shrinks the latent pyramid to levels at 1/2, 1/4, and 1/8 of the source resolution, pushing compression past 17x and toward the headline 18x figure. The tradeoff is more visible quality degradation, particularly in normal maps and some other material channels, where block artifacts become easier to spot. Intel indicated that perceptual error climbs into the 6% to 7% range here, enough to be noticeable, which suggests this mode is better suited to less critical materials or to assets viewed at a distance.
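For a 4K source, the Variant B levels and the sizes implied by its headline ratios work out as follows. This Python sketch assumes the same 256 MB source quoted for Variant A; the article does not say how many latent images sit at each level or how they are stored, so the level resolutions alone do not determine the final size.

```python
SOURCE_MB = 256.0  # uncompressed 4K texture set, as quoted for Variant A
BASE = 4096        # 4K source resolution per side

# Variant B latent levels at 1/2, 1/4, and 1/8 of the source resolution.
level_sides = [BASE // divisor for divisor in (2, 4, 8)]

# Sizes implied by the quoted 17x and 18x ratios.
implied_mb = {ratio: SOURCE_MB / ratio for ratio in (17, 18)}

for side in level_sides:
    print(f"latent level: {side}x{side}")
for ratio, mb in implied_mb.items():
    print(f"{ratio}x -> {mb:.1f} MB")
```

That puts Variant B at roughly 14 to 15 MB, a little over half of Variant A's 26.8 MB.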

A key part of the announcement is that Intel has reworked the underlying implementation since last year's prototype. The original research version used PyTorch, but the current SDK has been rewritten around Slang compute shaders, which gives it a more practical route into real engine workflows. Intel also emphasized that the decompressor can target different execution backends, whether developers deploy it in Unreal Engine or a custom engine, or even run decompression on the CPU. That flexibility matters because neural texture compression only becomes viable at scale if studios can integrate it without completely reshaping their content pipelines.

On the acceleration side, Intel is tying TSNC to DirectX 12 Cooperative Vectors, using Intel Arc's XMX matrix hardware for faster inference where available. Intel also said there is an FMA (fused multiply-add) fallback path for hardware without XMX support, allowing the technique to run on CPUs and on non-Intel GPUs as well, though with lower performance. This cross-hardware fallback is one of the more strategically important parts of the announcement because it gives TSNC a broader potential footprint than a solution locked entirely to one vendor's AI hardware.
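The path selection described here can be sketched as a small capability check. In this Python sketch, `DeviceCaps`, its flags, and `select_decode_path` are hypothetical names for illustration only; they are not part of the TSNC SDK or the DirectX 12 API, and a real engine would query the actual device features.

```python
from dataclasses import dataclass
from enum import Enum

class DecodePath(Enum):
    XMX_COOPERATIVE_VECTORS = "xmx"  # XMX matrix hardware via DX12 Cooperative Vectors
    GPU_FMA = "gpu_fma"              # FMA fallback on GPUs without XMX
    CPU_FMA = "cpu_fma"              # FMA fallback on the CPU

@dataclass
class DeviceCaps:
    # Hypothetical capability flags an engine might gather at startup.
    is_gpu: bool
    supports_cooperative_vectors: bool
    has_xmx: bool

def select_decode_path(caps: DeviceCaps) -> DecodePath:
    """Pick the fastest available path, falling back toward the most portable one."""
    if caps.is_gpu and caps.supports_cooperative_vectors and caps.has_xmx:
        return DecodePath.XMX_COOPERATIVE_VECTORS
    if caps.is_gpu:
        return DecodePath.GPU_FMA
    return DecodePath.CPU_FMA

print(select_decode_path(DeviceCaps(is_gpu=True, supports_cooperative_vectors=True, has_xmx=True)))
print(select_decode_path(DeviceCaps(is_gpu=True, supports_cooperative_vectors=False, has_xmx=False)))
print(select_decode_path(DeviceCaps(is_gpu=False, supports_cooperative_vectors=False, has_xmx=False)))
```

Ordering the checks from fastest to most portable means every device ends up on some path, which is the property that gives the approach its cross-hardware reach.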

Intel outlined 4 deployment models for developers, each with different tradeoffs. At install time, games can ship compressed textures and decompress them locally during installation, mainly reducing download and distribution overhead. At load time, compressed textures stay on disk and are decompressed into VRAM during loading, helping with install size and memory management. At stream time, decompression happens on demand alongside texture streaming, which balances storage and runtime efficiency. Finally, at sample time, textures remain compressed in VRAM permanently and are decoded per pixel inside the shader, which is the most aggressive VRAM-saving option but also the most inference-intensive.
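The four models differ mainly in where the compressed data lives and how often decoding runs. The Python table below restates the paragraph as data; the field names are illustrative, and the assumption that stream-time data stays compressed on disk until requested is an inference from the description rather than something Intel spelled out.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class DeploymentMode:
    name: str
    compressed_on_disk: bool  # does installed data stay TSNC-compressed on disk?
    compressed_in_vram: bool  # does VRAM hold TSNC data instead of decoded textures?
    decode_when: str

MODES = [
    DeploymentMode("install", False, False, "once, during installation"),
    DeploymentMode("load", True, False, "during loading, into VRAM"),
    # Assumption: streamed textures remain compressed on disk until requested.
    DeploymentMode("stream", True, False, "on demand, alongside texture streaming"),
    DeploymentMode("sample", True, True, "per pixel, inside the shader"),
]

for mode in MODES:
    print(f"{mode.name:>7}: disk={mode.compressed_on_disk}, "
          f"vram={mode.compressed_in_vram}, decode {mode.decode_when}")
```

Only the sample-time mode keeps TSNC data resident in VRAM, which is why it saves the most memory and also runs the most inference.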

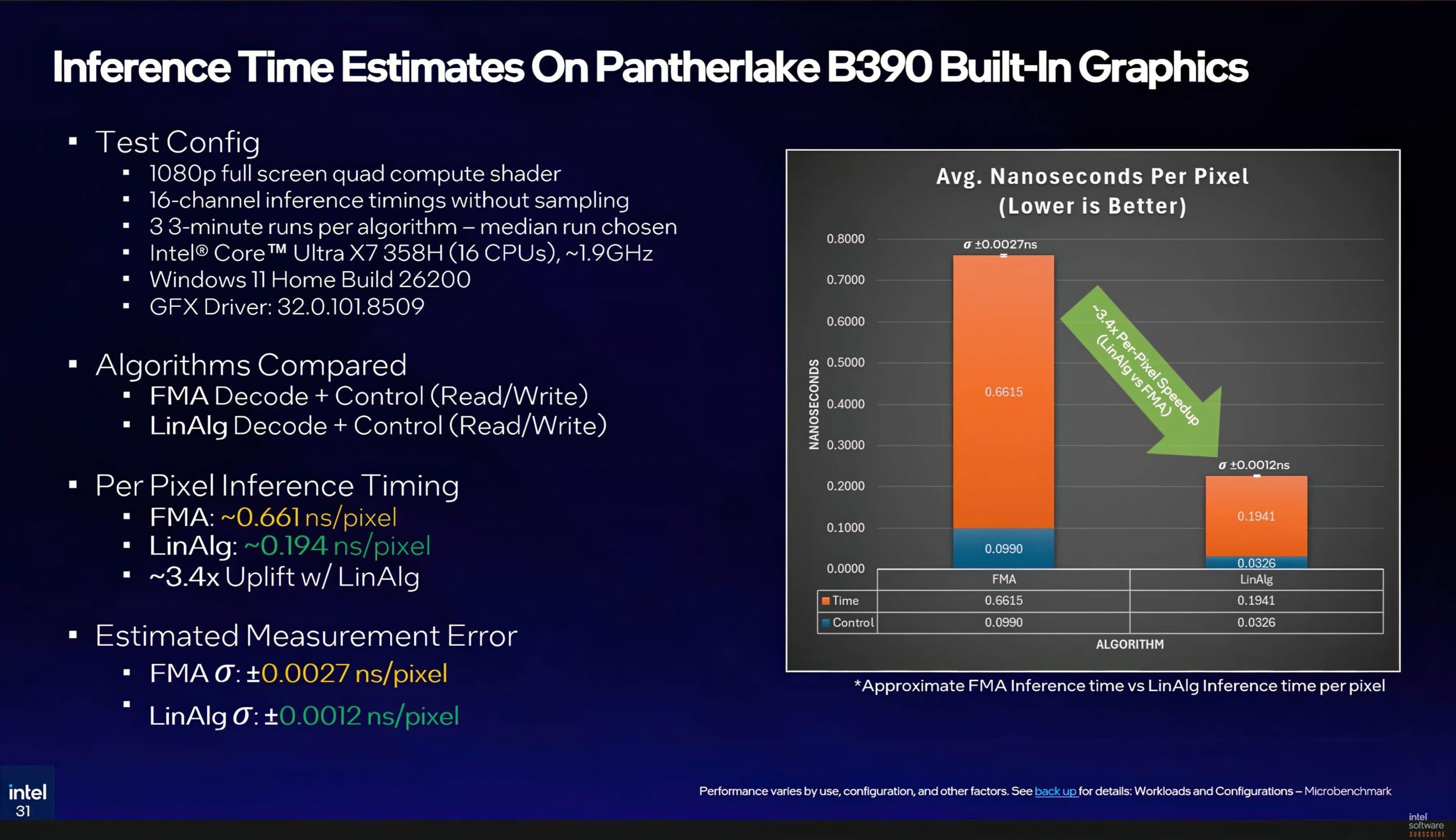

Intel also shared early performance numbers from a Panther Lake laptop with B390 integrated graphics running a full 1080p compute shader workload. According to the presentation, the standard FMA path took 0.661 nanoseconds per pixel, while the XMX-accelerated linear algebra path took 0.194 nanoseconds per pixel, a roughly 3.4x speedup. Those numbers are notable because if sample-time decoding is already plausible on integrated graphics, its overhead could be much easier to justify on more powerful discrete GPUs in real-world game deployments.
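Per-pixel timings are easier to judge as a share of a frame. This Python sketch converts Intel's figures into milliseconds for one full 1080p pass. The 60 fps budget is an assumption for illustration, and the sketch assumes cost scales linearly with pixel count at one decode per pixel, which real sample-time shading (multiple materials, filtering, overdraw) may not match.

```python
PIXELS_1080P = 1920 * 1080   # 2,073,600 pixels
FRAME_BUDGET_MS = 1000 / 60  # assumed 60 fps target

def frame_cost_ms(ns_per_pixel: float) -> float:
    """Cost of one full 1080p decode pass, assuming linear per-pixel scaling."""
    return PIXELS_1080P * ns_per_pixel / 1e6

for label, ns in (("FMA", 0.661), ("XMX", 0.194)):
    ms = frame_cost_ms(ns)
    print(f"{label}: {ms:.2f} ms per frame ({ms / FRAME_BUDGET_MS:.1%} of a 60 fps budget)")

print(f"speedup: {0.661 / 0.194:.2f}x")

# Prints:
# FMA: 1.37 ms per frame (8.2% of a 60 fps budget)
# XMX: 0.40 ms per frame (2.4% of a 60 fps budget)
# speedup: 3.41x
```

On those assumptions, the XMX path would cost well under half a millisecond per 1080p frame on integrated graphics.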

Intel says it plans to release an alpha version of the TSNC SDK later in 2026, followed by a beta and then a broader public release, though the company has not yet committed to fixed dates. That puts TSNC in an interesting position. It is still early, and developers will need to validate quality, pipeline complexity, and runtime cost for themselves, but Intel is clearly trying to establish its own place in the neural rendering conversation rather than leaving that space entirely to NVIDIA.

From a broader industry standpoint, this is a smart move. Texture data remains one of the most expensive parts of game content in terms of storage, streaming, and VRAM pressure, especially as materials become richer and higher resolution across PC and next-generation console pipelines. If TSNC proves practical in shipping workflows, it could give developers another serious tool for balancing image quality against memory efficiency. It also suggests that Intel wants to compete not only in raw graphics hardware but also in the middleware and rendering technologies that will shape how future games are built, though that last point is an inference from Intel's SDK strategy and public technical positioning rather than something Intel has stated.

What do you think: could neural texture compression become a standard part of future game pipelines, or will developers still prefer the simplicity and predictability of traditional block compression?