PCI-SIG Pushes PCIe 8.0 Toward 1 TB/s by 2028, Targeting the Bandwidth Wall Facing AI and Data Center Infrastructure

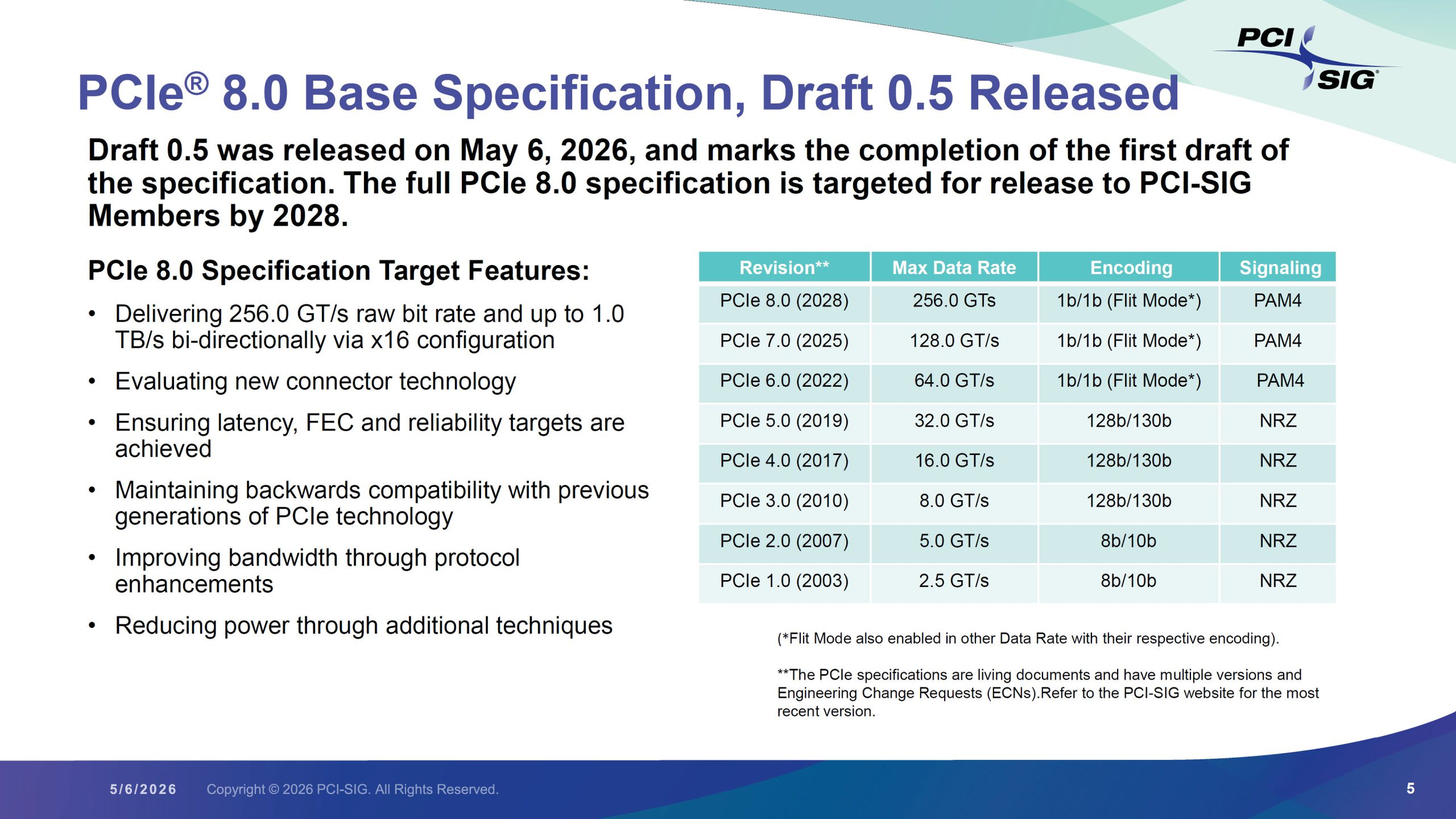

PCI-SIG has officially moved PCIe 8.0 forward with the release of draft 0.5, keeping the specification on track for final release in 2028. The headline target is the kind of jump the AI and data center market has been waiting for: a 256.0 GT/s transfer rate and up to 1.0 TB/s of bidirectional bandwidth in an x16 configuration. According to PCI-SIG, the new standard is designed for data-intensive environments including AI, data centers, high-speed networking, edge computing, and quantum computing.

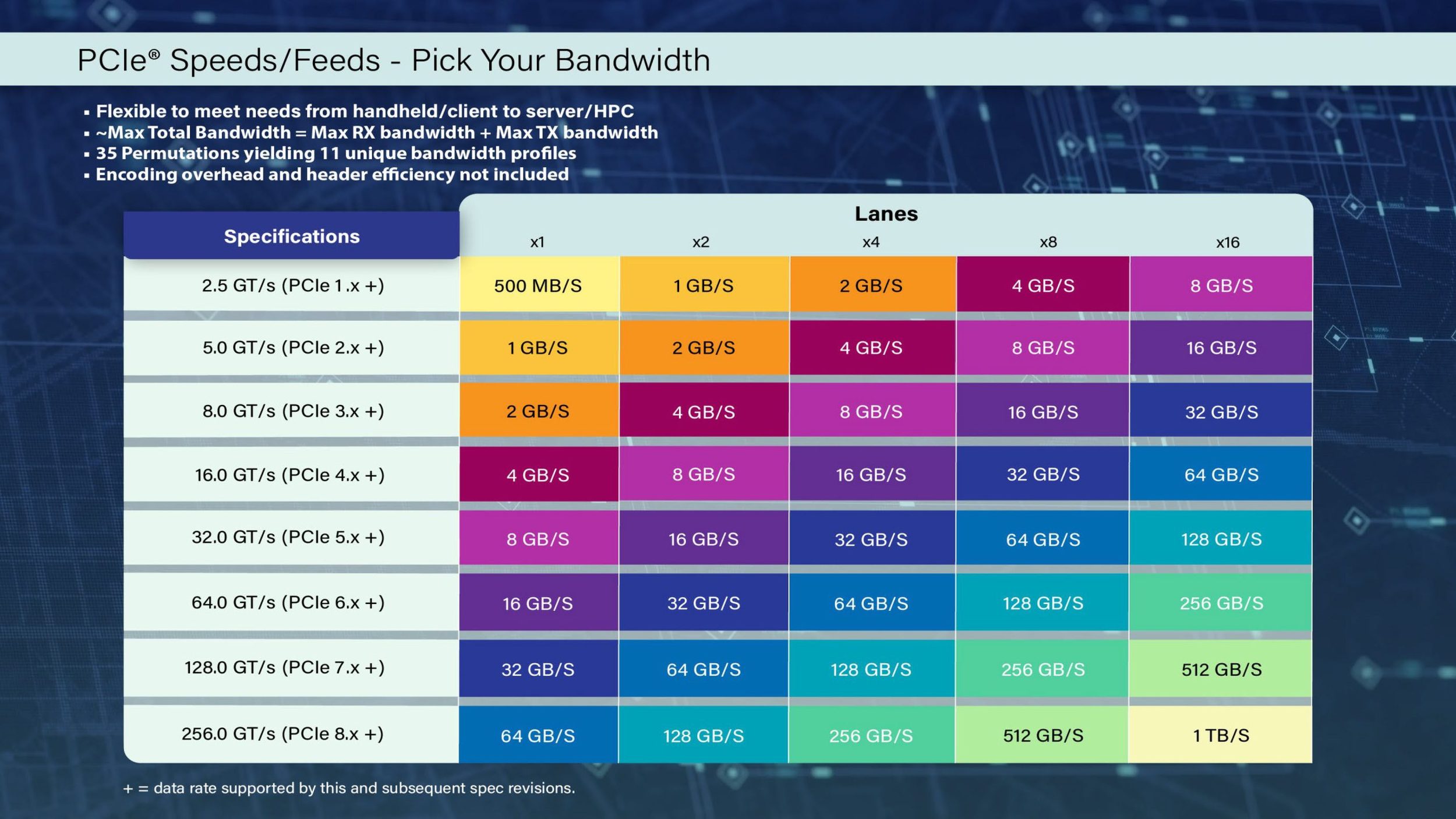

The scale of the jump is substantial. PCI-SIG says PCIe 8.0 will deliver 8x the raw data rate and bandwidth of PCIe 5.0, which is why the new standard is being framed less as a consumer upgrade and more as a backbone technology for next-generation server and accelerator platforms. In raw throughput terms, PCIe 8.0 targets 64 GB/s on x1, 128 GB/s on x2, 256 GB/s on x4, 512 GB/s on x8, and 1024 GB/s on x16. All of these are bidirectional figures, derived from PCI-SIG’s stated 1.0 TB/s x16 objective and the linear lane scaling implied by the specification target. The x16 figure is the central number PCI-SIG itself has now put on the record.
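The lane scaling above can be reproduced with a few lines of arithmetic. The sketch below is illustrative only: the function name `raw_bandwidth_gb_per_s` and the lookup table are hypothetical, and it assumes each transfer carries one bit per lane, ignoring FEC, flit, and protocol overhead, so the results are raw ceilings rather than achievable throughput.

```python
# Raw per-lane data rates in GT/s. The 8.0 value is the draft target cited
# in the article; the earlier values are the published rates for those generations.
DATA_RATE_GT_PER_S = {"5.0": 32, "6.0": 64, "7.0": 128, "8.0": 256}


def raw_bandwidth_gb_per_s(gt_per_s, lanes, bidirectional=True):
    """Estimate raw link bandwidth in GB/s.

    Assumption: one bit per transfer per lane and 8 bits per byte,
    with no encoding, FEC, or protocol overhead subtracted.
    """
    per_direction = gt_per_s * lanes / 8
    return per_direction * 2 if bidirectional else per_direction


if __name__ == "__main__":
    # Print the bidirectional target for each common PCIe 8.0 link width.
    for lanes in (1, 2, 4, 8, 16):
        gb = raw_bandwidth_gb_per_s(DATA_RATE_GT_PER_S["8.0"], lanes)
        print(f"x{lanes}: {gb:.0f} GB/s bidirectional")
```

Under these assumptions, an x16 link at 256 GT/s works out to 512 GB/s in each direction, or 1024 GB/s bidirectional, which matches the 1.0 TB/s objective. The same function applied to the 32 GT/s rate of PCIe 5.0 gives one eighth of each figure, consistent with the 8x claim.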

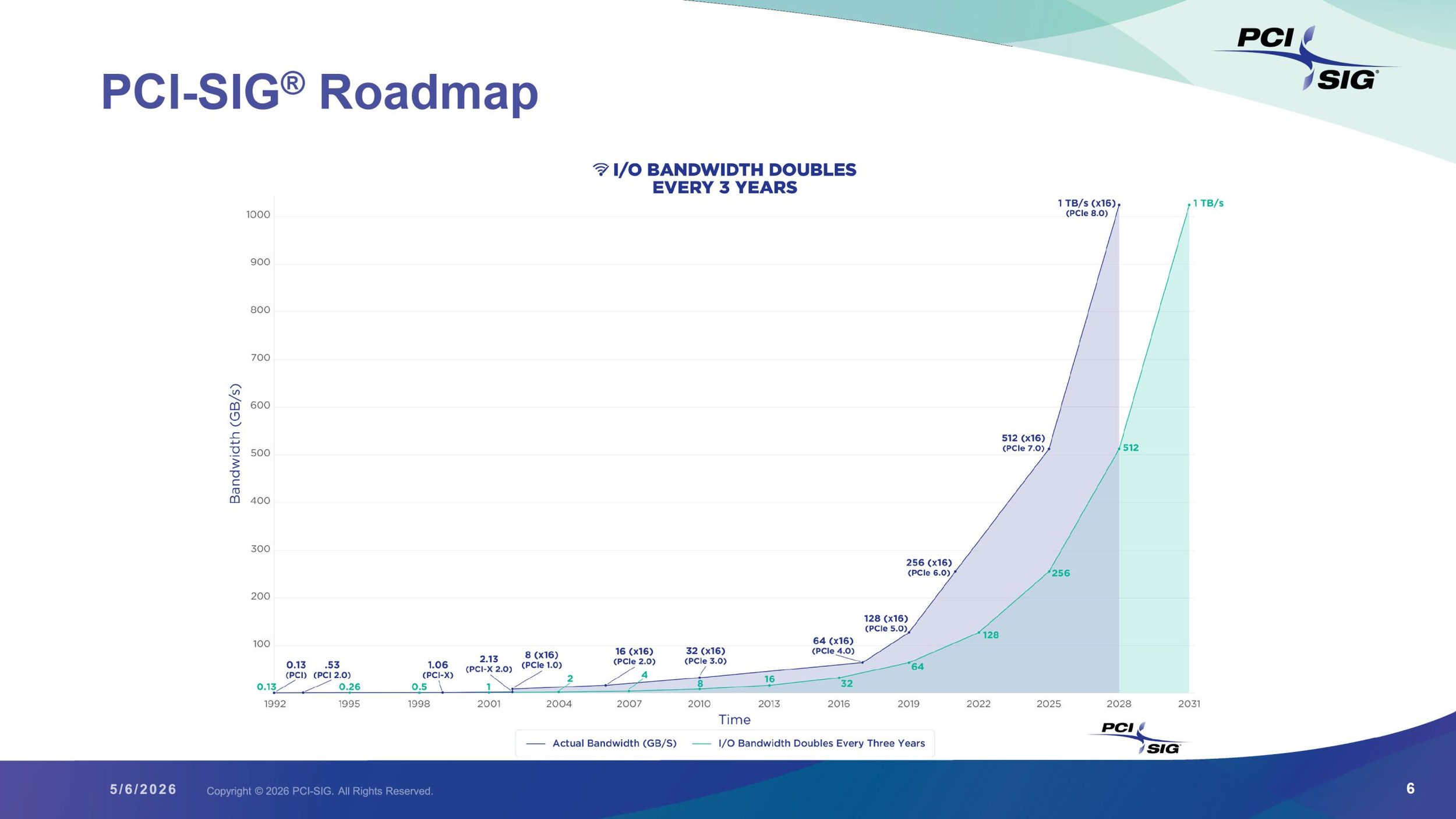

PCI-SIG is also keeping to its longer-term cadence of doubling I/O bandwidth roughly every 3 years. That matters because the workloads driving this roadmap are no longer ordinary desktop upgrade cases. They are large-scale AI clusters, disaggregated data center systems, and networking-heavy compute environments where bandwidth density and latency consistency increasingly decide how much useful performance expensive hardware can actually deliver. PCI-SIG explicitly says PCIe 8.0 is being shaped for those data-hungry segments, not just for a faster version number.

Technically, PCIe 8.0 continues to use PAM4 signaling, which has already become central to higher-speed PCIe generations. PCI-SIG also says the new standard will maintain backward compatibility with earlier PCIe generations while pursuing protocol-level bandwidth improvements, new connector evaluation, and additional power reduction techniques. Just as important, the group says latency, forward error correction, and reliability targets remain part of the specification objectives. That is critical because faster signaling is not useful in practice if error handling and deployment complexity spiral out of control.

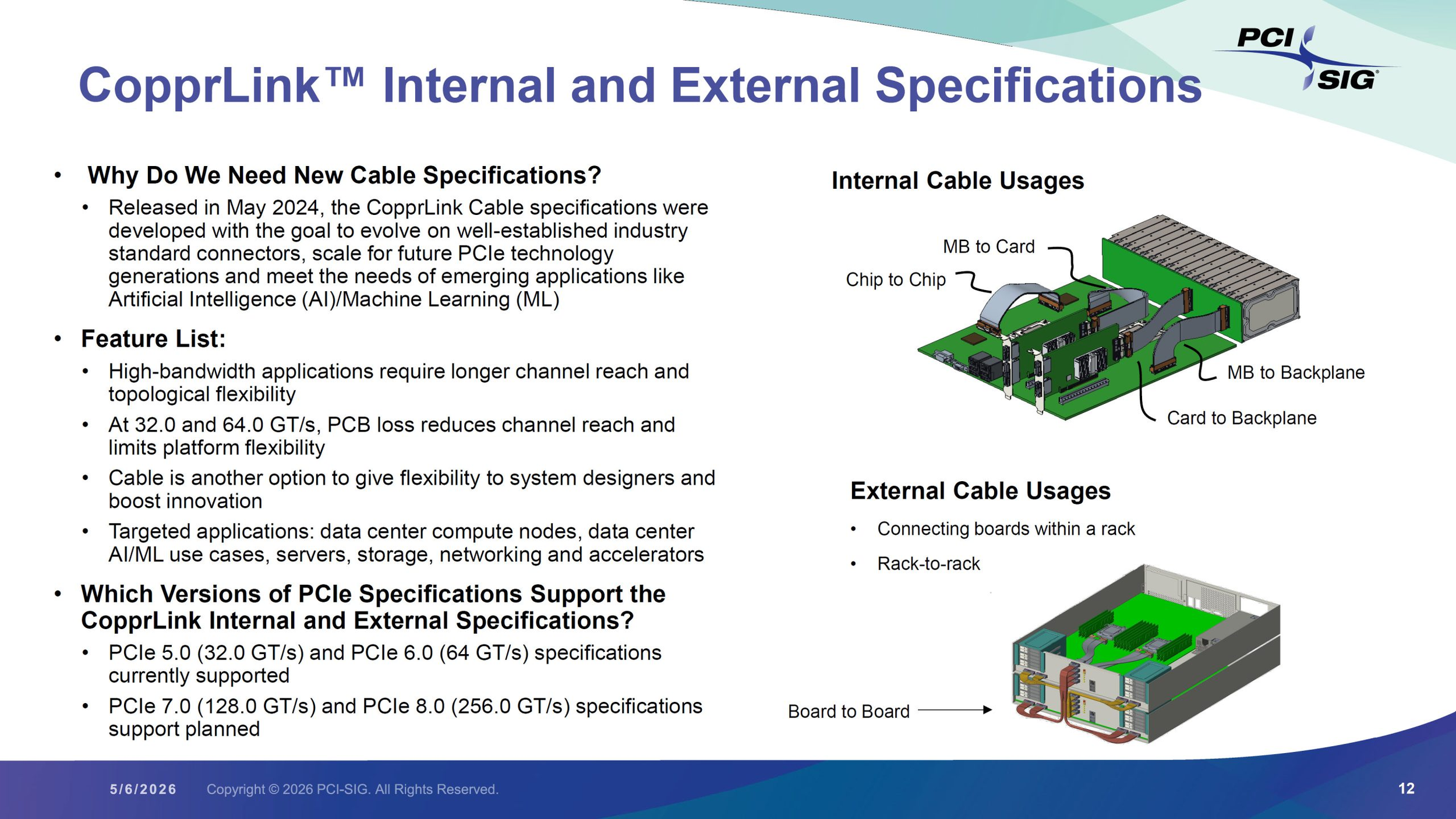

That is really the larger story here. PCIe 8.0 is not just about making add-in cards or SSDs faster on paper. It is about preparing the interconnect layer for systems where GPUs, accelerators, smart NICs, CPUs, and storage all need to exchange huge volumes of data with far less room for bottlenecks. In the AI era, raw compute keeps climbing, but system balance increasingly depends on how quickly data can move between those components. PCI-SIG’s messaging around AI and data centers suggests that this is the pressure point PCIe 8.0 is meant to address, although that reading is an inference from PCI-SIG’s stated target markets and bandwidth goals rather than a direct statement.

It is also worth keeping the timeline in perspective. This is draft 0.5, not the finished specification. PCI-SIG describes it as the official first draft and says it incorporates member feedback received after the draft 0.3 release in September 2025. So while the bandwidth targets are now public and the roadmap remains intact, actual ecosystem products based on full PCIe 8.0 adoption are still several years away. The immediate significance is not that PCIe 8.0 hardware is around the corner, but that the standards body has now put the next major bandwidth milestone firmly on the map.

For the broader industry, that is a meaningful signal. AI infrastructure is moving so quickly that even PCIe 7.0 is no longer the end of the planning horizon. PCIe 8.0 shows that the next generation of server interconnect is already being defined for an environment where 1 TB/s-class bandwidth is no longer excessive, but increasingly necessary.
