NVIDIA’s Rubin CPX Disappears From the Roadmap as Groq LPUs Take Over Inference Duties, With a Possible Return in 2028

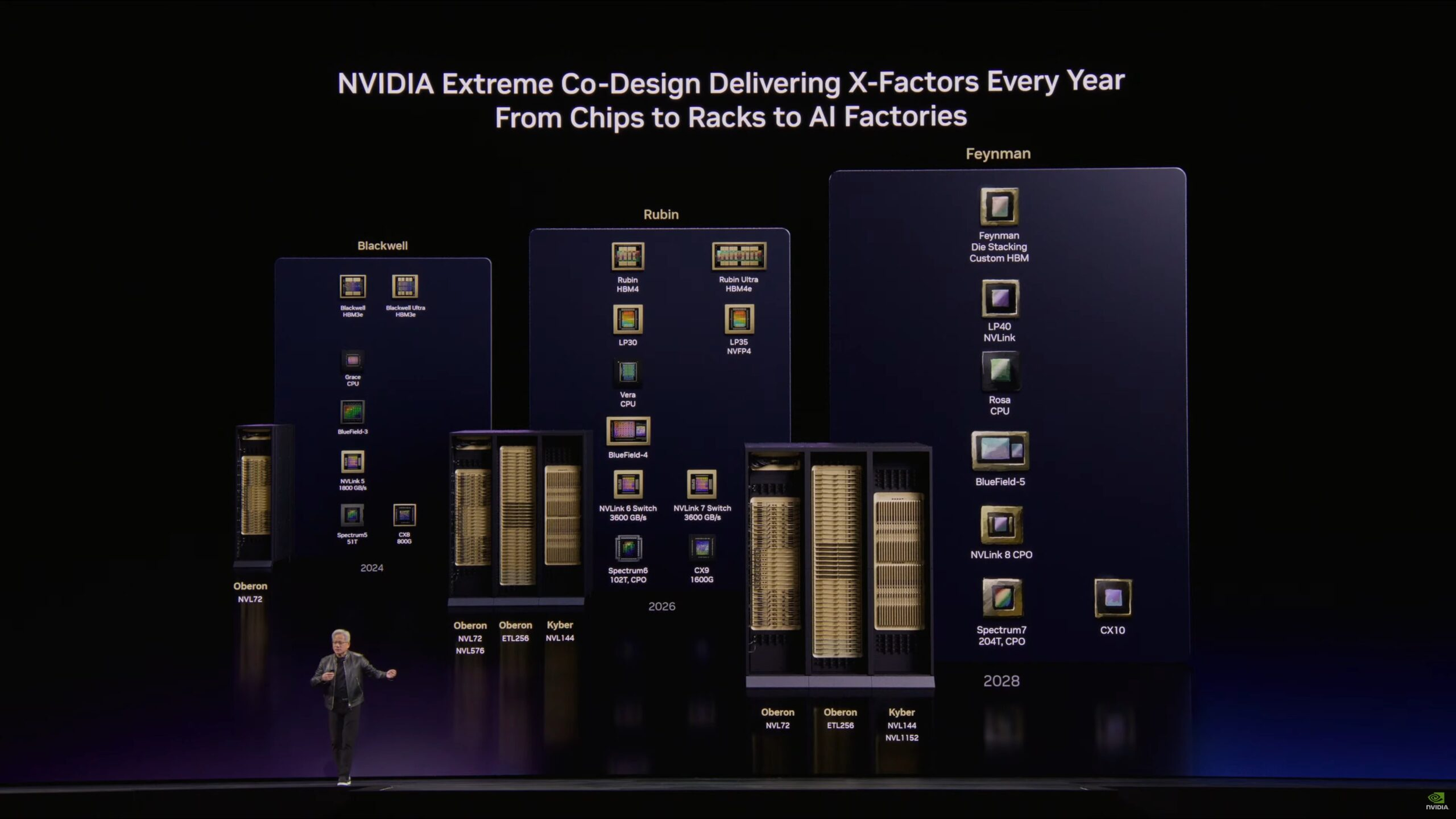

NVIDIA’s Rubin CPX has quietly fallen off the company’s public roadmap, and that absence is now looking much more than accidental. According to a report from ComputerBase, NVIDIA VP and General Manager Ian Buck confirmed during a roundtable that Rubin CPX is not coming as part of the Rubin generation after all. The concept itself is not dead, but it has effectively been pushed aside for now and could potentially reappear with the Feynman generation, which is currently expected in 2028.

That is a meaningful shift because Rubin CPX had previously been presented as part of NVIDIA’s broader push into inference focused silicon. ComputerBase notes that the chip was originally introduced around 6 months ago and was designed for very high inference throughput, particularly in the FP4 space, with the earlier concept centered around a rack focused solution using GDDR7. But when Jensen Huang presented the Rubin lineup at GTC 2026, CPX was nowhere to be seen. Buck’s explanation makes the situation much clearer: NVIDIA has changed its short term priorities and no longer sees Rubin CPX as the right answer for the current phase of inference demand.

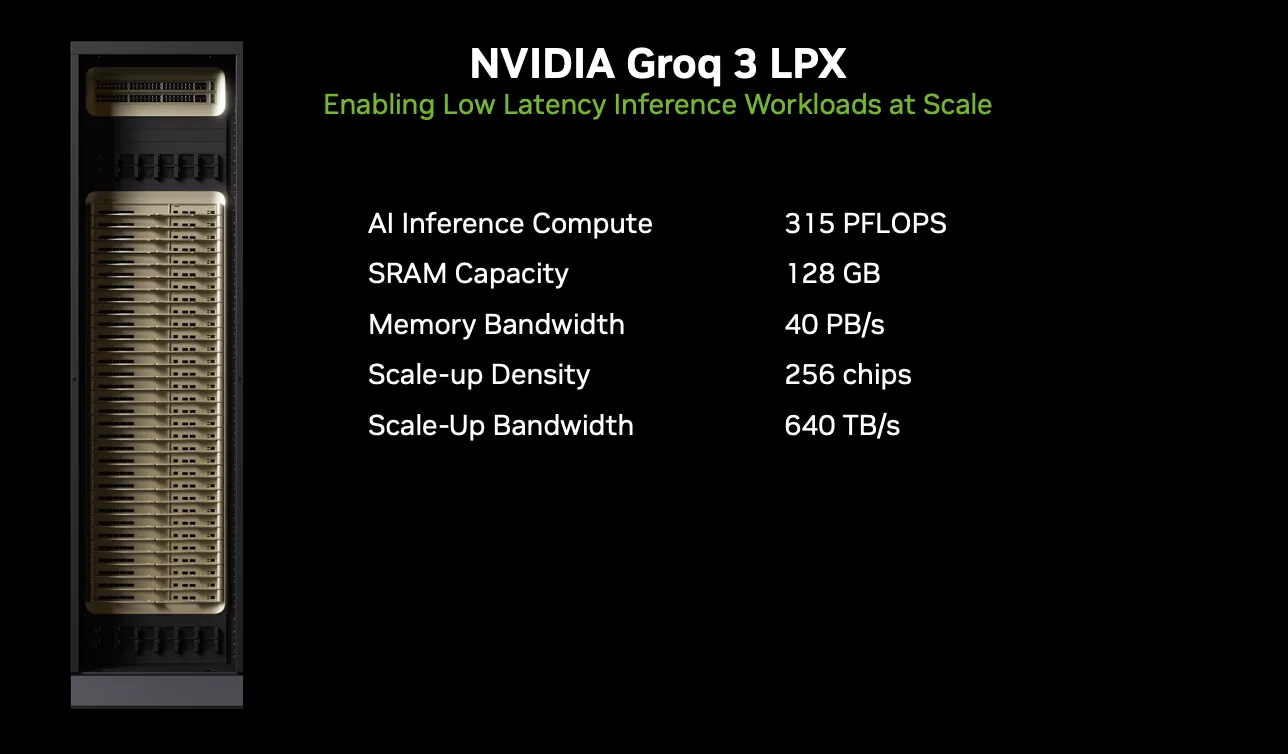

The strategic reason appears to be tied to how inference workloads are evolving. Reuters reported from GTC that NVIDIA is now splitting inference into 2 major stages, with Vera Rubin handling the prefill stage and Groq based silicon handling decode, the stage where responses are generated in real time. ComputerBase adds that Buck specifically pointed to faster token generation as the near term focus, with Groq’s LPU architecture better suited for that role than the now shelved CPX concept. In practical terms, NVIDIA seems to have decided that decode performance and ultra low latency are the more urgent battlegrounds right now, and that makes Groq a more attractive fit for the current market than a Rubin era CPX product.

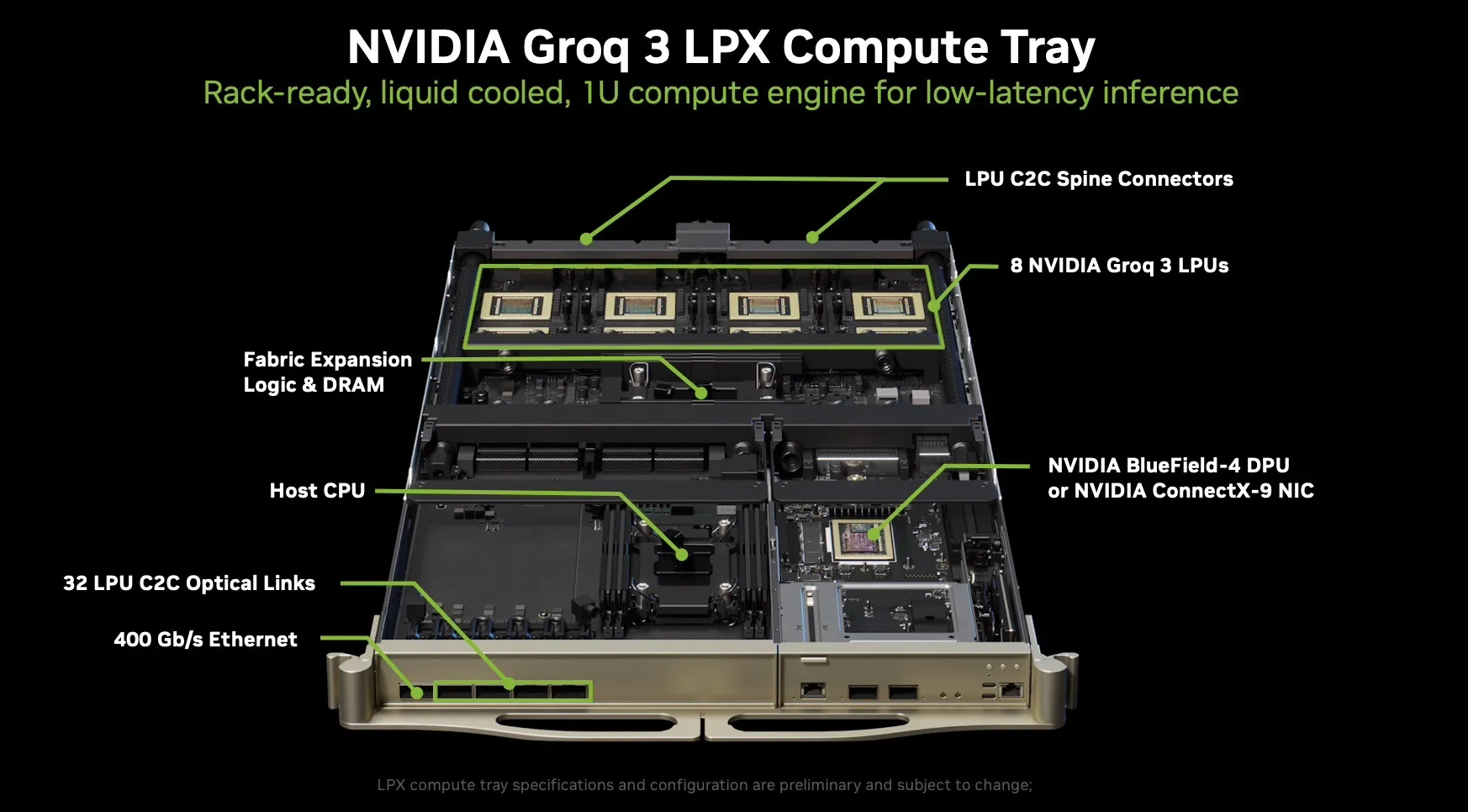

That also explains why the Groq powered LPX rack has suddenly become so important inside NVIDIA’s inference strategy. Reuters says NVIDIA unveiled a system built on technology licensed from Groq as part of a major deal announced in December, with Groq chips positioned for decode while Rubin handles prefill. ComputerBase further describes Groq’s LPU approach as deterministic and built around very low latency execution, supported by a large on chip SRAM pool. This architecture gives NVIDIA a ready made route into the fast response, real time AI segment without having to force Rubin CPX into the lineup before it is commercially or technically ready.

For the broader roadmap, the interesting part is that Feynman now looks like the earliest possible return point for the CPX idea. Reuters reported that NVIDIA showed the Feynman roadmap at GTC and that the architecture is expected in 2028, following Rubin Ultra. That lines up with Buck’s comments to ComputerBase that the concept may come back in the next generation rather than the current one. If that happens, it may not look exactly like the original design. Industry reporting around the roadmap change suggests NVIDIA has been reconsidering parts of the memory approach, which would make sense given how quickly inference hardware priorities are shifting across the market.

From a business perspective, this is another sign that NVIDIA is moving aggressively to protect its lead in inference rather than just training. Jensen Huang said at GTC that the inference inflection has arrived, and Reuters reported that NVIDIA now sees its AI chip revenue opportunity reaching at least 1 trillion dollars through 2027. In that context, removing Rubin CPX from the near term roadmap does not look like retreat. It looks more like a fast portfolio correction, with NVIDIA choosing the product mix it believes can win right now in the highest growth part of the AI market.

For gamers and the wider hardware space, there is also a side angle worth watching. A Rubin era CPX product would have consumed advanced memory resources for AI infrastructure, so its removal could at least modestly reduce one point of pressure in the already strained high performance memory ecosystem. That does not suddenly solve supply issues, but it is another reminder that every roadmap change in AI now has ripple effects beyond the data center. For now, Rubin CPX is off the board, Groq LPUs are filling the gap, and Feynman has become the place to watch for whether NVIDIA revives the concept in a stronger form.

Do you think NVIDIA made the right call by prioritizing Groq LPUs over Rubin CPX, or should it have kept both tracks moving at the same time?