NVIDIA’s Nemotron 3 Super Tops Open Source Enterprise AI Benchmark, Beating DeepSeek and GPT OSS

NVIDIA is adding software momentum to its already dominant AI hardware story, with Nemotron 3 Super now taking the top spot in the open-source category of the EnterpriseOps Gym leaderboard. The benchmark evaluates agentic AI systems across 1,150 expert-curated tasks in 8 enterprise domains using 512 functional tools in fully interactive environments, making it one of the more practical, workflow-focused tests now circulating in the AI space.

That result matters because EnterpriseOps Gym is not a lightweight, chatbot-style benchmark. The project describes itself as a large-scale enterprise agent benchmark built around long-horizon planning, persistent state changes, policy-governed workflows, and cross-domain coordination. In its own summary, the benchmark says even the top overall model reaches only 37.4 percent success, which helps explain why topping the open-model side of this leaderboard carries more weight than leading a simple static knowledge test.

Benchmarks should reflect real-world performance.

That’s why we’re excited to share that Nemotron 3 Super has topped the open source category on the EnterpriseOps-Gym leaderboard.

This agentic gauntlet evaluates performance across 1,150 tasks in fully interactive environments… pic.twitter.com/A2y5hlSMKd

NVIDIA AI (@NVIDIAAI), May 4, 2026

NVIDIA’s Nemotron 3 Super is not a small model trying to punch above its class. NVIDIA describes it as a Mixture of Experts hybrid Mamba-Transformer model with 120B total parameters and 12B active parameters, released in March 2026 as part of the Nemotron 3 family. The company says the model was designed for complex multi-agent use cases such as software development and cybersecurity triage, while also delivering more than 5x the throughput of the previous Nemotron Super and supporting a native 1M-token context window.
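To put the 120B total and 12B active figures in perspective, here is a minimal Python sketch of the arithmetic. The function name `active_fraction` is purely illustrative, and the calculation only restates the two parameter counts quoted above; it says nothing about how NVIDIA actually routes tokens to experts.

```python
def active_fraction(total_params: float, active_params: float) -> float:
    """Share of the model's parameters used for any single token."""
    if total_params <= 0 or not 0 <= active_params <= total_params:
        raise ValueError("expected 0 <= active_params <= total_params, total_params > 0")
    return active_params / total_params


# Figures quoted by NVIDIA for Nemotron 3 Super: 120B total, 12B active.
share = active_fraction(120e9, 12e9)
print(f"Active per token: {share:.0%} of all parameters")
```

In other words, roughly one tenth of the weights take part in each token’s forward pass, which is the basic reason a sparse model of this size can be cheaper to serve than a dense one with the same total parameter count.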

The architecture is a big part of why NVIDIA is pushing this model so aggressively. According to NVIDIA, Nemotron 3 Super uses Latent MoE to call 4x as many expert specialists for the same inference cost by compressing tokens before they reach the experts. It also adds multi-token prediction, which reduces generation time for long sequences through native speculative decoding. NVIDIA is positioning the model not just as another large open model, but as a more efficient reasoning engine built for high-volume agentic workloads.
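For readers unfamiliar with speculative decoding, the general idea can be shown with a toy Python sketch. This is not NVIDIA’s implementation: the article says Nemotron 3 Super drafts tokens natively through multi-token prediction, whereas this sketch assumes a separate, hypothetical `draft` function, uses greedy verification, and treats both models as plain functions from a token list to the next token. All names here are illustrative.

```python
from typing import Callable, List

Token = int
NextToken = Callable[[List[Token]], Token]  # context -> next token (greedy)


def greedy_decode(target: NextToken, prompt: List[Token], max_new: int) -> List[Token]:
    """Baseline: one target-model call per generated token."""
    out = list(prompt)
    for _ in range(max_new):
        out.append(target(out))
    return out


def speculative_decode(
    target: NextToken, draft: NextToken, prompt: List[Token], max_new: int, k: int = 4
) -> List[Token]:
    """Draft up to k tokens cheaply, then keep the prefix the target agrees with."""
    if k < 1:
        raise ValueError("k must be at least 1")
    out = list(prompt)
    limit = len(prompt) + max_new
    while len(out) < limit:
        # 1) The cheap drafter proposes a short run of tokens.
        proposal: List[Token] = []
        for _ in range(min(k, limit - len(out))):
            proposal.append(draft(out + proposal))
        # 2) The target checks every proposed position. A real system does this
        #    in a single batched forward pass, which is where the speedup comes from.
        for i, tok in enumerate(proposal):
            expected = target(out + proposal[:i])
            if tok != expected:
                out.extend(proposal[:i])  # accept the agreed prefix
                out.append(expected)      # replace the first mismatch
                break
        else:
            out.extend(proposal)          # every drafted token was accepted
    return out


# Toy models: the target counts upward mod 10; the drafter is sometimes wrong.
def toy_target(ctx: List[Token]) -> Token:
    return (ctx[-1] + 1) % 10


def toy_draft(ctx: List[Token]) -> Token:
    return 0 if ctx[-1] % 3 == 2 else (ctx[-1] + 1) % 10


result = speculative_decode(toy_target, toy_draft, [0], max_new=6)
print(result == greedy_decode(toy_target, [0], max_new=6))
```

The point of the design is that the output matches what the target model would have produced on its own under greedy decoding; the drafter only changes how many expensive verification steps are needed, not the result.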

NVIDIA’s own Nemotron research page also claims the model achieves up to 2.2x and 7.5x higher inference throughput than GPT OSS 120B and Qwen3.5 122B respectively in one tested configuration, while maintaining higher or comparable accuracy across a diverse set of benchmarks. That does not replace independent testing, but it does show how central efficiency is to NVIDIA’s pitch. Nemotron 3 Super is being marketed as both a capability play and a throughput play, which fits neatly with NVIDIA’s broader strategy of tying model design directly to its AI infrastructure stack.

On openness, NVIDIA is being unusually explicit. Its Nemotron site says the company publishes model weights, training datasets, and techniques, and directly states that Nemotron models are “truly open source.” NVIDIA also says Nemotron models are intended to be openly available for developers to run and modify, including in production environments. That matters because the open model conversation has become increasingly blurred between open weights, partially open releases, and fully closed systems with public APIs.

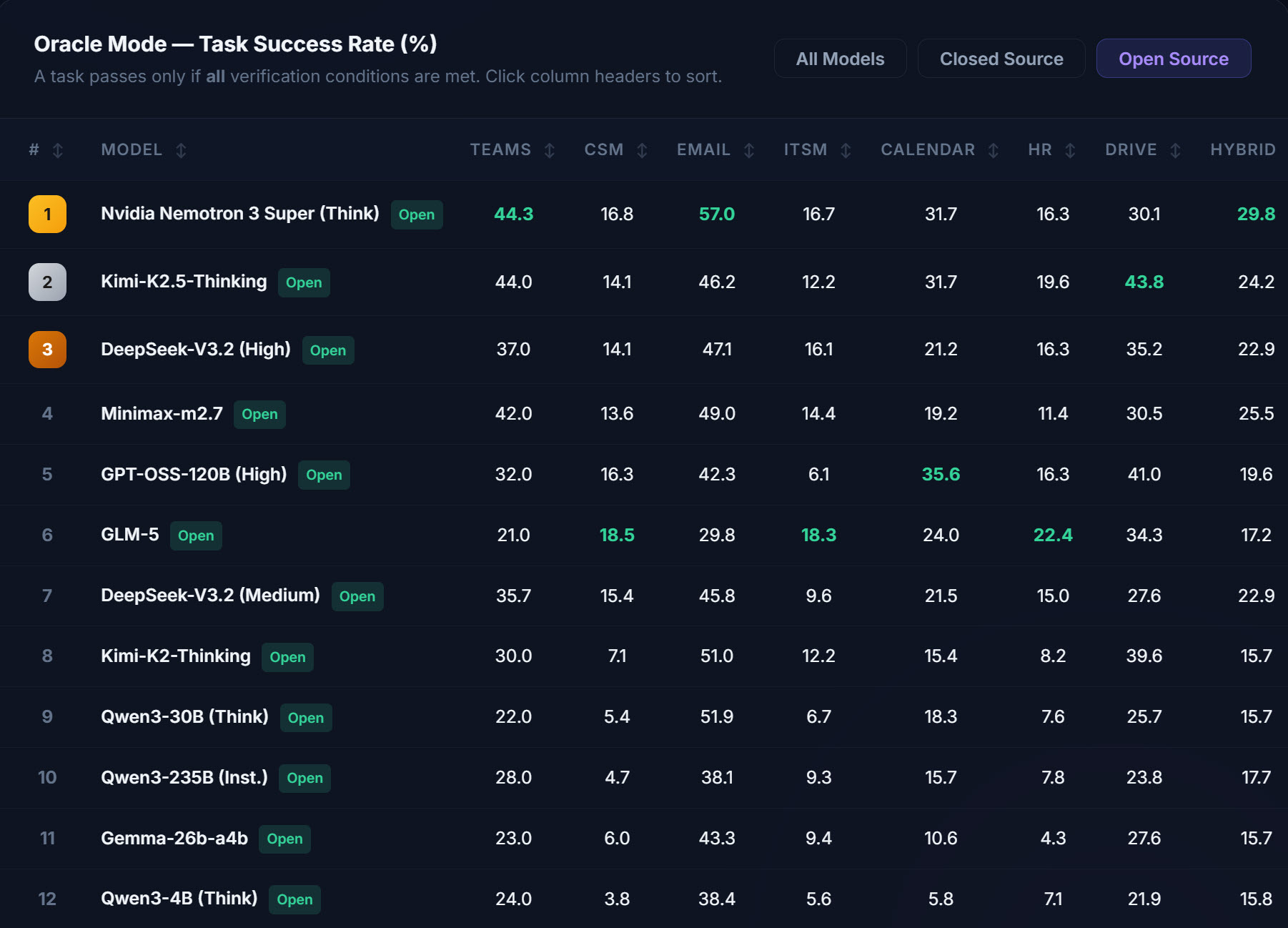

As for the new leaderboard result itself, NVIDIA’s AI account said Nemotron 3 Super topped the open-source category on EnterpriseOps Gym. Multiple reports summarizing the public leaderboard say the model posted an average score of 27.3 and ranked ahead of Kimi K2.5 in second, DeepSeek v3.2 in third, and GPT OSS 120B in fifth. I was able to verify NVIDIA’s top-rank claim from NVIDIA’s own public post and the benchmark site. The exact ranking positions and the 27.3 figure, however, were not directly readable from the benchmark page text I accessed, so those specific placements should be treated as reported figures rather than independently confirmed ones.

Even with that caveat, the result is strategically important. NVIDIA has spent years building the case that it is not just the dominant GPU vendor for AI, but a full stack AI company spanning silicon, interconnect, software libraries, inference frameworks, and now increasingly competitive foundation models. Nemotron 3 Super feeds directly into that narrative. The more NVIDIA can show strong open model performance in practical agent benchmarks, the easier it becomes to argue that customers choosing NVIDIA are buying into a complete AI platform rather than just a box of accelerators.

NVIDIA’s broader Nemotron lineup also reinforces that strategy. The company describes Nano, Super, and Ultra as models aimed at different reasoning workloads, while the larger Nemotron family now spans multimodal, reasoning, RAG, and safety applications. In that sense, Nemotron 3 Super topping an enterprise agent leaderboard is not an isolated win. It is part of a broader attempt to show that NVIDIA can compete at the model layer just as aggressively as it does in the chip market.

For the wider open AI ecosystem, this is another sign that the leaderboard battle is becoming more crowded and more practical. It is no longer just about who can post the highest reasoning score on a narrow test set. Enterprise agent benchmarks like EnterpriseOps Gym are starting to matter more because they test whether models can actually coordinate tools, systems, and workflows under realistic constraints. On that front, NVIDIA now has a result it can point to with confidence, and it will likely use it hard.

What do you think matters more for open models now: benchmark leadership, real-world deployment cost, or long-term ecosystem openness?