NVIDIA Looks to Space as AI Data Center Pressure Grows on Earth, but Orbital Compute Is Still a Long Game

NVIDIA is now openly treating space as a serious extension of its AI infrastructure roadmap rather than a science fiction concept. The company has announced a dedicated space computing push and is working with Starcloud, Planet Labs, Kepler Communications, Firefly Aerospace, Aetherflux, Axiom Space, and Sophia Space on platforms for on-orbit AI processing, geospatial intelligence, autonomous operations, and, eventually, orbital data-center-class workloads. The shift comes as AI infrastructure on Earth faces mounting scrutiny over its power and water consumption and its land use. That scrutiny makes orbital compute an increasingly attractive long-term concept, even though it remains far from mainstream deployment today.

NVIDIA just quietly partnered with 5 space companies.

This is one of the most important moves in tech right now.

They are officially taking AI infrastructure to orbit.

Here are the 5 names that could define the next decade:

(The Assembly, @InTheAssembly, May 11, 2026)

The reason this idea is gaining traction is not hard to understand. AI data centers on Earth are getting larger, more power-hungry, and more politically sensitive. In its Electricity 2026 outlook, the International Energy Agency identifies data centers as one of the major forces pushing global electricity demand higher through 2030. Its Energy and AI analysis also projects that, in a faster-growth scenario, global data center electricity demand could exceed 1,700 TWh by 2035. In the United States, Reuters reported this week that the Energy Information Administration expects power use to hit new records in 2026 and 2027, partly because of demand from AI and crypto data centers.

Water use and community impact are becoming part of the problem too. In its analysis of U.S. data center growth, the World Resources Institute says that mid-sized facilities can use up to 300,000 gallons of water per day, while large ones can consume as much as 5 million gallons daily. Brookings similarly noted in its piece on AI, data centers, and water that water use for cooling is becoming a major policy issue as AI infrastructure expands. Local backlash is no longer theoretical either. Reporting from Politico and wider follow-up coverage shows communities increasingly pushing back on the strain these facilities can place on water systems.

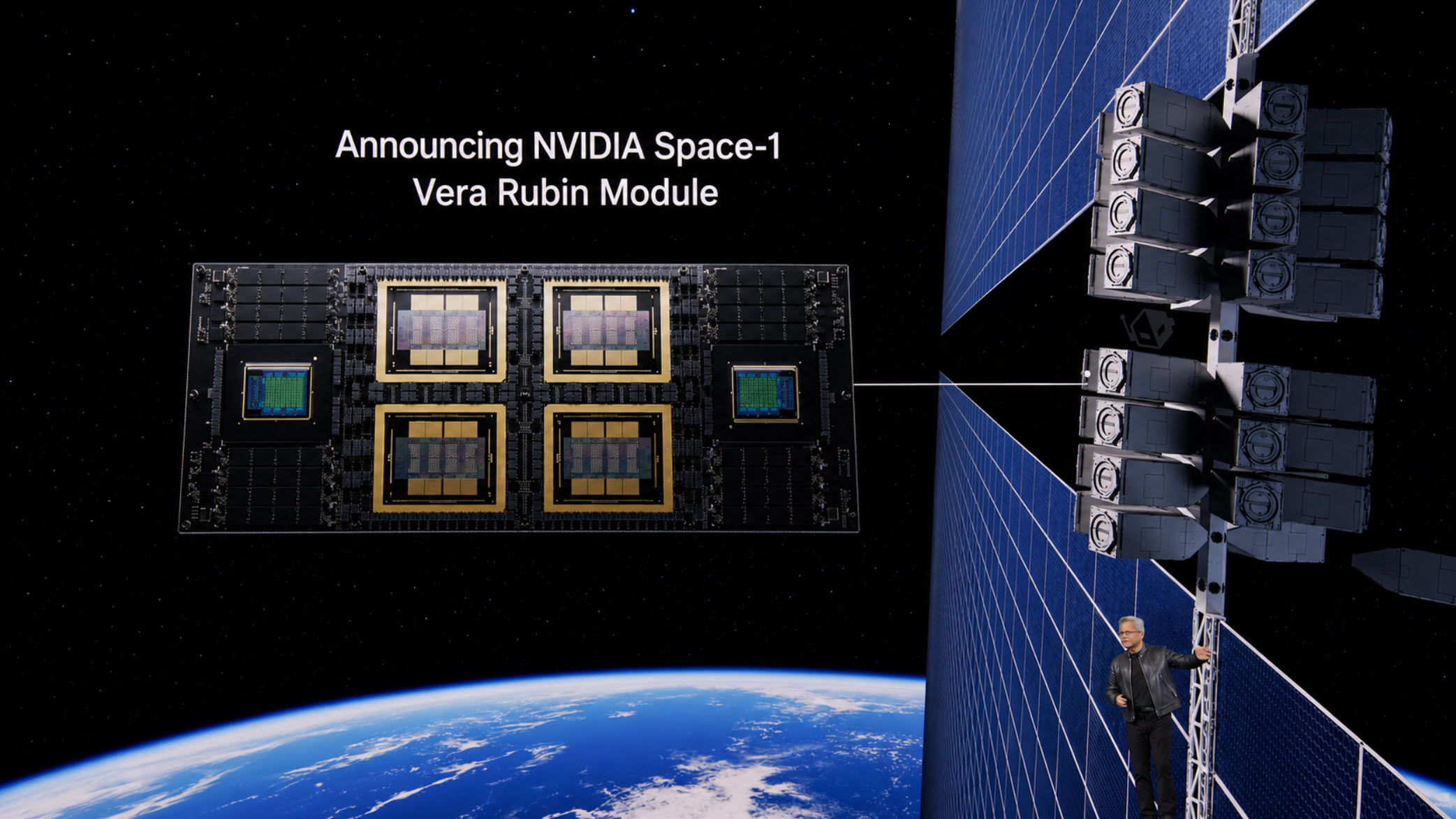

That is the backdrop to NVIDIA’s more aggressive space narrative. In March, the company officially announced NVIDIA Space Computing, introducing the Space 1 Vera Rubin Module as a future platform for data-center-class AI in orbit. NVIDIA says the module is designed for size-, weight-, and power-constrained environments and can deliver up to 25 times more AI compute per GPU than the H100 for space-based inference and orbital data centers. It also confirmed that Aetherflux, Axiom Space, Kepler Communications, Planet Labs, Sophia Space, and Starcloud are already using NVIDIA-accelerated platforms for next-generation space missions.

It is important, though, to separate what is real now from what is still aspirational. NVIDIA has announced the Space 1 Vera Rubin Module and publicly tied it to orbital AI and orbital data center use cases, but the company also says the module will be available “at a later date.” That makes this a roadmap and ecosystem move, not a live commercial orbital data center deployment. The platforms available today for related space and ground use cases are IGX Thor, Jetson Orin, and the RTX PRO 6000 Blackwell Server Edition.

Starcloud is one of the clearest examples of where this vision is headed. NVIDIA highlighted the startup in an official 2025 blog post, describing its goal of building space-based data centers that could eventually offer lower energy costs and ease pressure on terrestrial energy supplies. Starcloud’s own public material says it is pursuing orbital data centers that use continuous solar power and radiative cooling. It pitches them as a route to gigawatt-scale compute without many of the permitting and infrastructure constraints found on Earth. Not every specific claim repeated in secondary reporting appears on Starcloud’s site, but the company’s materials make clear that orbital data center design is central to its business plan.

NVIDIA’s partner list also shows that this is not only about giant orbital server farms. More immediate, practical steps are already underway. Planet is using NVIDIA systems to accelerate geospatial intelligence, and Kepler is using Jetson Orin for smarter in-orbit network management. Sophia Space is embedding Jetson Orin into modular hosted computing platforms, and Firefly Aerospace announced in April that it is integrating NVIDIA Jetson into its Ocula service for on-orbit lunar image processing. This suggests the first commercial wins are likely to come from edge inference, onboard analytics, and autonomous mission operations before full orbital AI cloud infrastructure arrives.

So the core thesis is not that Earth can no longer sustain AI data centers at all, which would overstate the situation. The stronger and more accurate reading is that the next wave of AI demand is making terrestrial expansion harder, more expensive, and more contested. That pressure is pushing companies like NVIDIA to explore space as a strategic long-term overflow layer for compute. It does not mean hyperscale AI is leaving Earth anytime soon. It means the industry is beginning to treat orbit as a future compute frontier worth serious R&D attention.

There is also still a huge execution gap between concept and reality. Launch economics, hardware survivability, radiation tolerance, orbital servicing, data transfer, and sheer deployment logistics remain major barriers. Even as reusable launch systems improve the economics, getting hundreds of thousands of high-end AI accelerators into orbit is a very different challenge from deploying them in Arizona, Texas, or Taiwan. Orbital data centers would also not eliminate the need for Earth-based infrastructure, because training, storage, networking, and user-facing services would still depend heavily on terrestrial systems. Judging by NVIDIA’s current product mix and partner use cases, this is likely one reason its strategy centers on hybrid space-to-ground computing rather than a total replacement model.

Still, the direction is real. NVIDIA has moved from talking about AI for satellites to talking about AI infrastructure in orbit, and that is a meaningful escalation. If space-based solar power, radiative cooling, and onboard inference keep improving, orbital compute could eventually become a serious niche for geospatial, defense, scientific, and edge AI workloads before it ever becomes a true hyperscale training frontier. For now, the orbital AI data center dream is still at an early stage. But it is no longer just a dream, and NVIDIA has already placed a visible strategic bet on it.

Which do you think is more likely to arrive first: AI-assisted satellite analytics at scale, or true orbital data centers running major AI inference workloads?