NVIDIA Expands Open Agentic AI Push With Nemotron 3 Nano Omni as Foxconn and Palantir Adopt and Oracle Evaluates the New Multimodal Model

NVIDIA has officially introduced Nemotron 3 Nano Omni, a new open multimodal model designed to unify video, audio, image, and text reasoning in one system instead of relying on a separate model chain for each modality. In its announcement on the NVIDIA Blog, the company says the model targets enterprise and developer workflows that need faster, more accurate agentic AI with greater deployment flexibility and lower inference cost. NVIDIA also says the model is available starting April 28, 2026, on Hugging Face, OpenRouter, build.nvidia.com, and more than 25 partner platforms.

One important correction is worth making immediately. The model name is Nemotron 3 Nano Omni, not “Neomotron.” NVIDIA consistently uses the Nemotron branding across both its main announcement and its technical blog.

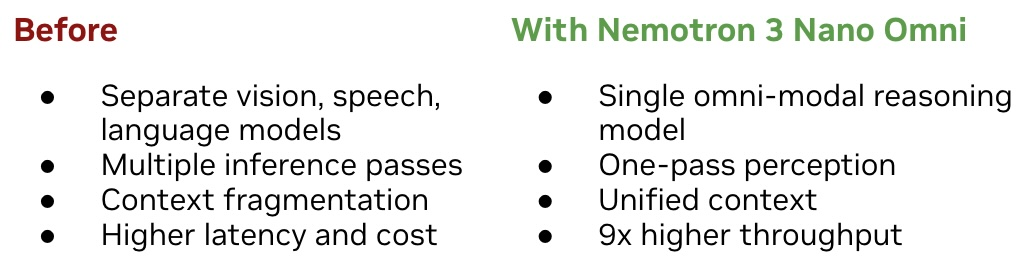

The main pitch behind Nemotron 3 Nano Omni is efficiency. NVIDIA says the model delivers up to 9x higher throughput than other open omni models at the same interactivity, which it attributes to combining vision and audio encoders with a single 30B-A3B hybrid mixture-of-experts (MoE) architecture, meaning roughly 30 billion total parameters with about 3 billion active per token. Rather than handing work from one model to another, Nemotron 3 Nano Omni is positioned as the multimodal perception and context layer inside agentic systems, which should reduce inference hops, lower orchestration complexity, and improve cross-modal consistency.
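To make the "A3B" part of the name concrete: in a mixture-of-experts layer, a router selects a small subset of experts for each token, so only a fraction of the total parameters does work on any given token. The following is a toy top-k router in plain Python, offered only as an illustration of the general MoE technique. The expert count, the top-k value, the scalar "experts", and the function names are hypothetical; this is not Nemotron's actual configuration, and it ignores the "hybrid" parts of the architecture entirely.

```python
import math
import random

def softmax(xs):
    # Numerically stable softmax over a list of router logits.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(logits, k):
    # Return (expert_index, gate_weight) for the k highest-probability experts,
    # with gate weights renormalized to sum to 1 over the chosen experts.
    probs = softmax(logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]

def moe_forward(token, experts, router_logits, k):
    # Toy MoE layer: only the k routed experts run, and their outputs are mixed
    # by gate weight. Router logits are passed in directly here instead of being
    # computed by a learned router, to keep the sketch small.
    routes = top_k_route(router_logits, k)
    output = sum(w * experts[i](token) for i, w in routes)
    return output, [i for i, _ in routes]

def active_fraction(total_params, active_params):
    # Share of parameters that participate in computing one token.
    return active_params / total_params

if __name__ == "__main__":
    random.seed(0)
    num_experts, k = 8, 2  # hypothetical sizes for illustration only
    # Each toy "expert" just scales its scalar input by a different factor.
    experts = [lambda x, s=s: s * x for s in range(1, num_experts + 1)]
    logits = [random.gauss(0, 1) for _ in range(num_experts)]
    out, used = moe_forward(1.0, experts, logits, k)
    print(f"experts used: {used}, output: {out:.3f}")
    # Reading "30B-A3B" as ~30B total parameters with ~3B active per token:
    print(f"active fraction: {active_fraction(30e9, 3e9):.0%}")
```

The point of the design is that compute per token scales with the routed experts rather than the full parameter count, which is the general reason MoE models can offer high throughput for their size.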

NVIDIA is also making a stronger enterprise adoption argument around the launch. According to the company, businesses already adopting Nemotron 3 Nano Omni include Aible, Applied Scientific Intelligence, Eka Care, Foxconn, H Company, Palantir, and Pyler, while Dell Technologies, DocuSign, Infosys, K Dense, Lila, Oracle, and Zefr are evaluating it. That list gives the model a more credible rollout narrative than a typical research release, especially because it spans manufacturing, enterprise software, healthcare, and AI infrastructure. Foxconn and Palantir among the adopters, along with Oracle among the evaluators, are the highest-profile names, and they help NVIDIA frame this launch as an enterprise-scale push rather than a niche model drop.

Performance claims are another major part of the story. NVIDIA says Nemotron 3 Nano Omni tops 6 leaderboards for complex document intelligence, video understanding, and audio understanding, and its technical post adds that it leads on benchmarks such as MMLongBench-Doc, OCRBench v2, WorldSense, DailyOmni, and VoiceBench. NVIDIA also says MediaPerf results show the model delivering the highest throughput on every task it was tested on and the lowest inference cost for video-level tagging. Because these claims come directly from NVIDIA's public materials, they should be read as vendor-presented benchmark positioning rather than conclusions from a neutral third-party review.

In practical terms, NVIDIA is pitching the model for 3 main workflow types. The first is computer-use agents, where Nemotron 3 Nano Omni acts as the visual perception loop for software agents that navigate interfaces and track screen state over time. The second is document intelligence, where the model handles charts, tables, screenshots, and mixed-media inputs in a single reasoning flow. The third is audio and video understanding, where the model keeps context across what was shown, said, and documented instead of splitting that work into disconnected summaries. NVIDIA points to its Hugging Face explainer for the throughput comparison, while its technical blog covers these use cases in more depth.

The enterprise angle becomes more interesting when viewed through NVIDIA's broader strategy. Nemotron 3 Nano Omni is not being presented as a giant standalone reasoning model that replaces everything else. NVIDIA instead positions it as a multimodal sub-agent that can work alongside Nemotron 3 Super for higher-frequency execution, Nemotron 3 Ultra for more complex planning, or even proprietary models from other vendors. That modular framing fits the current direction of enterprise AI, where companies increasingly want specialized components they can plug into larger agentic workflows instead of one oversized general model doing every job. This reading is an inference from NVIDIA's published deployment framing rather than something the company states outright.
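The sub-agent framing can be pictured as a simple orchestration pattern: one perception model turns a bundle of mixed media into shared context in a single call, and a separate planner decides what to do with it. The following is a minimal sketch under that assumption. `Observation`, `PerceptionAgent`, `Planner`, `run_agent`, and `chained_pipeline_hops` are hypothetical stubs rather than any NVIDIA or partner API, no real model is called, and the hop count for a chained design (one model per modality plus a fusion step) is an illustrative assumption.

```python
from dataclasses import dataclass, field

@dataclass
class Observation:
    # Hypothetical container for one multimodal input bundle.
    video_frames: list = field(default_factory=list)
    audio_transcript: str = ""
    documents: list = field(default_factory=list)
    text: str = ""

class PerceptionAgent:
    """Stub standing in for an omni model: one call covers every modality present."""

    def __init__(self):
        self.calls = 0

    def perceive(self, obs: Observation) -> str:
        self.calls += 1  # a single inference hop, however many modalities are present
        parts = []
        if obs.video_frames:
            parts.append(f"video frames: {len(obs.video_frames)}")
        if obs.audio_transcript:
            parts.append(f"audio: {obs.audio_transcript}")
        if obs.documents:
            parts.append(f"documents: {len(obs.documents)}")
        if obs.text:
            parts.append(f"text: {obs.text}")
        return "; ".join(parts)

class Planner:
    """Stub standing in for a larger planning model, open or proprietary."""

    def plan(self, context: str, goal: str) -> list:
        return [f"goal: {goal}", f"context: {context}"]

def run_agent(obs, goal, perception, planner):
    context = perception.perceive(obs)  # multimodal perception sub-agent
    return planner.plan(context, goal)  # planning delegated to a different model

def chained_pipeline_hops(obs: Observation) -> int:
    # Assumed chained design: one model call per modality present, plus one fusion call.
    present = sum(bool(x) for x in (obs.video_frames, obs.audio_transcript,
                                    obs.documents, obs.text))
    return present + 1

if __name__ == "__main__":
    perception, planner = PerceptionAgent(), Planner()
    obs = Observation(video_frames=["f0", "f1"],
                      audio_transcript="quarterly numbers are up",
                      documents=["report"])
    for step in run_agent(obs, "summarize the meeting", perception, planner):
        print(step)
    print("perception hops:", perception.calls)
    print("chained pipeline hops:", chained_pipeline_hops(obs))
```

Swapping the `Planner` stub for a different model leaves the perception step untouched, which is the kind of plug-in modularity the framing above describes.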

The bigger takeaway is that NVIDIA is clearly trying to strengthen its position in open enterprise AI, not just AI hardware. By offering open weights, open datasets, open recipes, and broad deployment support from local systems to cloud and data center infrastructure, the company is pushing Nemotron as a controllable production path for customers that care about sovereignty, customization, and regulatory flexibility. If the efficiency and adoption claims hold up in real deployments, Nemotron 3 Nano Omni could become one of the more commercially relevant open multimodal models released this year.

Do you think open multimodal models like Nemotron 3 Nano Omni can seriously challenge proprietary enterprise AI stacks, or will the biggest customers still prefer closed ecosystems?