Goldman Sachs Sees Agentic AI Driving a Massive Token Boom, but Warns Bad Data Could Undercut the Payoff

Goldman Sachs is making a bold long-range call on agentic AI, arguing that software agents could become one of the biggest growth engines for AI infrastructure over the next decade. According to coverage citing the bank’s latest research, Goldman expects global token consumption to rise 24 times by 2030 versus 2026 levels, with total AI queries climbing from 5 billion in 2025 to 23 billion by 2030 as non-human agents take on a larger share of activity. The report highlighted by UDN says about 30% of those 2030 queries could come from agentic use cases rather than traditional human-prompted interactions.
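To put those multiples in annual terms, a back-of-the-envelope calculation helps. The sketch below assumes smooth compound growth between the endpoint years quoted above, which is a simplification the cited coverage does not itself make, and the function name is purely illustrative.

```python
def implied_annual_growth(multiple: float, years: int) -> float:
    """Constant yearly growth rate that compounds to `multiple` over `years`.

    Assumption: growth is spread evenly across the period.
    """
    return multiple ** (1 / years) - 1


# Token consumption: 24x between 2026 and 2030 (figures as reported).
token_growth = implied_annual_growth(24, 2030 - 2026)

# Total AI queries: 5 billion in 2025 to 23 billion in 2030 (figures as reported).
query_growth = implied_annual_growth(23 / 5, 2030 - 2025)

# About 30% of 2030 queries attributed to agentic use cases, in billions.
agentic_queries_2030 = 0.30 * 23

print(f"Implied token growth per year: {token_growth:.0%}")
print(f"Implied query growth per year: {query_growth:.0%}")
print(f"Agentic queries in 2030: {agentic_queries_2030:.1f} billion")
```

Under that even-growth assumption, token consumption would have to more than double every year (roughly 121% annually), while total queries would grow by roughly 36% a year, which illustrates how much of the forecast rests on tokens per query rising rather than on query counts alone.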

The logic behind that forecast is straightforward. Agentic AI systems do far more than answer a single prompt and stop. They monitor environments, verify outputs, call external tools, and continue operating in the background, which drives far heavier token use than a standard chatbot-style interaction. Goldman’s thesis is that this higher usage intensity could finally help justify the enormous capital spending now pouring into AI infrastructure, especially if falling compute costs continue to improve the economics of inference at scale. Goldman has already projected that hyperscaler AI capital spending could reach $527 billion in 2026, with upside beyond that if current trends persist.

The bank is reportedly even more bullish beyond 2030. The same research says token consumption could reach 55 times current levels by 2040 if enterprise-grade agents move from early experimentation into broader autonomous deployment. That is the key strategic assumption in Goldman’s outlook. Enterprise adoption is still at a relatively early stage, and even where companies are using agentic systems, many deployments are not yet fully autonomous. Goldman’s argument is that once those systems mature across workflows like supply chain management, programming, legal operations, and machine-to-machine coordination, token demand could expand much faster than many investors currently expect.

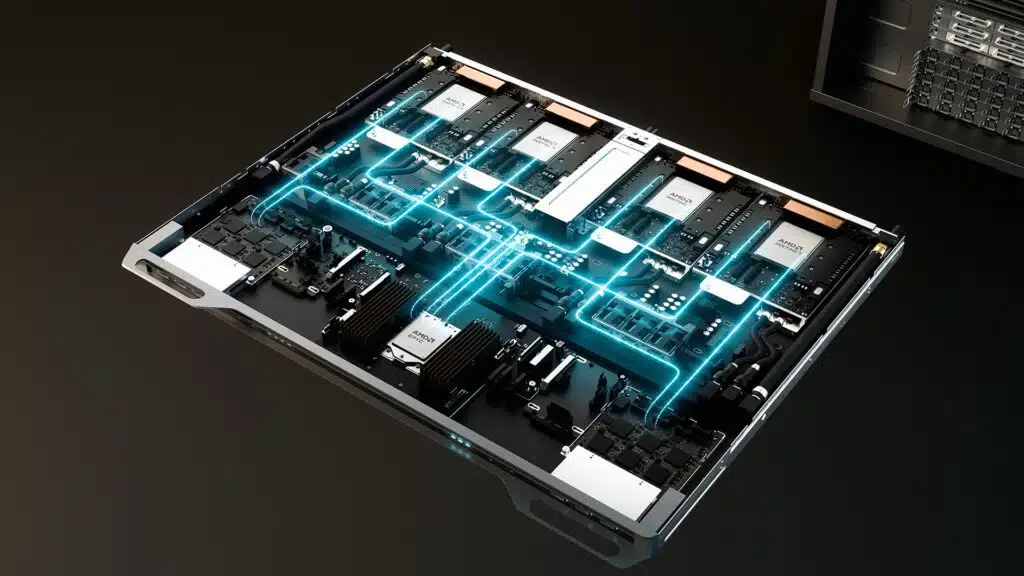

There is also a hardware angle that fits neatly with the broader market narrative around agentic AI. Recent Wall Street research has increasingly argued that the next wave of AI demand will widen spending beyond GPUs and put more emphasis on CPUs, memory, and other general-purpose compute layers. Reuters reported in April that Morgan Stanley expects agentic AI to widen chip spending beyond graphics processors, with CPUs and memory becoming more important as AI systems shift from simple generation to autonomous multistep coordination. That makes Goldman’s token consumption forecast especially relevant for companies across the full compute stack, not just NVIDIA.

Still, Goldman is not presenting a purely optimistic story. One of the most important warnings in the report is that bad or low-quality data could lead to substantial compute consumption without generating proportional value. That caveat matters because agentic systems are designed to act more independently, which means poor inputs, weak verification, or flawed enterprise data environments can scale inefficiency just as easily as they scale productivity. A future where agents burn through far more tokens is only attractive if those tokens are producing useful outcomes, not just more automated noise.

That warning also lines up with emerging academic evidence. A recent arXiv study on agentic coding tasks found that agent-based workflows can consume vastly more tokens than standard code reasoning or code chat, while higher token usage does not always translate into better accuracy. The paper also found large variation in token use from run to run, which reinforces the idea that raw usage growth alone does not guarantee efficient returns. In other words, Goldman may be right that agentic AI can unlock a new monetization phase for AI infrastructure, but the quality of orchestration, data, and execution will determine whether that demand is profitable or wasteful.

For the market, this is the real takeaway. Goldman Sachs is effectively saying that agentic AI could help transform AI from a capital-intensive buildout story into a sustained usage story, where token demand grows enough to support margins and justify ongoing infrastructure spending. But that upside comes with a clear condition. If enterprises feed weak data into autonomous systems, the result may be a lot more compute, a lot more cost, and not nearly enough business value.

Do you think agentic AI will become the true profit engine of the AI boom, or will data quality end up being the bottleneck that slows the whole story down?