JEDEC Pushes DDR5 MRDIMM to 12,800 MT/s as AI Servers Demand More Memory Bandwidth

JEDEC has announced a major step for the DDR5 MRDIMM ecosystem, moving the roadmap forward with updated interface logic and second-generation designs targeting up to 12,800 MT/s. That is a meaningful jump for server memory, especially as AI datacenters, cloud platforms, and high-performance computing systems continue to put more pressure on memory bandwidth and overall system efficiency. According to the JEDEC update, the organization has published a new DDR5 multiplexed rank data buffer standard, is preparing a matching clock driver standard, and says the MRDIMM Gen2 module standard is nearing completion.

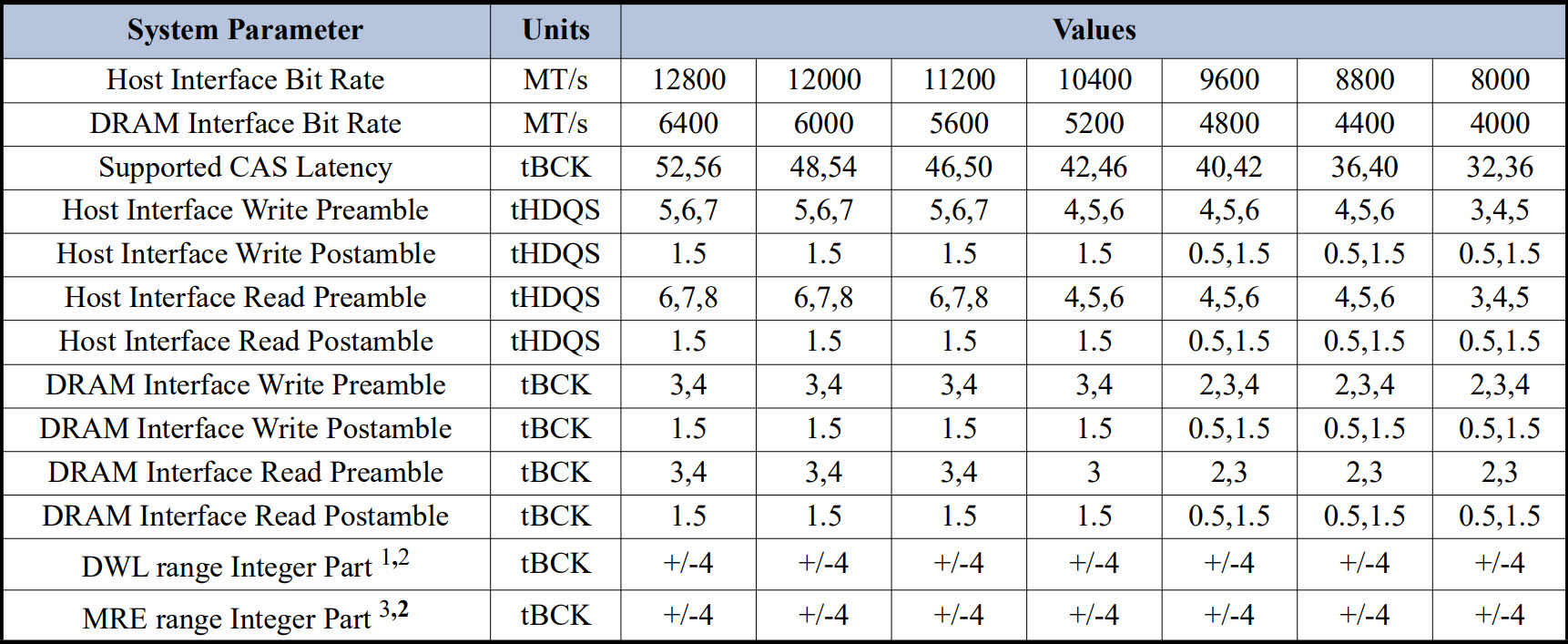

The most important performance milestone is the 12,800 MT/s target for Gen2 DDR5 MRDIMM raw card designs. Compared with the original Gen1 MRDIMM roadmap at 8,800 MT/s, that works out to roughly a 45% increase in transfer speed, giving datacenter platforms a much stronger path to scale memory bandwidth without immediately moving to an entirely new memory generation. JEDEC also confirmed that work on the Gen3 MRDIMM standard is already underway, which shows this is not a one-step update but part of a longer server memory roadmap built around rising bandwidth demand.
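The arithmetic behind that comparison is easy to check. The short Python sketch below reproduces the roughly 45% figure from the two transfer rates quoted above and, as a rough illustration only, converts each rate into a theoretical peak bandwidth per module. The 8-byte (64-bit) data width is an assumption made for this example, not a figure from the JEDEC announcement, and the function names are hypothetical. Real-world throughput will be lower than these peak numbers.

```python
# Transfer rates quoted in the article, in mega-transfers per second (MT/s).
GEN1_MTS = 8_800
GEN2_MTS = 12_800

# ASSUMPTION for illustration: 64 data bits (8 bytes) moved per transfer.
# This width is not stated in the JEDEC announcement.
ASSUMED_BUS_BYTES = 8


def percent_increase(old_rate: float, new_rate: float) -> float:
    """Relative increase from old_rate to new_rate, in percent."""
    return (new_rate - old_rate) / old_rate * 100.0


def peak_bandwidth_gbs(rate_mts: float, bus_bytes: int = ASSUMED_BUS_BYTES) -> float:
    """Theoretical peak bandwidth in GB/s (decimal gigabytes).

    MT/s times bytes per transfer gives MB/s; dividing by 1000 gives GB/s.
    """
    return rate_mts * bus_bytes / 1000.0


increase = percent_increase(GEN1_MTS, GEN2_MTS)
print(f"Gen1 -> Gen2 transfer rate increase: {increase:.1f}%")
print(f"Gen1 theoretical peak: {peak_bandwidth_gbs(GEN1_MTS):.1f} GB/s")
print(f"Gen2 theoretical peak: {peak_bandwidth_gbs(GEN2_MTS):.1f} GB/s")
```

Under that assumed width, the jump from 8,800 to 12,800 MT/s corresponds to a move from about 70 GB/s to about 102 GB/s of theoretical peak bandwidth per module.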

The standards progress behind that roadmap is equally important. JEDEC has published JESD82-552, also known as DDR5MDB02, which defines the next-generation multiplexed rank data buffer for MRDIMM architectures. It also says JESD82-542, the DDR5MRCD02 multiplexed rank registering clock driver standard, is expected soon. Together, these pieces are meant to strengthen signal integrity, timing control, and reliable operation as module bandwidth continues to scale higher.

From a market perspective, this matters because MRDIMM is built for the exact segment where memory pressure is rising fastest. Unlike mainstream desktop DDR5, MRDIMM is designed for server environments where feeding more data to high-core-count CPUs and AI accelerators can have a direct impact on throughput. Industry coverage of the JEDEC update points out that MRDIMM uses additional onboard logic, namely the multiplexed rank data buffer (MDB) and multiplexed rank registering clock driver (MRCD), to improve signal integrity and unlock much higher validated speeds on large-capacity server platforms, making it especially relevant for AI training, inference, and other bandwidth-hungry datacenter workloads.

This is also why JEDEC’s timing is notable. The organization is not just polishing an existing standard. It is strengthening the memory interface logic underneath MRDIMM while the industry keeps demanding more scalable server memory designs. In practical terms, that means the industry is trying to extend DDR5 further and faster in enterprise platforms rather than waiting for a clean generational reset. For hyperscalers and enterprise server vendors, that is a commercially attractive path because it raises bandwidth ceilings inside an already active ecosystem.

The bigger story here is that memory bandwidth is becoming even more central to datacenter competitiveness. Compute alone is not enough when AI systems are constantly starving for faster data movement. JEDEC’s latest MRDIMM push shows the industry understands that pressure clearly, and 12,800 MT/s now looks like the next important checkpoint in DDR5 server memory’s evolution.

What do you think: will MRDIMM become one of the most important memory technologies for the next AI server wave, or will the market push even faster toward entirely new memory standards?