AMD Highlights OpenClaw Performance on Ryzen AI MAX APUs and Radeon AI PRO GPUs

AMD is pushing further into the local AI agent conversation with a new guide to running OpenClaw on its latest high-memory AI PC and workstation hardware. In the newly published walkthrough, AMD details two reference-style configurations, RyzenClaw for Ryzen AI MAX+ systems and RadeonClaw for Radeon AI PRO graphics, and shares performance figures that position both platforms as serious local inference options for large-model and multi-agent workloads.

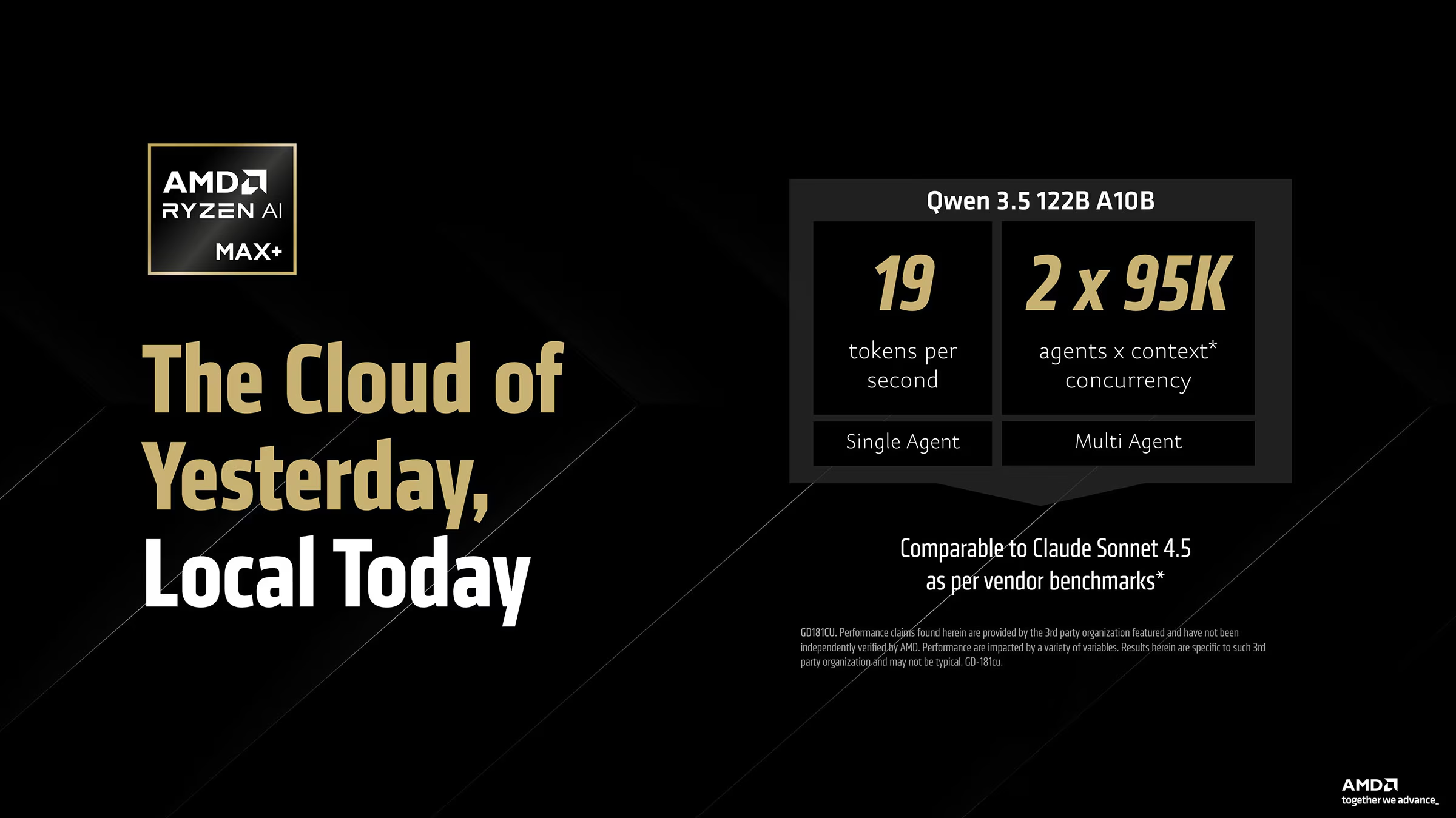

The most important part of AMD’s pitch is memory capacity. The company says Ryzen AI MAX+ platforms with 128 GB of unified memory can run models such as Qwen 3.5 122B A10B locally, and the guide specifically instructs owners of 128 GB systems to set Variable Graphics Memory to 96 GB in AMD Software before setup. That is a key advantage for this class of machine, because large local agent workloads are often limited not just by raw compute but by whether enough fast, accessible memory is available in a single compact system.
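Some back-of-the-envelope arithmetic shows why the 96 GB setting matters. The sketch below estimates the size of the weights alone as parameters × bits per weight ÷ 8. The bit widths are hypothetical examples, because the guide as described here does not state which quantization is used, and the estimate ignores KV cache growth, runtime buffers and file-format overhead. It also assumes all 122B parameters of the mixture-of-experts model must stay resident, even though only about 10B ("A10B") are active per token.

```python
UNIFIED_MEMORY_GB = 128  # total unified memory on the system AMD describes
VGM_GB = 96              # Variable Graphics Memory setting from AMD's guide

def weight_footprint_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in decimal gigabytes."""
    # params * bits / 8 bits-per-byte; the 1e9 in "billion" and in "GB" cancel out.
    return params_billion * bits_per_weight / 8

def headroom_gb(params_billion: float, bits_per_weight: float,
                budget_gb: float = VGM_GB) -> float:
    """GPU memory left for KV cache and buffers (negative means the weights do not fit)."""
    return budget_gb - weight_footprint_gb(params_billion, bits_per_weight)

if __name__ == "__main__":
    print(f"Left for the OS and other apps: {UNIFIED_MEMORY_GB - VGM_GB} GB")
    for bits in (16, 8, 4):  # hypothetical precisions, not taken from AMD's guide
        weights = weight_footprint_gb(122, bits)
        spare = headroom_gb(122, bits)
        status = "fits" if spare > 0 else "does not fit"
        print(f"122B at {bits}-bit: ~{weights:.0f} GB of weights, {status} ({spare:+.0f} GB headroom)")
```

Under these assumptions, only a roughly 4-bit build (about 61 GB of weights) fits inside the 96 GB allocation with meaningful room left for context, while 8-bit weights alone (about 122 GB) would already exceed it.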

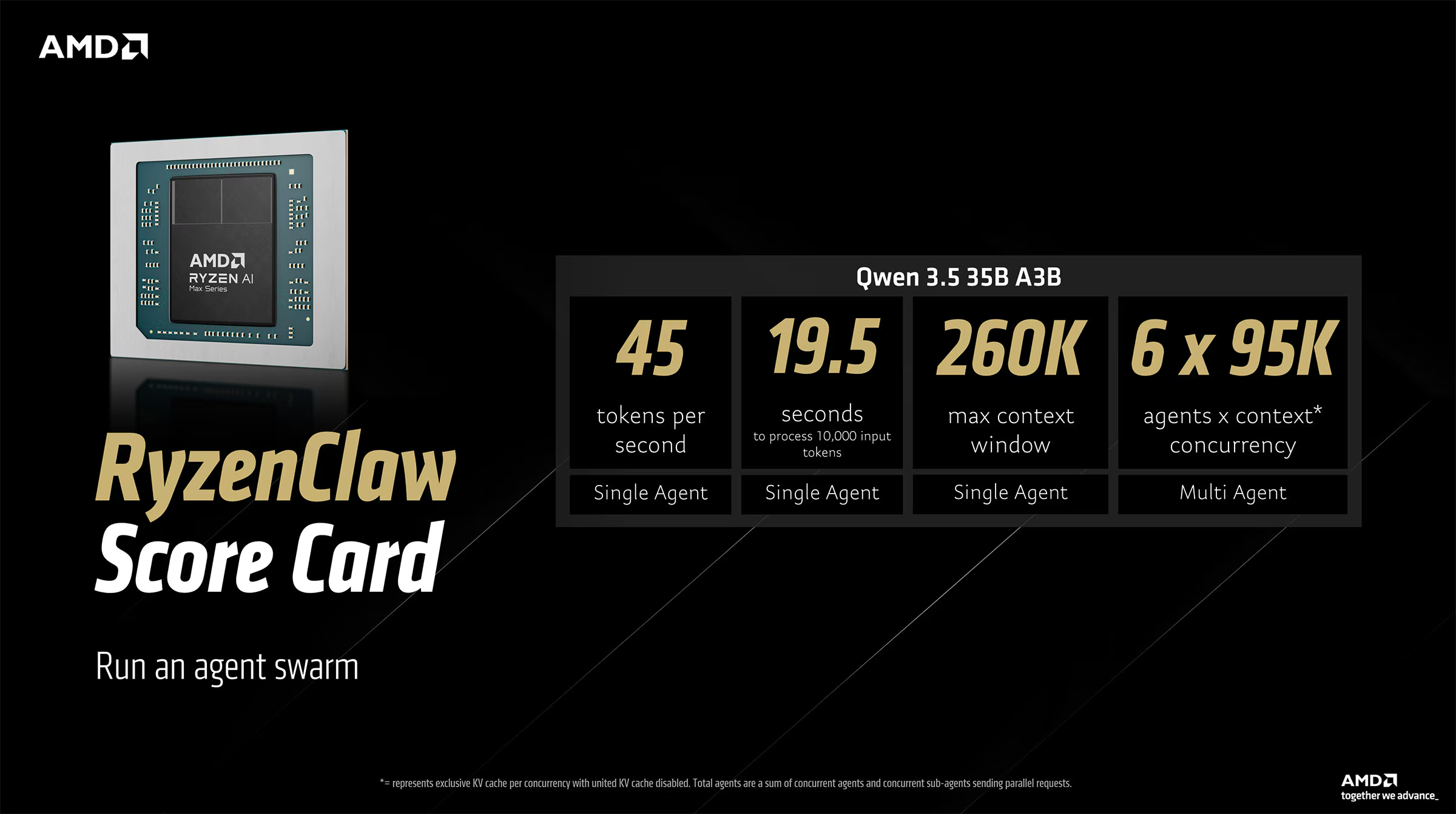

AMD’s published RyzenClaw scorecard claims that a Ryzen AI MAX+ platform running Qwen 3.5 35B A3B can deliver around 45 tokens per second, process 10,000 input tokens in about 19.5 seconds, support a 260K-token context window, and scale to as many as six concurrent agents in the described setup. For the larger Qwen 3.5 122B A10B model, AMD says the same class of platform reaches roughly 19 tokens per second with a single agent and supports two concurrent agents at a 95K context.

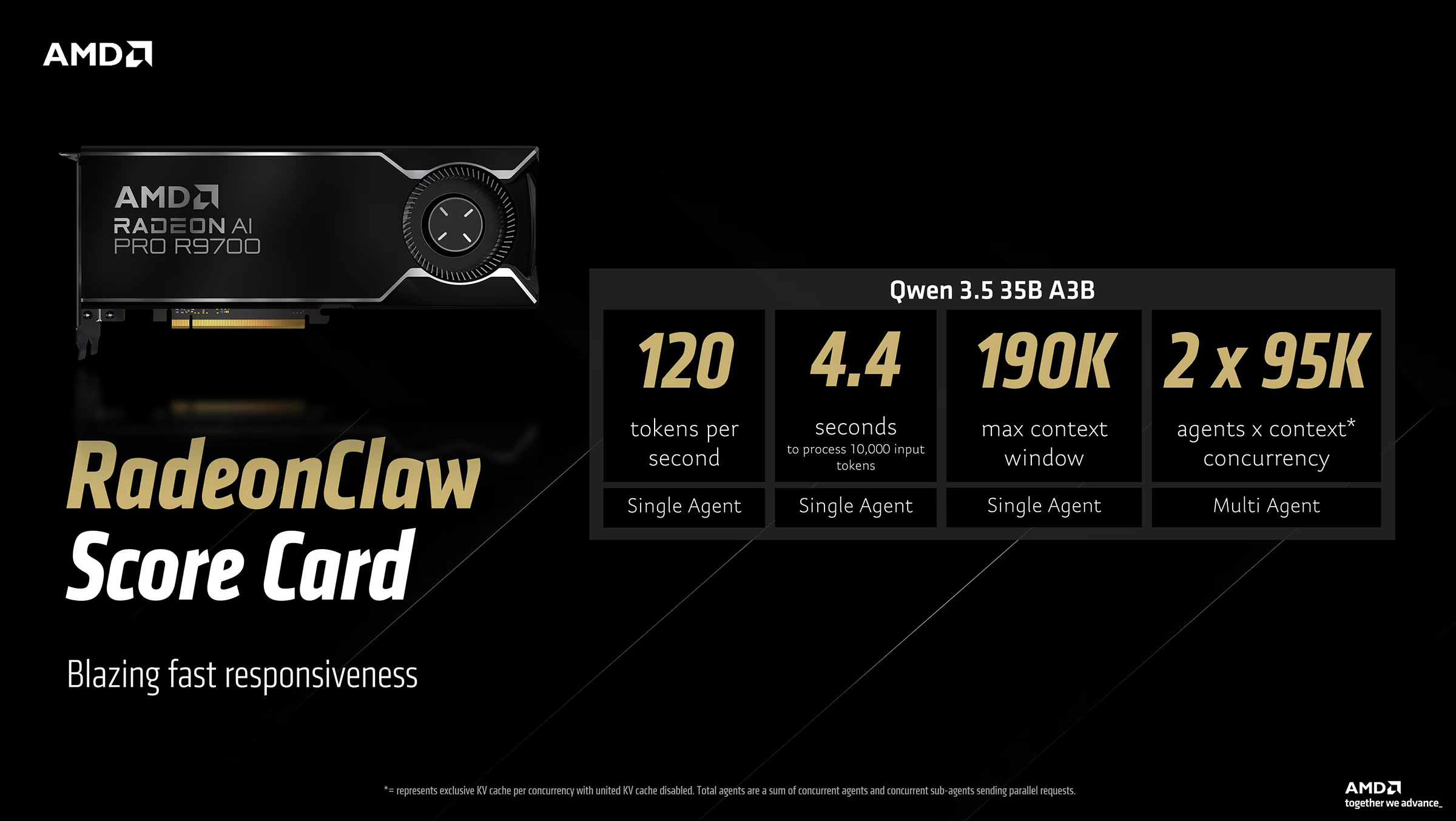

On the workstation side, AMD positions its RadeonClaw example, built around the Radeon AI PRO R9700, as the faster local inference option. The company says a single R9700 can run Qwen 3.5 35B A3B at about 120 tokens per second, process 10,000 input tokens in around 4.4 seconds, and support a 190K-token context window with up to two concurrent agents. AMD also notes that up to four Radeon AI PRO R9700 cards can be combined in a workstation for 128 GB of total VRAM, enabling larger local model deployments.
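Putting the two Qwen 3.5 35B A3B scorecards side by side makes the difference easier to read. This small sketch derives prompt-processing throughput from AMD's "10,000 tokens in N seconds" figures and estimates the time for one agent turn. The 500-token reply length is a hypothetical value, and combining AMD's prompt and generation numbers this way assumes a constant decode rate, which is a simplification. The 32 GB per card figure is simply AMD's 128 GB total divided across four R9700s.

```python
# Qwen 3.5 35B A3B figures as quoted in AMD's scorecards (single agent).
SCORECARDS = {
    "RyzenClaw (Ryzen AI MAX+)": {"decode_tok_s": 45.0, "prompt_tokens": 10_000, "prompt_s": 19.5},
    "RadeonClaw (1x Radeon AI PRO R9700)": {"decode_tok_s": 120.0, "prompt_tokens": 10_000, "prompt_s": 4.4},
}

def prefill_rate(prompt_tokens: int, prompt_s: float) -> float:
    """Average prompt-processing (prefill) throughput in tokens per second."""
    return prompt_tokens / prompt_s

def estimated_turn_s(prompt_s: float, decode_tok_s: float, output_tokens: int) -> float:
    """Rough latency of one agent turn: process the prompt, then generate the reply.

    Assumes the decode rate stays constant for the whole reply (a simplification).
    """
    return prompt_s + output_tokens / decode_tok_s

if __name__ == "__main__":
    REPLY_TOKENS = 500  # hypothetical reply length, not an AMD figure
    for name, s in SCORECARDS.items():
        rate = prefill_rate(s["prompt_tokens"], s["prompt_s"])
        turn = estimated_turn_s(s["prompt_s"], s["decode_tok_s"], REPLY_TOKENS)
        print(f"{name}: ~{rate:,.0f} prompt tok/s, ~{turn:.1f} s per {REPLY_TOKENS}-token turn")
    print(f"Four R9700 cards at 32 GB each: {4 * 32} GB of VRAM")
```

On these numbers, the R9700 processes prompts at roughly 2,273 tokens per second versus about 513 on the Ryzen AI MAX+ platform. In the hypothetical turn, most of the latency gap comes from prompt processing rather than generation speed, which is worth keeping in mind for agents that repeatedly feed long contexts back into the model.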

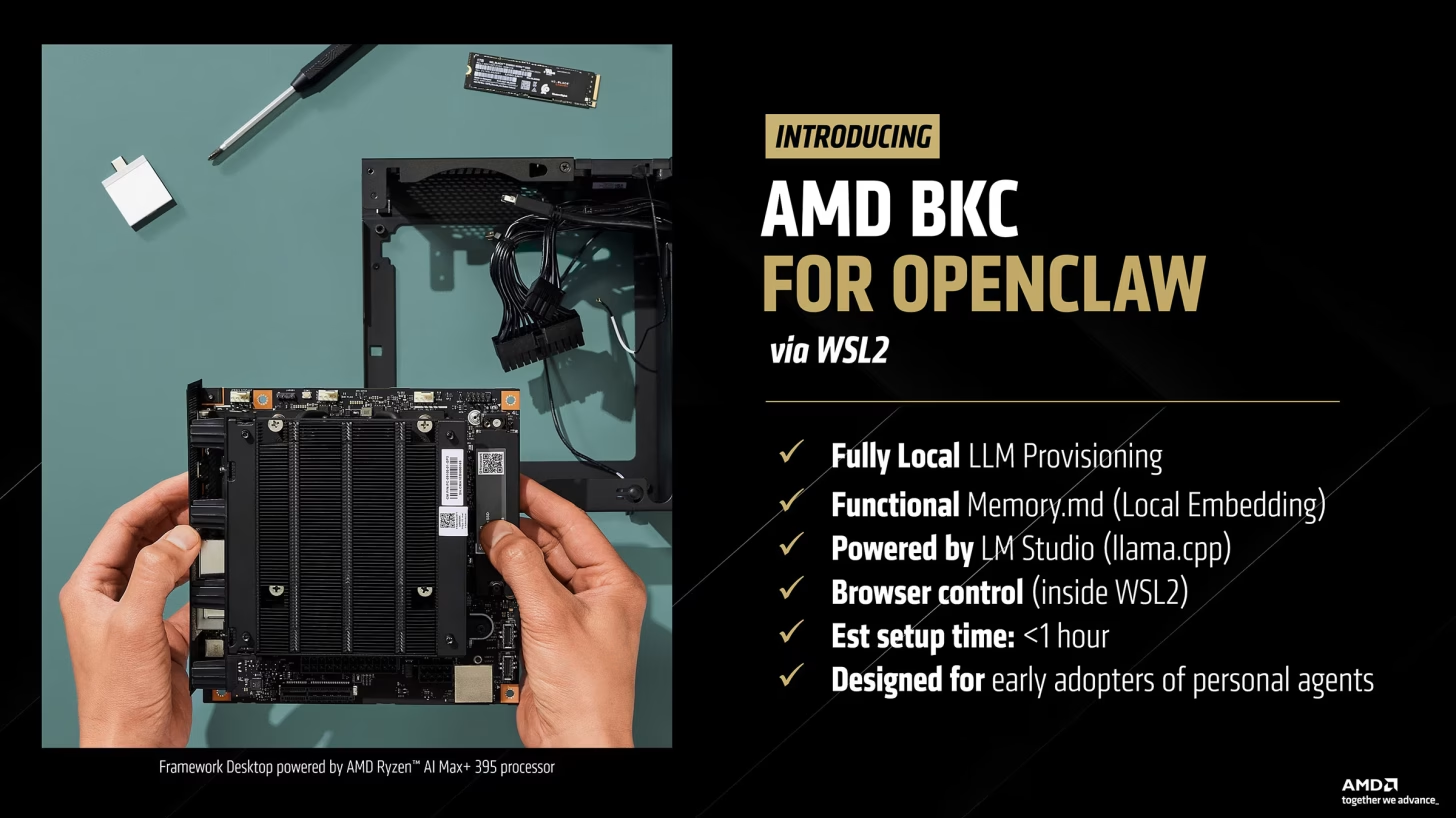

AMD is also trying to lower the barrier to entry for early adopters. The company says its OpenClaw best-known configuration runs under WSL2 and includes fully local LLM provisioning, local Memory.md embedding, LM Studio with llama.cpp, and browser control inside WSL2, with an estimated setup time of under an hour. That matters because one of the biggest obstacles to local agent experimentation is often not the hardware itself but the operational friction of getting everything installed and tuned correctly.

There is, however, an important caveat in AMD’s own messaging. The company explicitly warns that OpenClaw is a highly autonomous AI agent and says users should run it with caution, ideally on a separate, clean PC or in a virtual machine, because agent behavior can be unpredictable and may lead to unintended outcomes. That warning is a useful reminder that AMD is not just marketing AI capability here but also acknowledging the security and workflow risks that come with handing broad control to agentic software.

From a hardware strategy standpoint, this is one of the clearest examples yet of AMD moving beyond abstract AI branding toward concrete local use cases. The company is not only promoting silicon but also publishing a reproducible workflow that shows where Ryzen AI MAX+ fits as a compact, high-memory local agent machine and where Radeon AI PRO fits as a more aggressive, throughput-oriented workstation option. In practical terms, that makes the value proposition easier to understand for developers, prosumers, and advanced users who want to run models locally instead of depending entirely on cloud infrastructure.

For the broader PC market, the takeaway is straightforward. Ryzen AI MAX-class systems are shaping up as highly portable local AI boxes with unusually strong memory flexibility, while Radeon AI PRO is being positioned as the faster, more scalable deskside route for heavier sustained workloads. Whether OpenClaw itself becomes a mainstream killer app is still an open question, but AMD is clearly trying to make sure its hardware is part of that conversation early.

Do you think local AI agents like OpenClaw will become a real reason to buy high-memory AI PCs and workstation GPUs, or are they still a niche use case for early adopters?