NVIDIA Launches Ising, the First Open AI Models Built to Speed Up Quantum Calibration and Error Correction

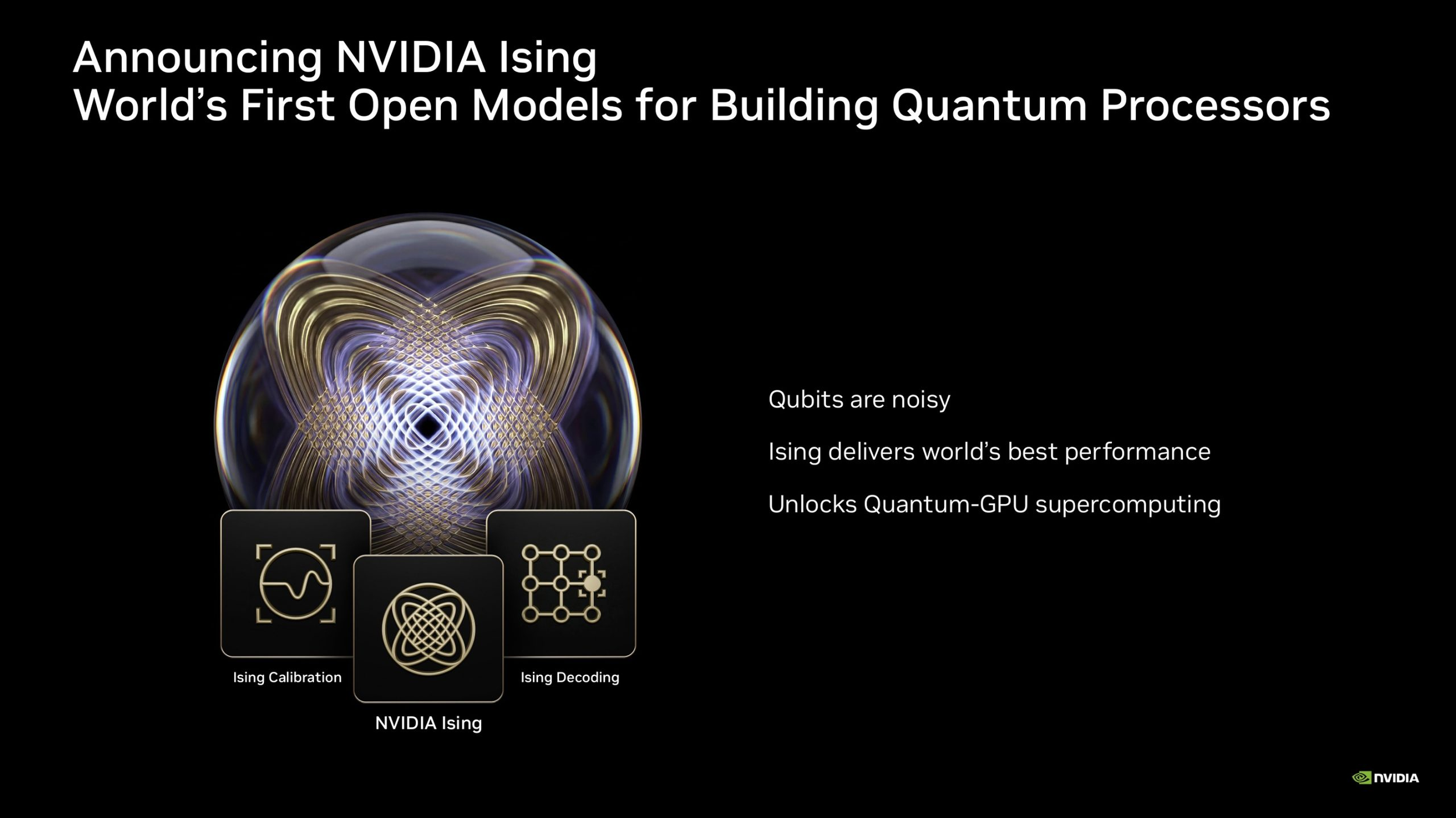

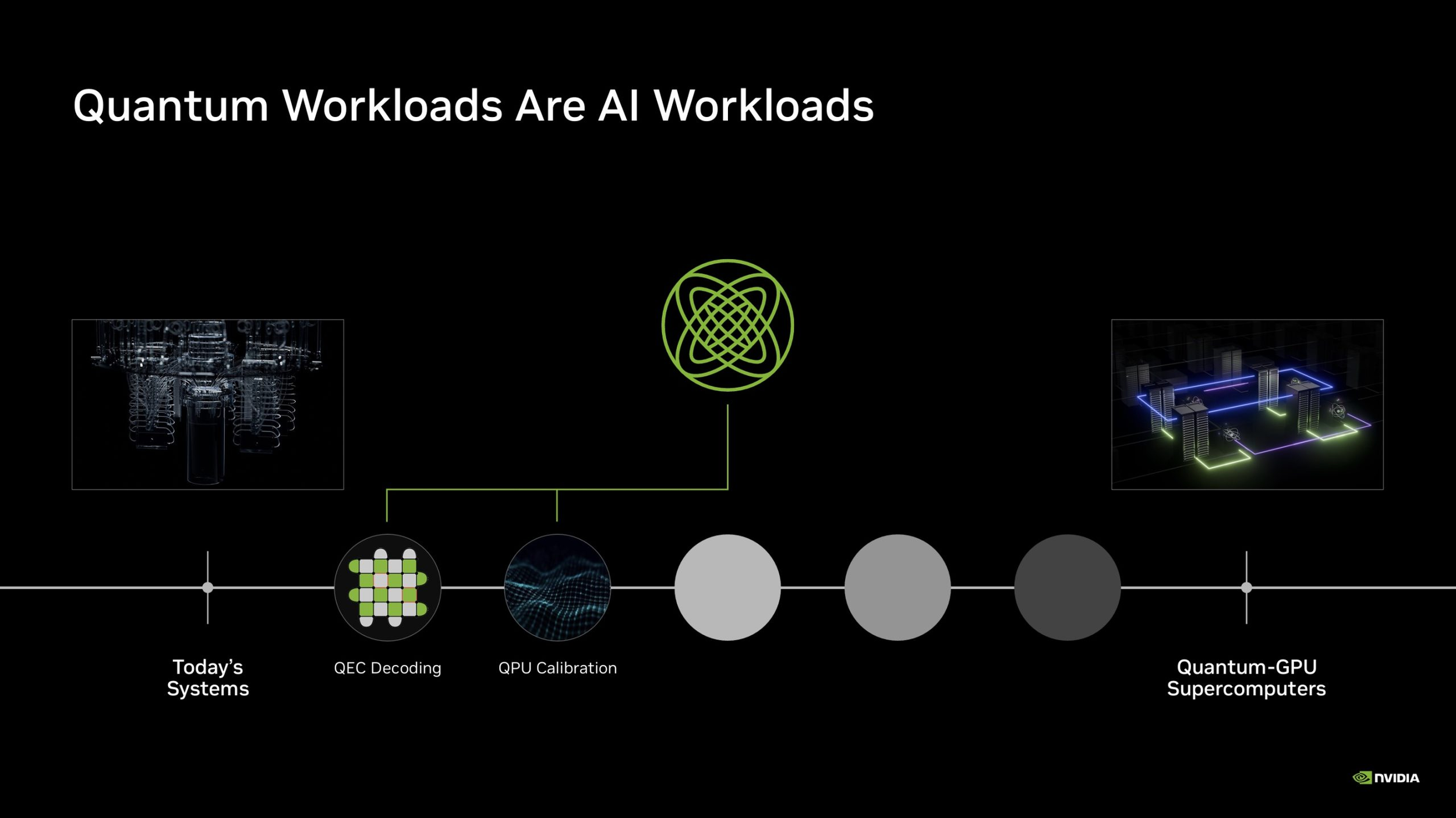

NVIDIA has unveiled Ising, a new family of open AI models created specifically for quantum computing workflows. Rather than promising to make quantum computing instantly “practical” on its own, Ising targets two of the biggest engineering bottlenecks holding the field back: processor calibration and quantum error correction. NVIDIA says the models are designed to help researchers and enterprises build quantum processors that are more stable, more scalable, and more capable of supporting useful applications over time.

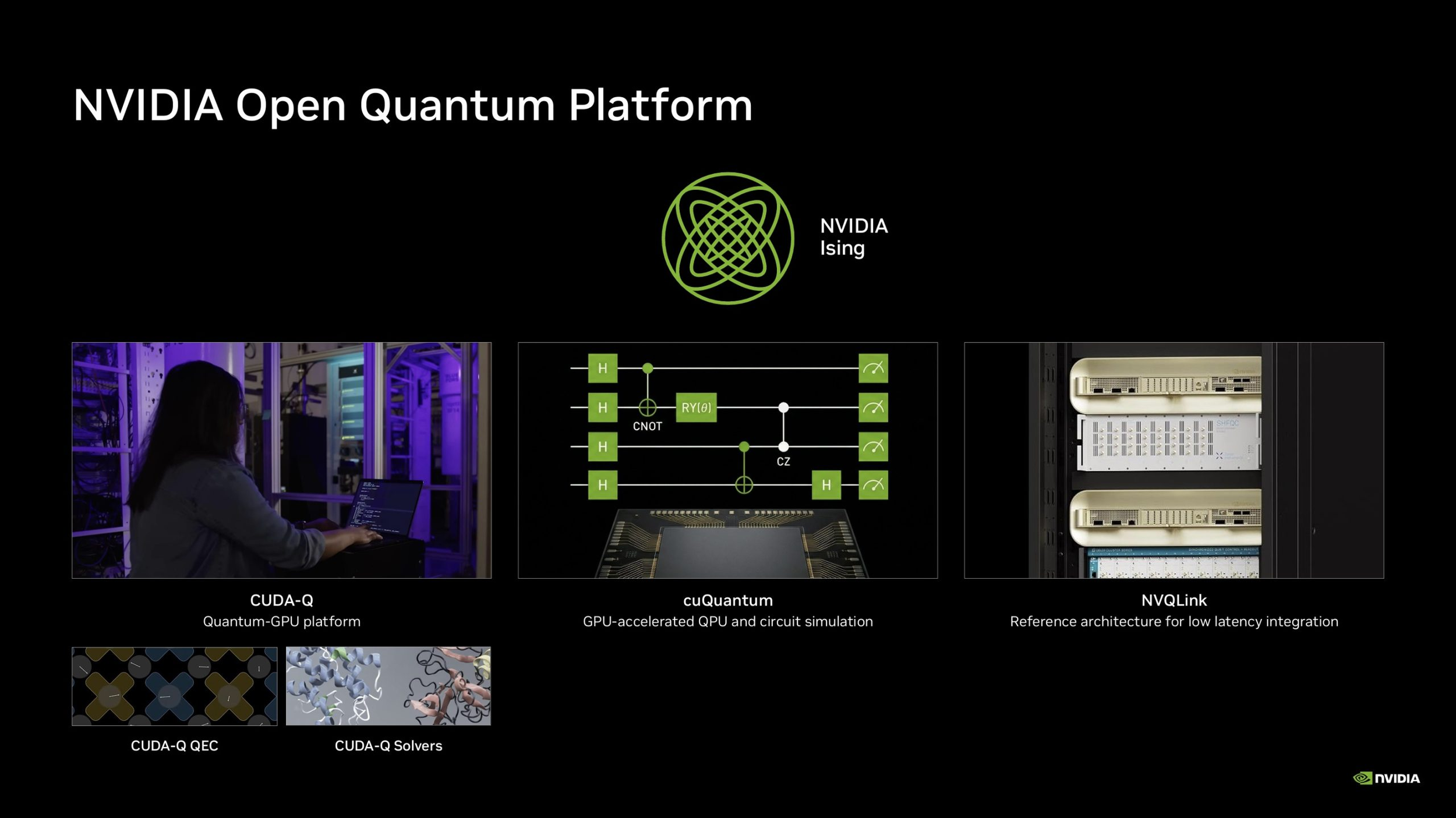

This launch also fits directly into NVIDIA’s broader quantum strategy. The company already offers CUDA-Q, its open-source, QPU-agnostic platform for hybrid quantum-classical computing, and Ising now becomes a new AI layer on top of that foundation. NVIDIA describes CUDA-Q as a platform that lets developers work across CPUs, GPUs, and QPUs inside one environment, with support for multiple qubit types and vendor backends. In that context, Ising is less a standalone product and more a new acceleration layer for the company’s expanding quantum software and infrastructure stack.

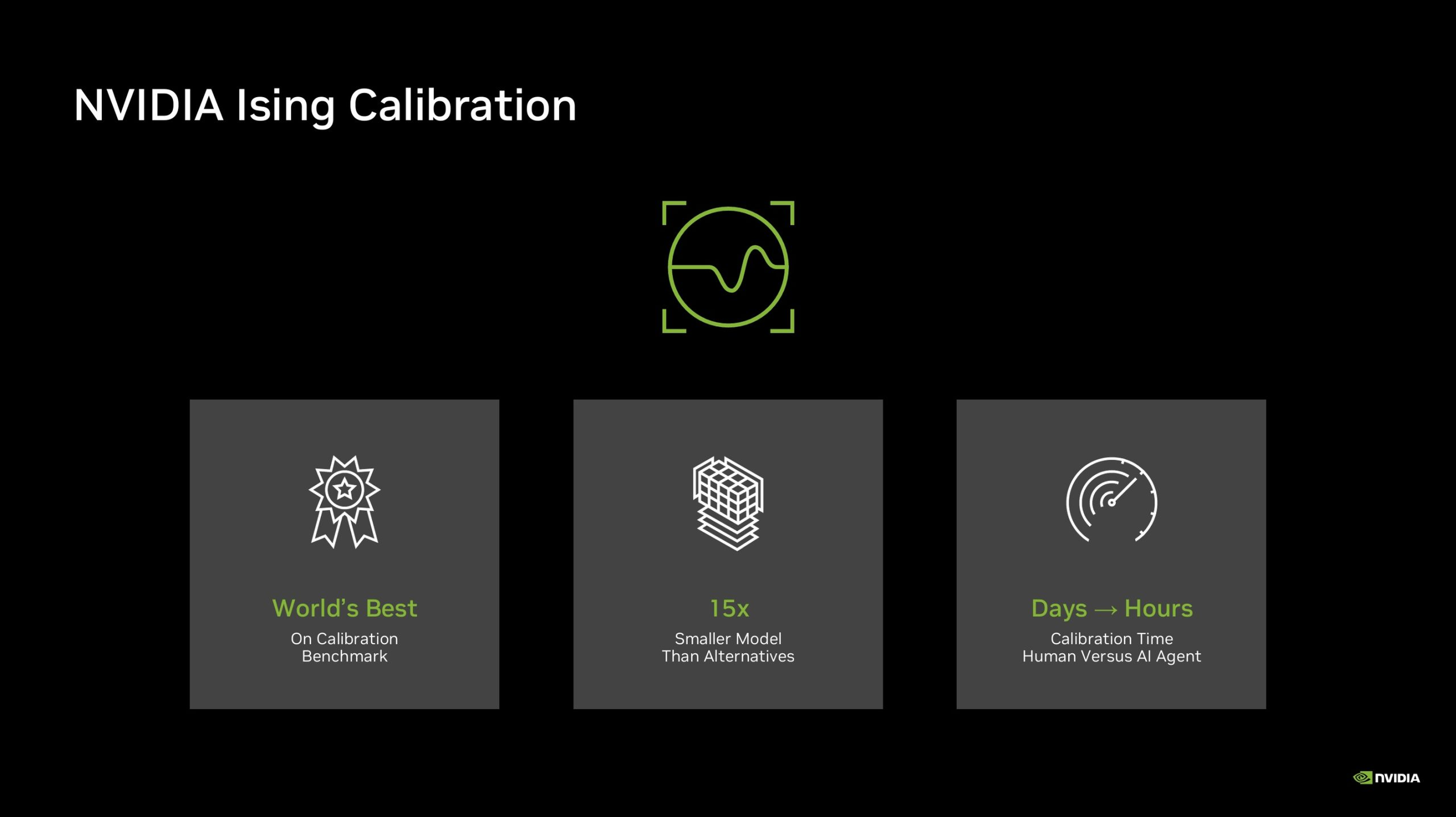

The reason this matters is simple. Quantum systems are still extremely noisy, and that noise makes useful large-scale computing difficult. Before a quantum processor can be trusted for serious workloads, it must be calibrated constantly, and errors must be detected and corrected far more efficiently than current approaches allow. NVIDIA is positioning AI as a practical tool to attack that problem. In its official announcement and technical blog, the company says Ising is built to reduce calibration time from days to hours and to improve real-time quantum error correction so future fault-tolerant systems can scale more effectively.

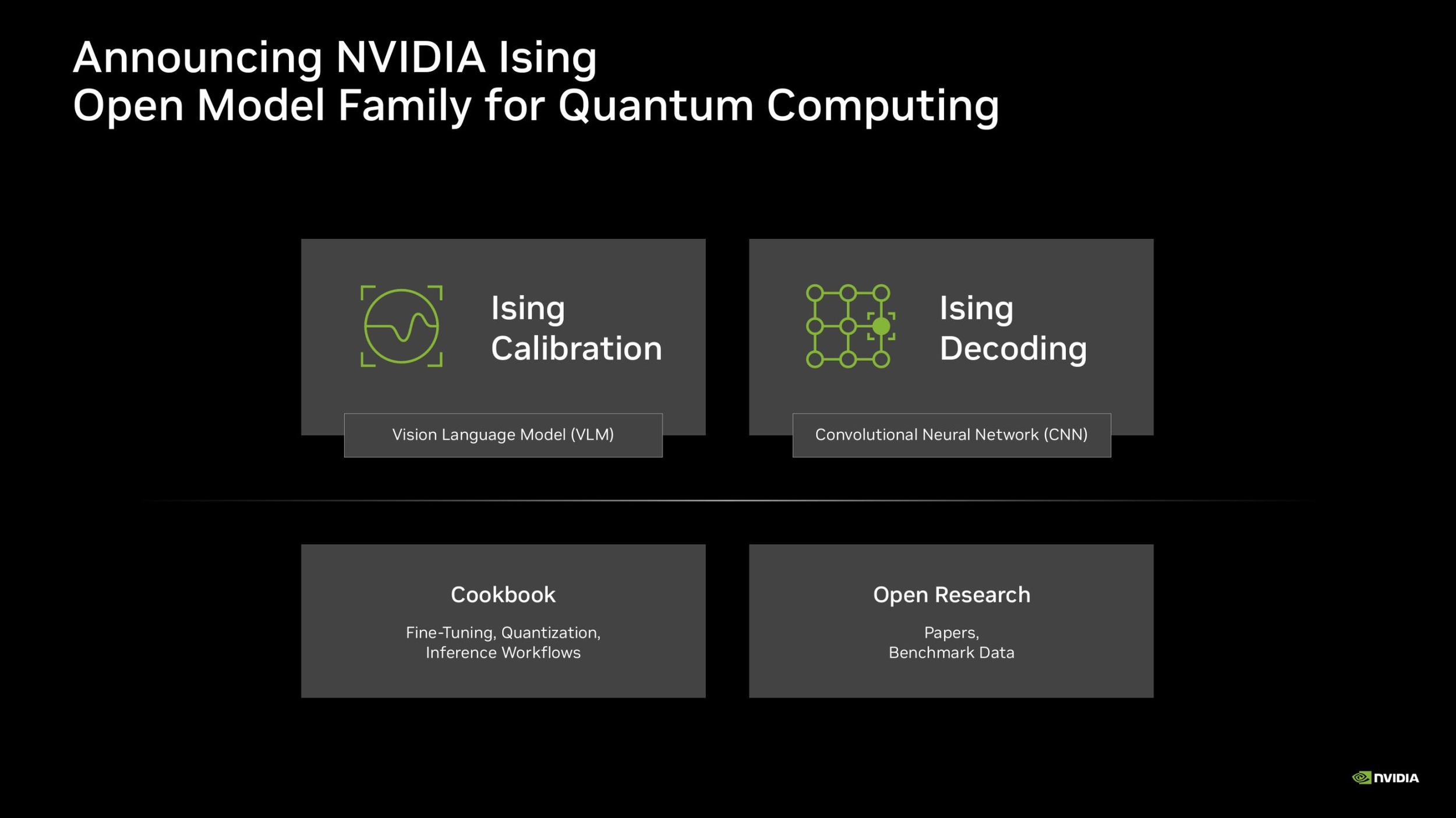

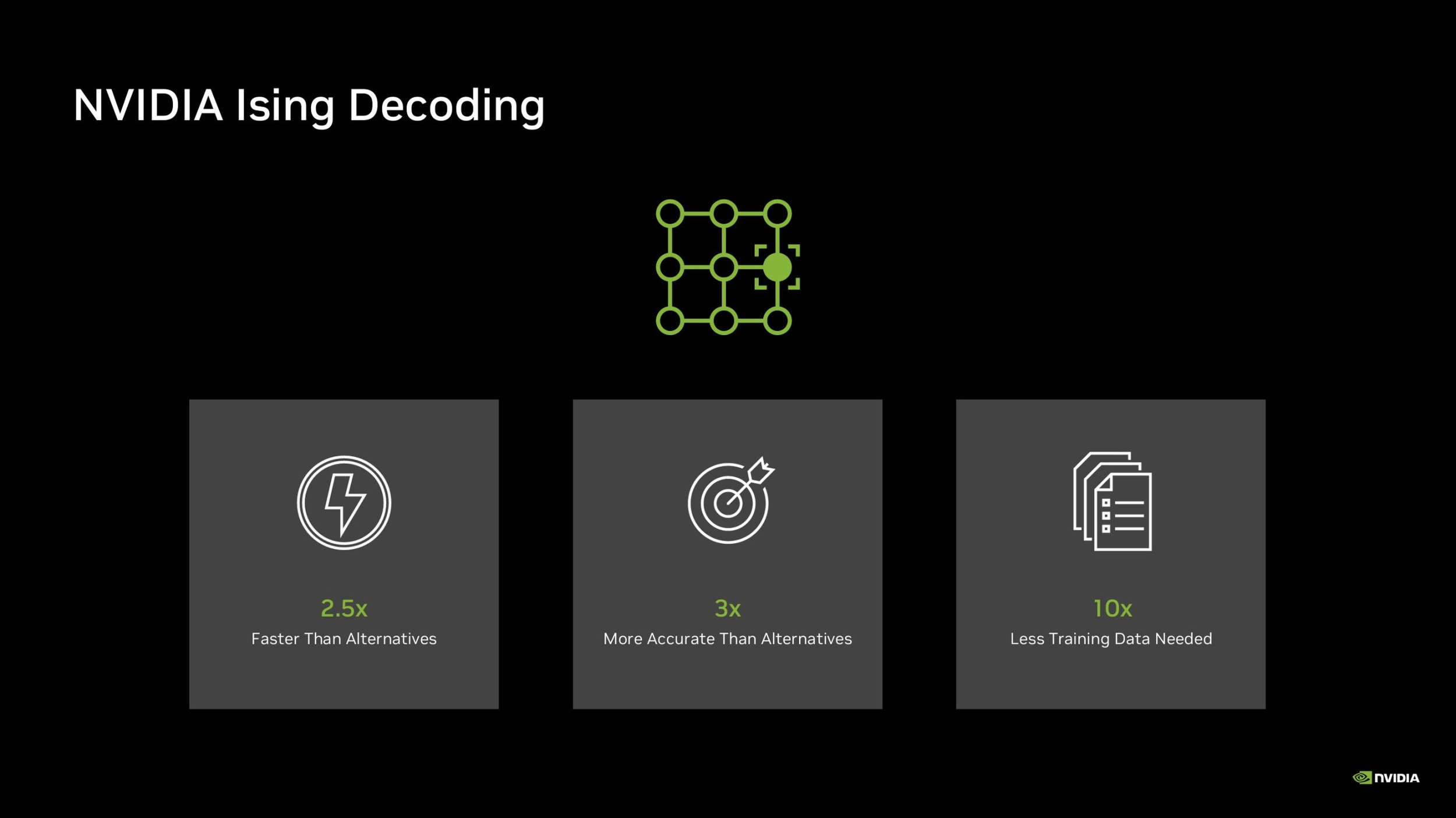

The Ising family currently includes two major components. The first is Ising Calibration, which NVIDIA describes as a vision language model designed to interpret measurements from quantum processors and automate continuous calibration workflows. The second is Ising Decoding, which comes in two variants built around a 3D convolutional neural network architecture, with one version tuned for speed and the other for accuracy in quantum error correction decoding. According to NVIDIA, this decoding stage is a critical part of making quantum systems reliable enough for practical use.
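To make "decoding" concrete, here is a minimal Python sketch of what an error correction decoder does. It is not Ising and not PyMatching: it uses a classical bit-flip repetition code as a stand-in, and the function names (`syndrome`, `build_lookup`, `decode`) are hypothetical, chosen only for illustration. The decoder never sees the encoded value directly. It sees only parity checks (the syndrome) and must infer the most likely error from them.

```python
from itertools import product

def syndrome(bits):
    """Parity of each neighbouring pair of bits (one check per pair)."""
    return tuple(bits[i] ^ bits[i + 1] for i in range(len(bits) - 1))

def build_lookup(n):
    """Map each syndrome to the lowest-weight error pattern that produces it.

    Assumes independent bit flips, so fewer flips means more likely.
    Use an odd n so the two candidate patterns never tie in weight.
    """
    table = {}
    for error in product((0, 1), repeat=n):
        s = syndrome(error)
        if s not in table or sum(error) < sum(table[s]):
            table[s] = error
    return table

def decode(received, table):
    """Undo the most likely error for the observed syndrome."""
    correction = table[syndrome(received)]
    return tuple(r ^ c for r, c in zip(received, correction))

table = build_lookup(5)
# Logical 0 was encoded as 00000; two bits flipped in transit.
print(decode((0, 1, 0, 0, 1), table))
```

A lookup table like this grows exponentially with the number of bits, which is one reason production decoders rely on graph matching or, as in NVIDIA's case, neural networks.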

In terms of headline gains, NVIDIA says the Ising Decoding models are up to 2.5 times faster and 3 times more accurate than PyMatching, which it identifies as the current open-source industry standard for this task. The company also says Ising Calibration is 15 times smaller than alternative approaches, while Ising Decoding needs 10 times less training data. Those are strong claims, and if they hold up broadly across real deployments, they could make Ising one of the more meaningful software-side advances in quantum infrastructure this year. At the same time, it is important to frame this correctly: these models do not solve quantum computing outright. They improve important support systems around it.

That distinction is the key to understanding what NVIDIA has actually announced. Ising does not mean the industry suddenly has fully practical, everyday quantum computers ready to replace classical machines. What it does mean is that NVIDIA is trying to make quantum hardware easier to tune, easier to stabilize, and easier to manage through AI-driven workflows. In other words, this is an enabling step, not the finish line. For a field that has struggled for decades with calibration complexity and error correction overhead, that is still a significant move.

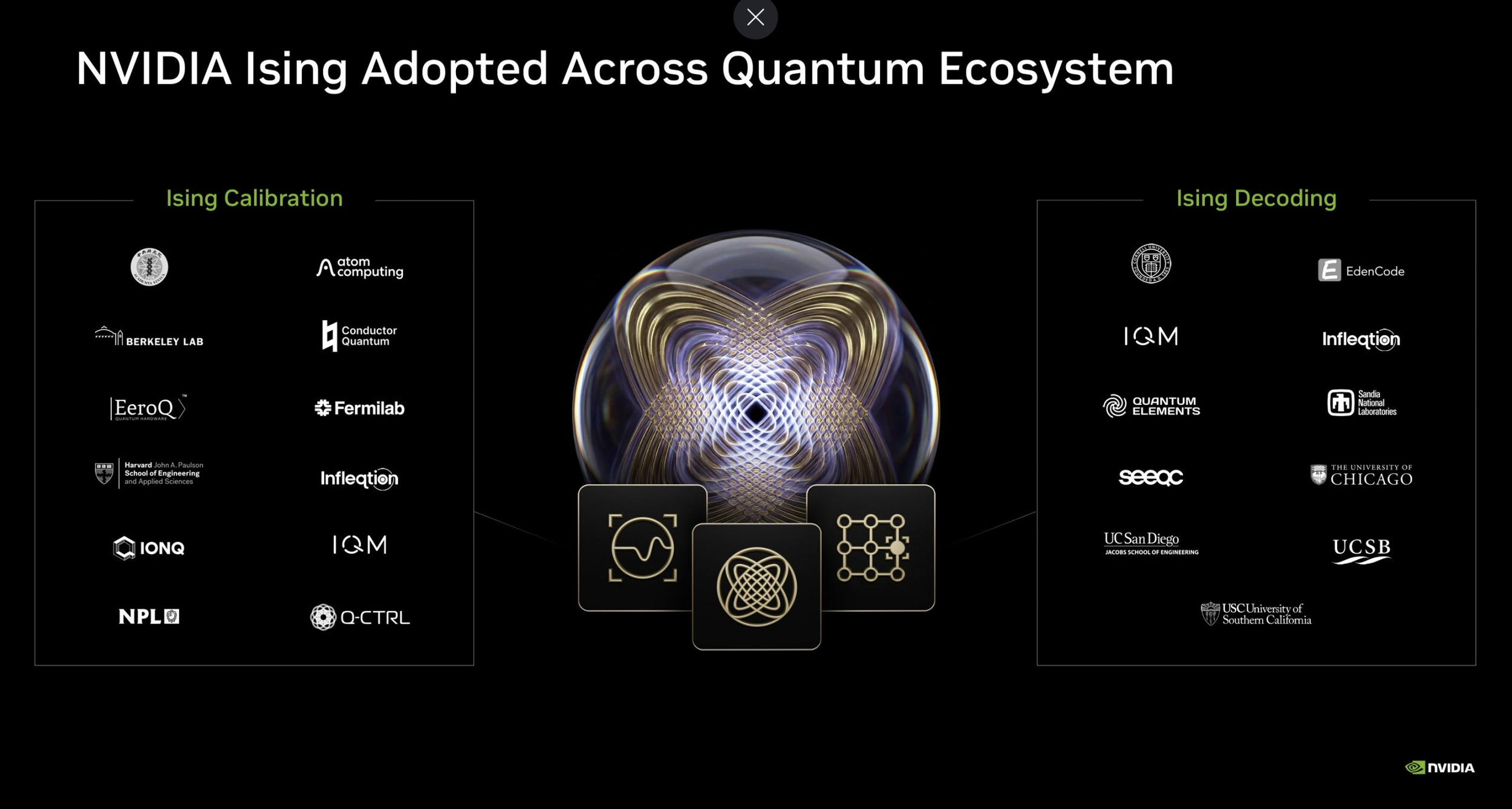

NVIDIA also says the models are already being used by researchers, academic institutions, and enterprise teams. The company has published Ising as an open model family and tied it into its broader quantum-GPU computing platform, which suggests it wants adoption to happen through the same ecosystem strategy it has used successfully in AI and accelerated computing. The newly launched NVIDIA Ising portal also positions the project around open models, training frameworks, datasets, and workflows for quantum-GPU supercomputing.

From an industry perspective, this is one of the more strategically interesting quantum announcements NVIDIA has made so far. The company is not making a quantum processor of its own the centerpiece of the message. Instead, it is doing what NVIDIA tends to do best: building the software, AI, and accelerated computing layers that can sit at the center of an emerging ecosystem. If quantum computing does move closer to large-scale usefulness over the next several years, infrastructure like CUDA-Q and AI model families like Ising could end up being just as important as the underlying qubit hardware.

For now, Ising should be seen as a serious and credible advance in the quantum toolchain, not as proof that the sector has fully crossed into mass practical deployment. It is a meaningful step toward useful quantum computing, especially in the areas where classical AI can help quantum hardware behave more like a scalable computing platform and less like a fragile lab experiment. That alone makes it one of NVIDIA’s most important frontier computing announcements of 2026 so far.

Do you think AI-driven calibration and decoding will become the breakthrough that finally moves quantum computing closer to real-world usefulness?