SOCAMM Memory Standard May Expand Beyond NVIDIA as AMD and Qualcomm Explore Adoption for Next-Gen AI Racks

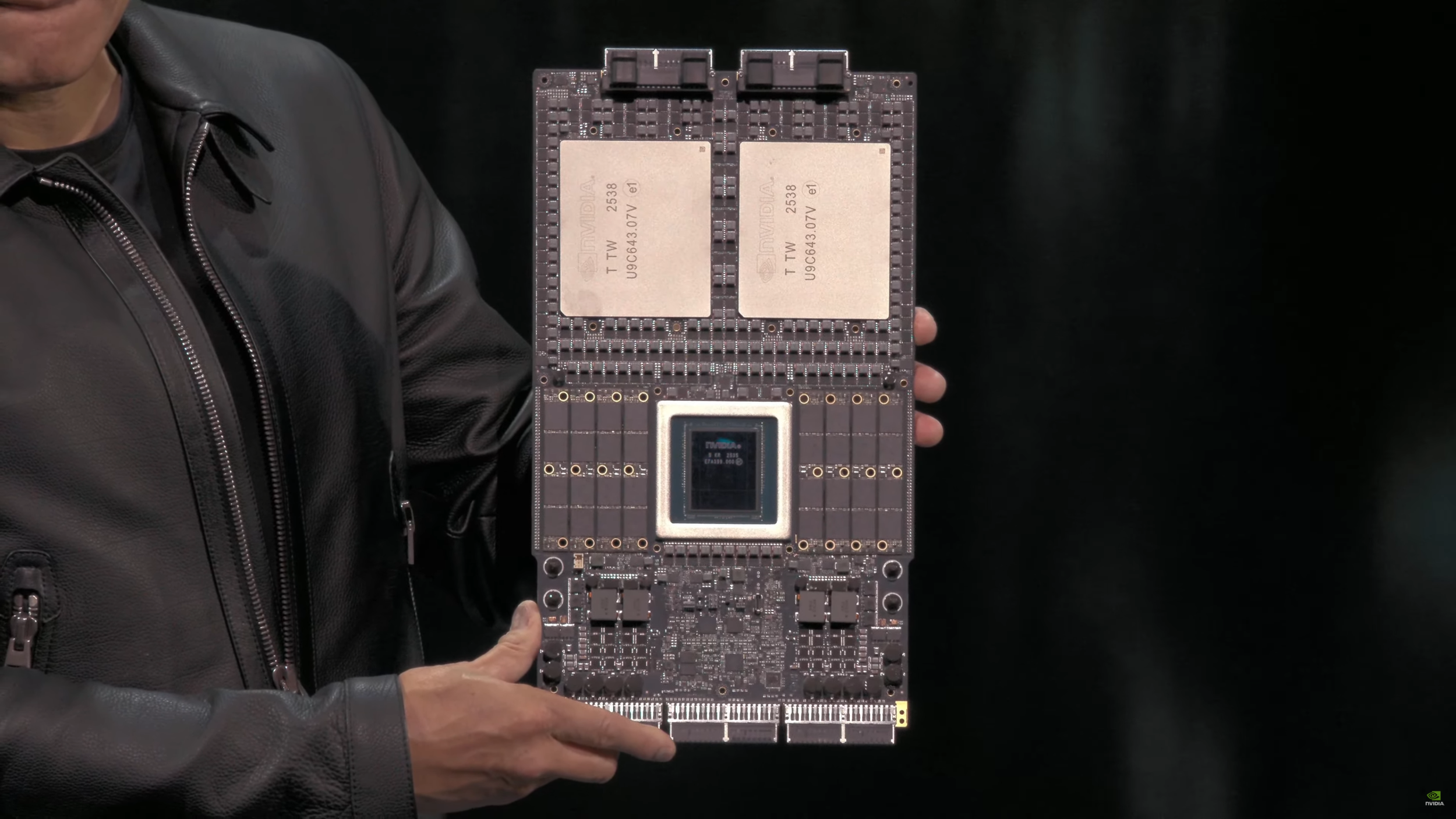

SOCAMM (Small Outline Compression Attached Memory Module) is starting to look less like an NVIDIA-only memory play and more like an emerging AI infrastructure layer that competing platform vendors want in their own racks. It is based on LPDDR DRAM, a memory type historically associated with mobile and low-power devices, but the key difference here is serviceability. SOCAMM is a replaceable module rather than memory soldered directly to the board, which positions it as a practical middle ground between traditional DIMM-style memory and ultra-high-bandwidth solutions like HBM for workloads that are increasingly memory-bound.

According to a Hankyung report, NVIDIA, AMD, and Qualcomm are exploring SOCAMM integration for upcoming AI rack designs. The report claims AMD and Qualcomm may pursue a different physical layout than NVIDIA: a square module that carries two rows of DRAM. The rationale given for that layout is power delivery and stability at scale. With the power management IC (PMIC) integrated directly onto the SOCAMM module, the design can control power at the module level, reduce the complexity of motherboard power circuitry, and potentially improve stability at high operating speeds.

If SOCAMM adoption expands beyond NVIDIA, it would have real implications for DRAM utilization and supply planning. The larger context is that agentic AI is pushing system designers toward a more layered memory hierarchy: HBM remains the premium bandwidth tier, but a second tier of high-capacity memory is needed to keep more context active without constantly paging data out. SOCAMM is being framed as that high-capacity companion layer, enabling terabyte-class memory capacity per compute node while keeping power efficiency and serviceability as first-order priorities. Throughput will still trail HBM, but the value proposition is about expanding usable memory headroom for real AI workloads rather than chasing peak bandwidth alone.
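To make the layered hierarchy concrete, here is a toy Python sketch of a two-tier placement policy: a small fast tier standing in for HBM and a larger tier standing in for SOCAMM-class memory. Everything in it is an assumption made for illustration, including the `TieredMemory` class, the least-recently-used demotion policy, and the capacities used. It does not describe how any vendor's hardware or software actually manages memory.

```python
from collections import OrderedDict


class TieredMemory:
    """Toy two-tier model: a small fast tier backed by a larger capacity tier.

    Hypothetical illustration only; sizes are in GB and the policy is plain LRU.
    """

    def __init__(self, fast_gb, capacity_gb):
        if capacity_gb < fast_gb:
            raise ValueError("capacity tier is assumed to be at least as large as the fast tier")
        self.fast_gb = fast_gb
        self.capacity_gb = capacity_gb
        self.fast = OrderedDict()      # block name -> size, oldest first
        self.capacity = OrderedDict()  # block name -> size, oldest first
        self.paged_out = []            # names of blocks pushed out of both tiers

    def touch(self, name, size_gb):
        """Place a block in the fast tier, demoting older blocks if needed."""
        if size_gb > self.fast_gb:
            raise ValueError("block does not fit in the fast tier")
        # Remove any existing copy so the block lives in exactly one place.
        self.fast.pop(name, None)
        self.capacity.pop(name, None)
        if name in self.paged_out:
            self.paged_out.remove(name)
        # Demote least-recently-used blocks until the new block fits.
        while sum(self.fast.values()) + size_gb > self.fast_gb:
            victim, victim_size = self.fast.popitem(last=False)
            self._demote(victim, victim_size)
        self.fast[name] = size_gb

    def _demote(self, name, size_gb):
        """Move a block to the capacity tier, paging out only when it is full."""
        while sum(self.capacity.values()) + size_gb > self.capacity_gb:
            evicted, _ = self.capacity.popitem(last=False)
            self.paged_out.append(evicted)
        self.capacity[name] = size_gb


if __name__ == "__main__":
    mem = TieredMemory(fast_gb=4, capacity_gb=8)
    for block in ["a", "b", "c", "d", "e", "f", "g"]:
        mem.touch(block, 2)
    print("fast tier:    ", list(mem.fast))
    print("capacity tier:", list(mem.capacity))
    print("paged out:    ", mem.paged_out)
```

In this model, a block leaves memory entirely only when both tiers are full, so a larger capacity tier directly reduces how often context has to be paged out. That is the headroom argument in miniature.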

On NVIDIA’s side, the report suggests the company plans to deploy SOCAMM 2 with Vera Rubin AI clusters. If AMD and Qualcomm follow through with their own module-level power management approach, SOCAMM could become a more broadly contested standard in next-gen AI platforms rather than a single-vendor feature. That would also create a new competitive axis for memory suppliers and server builders: module design, power architecture, and platform validation could matter almost as much as raw capacity and bandwidth.

What do you think drives SOCAMM adoption more: the serviceability and upgrade path, or the need for a power-efficient, high-capacity memory layer to complement HBM in modern AI racks?