Samsung PM9E1 Gen5 SSD Packs 4 TB Into M.2 2242 With Up To 14.5 GB/s Reads for AI-Focused PCs

Samsung has introduced its new PM9E1 PCIe Gen5 SSD, which combines high capacity, flagship Gen5 throughput, and a compact M.2 2242 footprint designed to fit the next wave of small-form-factor AI systems. The company positions PM9E1 as an AI-optimized drive built for consistently low latency under mixed workloads, and it specifically says the drive has been qualified and is in mass production for the recently introduced NVIDIA DGX Spark platform.

At a headline level, PM9E1 aims to solve a real product design bottleneck. High-performance PCIe Gen5 SSDs typically scale best in physically larger formats with more thermal and component headroom, but Samsung is pushing that performance envelope into M.2 2242, a size that matters for dense, compact builds where every millimeter of board space is budgeted.

Samsung says PM9E1 reaches these performance targets:

- Sequential read: up to 14,500 MB/s
- Sequential write: up to 12,600 MB/s
- Random read: up to 2,000K IOPS
- Random write: up to 2,640K IOPS

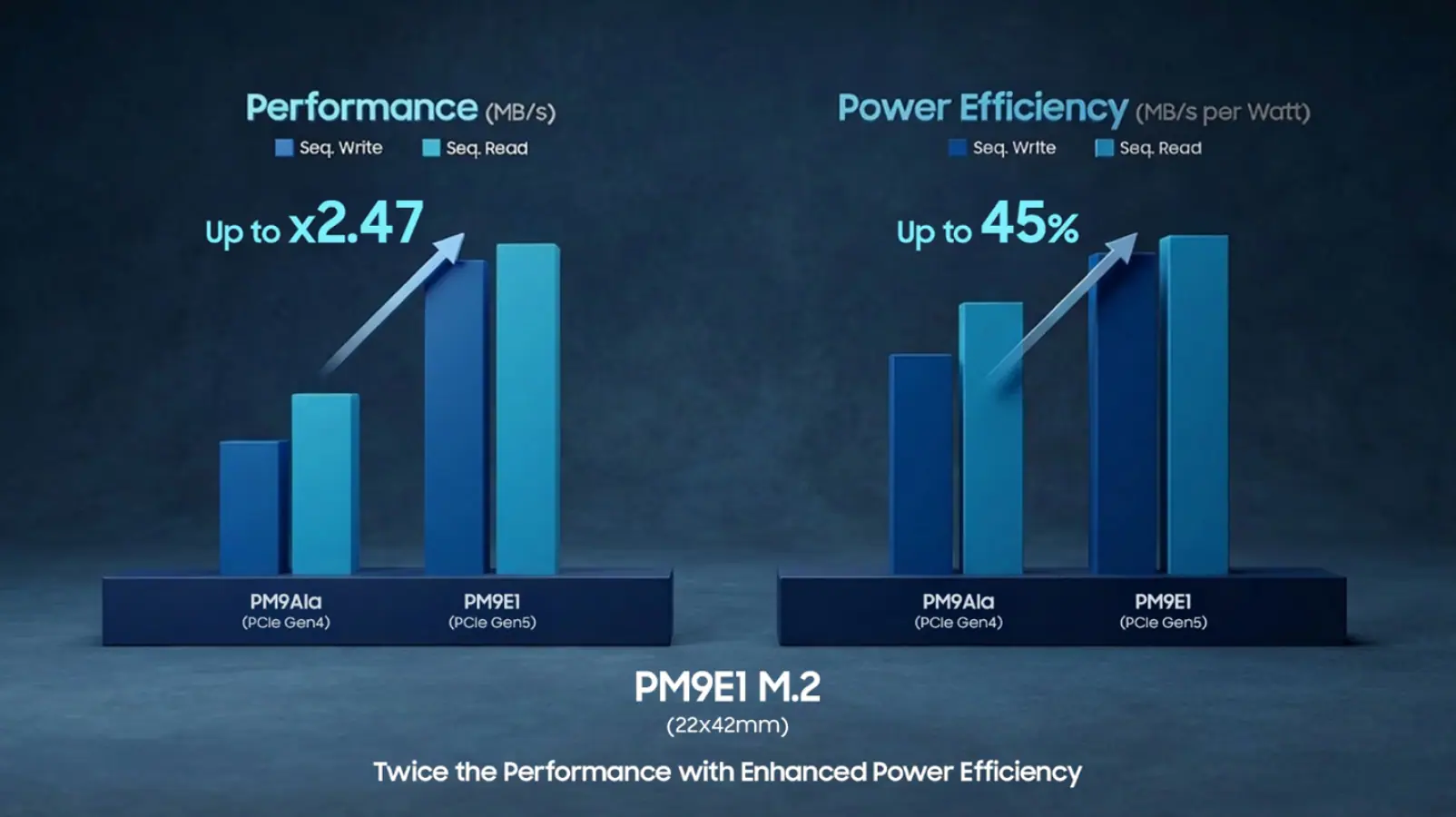
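To put the quoted figures in context, here is a rough back-of-the-envelope sketch in Python. It rests on two assumptions that Samsung's figures above do not state: that the drive uses a PCIe 5.0 x4 link (the usual width for M.2 SSDs), and that the random IOPS numbers are measured with 4 KiB transfers. The function names are illustrative only.

```python
# Back-of-the-envelope checks on the quoted PM9E1 figures.
# Assumptions (not stated in the article): PCIe 5.0 x4 link, 4 KiB random I/O.

SEQ_READ_MB_S = 14_500        # quoted sequential read, MB/s
SEQ_WRITE_MB_S = 12_600       # quoted sequential write, MB/s
RAND_READ_IOPS = 2_000_000    # quoted random read, IOPS
RAND_WRITE_IOPS = 2_640_000   # quoted random write, IOPS


def pcie_raw_mb_s(gt_per_lane: float = 32.0, lanes: int = 4) -> float:
    """Raw link rate in MB/s after 128b/130b line encoding.

    PCIe 5.0 signals at 32 GT/s per lane. Packet and protocol overhead
    is ignored, so real usable bandwidth is lower than this value.
    """
    bits_per_second = gt_per_lane * 1e9 * (128 / 130) * lanes
    return bits_per_second / 8 / 1e6


def iops_to_mb_s(iops: int, block_bytes: int = 4096) -> float:
    """Throughput implied by an IOPS figure at an assumed block size."""
    return iops * block_bytes / 1e6


def seconds_to_read(size_gb: float, mb_per_s: float) -> float:
    """Time to stream size_gb (decimal gigabytes) at a constant rate."""
    return size_gb * 1000 / mb_per_s


if __name__ == "__main__":
    link = pcie_raw_mb_s()
    print(f"Raw PCIe 5.0 x4 link rate: {link:,.0f} MB/s")
    print(f"Sequential read share of link: {SEQ_READ_MB_S / link:.1%}")
    print(f"Random read at 4 KiB: {iops_to_mb_s(RAND_READ_IOPS):,.0f} MB/s")
    print(f"Random write at 4 KiB: {iops_to_mb_s(RAND_WRITE_IOPS):,.0f} MB/s")
    print(f"Full 4 TB read: {seconds_to_read(4000, SEQ_READ_MB_S):.0f} s")
```

Under these assumptions, the quoted 14,500 MB/s sequential read sits at roughly 92% of the raw x4 link rate of about 15,754 MB/s, which suggests the interface rather than the NAND is close to being the limit. Reading the full 4 TB at that peak rate would take a little over four and a half minutes, although sustained real-world rates are typically lower than peak figures.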

On the hardware side, Samsung describes a double-sided PCB design that integrates 8th-generation 1Tb V-NAND and dedicated DRAM, targeting high capacity and consistent sustained performance despite the smaller form factor. Samsung also claims up to a 45% improvement in power efficiency over the previous generation, and up to 2.47x higher overall performance, both based on its internal comparisons.

Samsung states that PM9E1 uses an in-house Presto controller built on 5nm Samsung Foundry technology, with firmware tuned around the DGX Spark OS software stack, NVIDIA CUDA, and AI user experience requirements. The message is that PM9E1 is not only a peak throughput play but also a platform integration play, one where predictable behavior and responsiveness under AI-style access patterns are treated as first-class goals.

Samsung also emphasizes built-in security, stating that PM9E1 supports SPDM v1.2, including device authentication, firmware attestation, and secure channel capability. The positioning is clear: as AI systems scale, component-level trust and integrity checks become part of the performance story, because spending compute cycles to validate untrusted storage transactions is an efficiency tax no one wants.

The most strategic detail is not the 4 TB capacity or the 14.5 GB/s sequential reads on their own, but that Samsung delivers both inside M.2 2242 while still including DRAM for consistent access behavior. For compact AI PCs and dense edge workstations, the storage device is now part of the system architecture conversation. Higher SSD power efficiency reduces thermal load, and the smaller module frees board space in a tight layout, which gives designers more flexibility across the whole system.

If you were building a compact AI PC, would you prioritize maximum Gen5 speed like 14,500 MB/s reads, or would you trade some peak throughput for even higher endurance and lower sustained thermals?