Micron Pushes AI Server Memory Forward With 256 GB DDR5 RDIMMs at 9200 MT/s

Micron has announced that it is now sampling its new 256 GB DDR5 RDIMM modules to key server ecosystem partners, a significant step for AI server memory as capacity, bandwidth, and power efficiency become increasingly critical for large language models, agentic AI, real-time inference, and high-core-count CPU platforms. The new module is based on Micron’s 1-gamma DRAM process and is designed to give hyperscalers and server architects a much larger memory footprint per socket without losing sight of power and thermal limits. The company announced the sampling in an official press release.

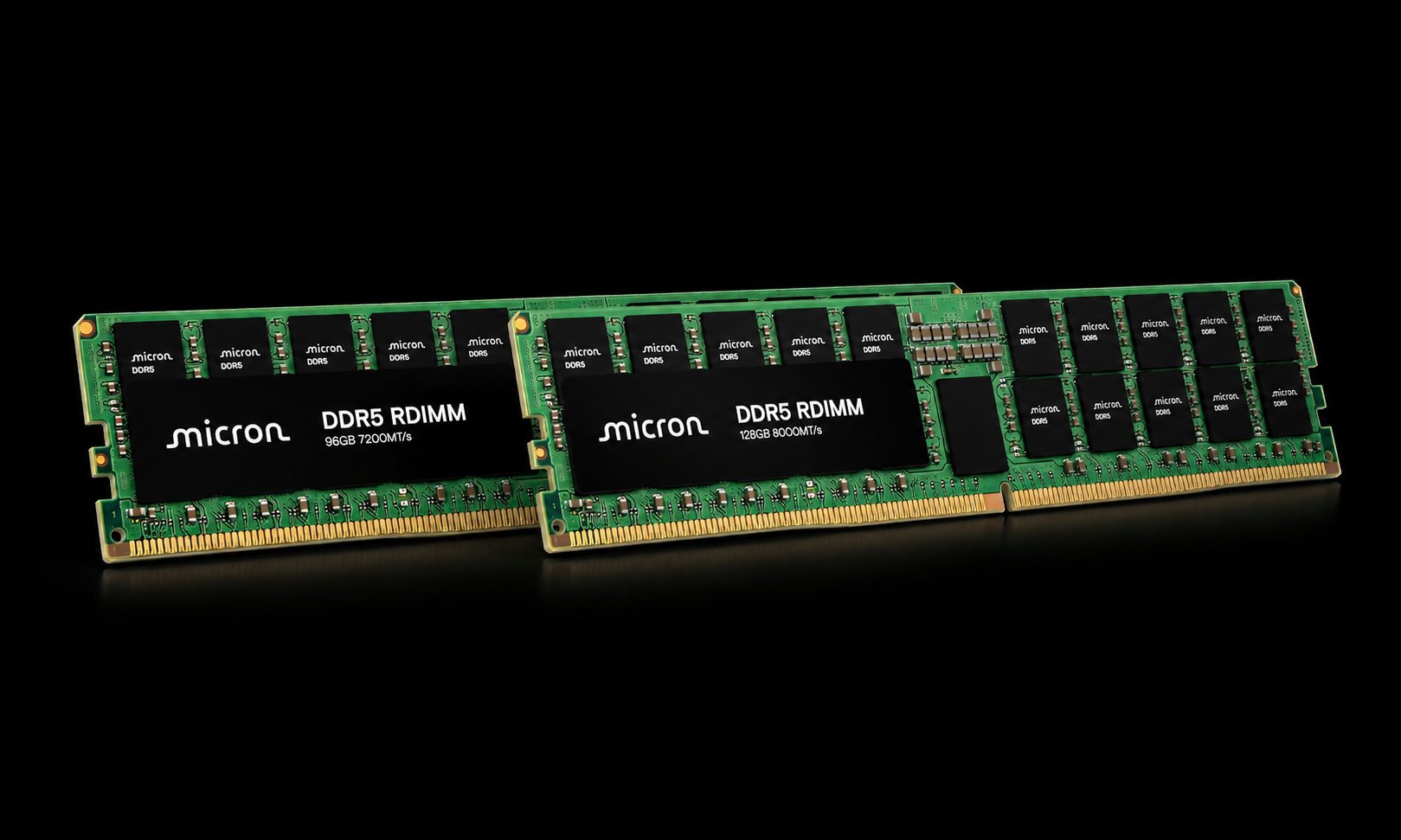

The headline figure is speed. Micron says the new 256 GB DDR5 RDIMM can reach up to 9200 MT/s, which it describes as more than 40% faster than modules currently in volume production. That comparison is against 6400 MT/s products (9200 / 6400 ≈ 1.44), making the new module one of the most aggressive DDR5 RDIMM announcements yet for enterprise and AI infrastructure.
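For readers who want to check the arithmetic, here is a minimal Python sketch of the speed comparison. The percentage uses only the two transfer rates quoted above. The peak-bandwidth figure additionally assumes a standard 64-bit (8-byte) DDR5 data bus per module with ECC bits excluded; that bus width and the function names are illustrative assumptions, not details from Micron's announcement.

```python
# Back-of-the-envelope check of the transfer-rate comparison.
# Assumption (not from the announcement): each module exposes a 64-bit
# data bus (two 32-bit subchannels), ECC bits excluded.
DATA_BUS_BYTES = 8


def speedup_percent(new_mts: float, baseline_mts: float) -> float:
    """Relative increase in transfer rate, in percent."""
    return (new_mts / baseline_mts - 1.0) * 100.0


def peak_bandwidth_gbs(mts: float, bus_bytes: int = DATA_BUS_BYTES) -> float:
    """Theoretical peak bandwidth per module in GB/s (decimal gigabytes)."""
    return mts * bus_bytes / 1000.0


if __name__ == "__main__":
    print(f"Speedup: {speedup_percent(9200, 6400):.2f}%")           # Speedup: 43.75%
    print(f"9200 MT/s peak: {peak_bandwidth_gbs(9200):.1f} GB/s")   # 73.6 GB/s
    print(f"6400 MT/s peak: {peak_bandwidth_gbs(6400):.1f} GB/s")   # 51.2 GB/s
```

These are theoretical peaks per module; sustained bandwidth in a real server depends on the memory controller, channel population, and access pattern.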

Beyond raw transfer rate, Micron is also leaning hard into packaging innovation. The 256 GB module uses advanced 3D stacking and through-silicon via (TSV) packaging to connect multiple memory dies, combining that architecture with its 1-gamma DRAM technology to improve capacity, speed, and power efficiency in a single server-class module. For data center operators building denser AI systems, that combination is arguably a bigger story than speed alone, because modern AI deployments are increasingly constrained by total system efficiency rather than just theoretical bandwidth.

Micron is also making a strong efficiency claim. According to the company, a single 256 GB module can reduce operating power by more than 40% compared with using two separate 128 GB modules. That matters in AI infrastructure, where memory population, power draw, and thermal behavior all scale quickly as systems grow larger and more complex. In practical terms, Micron is positioning this module not only as a faster RDIMM, but as a more efficient way to scale memory capacity for next-generation servers.
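To see what that claim implies, the short sketch below turns the "more than 40%" figure into an upper bound on the single module's power. Micron has not published absolute wattages in this announcement, so the 10 W per 128 GB module used here is a purely hypothetical placeholder, as are the function and parameter names.

```python
def max_single_module_watts(per_128gb_module_watts: float,
                            reduction: float = 0.40) -> float:
    """Upper bound on 256 GB module power implied by a given reduction
    versus two 128 GB modules of equal capacity in total."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    baseline_watts = 2 * per_128gb_module_watts  # two modules for 256 GB
    return baseline_watts * (1.0 - reduction)


if __name__ == "__main__":
    hypothetical_watts = 10.0  # placeholder value, NOT a Micron figure
    bound = max_single_module_watts(hypothetical_watts)
    print(f"Two 128 GB modules: {2 * hypothetical_watts:.1f} W")
    print(f"One 256 GB module: under {bound:.1f} W if the claim holds")
```

The same function can be reused with real measurements once module-level power data is available.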

The company says it is already working with key ecosystem enablers to validate the new 256 GB 1-gamma DDR5 RDIMM across both current and next-generation server platforms. That co-validation effort is meant to accelerate production deployment and broaden platform compatibility, which is important because AI and HPC customers typically need much more than raw memory speed before rolling out hardware at scale. For now, Micron’s announcement covers sampling and validation, not general availability.

From a market perspective, this launch shows exactly where server memory is heading. AI workloads are demanding more memory capacity per socket, more bandwidth, and better efficiency all at once, and Micron is clearly trying to position itself at the front of that transition. A 256 GB DDR5 RDIMM at 9200 MT/s is not just a spec bump. It is a signal that memory vendors are now racing to support the infrastructure layer behind the next wave of AI growth, where bottlenecks are no longer limited to GPUs alone.

Do you think the next big AI server bottleneck will be memory bandwidth, total capacity, or power efficiency?