AMD Launches Instinct MI350P PCIe Card With 4.6 PFLOPs AI Compute, 144 GB HBM3E, and 600W Power

AMD has officially introduced the Instinct MI350P, a new PCIe accelerator built for enterprise AI deployment inside standard server infrastructure. The new card brings CDNA 4, 144 GB of HBM3E memory, up to 4 TB/s of memory bandwidth, and a 600W maximum typical board power, while targeting organizations that want to scale inference and agentic AI workloads without moving to more complex rack level accelerator platforms. AMD is positioning the MI350P as a dual slot, drop in option for standard air cooled servers, making it one of the company’s clearest pushes yet toward easier on premises AI expansion.

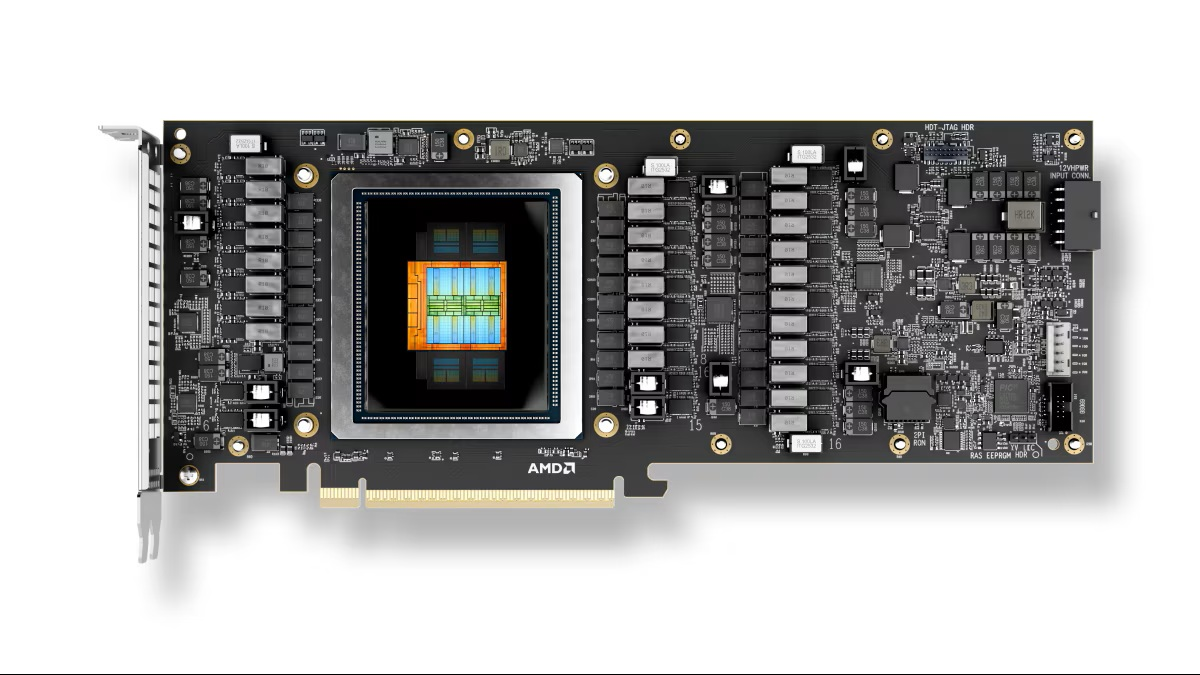

At the silicon level, the Instinct MI350P uses the AMD CDNA 4 architecture and carries 128 compute units, 8,192 stream processors, and 512 matrix cores, with a peak engine clock of 2200 MHz. AMD also lists the chip at 73 billion transistors and notes that it is produced using TSMC 3nm and 6nm FinFET technologies. In practical terms, this is a trimmed configuration compared with the larger MI350X class design, but it still lands with substantial compute density for a PCIe card aimed at inference heavy enterprise deployments.

The performance profile is where AMD wants the MI350P to stand out. According to the company, the card delivers 4.6 PFLOPs of MXFP4 matrix performance and the same 4.6 PFLOPs in MXFP6, while reaching 2.3 PFLOPs in MXFP8 and 2.3 PFLOPs in sparse FP16 workloads. Standard FP16 and FP32 are both rated at 72 TFLOPs, while FP64 lands at 36 TFLOPs. AMD’s own launch messaging says the card is designed to bring the highest performance currently available in an enterprise PCIe card at MXFP4, which is an important positioning point as vendors race to optimize for lower precision AI inference formats.

Memory is another major part of the value proposition. The MI350P integrates 144 GB of HBM3E on a 4096 bit interface and pairs that with 128 MB of last level cache. AMD lists peak memory bandwidth at 4 TB/s, which gives the card a very strong local memory footprint for large model inference and high throughput enterprise tasks. The board itself uses a PCIe 5.0 x16 interface, measures 10.5 inches or 267 mm in length, and comes in a double slot passive cooled design intended for server deployment. External power is handled through a 12V 2x6 connector, and AMD says the board can also be configured down to 450W TBP.

In terms of performance, the AMD Instinct MI350P offers:

4.6 PFLOPs MXFP4

4.6 PFLOPs MXFP6

2.3 PFLOPs MXFP8

2.3 PFLOPs FP16 with structured sparsity

1.15 PFLOPs FP16 matrix

72 TFLOPs FP16

72 TFLOPs FP32

36 TFLOPs FP64

2.3 POPs INT8

4.6 POPs INT8 with structured sparsity

1.15 PFLOPs bfloat16

2.3 PFLOPs bfloat16 with structured sparsity

AMD is also emphasizing software openness and deployment flexibility. The company says MI350P supports an open enterprise AI stack with ROCm, alongside frameworks and tools including PyTorch, TensorFlow, JAX, Triton, SGLang, OpenMP, and HIP. In its launch blog, AMD also highlights its enterprise AI reference stack, Kubernetes GPU Operator integration, and inference microservices as part of the broader effort to reduce deployment friction and lower operating expense for customers building inference infrastructure on premises.

From a market perspective, the MI350P gives AMD a more direct answer in the enterprise PCIe space, particularly against products like NVIDIA’s H200 NVL, which also targets high memory capacity server acceleration and uses HBM3E. The competitive difference here is not just raw throughput, but platform philosophy. AMD is clearly selling the MI350P as a more accessible AI accelerator for businesses that want serious model serving capability inside current data center power, cooling, and rack limits, rather than requiring a larger scale redesign. That angle could resonate strongly with enterprise customers that are interested in AI adoption but are still cautious about infrastructure cost and rollout complexity.

AMD says the Instinct MI350P is now available through a broad partner ecosystem, and its launch messaging includes support statements from vendors such as Dell, HPE, Cisco, Lenovo, Supermicro, and Gigabyte, as well as software side collaboration from companies including Red Hat, Akamai, Broadcom, Nutanix, and others. That matters because success in this segment will depend just as much on validated server availability and software readiness as it does on the silicon itself. For AMD, MI350P is not just another accelerator announcement. It is a strategic attempt to widen adoption of Instinct by making enterprise AI deployment more practical, more modular, and easier to justify financially.

Do you think AMD’s MI350P could become one of the most practical enterprise AI accelerators for companies that want strong inference performance without rebuilding their entire data center?