NVIDIA Shows Neural Texture Compression Slashing VRAM Use by 85%, or Delivering Better Texture Quality Within the Same Budget

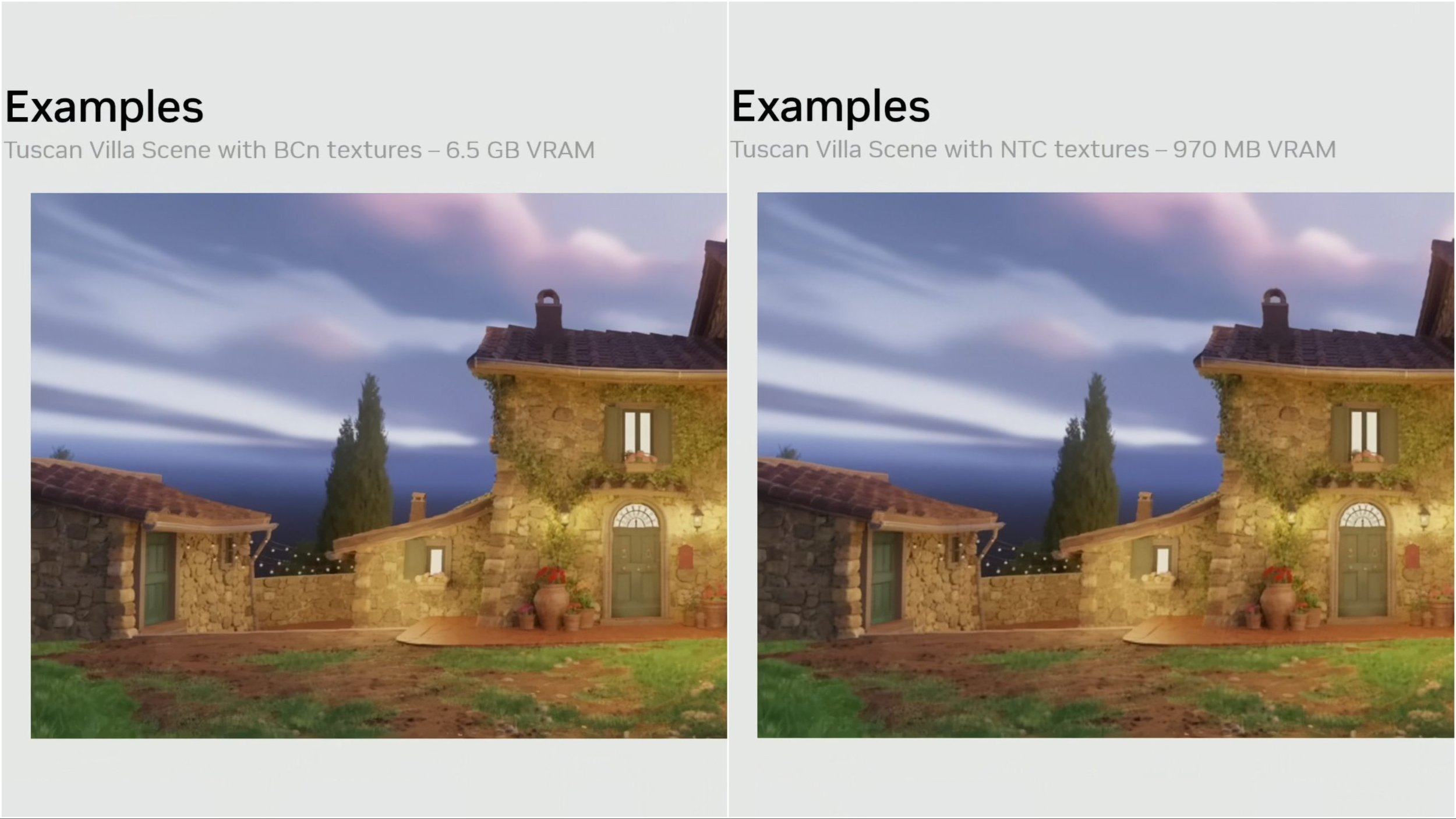

NVIDIA put Neural Texture Compression, or NTC, back in the spotlight during its GTC 2026 session, Introduction to Neural Rendering. The company used the presentation to show why it believes NTC could become one of the more practical AI-driven rendering upgrades for future games, not because it generates content, but because it can dramatically reduce texture memory requirements or raise image quality without demanding a larger VRAM budget. According to NVIDIA’s own demo, a Tuscan Villa scene that used 6.5 GB of VRAM with conventional BCn texture compression was reduced to just 970 MB with NTC, an 85% drop in texture memory use.
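The 85% figure follows directly from the two numbers NVIDIA quoted. As a quick sanity check, the arithmetic can be sketched in a few lines of Python; the helper names are illustrative only, and 1 GB is assumed to mean 1000 MB here.

```python
def percent_reduction(before_mb: float, after_mb: float) -> float:
    """Percentage of memory saved when going from before_mb to after_mb."""
    return (before_mb - after_mb) / before_mb * 100.0


def compression_factor(before_mb: float, after_mb: float) -> float:
    """How many times smaller the compressed footprint is."""
    return before_mb / after_mb


# Figures quoted from the Tuscan Villa demo.
# Assumption: 1 GB is treated as 1000 MB.
bcn_mb = 6.5 * 1000
ntc_mb = 970.0

print(f"{percent_reduction(bcn_mb, ntc_mb):.1f}% less texture memory")
print(f"{compression_factor(bcn_mb, ntc_mb):.1f}x smaller")
```

Put another way, the demo scene's textures ended up a little under seven times smaller than their BCn equivalents.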

That headline number is exactly why NVIDIA is pushing the technology again. Neural Texture Compression was first introduced several years ago, but its RTX NTC SDK is now publicly available in beta through GitHub, where NVIDIA describes it as an algorithm designed to compress all physically based rendering textures for a single material together. The repository currently labels the release as RTX Neural Texture Compression SDK v0.9.2 BETA, which shows the technology is available to developers, even if no major shipped game has publicly adopted it yet.

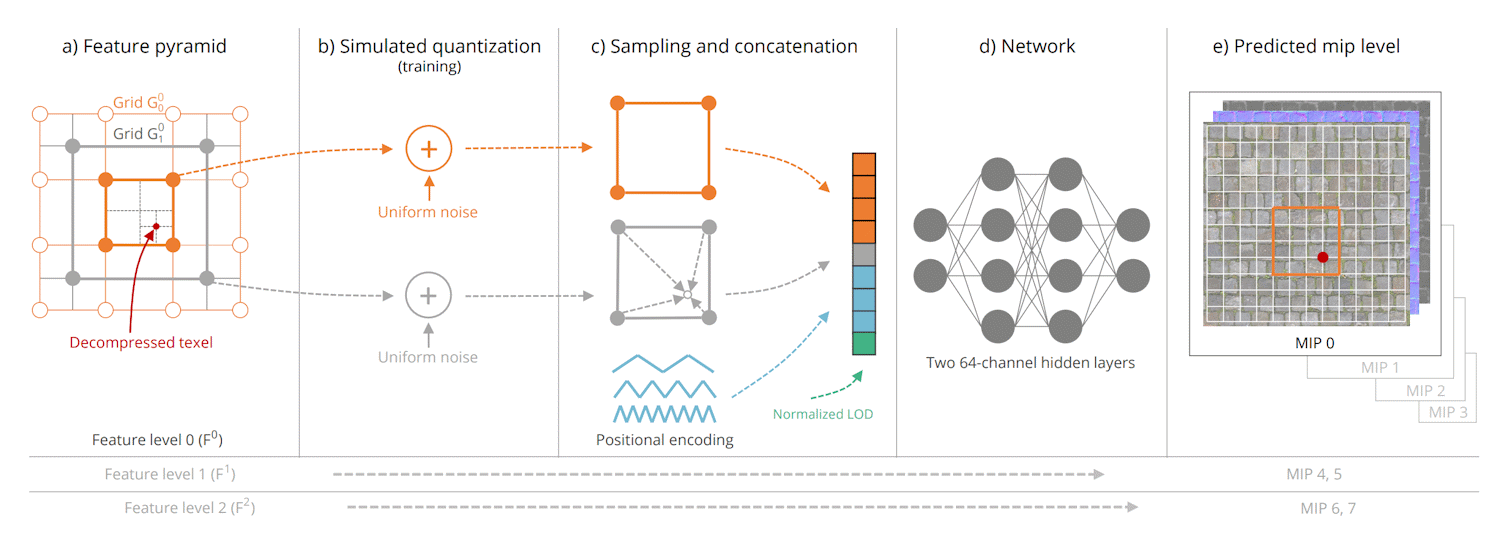

The technical idea behind NTC is straightforward, even if the implementation is much more advanced than traditional texture compression. Instead of storing every texel directly in memory, the system compresses texture information into smaller learned latent features, then uses a compact neural network decoder on the GPU to reconstruct the texel data when needed. NVIDIA says the decoder is deterministic, which is an important distinction in a game development environment. This is not a generative AI system inventing new visual content on the fly. It is a reproducible compression and reconstruction pipeline designed to recreate the same source texture consistently every time.
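To make the idea concrete, here is a deliberately tiny Python sketch of the "latent features plus small decoder" pattern. It is not the RTX NTC SDK's API, network architecture, or latent layout: the class name, layer sizes, nearest-cell lookup, and the randomly seeded weights (standing in for trained, stored data) are all assumptions made for illustration.

```python
import random

LATENT_DIM = 4    # features stored per latent grid cell (hypothetical)
HIDDEN_DIM = 8    # width of the single hidden layer (hypothetical)
OUT_CHANNELS = 3  # e.g. RGB albedo; a real material would carry more


def make_matrix(rows, cols, rng):
    """Build a rows x cols matrix of pseudo-random weights."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def relu(vector):
    """Clamp negative activations to zero."""
    return [max(0.0, x) for x in vector]


class ToyTextureDecoder:
    """Low-resolution latent grid plus a two-layer decoder network."""

    def __init__(self, grid_size, seed=0):
        rng = random.Random(seed)  # fixed seed stands in for stored, trained data
        self.grid_size = grid_size
        self.latents = [
            [[rng.uniform(-1.0, 1.0) for _ in range(LATENT_DIM)]
             for _ in range(grid_size)]
            for _ in range(grid_size)
        ]
        # The decoder input is the latent vector plus the (u, v) coordinate.
        self.w1 = make_matrix(HIDDEN_DIM, LATENT_DIM + 2, rng)
        self.w2 = make_matrix(OUT_CHANNELS, HIDDEN_DIM, rng)

    def decode(self, u, v):
        """Reconstruct one texel at normalized coordinates (u, v) in [0, 1)."""
        x = min(int(u * self.grid_size), self.grid_size - 1)
        y = min(int(v * self.grid_size), self.grid_size - 1)
        features = self.latents[y][x] + [u, v]
        hidden = relu(matvec(self.w1, features))
        return matvec(self.w2, hidden)


decoder = ToyTextureDecoder(grid_size=4)
first = decoder.decode(0.3, 0.7)
second = decoder.decode(0.3, 0.7)
print(first == second)  # same stored data and same coordinates give the same texel
```

Nothing in the decode path is sampled or randomized at runtime, which is the sense in which such a pipeline is deterministic: the only inputs are the stored latents, the stored weights, and the texel coordinate.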

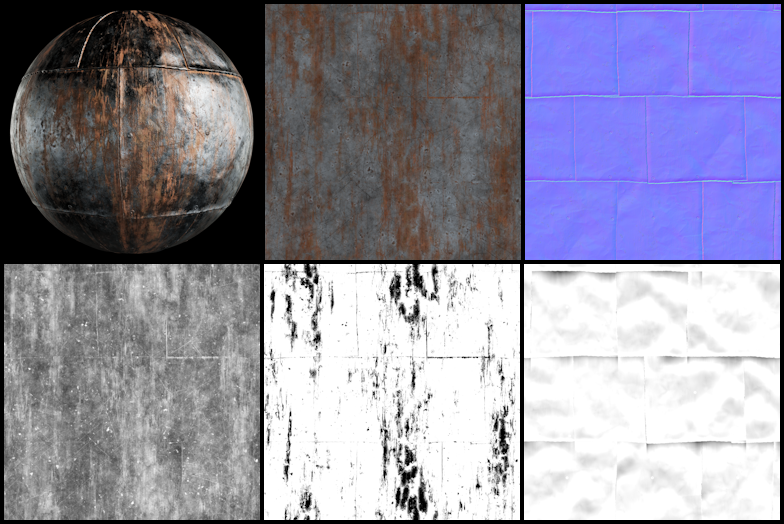

NVIDIA also argues that NTC holds several practical advantages over traditional block compression formats like BCn. In the SDK documentation, the company says NTC can compress up to 16 texture channels into a single texture set, which is particularly useful for modern material stacks that combine albedo, normal data, roughness, metalness, ambient occlusion, opacity, and other packed properties. The company’s own example shows that a real-world material bundle that might occupy 12 MB with BCn compression could be reduced to around 2.5 MB with NTC in its Inference on Sample mode, while still targeting image quality broadly comparable to BCn.
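A rough back-of-the-envelope check shows how a typical material stack sits against the 16-channel limit, and what the quoted 12 MB and 2.5 MB figures imply. The per-map channel counts below are assumptions for illustration; real assets pack their channels differently.

```python
MAX_NTC_CHANNELS = 16  # per-texture-set limit cited from the SDK documentation

# Hypothetical channel counts for a PBR material; real assets vary.
material_channels = {
    "albedo": 3,
    "normal": 3,
    "roughness": 1,
    "metalness": 1,
    "ambient_occlusion": 1,
    "opacity": 1,
}


def total_channels(channels):
    """Sum the channel counts across all maps in the material."""
    return sum(channels.values())


def fits_in_one_texture_set(channels, limit=MAX_NTC_CHANNELS):
    """True if every map in the material fits in a single texture set."""
    return total_channels(channels) <= limit


# Sizes quoted from NVIDIA's material example.
bcn_material_mb = 12.0
ntc_material_mb = 2.5

print(total_channels(material_channels), "channels")
print("fits in one set:", fits_in_one_texture_set(material_channels))
print(f"{bcn_material_mb / ntc_material_mb:.1f}x smaller than BCn")
```

Under these assumed counts the material uses 10 of the 16 available channels, and the quoted sizes work out to a reduction of a little under five times for that bundle.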

That is where the technology becomes especially attractive for game developers. A reduction in texture memory use helps more than just VRAM-constrained GPUs. It can also reduce install sizes, shrink patches, lower bandwidth demands, and potentially make it easier for developers to target higher quality assets without blowing out memory budgets. NVIDIA has previously described the technology as capable of cutting texture memory consumption by up to 8 times compared with traditional block compression, and the newer GTC 2026 demo, at roughly 6.7 times for a full scene, appears to reinforce that broader value proposition with a concrete scene-level example.

The catch, of course, is adoption. NVIDIA has been talking about Neural Texture Compression for a while, but there is still no widely known commercial game using it at launch. That may partly explain why the company used GTC 2026 to revisit the technology in public and why it continues expanding the SDK. NVIDIA’s August 2025 developer update said the SDK had gained a Direct3D 12 path for Cooperative Vectors, alongside evaluation, compression, and engine integration tools, which suggests the company has been actively maturing the implementation rather than treating it as a research curiosity.

The broader significance is that NTC looks like one of the more practical and less controversial uses of AI in graphics. It is not trying to replace art creation, invent assets, or change the authored look of a game. Instead, it is trying to store the same textures more efficiently and reconstruct them more intelligently at runtime. In an industry where texture quality, memory pressure, and install size are all growing at once, that makes NTC a much easier pitch than many of the more headline-driven AI features attached to gaming.

There is also an interesting forward-looking angle here. The current SDK documentation says NTC can run with fallback implementations on any platform supporting at least Direct3D 12 with Shader Model 6, though newer architectures with better inference acceleration see much stronger performance. That does not guarantee broad console use, but it does show why developers and hardware watchers are paying attention to where this could go next, especially if future systems need to balance asset quality, memory limits, and storage costs more aggressively.

For now, the message from NVIDIA is clear. With Introduction to Neural Rendering, the company is trying to remind developers that Neural Texture Compression is no longer just a research concept. The tools are out, the SDK is in beta through RTX NTC on GitHub, and the potential upside is significant. What happens next depends on whether studios decide the memory savings and texture quality gains are worth integrating into real production pipelines.

Would you rather see developers use Neural Texture Compression to cut VRAM usage for better performance, or use the same memory budget to push texture quality much higher?